Kernels: A Deep Dive

Author(s): Ayo Akinkugbe

Originally published on Towards AI.

Introduction: What are Kernels

A kernel is a smart way to measure similarity between two things — in particular, data points, images, text documents, or more abstract objects. In mathematics, a kernel is simply a function k(x,y) that takes any two objects, x and y, and gives you a number showing how similar (or related) they are.

Unlike other similarity methods, a kernel function k(x,y) can be viewed as an implicit dot product in a higher-dimensional space. While standard dot products work in the original feature space, kernels compute similarity in a transformed space without explicitly creating that transformation. Mathematically: k(x,y) = ⟨φ(x), φ(y)⟩, where φ maps data to a higher-dimensional space. Also distance measures (like Euclidean or the Mahalanobis) quantify dissimilarity — i.e. smaller means more similar. Kernels quantify similarity — i.e. larger means more similar. Kernels must satisfy mathematical properties (like being positive definite) that distance metrics don’t need to satisfy.

Why Use Kernels in ML?

Classifying Non-linear Patterns

When solving real-world problems with machine learning, especially classification tasks, we often run into a major issue where our data isn’t linearly separable. That means we can’t just draw a straight line (or a flat plane) to simply split categories like spam vs. not spam, or fraud vs. safe. A kernel function can be use to transform the data from a two-dimensional space to a three-dimensional or a higher feature space that is easily separable using a linear classifier. This is where Support Vector Machines (SVMs) shine of which a big part of what makes them powerful is the use of kernels.

When solving real-world problems where data isn’t linearly separable.A kernel function can be used to transform the data from a two-dimensional space to a three-dimensional or higher feature space that is easily separable using a linear classifier.

Computational Efficiency in Working in Complex and Huge Feature Spaces

Asides classifying non-linear patterns, you can work as if your data lives in a high- (even infinite-) dimensional space with kernels, yet never have to actually compute or store those high-dimensional data. Instead of explicitly transforming data into potentially millions of dimensions (or infinite dimensions), you compute similarity directly using the original data. For instance, a polynomial kernel of degree 2 implicitly works in a space with O(d²) dimensions, but computes in O(d) time where d is the original dimension. This is called the kernel trick.

Structured Data Comparison

Kernels let you compare objects like images, text, graphs, or proteins, not just simple numbers. Instead of manually creating numerical features from complex objects (text, images, proteins), kernels can directly compare them. Kernels can also capture domain-specific similarities that vector representations might miss. For example, a string kernel can measure text similarity based on shared subsequences without converting to bag-of-words vectors.

Enablement of Powerful Algorithms

Many state-of-the-art algorithms are enabled or supercharged by kernels. Apart from common ML models that leverage the kernel trick (Support vector machines, String kernels for text analysis etc.) the kernel method has also been used to optimize the attention mechanism found in transformers. For instance, Flash attention is an attention algorithm use to reduce the problem of storing the full attention matrix, causing memory bottlenecks with long sequences. It uses tiling and re-computation to calculate attention without storing the complete attention matrix. It leverages GPU memory hierarchy (SRAM/HBM) to compute attention in blocks, reducing memory usage and increasing speed. This is a type of kernel trick.

Also another problem with attention that has leveraged kernels is that existing attention optimizations are rigid and can’t adapt to different hardware or sequence lengths. Flex attention by Meta solves for this by providing a flexible framework that automatically selects optimal attention implementation based on sequence length, hardware, and other factors. It dynamically switches between different algorithms (including Flash Attention) to maximize efficiency for each specific scenario.

Kernels will always be useful. However before we go further, let’s go back to some foundations.

Flash attention in transformers uses tiling and re-computation to calculate attention without storing the complete attention matrix. This is a type of kernel trick.

Duality: A Different Way to Solve the Same Problem

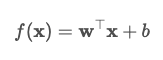

In standard linear models like linear regression, we solve for the weights w directly by minimizing a cost function. This is referred to as the primal problem. In the primal, the model is written directly in terms of its weights (for linear models, these are the coefficients or parameters).

- w are the weights (parameters learned from data)

- x is your input data (a test/example point)

- b is a bias or intercept

- The model is written directly as a dot product of weights and features

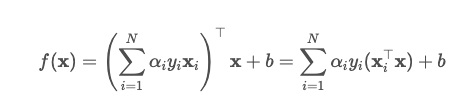

However, there’s another perspective: instead of solving for weights directly, we can express the problem in terms of how each training point contributes to the solution. This is the dual problem. In the dual, the model is written as a weighted sum of the input data points.

so the prediction is

- Each αi is a dual variable (the weight for point i)

- y_i is the label or class for point i

- x_i is the i-th training data point

- The input is written as a sum over all the data points

This is useful because in the dual form, data only appears as dot products between points. This means we can build a more powerful model by focusing on how similar points are to one another, rather than their raw feature values. The dual formulation enables the use of kernels.

In practice, the Lagrangian duality is a technique that allows one for the reformulation of the constrained optimization problem into an equivalent problem, the dual problem, which can sometimes be easier to solve. The general strategy is as follow:

- Identify constraints: Write the objective and convert all constraints into the form where they are less or equal to zero.

- Form the Lagrangian: Combine the objective function with the constraints using Lagrange multipliers.

- Construct the dual function: Minimize the Lagrangian with respect to the original variables. This gives us a lower bound on the original problem.

- Maximize the dual function: With respect to the multipliers, under conditions (e.g., they must be non-negative for inequality constraints).

- Solve the dual problem: When strong duality holds (e.g., under convexity or linearity), solving the dual gives us the same solution as the original problem.

The kernel trick in machine learning enables solving non-linear problems (like classification of non-linearly separable data) by implicitly mapping data to higher-dimensional spaces where dot products can be computed efficiently. This technique works in conjunction with dual formulations of optimization problems, where algorithms only need to compute similarities between data points rather than explicit feature transformations.

When duality is strong, solving the dual problem gives us the same solution as the primal problem.

Positive Definiteness: Making Sure the Math Works

In the dual form, the learning algorithm relies heavily on matrices made up of dot products (referred to as the kernel matrix). For optimization to work reliably (i.e. to ensure we find a true minimum), this kernel matrix needs to be positive definite. A function k(x,y) is a valid kernel if and only if it is positive definite. If the kernel matrix is not positive definite, we can’t guarantee that our optimization will work as expected.

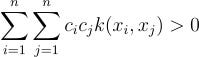

A kernel k(x,y) is positive definite if for any finite set of points {x₁,…,xₙ} and any non-zero vector c, the following holds:

Mercer’s Theorem: The Green Light for Kernels

This is where Mercer’s Theorem comes in. It says: if your kernel function results in a positive definite kernel matrix, then there exists some feature space (possibly infinite-dimensional!) where your data is being compared through dot products.

This simply means if the kernel function passes positive definiteness, we’re guaranteed that there’s a mathematical space where our model can work like magic — without us ever having to see or compute that space.

How Do Kernels Work? — The Kernel Trick

The kernel trick works precisely because of positive definiteness. When a kernel k(x,y) is positive definite, Mercer’s Theorem guarantees it equals an inner product ⟨φ(x), φ(y)⟩ in some feature space. Therefore the Kernel trick is an excellent shortcut that works!

Normally, if we wanted to map our data to a higher-dimensional space (say, turning 2D circles into flat 3D planes), we’d have to compute those new features explicitly — and that can be expensive or even impossible. But the kernel trick lets us do this implicitly. Instead of computing the features, we compute the dot product as if we had already mapped the data. This is much faster and lets us work in high (or infinite) dimensions without the computational cost.

Common Kernels Functions

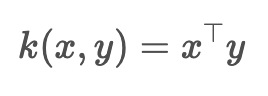

Linear Kernel

This is an ordinary dot product that doesn’t lift the data. It just measures linear similarity between points

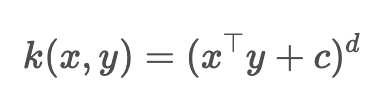

Polynomial Kernel

This kernel lets you work with all polynomial features up to degree d

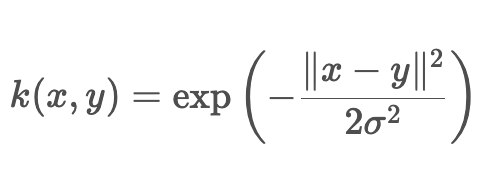

Gaussian (RBF) Kernel

The RBF (Radial Basis Function) gives high similarity to close points, low similarity to far points and can bend infinitely

String/Sequence Kernels

Instead of explicitly mapping strings to feature vectors, string kernels implicitly define a feature space and compute similarity within that space.

Graph Kernels

Leveraged in network analysis, a graph kernel is a kernel function that computes an inner product on graphs. Intuitively it is a function measuring the similarity of pairs of graphs.

Conclusion — Why This Matters

Many machine learning models like logistic regression or basic neural networks rely on explicit features and can struggle when the decision boundary isn’t linear or the features are complex. You often have to engineer features by hand or accept poor performance when your features can’t capture the complexity. SVMs are a common deviation where the model can leverage kernels to handle this problem effortlessly, even in very high-dimensional spaces. With kernels, SVMs let you “bend” the space until your classes are easily separable. The next post focuses in detail on how SVMs work and how they leverage kernels.

For more on the Mathematics for AI & ML 🧮 check out this list:

Maths for AI/ML

View list4 stories

Join thousands of data leaders on the AI newsletter. Join over 80,000 subscribers and keep up to date with the latest developments in AI. From research to projects and ideas. If you are building an AI startup, an AI-related product, or a service, we invite you to consider becoming a sponsor.

Published via Towards AI

Towards AI Academy

We Build Enterprise-Grade AI. We'll Teach You to Master It Too.

15 engineers. 100,000+ students. Towards AI Academy teaches what actually survives production.

Start free — no commitment:

→ 6-Day Agentic AI Engineering Email Guide — one practical lesson per day

→ Agents Architecture Cheatsheet — 3 years of architecture decisions in 6 pages

Our courses:

→ AI Engineering Certification — 90+ lessons from project selection to deployed product. The most comprehensive practical LLM course out there.

→ Agent Engineering Course — Hands on with production agent architectures, memory, routing, and eval frameworks — built from real enterprise engagements.

→ AI for Work — Understand, evaluate, and apply AI for complex work tasks.

Note: Article content contains the views of the contributing authors and not Towards AI.