This AI newsletter is all you need #4

Last Updated on July 25, 2022 by Editorial Team

What happened this week in AI

The International Conference on Machine Learning (ICML) 2022 is happening this week. Stay tuned for a couple of articles from us covering the most exciting news and research shared there, including “Make-A-Scene”, which we cover in this week’s “papers of the week” section.

ICML is a big conference in the field, and many breakthroughs are published there. We will share the top paper of the conference. Unfortunately, we do not have anyone from the team there in person. Let us know if you’d like us to send someone from the Towards AI team to these events to publish a recap and a “what it’s like” article sharing our in-person experience with those of you who might be interested in attending.

Hottest News

- PLEX: a framework to improve the reliability of deep learning systems

Google introduced PLEX, a framework for reliable deep learning as a new perspective about a model’s abilities; this includes a number of concrete tasks and datasets for stress-testing model reliability. They also introduce Plex, a set of pre-trained large model extensions that can be applied to many different architectures.

- Tesla’s AI director Andrej Karpathy leaves the company after 5 years of autonomous vehicles research

Since 2017, Autopilot has progressed from keeping Teslas in lanes to navigating city streets, he noted. I am excited to see who’s gonna be the next AI director at Tesla, and even more excited to see what Andrej will work on next, as he mentioned he wanted to “spend more time revisiting [his] long-term passions around technical work in AI, open source and education.”

- “I posted my project on Reddit and received 9 job offers”!

A Reddit user shared his project and received 9 official job offers. The moral of this story is? Work on personal projects and share them online! That’s the best way to learn and improve your portfolio!

Most interesting papers of the week

- MegaPortraits: One-shot Megapixel Neural Head Avatars

They bring megapixel resolution to animated face generation (neural head avatars), focusing on the “cross-driving synthesis” task, where the appearance of the driving image is substantially different from the animated source image.

- ProDiff: Progressive Fast Diffusion Model for High-Quality Text-to-Speech

Yes, a diffusion model for high-quality text-to-speech! ProDiff parameterizes the denoising model by directly predicting clean data, which avoids distinct quality degradation when accelerating sampling. It requires only 2 iterations to synthesize high-fidelity mel-spectrograms.

- Make-A-Scene: Scene-Based Text-to-Image Generation with Human Priors

“Make-A-Scene”: a fantastic blend between text and sketch-conditioned image generation. Learn more in our article!

Enjoy these papers and news summaries? Get a daily recap in your inbox!

This issue is brought to you thanks to our fantastic community:

Join us on Discord! Learn AI Together and Towards AI have recently partnered to build a community that is engaging, inclusive, and mutually beneficial. The community will also serve as a platform for sharing AI skills, knowledge, and developments, enabling students, engineers, entrepreneurs, academics, and others to work collaboratively to make AI more accessible to everyone.

Interested in becoming a Towards AI sponsor and being featured in this newsletter? Find out more information here or contact sponsors@towardsai.net!

The Learn AI Together Community section!

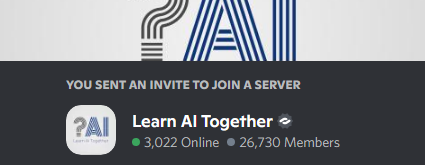

Meme of the week!

The Iron Law of AI. Meme shared by RobKnight#4276. Join the conversation and share your memes with us!

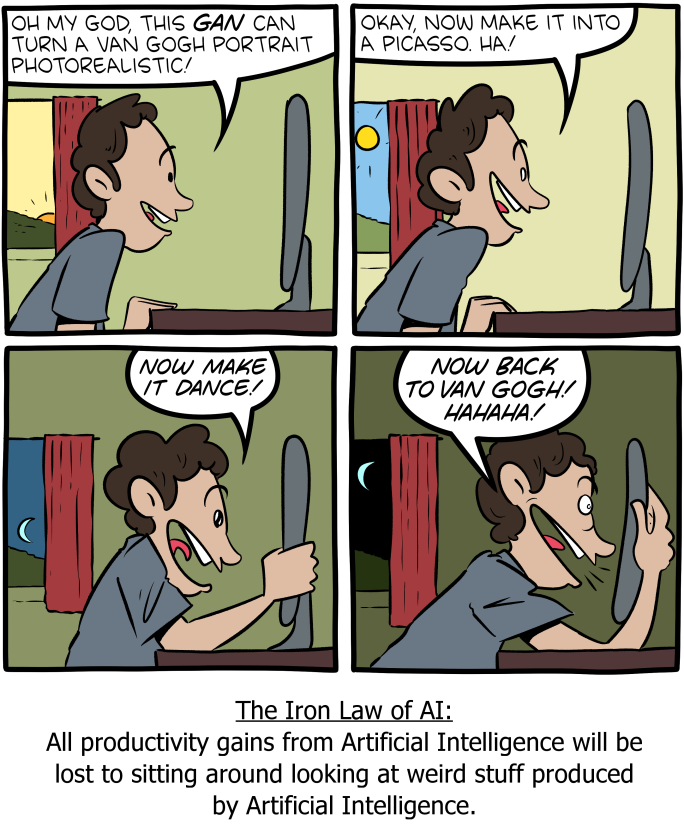

Featured Community post from the Discord

We love to see you share your events in our community and help spread the word! If you are in Vienna this week, check it out. It looks super interesting!

Create compelling Disco Diffusion artworks in one line.

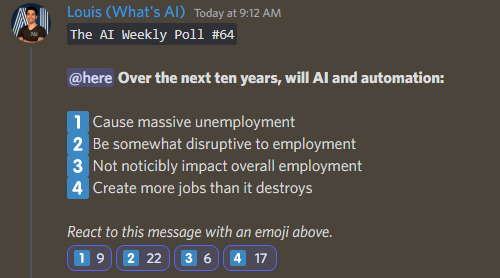

AI poll of the week!

Let us know what you think: join the discussion on Discord!

TAI Curated section

Article of the week

The significance of privacy in deep learning applications is discussed in this article. The PySyft open-source framework tackles the difficulties of moving toward more distributed architectures, which in turn guarantees effective privacy protections in deep learning models. The author does a fantastic job of explaining PySyft and raising privacy concerns in deep learning applications.

This week we published 28 new articles and welcomed 5 new writers to Towards AI. If you are interested in publishing at Towards AI, please sign up here and we will publish your blog to our network if it meets our editorial policies and standards.

Lauren’s Ethical Take on LaMDA’s potential for sentience

I may have missed the peak of the conversation around the news of Google’s LaMDA and engineer Blake Lemoine’s assertion of its sentience, but there is still much to be discussed!

One of my gut reactions to the news was that the need for a sentience Turing test has skyrocketed. I was introduced to AI ethics primarily through researching forms of a moral Turing Test with my philosophy advisor a few years ago, and I surveyed a good chunk of the ethical work on what we need to do to adapt the Turing Test to determine moral agency in AI. I was reminded of a quote from Karsten Weber’s 2013 paper titled “What is it like to encounter an autonomous artificial agent?”:

“…a successful interaction of human beings and autonomous artificial agents depends more on which characteristics human beings ascribe to the agent than on whether the agent really has those characteristics.”

By this measure, LaMDA created a highly successful interaction with a human, given that it was deemed sentient. This reminds us that AI is almost inherently anthropomorphic, both because of the biomimicry of neural networks and because our standard for intelligence is almost always human. Judging intelligence by our ability to be fooled by a machine (i.e., the original Turing Test) is not a model that will work for determining sentience. There is much work to be done to create models that help fill this need.

Going forward in this exciting discussion, remember that the ascription of sentience to AI is not something that we can just dismiss anymore, whether it’s correct or not. These conversations can be unsettling, but a bit of compassion, patience and due diligence will take us far.

Featured Jobs this week

Senior Machine Learning Scientist @ Atomwise (San Francisco, USA)

Senior ML Engineer — Algolia AI @ Algolia (Hybrid remote)

Senior ML Engineer — Semantic Search @ Algolia (Hybrid remote)

Machine Learning Engineer @ Gather AI (Remote — India)

Deep Learning Engineer (R&D — Engineering) @ Weights & Biases (Remote)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net or post the opportunity in our #hiring channel on Discord!

This AI newsletter is all you need #4 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.


Note: Article content contains the views of the contributing authors and not Towards AI.