From 1T Tokens to Total Cognition: The Numbers Behind the New AI Brain…

Author(s): R. Thompson (PhD) Originally published on Towards AI. “The greatest advancements in AI will not come from models that specialize in single modalities, but from those that can seamlessly integrate our multi-sensory world.” — Andrej Karpathy Since the introduction of the …

Inside a 7-Layer Cognitive Stack: How Claude + MCP Deliver Real-Time Epistemic Intelligence…

Author(s): R. Thompson (PhD) Originally published on Towards AI. “Context is not just a variable. It’s the difference between output and understanding.” The evolution of AI agents has moved beyond prediction and instruction-following into a domain where interpretability, confidence, and memory are …

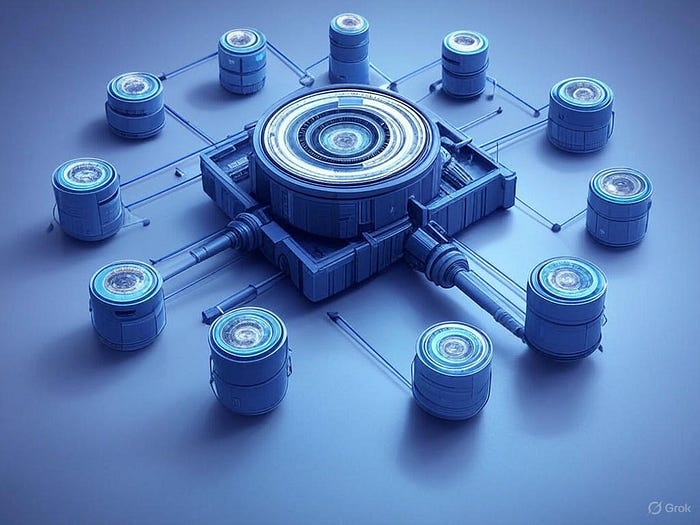

The Secret Protocol Powering GenAI Efficiency?…MCP’s Impact Might Be Bigger Than the Model Itself

Author(s): R. Thompson (PhD) Originally published on Towards AI. “If your AI doesn’t interact with tools, it’s not acting — it’s just predicting.” Powerful large language models like GPT-4, Claude, and DeepSeek R1 can generate accurate, human-like responses. But when it comes …

Misinformation vs. Machine: OpenAI, Perplexity & Grok3 Tested Across 5 Real-World Truth Tasks

Author(s): R. Thompson (PhD) Originally published on Towards AI. In a digital world polluted with falsehoods, three AI titans — OpenAI, Perplexity, and Grok3 — are leading a new kind of war: a war for truth. Their mission? Help humans not just …

DeepSeek R1 on a Budget? Our XGBoost Model Predicts 84% Accuracy and 30–40% RAM Savings via Quantization

Author(s): R. Thompson (PhD) Originally published on Towards AI. “Deploying AI locally is no longer a constraint — it’s a prediction challenge.” Much of today’s generative AI narrative is fixated on benchmarks, token counts, and transformer tweaks. …

How I Deployed DeepSeek R1 Locally with Just 8GB RAM: Benchmarks, Code & RAM-Saving Tricks Included…

Author(s): R. Thompson (PhD) Originally published on Towards AI. “You don’t need a supercomputer to harness superintelligence. You just need the right strategy.” As powerful as language models like DeepSeek R1 are, one question keeps many developers up at night: Can I …

Graphite’s Predictive Edge: How Event-Based AI Slashed Escalations by 41% in Legal Ops

Author(s): R. Thompson (PhD) Originally published on Towards AI. “Event-based systems don’t just react — they forecast. The next evolution of LLMs is predictive, not just generative.” The illustration above presents the comprehensive and layered architecture of the Graphite Framework integrated with …

LangChain vs. CrewAI: Why 5.76x Speed Can’t Beat 92% Accuracy Without This Secret Ingredient

Author(s): R. Thompson (PhD) Originally published on Towards AI. AI agents are evolving beyond simple prompt-response models. They are becoming dynamic systems capable of planning, reflecting, delegating tasks, and interacting with external environments. These agents can retrieve real-time data, interact with APIs, …

Mixtral 8x7B vs LLaMA 2: Why Sparse AI Models Outperform Dense Giants in Real-World Wealth Decisions

Author(s): R. Thompson (PhD) Originally published on Towards AI. Imagine asking a question like, “What’s the tax hit if I sell Tesla stock held for 18 months?” and getting an answer that blends IRS logic, stock market trends, and personalized advice in …

Are We Still Coding… or Just Commanding? The Rise of Autonomous DevOps with ManusAI

Author(s): R. Thompson (PhD) Originally published on Towards AI. The AI landscape has undergone a seismic shift. We’ve moved beyond conversational interfaces to truly autonomous systems capable of executing complex tasks without continuous human guidance. ManusAI, developed by Monica.im, stands at the …

The Predictive Core: Designing Memory-Augmented Architectures for Autonomous AI Agents

Author(s): R. Thompson (PhD) Originally published on Towards AI. The prevailing paradigm in generative AI continues to hinge on stateless transformers. Despite advances in token context length and parameter scale, current architectures overwhelmingly depend on prompt-response cycles, lacking sustained internal representations of …

Fine-Tuning vs Distillation vs Transfer Learning: The $2.3M Deployment Cost Dilemma Every AI Team Must Solve…

Author(s): R. Thompson (PhD) Originally published on Towards AI. In the age of Large Language Models (LLMs), terms like fine-tuning, distillation, and transfer learning dominate technical discussions across AI labs and developer forums alike. But despite their popularity, there’s often confusion around …

From 2TB to 64GB: How Predictive Modeling Transformed Vector Storage in MongoDB + Voyage AI

Author(s): R. Thompson (PhD) Originally published on Towards AI. “Scalability isn’t magic — it’s a measurable, predictable science.” Vector databases are often celebrated for unlocking unprecedented capabilities in semantic search, recommendation systems, and retrieval-augmented generation (RAG) applications. Yet beneath the surface, scaling …

The Invisible Backbone of AI: How Real-Time Feedback Loops Quietly Reshape Every Model You Deploy

Author(s): R. Thompson (PhD) Originally published on Towards AI. Traditional software engineering once ended when the code shipped. Today, it begins there. Modern AI-augmented development ecosystems extend beyond coding into real-time prediction serving, continuous monitoring, and adaptive optimization. Predictive analytics no longer …

Before You Mutate: Why the Smartest Genetic Algorithms Will Predict Their Own Success

Author(s): R. Thompson (PhD) Originally published on Towards AI. “The future isn’t random. 🧬 It’s modeled.” Genetic Algorithms (GAs) mirror natural evolution to solve complex optimization puzzles by simulating selection, crossover, and mutation. Yet in real-world deployments, success remains highly unpredictable. Sometimes …