Microsoft Phi-2: Tiny Mighty Open Source Model with Verbal Diarrhea

Last Updated on January 10, 2024 by Editorial Team

Author(s): Dr. Mandar Karhade, MD. PhD.

Originally published on Towards AI.

A new lightweight model for developing prototypes

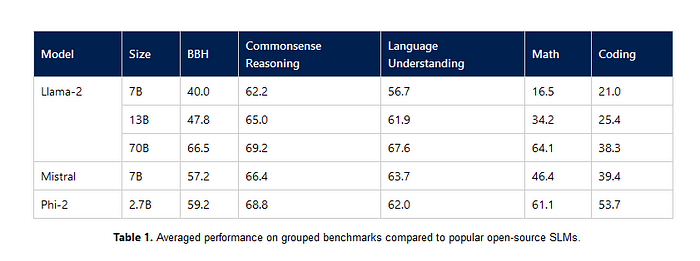

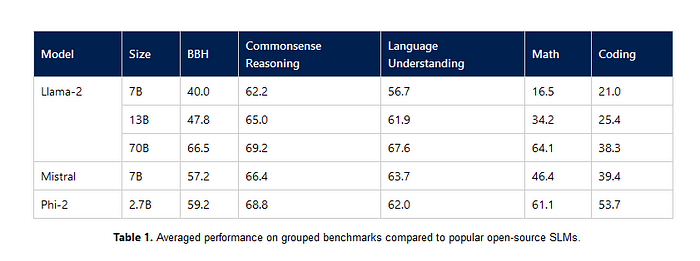

The Phi-2 model, developed by Microsoft, is a 2.7 billion-parameter language model that has recently gained attention in natural language processing and coding. It was trained on 1.4 trillion tokens drawn from a mixture of synthetic and web data, and it has demonstrated impressive benchmark performance: it matches or outperforms much larger models such as Llama-2 (7B, 13B, 70B) on several benchmarks, and it also outperforms Google’s Gemini Nano 1 (1.8B) and Nano 2 (3.25B).
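To make "lightweight" concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need for the parameter counts quoted above. It is an illustration, not a measurement: the helper names (`weight_memory_gb`, `size_ratio`) are hypothetical, 16-bit weights (2 bytes per parameter) are an assumed default, and activations, the KV cache, and framework overhead are ignored.

```python
# Rough weight-memory estimate: weights only, ignoring activations and KV cache.
# Bytes per parameter for common numeric formats (assumption: dense storage).
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

# Parameter counts in billions, as quoted in the article.
MODELS = {
    "Phi-2": 2.7,
    "Gemini Nano 1": 1.8,
    "Gemini Nano 2": 3.25,
    "Llama-2 7B": 7.0,
    "Llama-2 13B": 13.0,
    "Llama-2 70B": 70.0,
}


def weight_memory_gb(params_billion: float, dtype: str = "fp16") -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    # (params_billion * 1e9 params) * bytes / 1e9 bytes-per-GB simplifies to this.
    return params_billion * BYTES_PER_PARAM[dtype]


def size_ratio(model: str, baseline: str = "Phi-2") -> float:
    """How many times more parameters `model` has than `baseline`."""
    return MODELS[model] / MODELS[baseline]


if __name__ == "__main__":
    for name, params in MODELS.items():
        print(
            f"{name:>14}: {weight_memory_gb(params):6.1f} GB in fp16, "
            f"{size_ratio(name):5.1f}x the size of Phi-2"
        )
```

Under these assumptions, Phi-2's weights come to roughly 5.4 GB in 16-bit precision, against about 140 GB for a 70B model, which is why it is a practical choice for prototyping on a single consumer GPU.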

https://twitter.com/sebastienbubeck/status/1743519400626643359?t=rVJesDlTox1vuv_SNtuIvQ

The Phi-2 model is primarily designed to understand standard English. It has some limitations, including potential societal biases and a limited scope for code generation, since most of the code in its training data is Python. Despite these limitations, the model has shown promising results in various tasks and has attracted the attention of researchers and developers alike.

Let's take a look at this compact model’s benchmarks. Phi-2 is consistently comparable to, or better than, similar lightweight models. It holds up well even against the 13B and 70B versions of LLaMA-2, another popular choice for commercial use by entrepreneurs.

Average performance compared to Mistral and LLaMA-2

Phi-2 vs Gemini Nano 2: Free and better

When compared with Gemini Nano 1 and 2, it certainly performs better… Read the full blog for free on Medium.

Note: Article content contains the views of the contributing authors and not Towards AI.