AI Copyright Controversy: Who Owns Content Created by Artificial Intelligence?

Author(s): Rohan Mistry Originally published on Towards AI. Fair Use or Foul Play? What happens when AI learns from your artwork, writing, or photography — then creates competing content without paying you? This isn’t a future problem. It’s happening right now — …

Pipelex: Building Reliable AI Workflows with Business Logic, Not API Calls

Author(s): Gowtham Boyina Originally published on Towards AI. AI Workflows That Agents Build & Run The rise of large language models has unlocked tremendous potential for AI-powered applications. Yet, many developers find themselves trapped in a cycle of prompt engineering, wrestling with …

The Generative AI-Powered CX Revolution: Orchestrating Hyper-Personalized Customer Journeys with Low-Code Automation

Author(s): Intelligent Hustle Originally published on Towards AI. The landscape of customer experience (CX) is undergoing a monumental shift. What was once considered “good service” is now merely the baseline. Today’s customers don’t just expect personalized interactions; they …

OpenAI’s $1 Trillion Gamble: Genius Plan or a House of Cards?

Author(s): Murat Girgin Originally published on Towards AI. Is it a game of thrones or the biggest ecosystem of the future? Behind the popular tools is a high-stakes gamble built on staggering financial commitments, bizarre partnership structures, and a “growth at all …

The Tools That Automate 90% of Your Work While You Get a Good Night’s Sleep

Author(s): Shreyansh Jain Originally published on Towards AI. A practical breakdown of how deep agents like Gemini, ChatGPT, and Claude plan, read, and research for you — even overnight. To understand why tools like Gemini Deep Research feel so powerful, we need …

TAI #178: Kimi K2 Thinking Steals the Open-Source Crown With a New Agentic Contender

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI by Louie The AI playing field was reshaped yet again this week with the release of Kimi K2 Thinking from Moonshot AI. This release feels like …

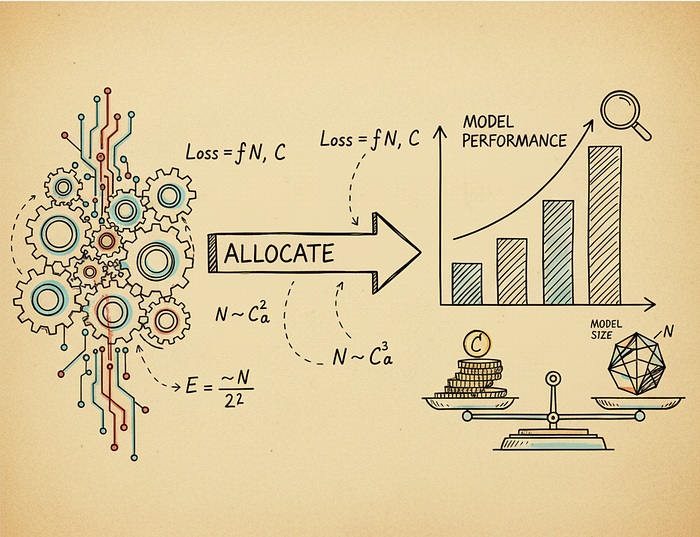

Scaling Laws: How to Allocate Compute for Training Language Models

Author(s): M Originally published on Towards AI. From Chinchilla’s 20:1 rule to SmolLM3’s 3,700:1 ratio: how inference economics rewrote the training playbook Training a language model is expensive. Really expensive. A single training run for a 70 billion parameter model can cost …

Why Every Developer Should Learn Prompt Engineering This Year

Author(s): TCE Tech Jankari Originally published on Towards AI. If there is one skill that separates fast-moving developers from the rest in 2025, it is not a new framework, a backend library, or a cloud certification. …

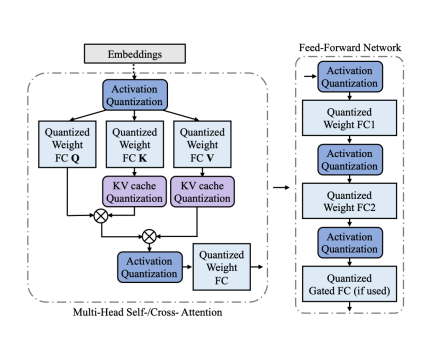

Quantization: How to Accelerate Big AI Models

Author(s): Burak Degirmencioglu Originally published on Towards AI. In the world of deep learning, we are in an arms race for bigger, more powerful models. While this has led to incredible capabilities, it has also created a significant problem: these models are …

How I Fine-Tuned a 7B AI Model on My Laptop (and What I Learned)

Author(s): Manash Pratim Originally published on Towards AI. Most people think training large language models requires data centers, huge GPUs, and complex hardware setups. A year ago, that …

Fine-Tuning a Small LLM with QLoRA: A Complete Practical Guide (Even on a Single GPU)

Author(s): Manash Pratim Originally published on Towards AI. Large Language Models are amazing, but what if you could turn one into your own domain expert? Large Language Models (LLMs) like GPT-4 or Llama 3 are incredible generalists. They can write essays, answer …

How to Easily Fine-Tune the Donut Model for Receipt Information Extraction

Author(s): Eivind Kjosbakken Originally published on Towards AI. The Donut model in Python is a model to extract text from a given image. This can be useful in several scenarios, for …

The Proof is in the Preference: Why DPO is the New RLHF

Author(s): DrSwarnenduAI Originally published on Towards AI. Stop debugging PPO. Direct Preference Optimization solved the alignment puzzle with a single, stable loss function. …

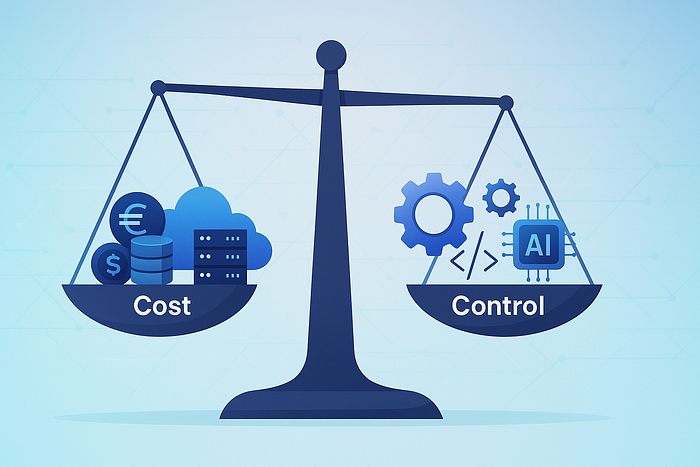

Choosing the right GenAI customization strategy: Balancing cost, control, and performance

Author(s): Laura Verghote Originally published on Towards AI. A practical framework to choose between RAG, fine-tuning, continued pre-training, and training from scratch — through the lens of balancing cost, control, performance and compliance. As Generative AI systems move from prototypes to production, …

From JSON to TOON: Evolving Serialization for LLMs

Author(s): Kushal Banda Originally published on Towards AI. TOON (Token-Oriented Object Notation). When you’re scaling AI applications, token efficiency isn’t just a buzzword; it’s your bottom line. Every token wasted is money left on the table and latency you didn’t ask …