Quantization: How to Accelerate Big AI Models

Author(s): Burak Degirmencioglu

Originally published on Towards AI.

In the world of deep learning, we are in an arms race for bigger, more powerful models. While this has led to incredible capabilities, it has also created a significant problem: these models are enormous, expensive to run, and power-hungry.

This is where quantization comes in. It is a powerful set of techniques designed to shrink these massive models, making them faster, more efficient, and accessible enough to run on everything from massive data centers to your local smartphone.

This guide will explore what quantization is, why it’s essential, the core methods like Post-Training Quantization (PTQ) and Quantization-Aware Training (QAT), and how you can start using them.

What Exactly is Quantization?

At its core, quantization is the process of converting a model's numbers, chiefly its weights and often its activations, from a high-precision data type like 32-bit floating point (FP32) to a lower-precision data type such as 8-bit integer (INT8). This conversion is a trade-off: you sacrifice a small amount of numerical precision in exchange for large gains in efficiency.

Why Should Deep Learning Practitioners Care?

The primary goal is to reduce the model's computational and memory costs without significantly hurting its predictive accuracy. Think of it as saving a high-resolution photo (FP32) as a well-compressed JPEG (INT8). Some fine detail is technically lost, but the image looks nearly identical and takes a fraction of the space.

Now that we know what quantization is, let’s explore why it has become an indispensable tool for deploying modern AI.

What Are the Real-World Benefits of Quantization?

The advantages of quantization are not just theoretical; they translate directly to practical, real-world benefits.

Smaller Memory Footprint: An INT8 model is roughly 4x smaller than its FP32 counterpart, because each value takes 1 byte instead of 4.

Faster Inference: Integer arithmetic is cheaper than floating-point math on most CPUs and modern GPUs. Smaller values also mean less data to move through memory, so models run faster.

Lower Power Consumption: Cheaper arithmetic and less memory traffic reduce energy use, which is critical for both large-scale data centers and battery-operated devices.

Edge Deployment: Together, these efficiencies let models that once required a powerful server, including some large language models, run directly on a smartphone or IoT device. Data can then be processed on the fly and kept private.
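To make the "roughly 4x" figure concrete, here is a quick back-of-the-envelope calculation for a hypothetical 7-billion-parameter model. It counts weight storage only. It ignores activations, runtime buffers, and the small overhead of storing scales and zero-points, so treat the numbers as rough estimates.

```python
# Bytes needed to store one parameter in each data type.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

num_params = 7_000_000_000  # hypothetical 7B-parameter model
for dtype in ("fp32", "fp16", "int8", "int4"):
    print(f"{dtype}: {weight_memory_gb(num_params, dtype):.1f} GB")
```

This is the difference between needing a large server GPU and fitting in the memory of a high-end laptop or phone.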

These benefits are compelling, but achieving them requires understanding the fundamental building blocks of the quantization process.

How Does Quantization Actually Map Billions of Numbers?

To convert a 32-bit floating-point number to an 8-bit integer, we need a mathematical mapping.

What Is the Affine Quantization Scheme?

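This is most commonly done using the Affine Quantization Scheme. It is defined by the dequantization formula $x = S \times (x_q - Z)$, where $x_q$ is the new INT8 value and $x$ is the FP32 value it represents.

Going the other way, quantization rounds and clamps: $x_q = \mathrm{clamp}(\mathrm{round}(x / S) + Z,\ q_{\min},\ q_{\max})$. Here $[q_{\min}, q_{\max}]$ is the integer range, such as $[-128, 127]$ for INT8. Because of the rounding, dequantizing recovers the original value only approximately.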

What is the Scale Factor (S)?

The Scale (S) is a positive floating-point number that defines the “step size” of the mapping. It answers the question: how much change in the original FP32 world corresponds to a single integer step in the INT8 world? For example, if your FP32 range [-10.0, 10.0] is mapped to the INT8 range [-128, 127], the scale determines the “value” of each of those 255 integer steps. It’s the ratio between the original floating-point range and the target integer range.
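Using the common min/max formula for the scale (one of several ways to choose it), the [-10.0, 10.0] example works out as follows:

```latex
S = \frac{x_{\max} - x_{\min}}{q_{\max} - q_{\min}}
  = \frac{10.0 - (-10.0)}{127 - (-128)}
  = \frac{20}{255} \approx 0.0784
```

In other words, each integer step is worth about 0.078 in the original FP32 units.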

What is the Zero-Point (Z)?

The Zero-Point (Z) is the integer that the real value 0.0 maps to in the quantized space. It is crucial for "asymmetric" quantization, where the FP32 range (e.g., -1.0 to +5.0) is not centered on zero. There, the zero-point acts as an offset that shifts the integer grid so that 0.0 is represented exactly, which matters because zeros are common (for example, from zero-padding).

In symmetric quantization, often used for weights, the range is forced to be centered on zero (e.g., -10.0 to +10.0) and Z is fixed at 0. Skewed ranges, such as the non-negative outputs of a ReLU, need a non-zero zero-point for the mapping to use the integer range efficiently.
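Putting the Scale and Zero-Point together, here is a minimal pure-Python sketch of asymmetric INT8 quantization for the -1.0 to +5.0 example. It assumes the common min/max way of choosing S and Z. The helper names (affine_params, quantize, dequantize) are illustrative, not any library's API. Note that Python's round() rounds halves to the nearest even integer, which is one of several rounding conventions in use.

```python
def affine_params(x_min, x_max, q_min=-128, q_max=127):
    """Compute scale S and zero-point Z mapping [x_min, x_max] onto [q_min, q_max]."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    if x_max == x_min:  # degenerate all-zero range: any positive scale works
        return 1.0, 0
    scale = (x_max - x_min) / (q_max - q_min)
    # Z is the integer that 0.0 maps to; it stays within [q_min, q_max]
    # because the widened range contains 0.0.
    zero_point = round(q_min - x_min / scale)
    return scale, zero_point

def quantize(x, scale, zero_point, q_min=-128, q_max=127):
    """x_q = clamp(round(x / S) + Z, q_min, q_max)"""
    return max(q_min, min(q_max, round(x / scale) + zero_point))

def dequantize(x_q, scale, zero_point):
    """x ~= S * (x_q - Z)"""
    return scale * (x_q - zero_point)

# Asymmetric example from the text: an FP32 range of -1.0 to +5.0.
S, Z = affine_params(-1.0, 5.0)
print(f"S = {S:.5f}, Z = {Z}")
for x in [-1.0, 0.0, 0.3, 2.71, 5.0]:
    x_q = quantize(x, S, Z)
    print(f"{x:6.2f} -> {x_q:5d} -> {dequantize(x_q, S, Z):.5f}")
```

The range is widened to include 0.0 before S is computed. As a result, 0.0 always lands exactly on the zero-point and dequantizes back to exactly 0.0. Every other value in the range is reconstructed to within half a step (S/2).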

Granularity: Per-Tensor vs. Per-Channel Quantization

This affine scheme can be applied at different levels of granularity. A Per-Tensor approach calculates a single Scale and Zero-Point for an entire weight matrix. This is simple, but it can be inaccurate when different parts of the matrix have very different value ranges. The widest range sets the scale, leaving rows or channels with small values only a few integer levels.

A more precise method is Per-Channel (or per-row/column) quantization. It calculates a separate Scale and Zero-Point for each output channel of a convolutional layer or each row of a linear layer's weight matrix. Because it adapts to layers whose channels have very different value ranges, it preserves model accuracy much better, and it is widely used for weights, including in LLMs.
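To see why per-channel scales help, here is a small pure-Python experiment on a toy two-row weight matrix whose rows have very different ranges. For brevity it uses symmetric quantization (zero-point fixed at 0, integer range [-127, 127]), a simplification of the affine scheme above. The matrix values are made up for illustration.

```python
def symmetric_scale(values, q_max=127):
    """Symmetric INT8 scale: the largest magnitude maps to q_max (zero-point is 0)."""
    max_abs = max(abs(v) for v in values)
    return max_abs / q_max if max_abs > 0 else 1.0

def fake_quant(v, scale, q_max=127):
    """Quantize v onto the symmetric INT8 grid, then dequantize it back to float."""
    q = max(-q_max, min(q_max, round(v / scale)))
    return q * scale

def mean_abs_error(matrix, row_scales):
    """Average |w - fake_quant(w)| over all weights, using one scale per row."""
    errors = [abs(w - fake_quant(w, s)) for row, s in zip(matrix, row_scales) for w in row]
    return sum(errors) / len(errors)

weights = [
    [0.010, -0.020, 0.015, -0.005],  # small-magnitude row
    [2.500, -3.000, 1.750, -0.250],  # large-magnitude row
]

# Per-tensor: a single scale for the whole matrix, set by its largest value (3.0).
tensor_scale = symmetric_scale([w for row in weights for w in row])
per_tensor_err = mean_abs_error(weights, [tensor_scale] * len(weights))

# Per-channel (here: per-row): each row gets a scale fitted to its own range.
per_channel_err = mean_abs_error(weights, [symmetric_scale(row) for row in weights])

print(f"per-tensor mean abs error:  {per_tensor_err:.6f}")
print(f"per-channel mean abs error: {per_channel_err:.6f}")
```

The large-magnitude row is quantized identically in both cases. The difference comes from the small row: under the shared per-tensor scale, its values collapse onto a handful of integer levels around zero, and two of them round to exactly 0.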

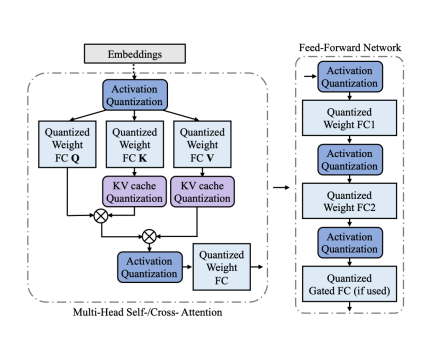

Handling Activations: The Calibration Step

While weights are static, a model's activations (the outputs of layers) change with every input. To quantize them ahead of time, we must perform a Calibration step. A small, representative dataset (e.g., 100–200 sample inputs) is fed through the FP32 model while the min/max range of each layer's activations is recorded. These statistics are then used to choose the Scale and Zero-Point for quantizing the activations. This calibration process is the core of Static Post-Training Quantization.
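The calibration step can be sketched in a few lines of plain Python. MinMaxObserver and toy_layer below are hypothetical stand-ins; real frameworks such as PyTorch ship their own observer classes. The random inputs play the role of a small representative dataset.

```python
import random

class MinMaxObserver:
    """Tracks the running min/max of activations seen during calibration (hypothetical helper)."""

    def __init__(self):
        self.min_val = float("inf")
        self.max_val = float("-inf")

    def observe(self, activations):
        self.min_val = min(self.min_val, min(activations))
        self.max_val = max(self.max_val, max(activations))

    def compute_qparams(self, q_min=-128, q_max=127):
        """Turn the recorded range into (scale, zero_point), keeping 0.0 representable."""
        lo, hi = min(self.min_val, 0.0), max(self.max_val, 0.0)
        scale = (hi - lo) / (q_max - q_min) or 1.0  # guard against an all-zero range
        zero_point = round(q_min - lo / scale)
        return scale, zero_point

def toy_layer(inputs):
    """Stand-in for an FP32 layer ending in a ReLU, so outputs are never negative."""
    return [max(0.0, 1.5 * x - 0.2) for x in inputs]

random.seed(0)
# 100 calibration samples, each a small vector of inputs.
calibration_set = [[random.uniform(-1.0, 3.0) for _ in range(8)] for _ in range(100)]

observer = MinMaxObserver()
for sample in calibration_set:
    observer.observe(toy_layer(sample))

scale, zero_point = observer.compute_qparams()
print(f"observed range: [{observer.min_val:.3f}, {observer.max_val:.3f}]")
print(f"scale={scale:.5f}, zero_point={zero_point}")
```

Because the toy layer ends in a ReLU, the observed minimum is 0.0. The zero-point therefore lands at the bottom of the INT8 range (-128), and all 256 levels are spent on non-negative values. Plain min/max tracking is sensitive to outliers, which is why frameworks also offer alternatives such as histogram-based observers.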

With these core concepts in mind, we can explore the two main strategies for applying quantization to your models.

What Is Post-Training Quantization (PTQ) and When Is It the Easy Win?

Post-Training Quantization (PTQ) is the simplest and most common method. As the name suggests, it is applied after a model has already been fully trained. There are two main types.

Dynamic Quantization converts the model weights to INT8 ahead of time but keeps activations in FP32. It quantizes them "on the fly" at inference time, computing their range from each actual input. Because it needs no calibration data, it is the easiest option. It works best when loading weights from memory is the main bottleneck, as in linear-layer-heavy models like LSTMs and Transformers at small batch sizes.

Static Quantization goes further and quantizes both the weights and the activations. It requires the "Calibration" step we just discussed to fix the activation ranges in advance. With both weights and activations in INT8, whole layers can run in integer arithmetic without computing activation ranges at runtime. As a result, static quantization usually gives the largest speedup of the PTQ methods.
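Here is a toy sketch of dynamic quantization for a single linear layer (y = W x), in plain Python. The weights are quantized once when the layer is built, while each incoming activation vector is quantized at call time using its own range. DynamicQuantLinear is a hypothetical class, and symmetric per-row scales are an assumption made for brevity. Python integers stand in for the INT32 accumulators that real kernels use.

```python
def quantize_symmetric(values, q_max=127):
    """Symmetric INT8 quantization of a vector: returns (integer codes, scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / q_max if max_abs > 0 else 1.0
    return [max(-q_max, min(q_max, round(v / scale))) for v in values], scale

class DynamicQuantLinear:
    """Toy y = W x layer with dynamically quantized activations (hypothetical class)."""

    def __init__(self, weight_rows):
        # Weights: quantized ONCE, ahead of time, with one scale per output row.
        self.q_rows = [quantize_symmetric(row) for row in weight_rows]

    def __call__(self, x):
        # Activations: quantized ON THE FLY, using this particular input's range.
        q_x, x_scale = quantize_symmetric(x)
        out = []
        for q_w, w_scale in self.q_rows:
            acc = sum(a * b for a, b in zip(q_w, q_x))  # integer-only dot product
            out.append(acc * w_scale * x_scale)         # rescale the result to float
        return out

W = [[0.5, -1.2, 0.3], [2.0, 0.1, -0.7]]
x = [1.0, 0.25, -2.0]

layer = DynamicQuantLinear(W)
y_fp32 = [sum(w * v for w, v in zip(row, x)) for row in W]
y_int8 = layer(x)
print("fp32:", [round(v, 4) for v in y_fp32])
print("int8:", [round(v, 4) for v in y_int8])
```

Note the design choice here: the activation scale is recomputed on every call. That costs a little runtime work but requires no calibration data.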
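For contrast, a static version of the same toy layer fixes the activation Scale and Zero-Point from calibration data when the layer is built, then reuses them for every input. StaticQuantLinear and the calibration values are hypothetical. A real static pipeline would also requantize the output to INT8 using a calibrated output range, which this sketch skips.

```python
def quantize_symmetric(values, q_max=127):
    """Symmetric INT8 weights: returns (integer codes, scale); zero-point is 0."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / q_max if max_abs > 0 else 1.0
    return [max(-q_max, min(q_max, round(v / scale))) for v in values], scale

def asymmetric_qparams(x_min, x_max, q_min=-128, q_max=127):
    """Asymmetric INT8 (scale, zero_point) for a calibrated activation range."""
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (q_max - q_min) or 1.0  # guard against an all-zero range
    return scale, round(q_min - x_min / scale)

class StaticQuantLinear:
    """Toy y = W x layer with weights AND activations quantized using fixed parameters."""

    def __init__(self, weight_rows, calibration_inputs):
        self.q_rows = [quantize_symmetric(row) for row in weight_rows]
        # Calibration: the activation range is measured once and then frozen.
        flat = [v for sample in calibration_inputs for v in sample]
        self.x_scale, self.x_zp = asymmetric_qparams(min(flat), max(flat))

    def __call__(self, x):
        # No per-input range computation: reuse the calibrated scale and zero-point.
        q_x = [max(-128, min(127, round(v / self.x_scale) + self.x_zp)) for v in x]
        out = []
        for q_w, w_scale in self.q_rows:
            # Subtract the activation zero-point, then accumulate in integers.
            acc = sum(a * (b - self.x_zp) for a, b in zip(q_w, q_x))
            out.append(acc * w_scale * self.x_scale)
        return out

W = [[0.5, -1.2, 0.3], [2.0, 0.1, -0.7]]
# Hypothetical calibration samples, e.g. non-negative post-ReLU inputs.
calibration = [[0.0, 0.4, 1.1], [0.9, 2.0, 0.3], [1.5, 0.2, 1.8]]
layer = StaticQuantLinear(W, calibration)

x = [1.0, 0.25, 1.5]
y_fp32 = [sum(w * v for w, v in zip(row, x)) for row in W]
y_int8 = layer(x)
print("fp32:", [round(v, 4) for v in y_fp32])
print("int8:", [round(v, 4) for v in y_int8])
```

Inputs that fall outside the calibrated range are clamped to its edges, which is why a representative calibration set matters.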

PTQ is fast and simple, but what happens when this ‘easy win’ leads to an unacceptable drop in your model’s performance?

When PTQ Fails, How Does Quantization-Aware Training (QAT) Recover Lost Accuracy?

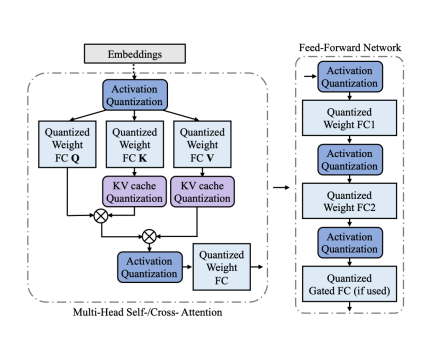

Quantization-Aware Training (QAT) is the solution for when PTQ causes a significant drop in model accuracy. Instead of quantizing after the fact, QAT simulates the effects of quantization during the training (or fine-tuning) process.

It does this by inserting “Fake Quantization” nodes into the model’s computation graph. In the forward pass, these nodes take the FP32 weights and activations, simulate the rounding and clamping effects of converting them to INT8 and back, and then pass the “quantization-aware” FP32 values to the next layer.
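A fake-quantization node boils down to "quantize, then immediately dequantize." The sketch below assumes a symmetric setup (scale 0.05, zero-point 0), and Python floats (which are 64-bit) stand in for FP32. The function name is illustrative.

```python
def fake_quantize(x, scale, zero_point, q_min=-128, q_max=127):
    """Simulate INT8 in floating point: quantize (round + clamp), then dequantize."""
    q = round(x / scale) + zero_point      # rounding effect
    q = max(q_min, min(q_max, q))          # clamping effect
    return scale * (q - zero_point)        # back to float, snapped to the INT8 grid

scale, zero_point = 0.05, 0  # assumed quantization parameters for this example
for w in [0.123, -0.5, 7.3]:
    print(f"{w:6.3f} -> {fake_quantize(w, scale, zero_point):.4f}")
```

Here 0.123 snaps to the nearest grid point. 7.3 lies beyond the largest representable value (127 * 0.05 = 6.35), so it is clamped. The next layer sees these snapped values, which is what lets training "feel" the quantization error.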

In the backward pass, there is a problem: rounding has a gradient of zero almost everywhere, so gradients cannot flow through these nodes directly. QAT therefore uses the Straight-Through Estimator (STE), which passes the gradient through the fake-quantization node as if it were an identity function. Implementations commonly zero the gradient for values that were clamped. This allows the model to learn to adjust its weights to become robust to the errors and noise that quantization will introduce, effectively "learning" to be quantized.
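The STE idea fits in a toy training loop. Below, a single weight w is trained so that fake_quantize(w) * x matches targets generated with a hypothetical true weight of 0.737, on a deliberately coarse grid with scale 0.01. Gradients are computed by hand. The forward pass uses the fake-quantized weight, and the STE passes the gradient straight through to the underlying FP32 weight, zeroing it where the weight would be clamped. The helper names fake_quantize and ste_grad are illustrative.

```python
def fake_quantize(w, scale, q_min=-128, q_max=127):
    """Symmetric fake quantization (zero-point 0): round, clamp, dequantize."""
    return scale * max(q_min, min(q_max, round(w / scale)))

def ste_grad(w, upstream_grad, scale, q_min=-128, q_max=127):
    """Straight-Through Estimator: treat fake_quantize as the identity inside the
    representable range, and pass zero gradient where w would be clamped."""
    in_range = q_min * scale <= w <= q_max * scale
    return upstream_grad if in_range else 0.0

scale = 0.01                                             # deliberately coarse grid
data = [(k / 10, 0.737 * k / 10) for k in range(1, 11)]  # targets from a true weight of 0.737
w, lr = 0.2, 0.1                                         # latent FP32 weight and learning rate

def loss(w):
    """Mean squared error of the fake-quantized model on the toy data."""
    return sum((fake_quantize(w, scale) * x - y) ** 2 for x, y in data) / len(data)

initial_loss = loss(w)
for _ in range(200):
    w_q = fake_quantize(w, scale)                                       # forward pass
    grad_wq = sum(2 * (w_q * x - y) * x for x, y in data) / len(data)  # dLoss/dw_q
    w -= lr * ste_grad(w, grad_wq, scale)                               # STE backward pass
print(f"loss {initial_loss:.5f} -> {loss(w):.5f}; quantized weight = {fake_quantize(w, scale):.2f}")
```

Without the STE, the gradient of round() would be zero and w would never move. With it, the latent FP32 weight keeps updating, and its quantized value ends up on one of the grid points nearest 0.737 (0.73 or 0.74).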

This presents a clear trade-off. You have a simple, fast method (PTQ) and a complex, powerful one (QAT). So, how do you decide which one is right for your project?

PTQ vs. QAT: How Do You Choose the Right Strategy for Your Model?

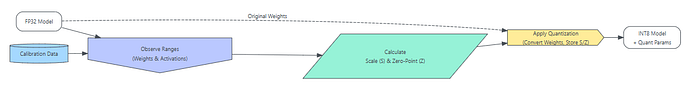

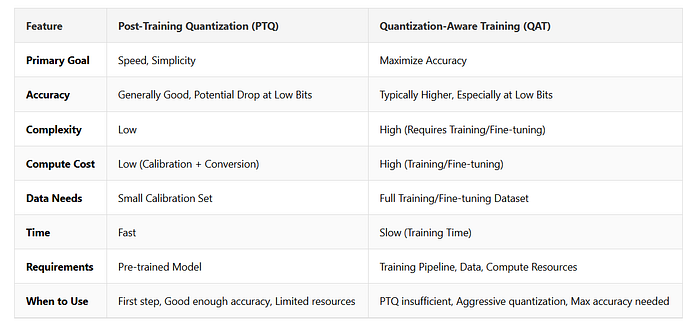

The choice between PTQ and QAT comes down to a trade-off between accuracy and resources.

QAT will almost always result in better accuracy because the model actively learns to compensate for quantization noise; however, it is computationally expensive, requiring a full fine-tuning process and access to a representative training dataset.

PTQ is vastly cheaper and faster, often requiring only a small calibration set and a few minutes to run. The best practice is to always try PTQ first. If the accuracy drop is acceptable, your work is done. If the drop is too large, or if you are targeting very low bit-widths (like 4-bit or 2-bit), QAT is often needed to recover the lost performance. A common hybrid approach is to start from a PTQ model as a baseline and then fine-tune it with QAT for just a few epochs to regain accuracy quickly.

Once you’ve chosen your strategy, you’ll need the right tools to put it into practice.

What Tools Can You Use to Start Quantizing Models Today?

You don’t need to implement these complex algorithms from scratch. Modern deep learning frameworks provide excellent support for quantization.

Hugging Face's libraries are a popular choice. Hugging Face Optimum offers streamlined APIs for applying quantization through backends such as ONNX Runtime and Intel's IPEX (Intel Extension for PyTorch), and it also supports algorithms such as GPTQ. With Optimum, you can often quantize a model in just a few lines of code, specifying (depending on the backend) whether you want static PTQ or QAT.

Within PyTorch, torch.quantization (now torch.ao.quantization) supports Eager Mode and the more flexible FX Graph Mode. Both rely on Observers (to collect statistics) and FakeQuantize modules (for QAT).

TensorFlow integrates quantization through the TensorFlow Model Optimization Toolkit, which can wrap a standard Keras model to apply QAT logic during training.

While these tools make quantization more accessible than ever, it’s not a magic bullet. There are still significant challenges to overcome.

What Hurdles Remain in the Quest for Perfect Model Efficiency?

Despite its benefits, quantization is not without challenges.

The primary hurdle is accuracy degradation. Some models are highly sensitive to precision loss, and PTQ alone can push their accuracy below acceptable levels.

Another challenge is hardware support; the speed benefits of quantization are only realized if the underlying hardware (the specific CPU, GPU, or TPU) has optimized instructions for low-precision integer arithmetic.

Finally, some model architectures are difficult to quantize, particularly those whose operations produce values with a very wide and unpredictable dynamic range, such as normalization layers or certain activation functions. A handful of extreme "outlier" values can stretch the quantization range and leave too few integer levels for everything else. Ongoing research focuses on new techniques for handling these outliers so that quantization works robustly across more architectures.
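The outlier problem is easy to reproduce. In this toy pure-Python example (made-up numbers, symmetric INT8), 999 ordinary activations lie in [-0.1, 0.1] and a single value is 60.0. If the range is set by min/max, that one outlier determines the scale for every other value. The helper int8_codes is illustrative.

```python
def int8_codes(values, max_abs):
    """Symmetric INT8 codes for values, with the range set to [-max_abs, max_abs]."""
    scale = max_abs / 127
    return [max(-127, min(127, round(v / scale))) for v in values]

# 999 ordinary activations spread over [-0.1, 0.1], plus one outlier (toy numbers).
ordinary = [0.01 * ((i % 21) - 10) for i in range(999)]
acts = ordinary + [60.0]

# Min/max calibration: the single outlier sets the range (and scale) for everyone.
codes_minmax = int8_codes(ordinary, max(abs(v) for v in acts))
# Range fitted to the ordinary values only; the outlier itself would be clipped.
codes_fitted = int8_codes(ordinary, max(abs(v) for v in ordinary))

print("distinct levels, range set by outlier:", len(set(codes_minmax)))
print("distinct levels, outlier clipped:     ", len(set(codes_fitted)))
```

When the outlier sets the range, every ordinary value rounds to the same code (0). Fitting the range to the ordinary values gives them 21 distinct levels, at the cost of clipping the outlier. Outlier-handling techniques aim to strike a better balance between these two kinds of error.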

Quantization is no longer an optional optimization; it’s a critical step in the deployment pipeline for modern AI. By converting models from bulky FP32 to efficient INT8, it allows us to build faster, smaller, and more cost-effective applications. We’ve seen it’s a world of trade-offs — precision for speed, simplicity for accuracy — and navigating it requires choosing the right strategy, whether it’s the fast and simple PTQ or the powerful and complex QAT. With powerful tools from frameworks like PyTorch and Hugging Face, these techniques are more accessible than ever, enabling the next wave of efficient AI on devices everywhere.

What are your experiences with model optimization? Have you found PTQ to be sufficient, or have you had to move to QAT to save your model’s accuracy? Share your challenges and successes in the comments below!
