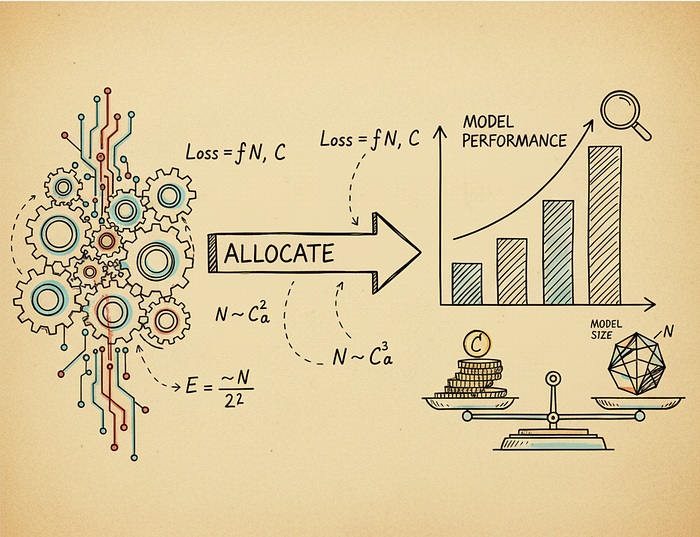

Scaling Laws: How to Allocate Compute for Training Language Models

Author(s): M Originally published on Towards AI. From Chinchilla’s 20:1 rule to SmolLM3’s 3,700:1 ratio: how inference economics rewrote the training playbook. Training a language model is expensive. Really expensive. A single training run for a 70-billion-parameter model can cost …
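To show what these ratios mean, here is a back-of-the-envelope sketch. It treats each ratio as training tokens per model parameter. It also assumes the widely used approximation C ≈ 6·N·D training FLOPs (N parameters, D tokens), which this excerpt does not state. The 3B-parameter model size and the helper names are hypothetical.

```python
# Back-of-the-envelope sketch: what a tokens-per-parameter ratio implies.
# Assumption: training compute is approximated as C ≈ 6 * N * D,
# where N = model parameters and D = training tokens.

def tokens_for_ratio(n_params: float, tokens_per_param: float) -> float:
    """Training tokens D implied by a tokens-per-parameter ratio."""
    return n_params * tokens_per_param


def train_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute in FLOPs using C ≈ 6ND."""
    return 6.0 * n_params * n_tokens


n_params = 3e9  # hypothetical 3B-parameter model
for label, ratio in [("Chinchilla-style 20:1", 20), ("over-trained 3,700:1", 3700)]:
    d = tokens_for_ratio(n_params, ratio)
    c = train_flops(n_params, d)
    print(f"{label}: {d:.2e} tokens, ~{c:.2e} training FLOPs")
```

At a fixed model size, going from 20:1 to 3,700:1 multiplies both the data and the estimated training compute by 185. That extra spend can pay off when a smaller model is cheaper to serve at inference time.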

Data Quality and Filtering at Scale for Training Large Language Models

Author(s): M Originally published on Towards AI. From heuristic filters to AI classifiers: practical techniques for curating trillion-token datasets. Training a language model on the raw internet is like trying to learn from every conversation happening in the world simultaneously. Most of …

Sourcing and Collecting Data for Training Large Language Models

Author(s): M Originally published on Towards AI. Real-world insights from FineWeb, DCLM, The Stack v2, and modern LLM training. When people talk about training language models, the conversation often jumps straight to architecture choices or training techniques. But here’s the reality: you …

Does Cognition AI Matter When We Already Have Claude Code, Cursor, and Copilot?

Author(s): M Originally published on Towards AI. How a startup became a $10.2B company without being the smartest in the room. Crazy amounts of money are flowing into AI coding startups, yet we’re already drowning in AI coding tools. GitHub Copilot suggests …

Perplexity AI: Either Brilliant or Screwed

Author(s): M Originally published on Towards AI. What’s up with Perplexity? Right now, Perplexity AI is doing something that looks either incredibly smart or completely insane. In early October 2025, they made their Comet browser completely free after charging $200 a month …

CPUs, GPUs, NPUs, and TPUs: A Deep Dive into AI Chips

Author(s): M Originally published on Towards AI. A deep dive into the hardware powering AI, from massive data centers to the phone in your pocket. The rise of AI didn’t just require better software. It demanded entirely new hardware. Traditional computer chips, …

How Anthropic Trained Claude Sonnet and Opus Models: A Deep Dive

Author(s): M Originally published on Towards AI. The complete story of Anthropic’s model training journey. If you’ve ever wondered how Claude learned to be helpful without being harmful, you’re about to find out. This is the story of how Anthropic built one …

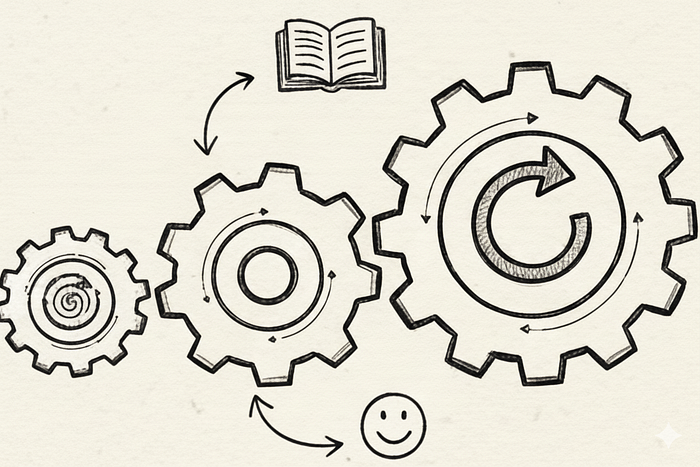

Fine-Tuning and Aligning Large Language Models: A Guide to SFT, RLHF, and What Comes Next

Author(s): M Originally published on Towards AI. A beginner-friendly guide. If you've used ChatGPT, Claude, or any other modern AI assistant, you've used a model that has undergone a complex training process. These models not only learn from large amounts of text …

Apple’s Approach to Large Language Models: Training Methods, Architecture, and Product Integration

Author(s): M Originally published on Towards AI. An analysis of Apple’s AI capabilities and limitations. When Apple announced Apple Intelligence at WWDC 2024, the company finally revealed what it had been quietly building in its machine learning labs. Unlike the splashy product …

Speculative Decoding for Much Faster LLMs

Author(s): M Originally published on Towards AI. How to make LLMs 3x faster without losing quality. Large language models are slow. When you ask ChatGPT or Claude a question, you wait as words come out one by one. This isn’t just frustrating …

Perplexity’s Comet Browser: The AI-Powered Browser That Just Went Free

Author(s): M Originally published on Towards AI. The AI browser wars have begun, and Perplexity just made the first move. Three months ago, if you wanted to try Perplexity’s Comet browser, you’d have to shell out $200 per month. Today, it’s completely …

Advanced Attention Mechanisms in Transformer LLMs

Author(s): M Originally published on Towards AI. A 2025 guide to state-of-the-art attention mechanisms for training and serving modern LLMs. The attention mechanism in the original Transformer can be slow and computationally expensive, particularly with long sequence lengths (i.e., long contexts). Over …
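The original Transformer's attention computes softmax(QKᵀ/√d)·V, scoring every query against every key. The minimal pure-Python sketch below shows where the long-context cost comes from. It uses a single head with no masking or batching, and `naive_attention` and `score_entries` are illustrative helper names, not a library API.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def naive_attention(q, k, v):
    """Single-head scaled dot-product attention: softmax(QK^T / sqrt(d)) V.

    q, k, v are lists of n vectors (lists of floats). Every query is scored
    against every key, so the scores form an n x n matrix.
    """
    d = len(q[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for qi in q:
        # One row of the n x n score matrix: this query against all keys.
        scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        weights = softmax(scores)
        # Weighted sum of value vectors, one output dimension at a time.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out


def score_entries(seq_len):
    """Query-key scores computed per head per layer: n * n."""
    return seq_len * seq_len


for n in (1_024, 8_192, 131_072):
    print(f"{n:>7} tokens -> {score_entries(n):,} scores per head per layer")
```

Doubling the context quadruples the number of scores. That quadratic growth is the cost more efficient attention variants aim to reduce.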

Synthetic Data Generation Methods for LLMs: A Comprehensive Guide

Author(s): M Originally published on Towards AI. A Practical Guide for ML Engineers and Researchers. If you’ve been following the AI landscape, you’ve probably noticed a paradox. While large language models are getting bigger and more capable, the high-quality data needed to …

KV Cache: The Key to Efficient LLM Inference

Author(s): M Originally published on Towards AI. Understanding the optimization that makes real-time LLM generation possible. Large language models face a fundamental efficiency problem during text generation. The attention mechanism at the heart of transformers requires computing relationships between all tokens in …
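The idea behind a KV cache can be sketched with a toy decoder. It assumes a single attention head and uses a hypothetical `project` function (fixed elementwise scaling) in place of learned W_q, W_k, W_v matrices. Real implementations cache per-layer, per-head tensors. The sketch shows only that reusing stored keys and values gives the same result as recomputing them for the whole prefix at every step.

```python
import math


def attend(q, keys, values):
    """Scaled dot-product attention of one query over lists of keys/values."""
    d = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)
    w = [math.exp(s - m) for s in scores]
    total = sum(w)
    return [sum(wi * v[c] for wi, v in zip(w, values)) / total for c in range(len(values[0]))]


def project(x, scale):
    """Hypothetical stand-in for a learned projection matrix."""
    return [scale * xi for xi in x]


class KVCache:
    """Keeps keys/values of processed tokens so each decoding step
    projects only the newest token instead of the whole prefix."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, x):
        q = project(x, 1.0)
        self.keys.append(project(x, 0.5))    # new key, earlier ones reused
        self.values.append(project(x, 2.0))  # new value, earlier ones reused
        return attend(q, self.keys, self.values)


def no_cache_step(prefix):
    """Recompute keys/values for every prefix token (the work caching avoids)."""
    keys = [project(x, 0.5) for x in prefix]
    values = [project(x, 2.0) for x in prefix]
    return attend(project(prefix[-1], 1.0), keys, values)


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy 2-d token embeddings
cache = KVCache()
for t, x in enumerate(tokens):
    cached = cache.step(x)                  # projects only the new token
    fresh = no_cache_step(tokens[: t + 1])  # re-projects the whole prefix
    print(t, cached, fresh)
```

Without the cache, step t re-projects all t + 1 tokens. With it, each step projects one token and appends it, trading memory for repeated computation.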

Essential LLM Papers: A Comprehensive Guide

Author(s): M Originally published on Towards AI. A complete roadmap to understanding the papers that shaped modern AI. Large language models have fundamentally transformed artificial intelligence in just a few years. What started as academic research has evolved into powerful tools that …