RAG 2.0, Finally Getting RAG Right!

Last Updated on April 11, 2024 by Editorial Team

Author(s): Ignacio de Gregorio

Originally published on Towards AI.

The Creators of RAG Present its Successor

Looking at the AI industry, we have grown accustomed to seeing things get ‘killed’ every single day. I myself sometimes cringe when I have to talk about the 23,923rd time something gets ‘killed’ out of the blue.

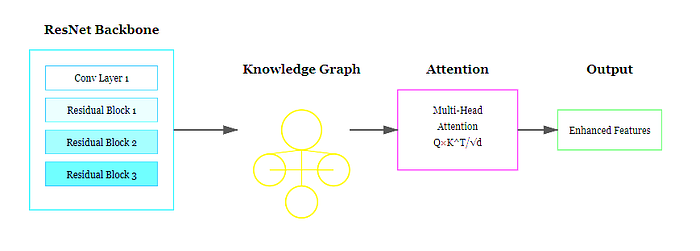

But rarely is the case as compelling as the one Contextual.ai has made with Contextual Language Models (CLMs), in what they call “RAG 2.0”, which aims to make standard Retrieval Augmented Generation (RAG), one of the most popular ways (if not the most popular) of implementing Generative AI models, obsolete.

Behind the claim are none other than the original creators of RAG.

And while this is a huge improvement over the status quo of production-grade Generative AI, one question lingers over this entire subspace: are RAG's days numbered, and are these innovations simply beating a dead horse?

As you may or may not know, all standalone Large Language Models (LLMs), with prominent examples like ChatGPT, have a knowledge cutoff.

What this means is that pre-training is a one-off exercise (unlike continual learning methods). In other words, LLMs have only ‘seen’ data up to a certain point in time.

For instance, ChatGPT's knowledge extends to April 2023 at the time of writing. Consequently, these models are not prepared to answer questions about facts and events that took…

