Handling Imbalanced Datasets in Machine Learning: SMOTE, Oversampling & Undersampling Explained

Author(s): Abinaya Subramaniam Originally published on Towards AI. Imbalanced Datasets (image by author). What are imbalanced datasets? In many real-world classification problems, the number of samples in each class is not balanced. This is called an imbalanced dataset. For example, in …

Building Smarter LLMs with LangChain and RAG: A Beginner’s Guide

Author(s): Harshit Kandoi Originally published on Towards AI. Photo by Alberto Moya on Unsplash. Ever asked an LLM a question and got a confident, slick answer that turned out to be completely wrong? I have, many times. I remember …

Is AGI merely a Silicon Valley illusion?

Author(s): Nehdiii Originally published on Towards AI. From OpenAI to DeepSeek, everyone now claims to be an AGI startup, but by 2025, the explosion of such companies is becoming overwhelming. On 14 April 2023, High-Flyer announced the start of an artificial general …

TAI #150: Qwen3 Impresses as a Robust Open-Source Contender

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI by Louie This week, the AI spotlight turned to Alibaba’s Qwen team with the launch of Qwen3, a comprehensive new family of large language models. Offering …

Stop Flattening Your Data! Why NdLinear Might Be Your AI’s Secret Weapon

Author(s): Vivek Tiwari Originally published on Towards AI. Linear layers are fundamental building blocks in countless neural networks, performing essential transformations on data. The standard approach, often implemented as nn.Linear in frameworks like PyTorch, typically operates on flattened input vectors, effectively treating …

Benchmarking Volga’s On-Demand Compute Layer for Feature Serving: Latency, RPS, and Scalability on EKS

Author(s): Andrey Novitskiy Originally published on Towards AI. End-to-end request latencies and storage read latencies during a load test with maximum worker configuration. TL;DR Real-time machine learning systems require not only efficient models but also robust infrastructure capable of low-latency feature serving …

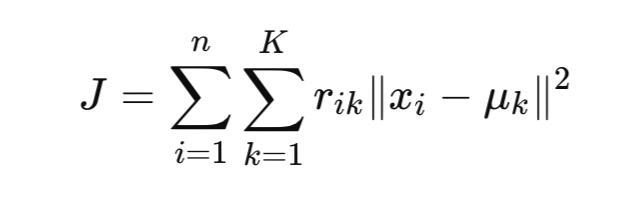

Unsupervised Learning Series #2: K-Means + K-Modes = K-Prototypes — Understanding How Data Type Defines Your Clustering Strategy

Author(s): SETIA BUDI SUMANDRA Originally published on Towards AI. When we step into the world of unsupervised learning, one of the first families of algorithms we meet is the K-Family: K-Means, K-Modes, and K-Prototypes. Each member of this family plays a unique …

DeepSeek Explained Part 5: DeepSeek-V3-Base

Author(s): Nehdiii Originally published on Towards AI. Vegapunk №05 One Piece Character Generated with ChatGPT. This article is the fifth installment of our DeepSeek series and the first to specifically highlight the training methodology of DeepSeek-V3 [1, 2]. As illustrated in the …

Scaling LLM Evaluation

Author(s): Nadav Barak Originally published on Towards AI. Photo by Jungwoo Hong on Unsplash. Large Language Models (LLMs) are transforming machine learning, powering applications like chatbots, RAG, and autonomous agents. But building with LLMs comes with a major hurdle: Their output is …