TAI #192: AI Enters the Scientific Discovery Loop

Author(s): Towards AI Editorial Team. Originally published on Towards AI. What happened this week in AI by Louie: This week, LLMs crossed from tools into participants in scientific discovery. OpenAI released a preprint, “Single-minus gluon tree amplitudes are nonzero,” in which GPT-5.2 …

The 6 Optimization Algorithms: How AI Learns to Learn 10× Faster with 50% Less Memory

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. You’re training a language model with 175 billion parameters. This article explores six optimization …

OpenAI Hires OpenClaw Creator: The Illusion of the “Open” Agentic Future

Author(s): Mandar Karhade, MD, PhD. Originally published on Towards AI. When the architect of the open-source agent revolution joins the closed-source giant, we have to ask whether innovation is being fostered or fenced in. This is a fast one, short …

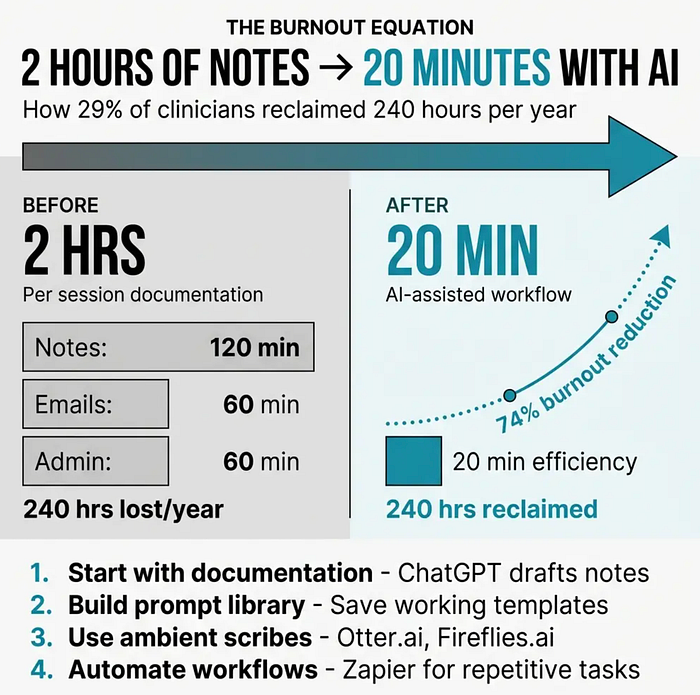

The Admin Work Killing Your Practice Has a Simple Fix You’re Probably Ignoring

Author(s): Bobby Tredinnick. Originally published on Towards AI. Article authored by Bobby Tredinnick, LMSW-CASAC; CEO & Lead Clinician at Interactive Health Companies, including Coast Health Consulting & Interactive International Solutions. Clinicians across the field are exhausted. Not the kind …

Practical Local RAG with .NET and Vector Database

Author(s): Nagaraj. Originally published on Towards AI. A complete guide to implementing Retrieval-Augmented Generation using .NET, LM Studio embeddings, and local vector storage, with no cloud required. Has it ever occurred to you that ChatGPT could answer questions about your company’s documents …

GPU and CPU Utilization While Running Open-Source LLMs Locally using Ollama

Author(s): Muaaz. Originally published on Towards AI. Large Language Models (LLMs) are powerful, but running them locally requires significant hardware resources. Many users rely on open-source models for their accessibility, as closed-source models often come with restrictive licensing and high …

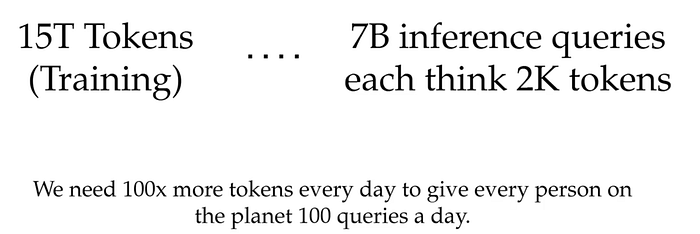

Inside the Forward Pass: Pre-Fill, Decode, and the GPU Economics of Serving Large Language Models

Author(s): Utkarsh Mittal. Originally published on Towards AI. Why Inference Is the Endgame: Pre-training a frontier large language model typically consumes somewhere between 15 trillion and 30 trillion tokens. That sounds like an enormous number, until you do the arithmetic on …

WebMCP: Transforming How AI Agents Interact with the Web

Author(s): Jageen Shukla. Originally published on Towards AI. Imagine asking an AI assistant: “Book me a flight to New York for next Monday.” WebMCP is a proposed web standard that aims to streamline …

You Don’t Need GPT-5 for Agents: The 1.2B Model That Beats Giants

Author(s): MohamedAbdelmenem. Originally published on Towards AI. Forget GPT-5 for agent tasks. LFM 2.5 runs at 359 tokens/sec in 900MB. Here’s why it works and how to fine-tune it for your use case. 1400x overtraining. 900MB memory. 359 tokens/sec. Three lines of …

The Boring AI That Keeps Planes in the Sky

Author(s): Marco van Hurne. Originally published on Towards AI. One of the ways I keep myself busy in the AI domain is by running an AI factory at scale. And I’m not talking about the metaphorical kind where someone prompts an AI …