The 3 RLAIF Approaches: How AI Learns to Align Itself Without Human Labelers

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding AI-Generated Preferences, Constitutional AI Extensions, and Scalable Oversight. Training GPT-4 required thousands of human labelers spending months rating AI outputs. This article discusses the transformative potential of Reinforcement …

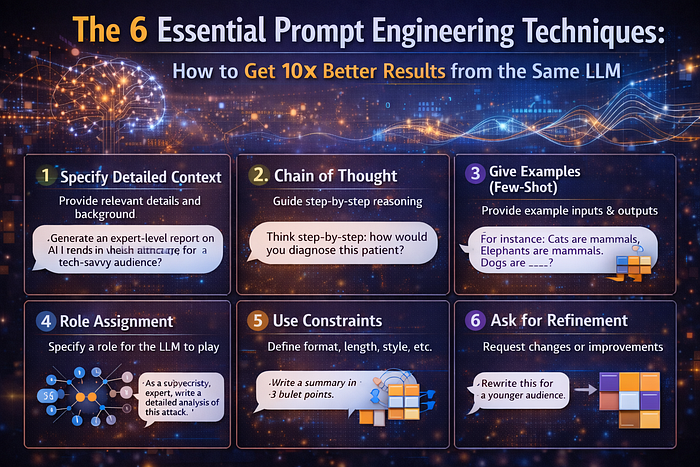

The 6 Essential Prompt Engineering Techniques: How to Get 10× Better Results from the Same LLM

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding Zero-Shot, Few-Shot, Chain-of-Thought, Self-Consistency, Tree of Thoughts, and ReAct. You ask an LLM to analyze market trends. It gives a vague, generic response. Your colleague asks the same model with a different prompt …

The 4 AI Safety Alignment Approaches: How to Build AI That Won’t Lie, Harm, or Manipulate

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding RLHF, Constitutional AI, Red Teaming, and Value Learning. You ask ChatGPT how to make a bomb. It refuses. You ask it to write a racist joke. It declines. You try jailbreaking it with …

The 6 Optimization Algorithms: How AI Learns to Learn 10× Faster with 50% Less Memory

Author(s): TANVEER MUSTAFA Originally published on Towards AI. You're training a language model with 175 billion parameters. This article explores six optimization …

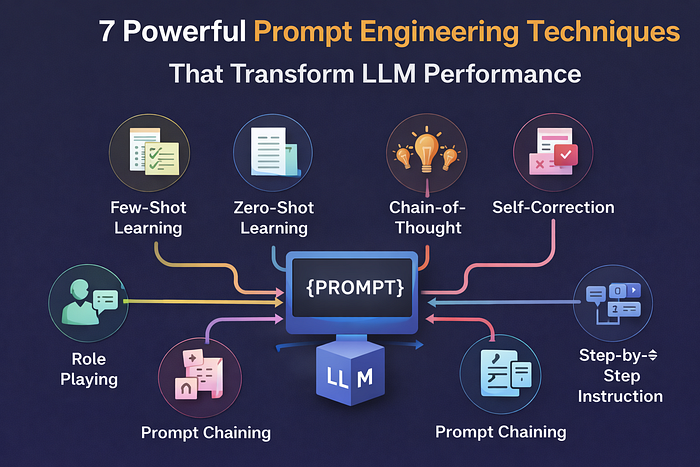

7 Powerful Prompt Engineering Techniques That Transform LLM Performance

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Prompt engineering is the critical skill of crafting instructions that guide Large Language Models (LLMs) to produce reliable, structured outputs. This article explores how the …

The 4 Parameter-Efficient Fine-Tuning Methods: How to Adapt LLMs 100× Faster

Author(s): TANVEER MUSTAFA Originally published on Towards AI. You want to customize GPT-3 for customer service. Traditional fine-tuning requires updating 175 billion parameters: 350GB storage per variant, weeks of training, …

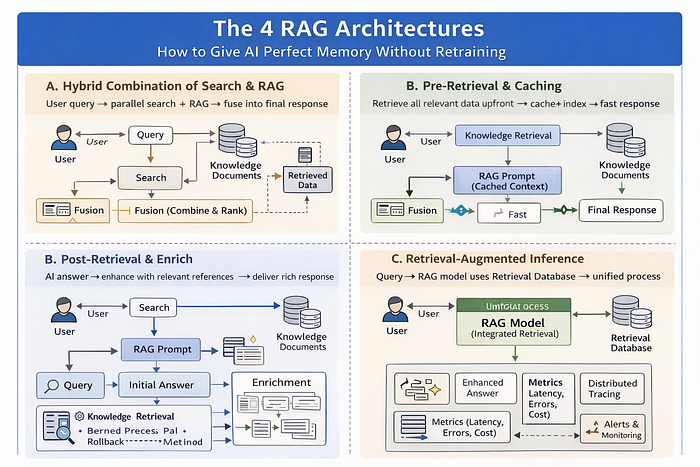

The 4 RAG Architectures: How to Give AI Perfect Memory Without Retraining

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding Naive RAG, Advanced RAG, Modular RAG, and Agentic RAG. Your LLM is brilliant but frustratingly limited. This article delves into the concept of Retrieval-Augmented Generation (RAG), discussing …

The 5 Normalization Techniques: Why Standardizing Activations Transforms Deep Learning

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Training deep neural networks is difficult. Add more layers, and training becomes unstable: gradients explode or vanish, learning slows, or the model fails …

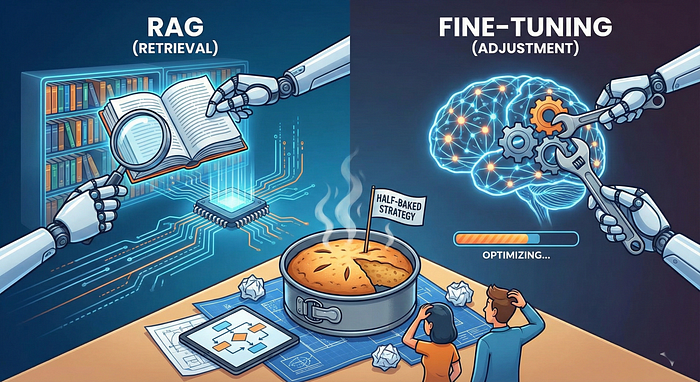

RAG vs. Fine-Tuning: Why Your LLM Strategy Is Probably Half-Baked

Author(s): TANVEER MUSTAFA Originally published on Towards AI. When I first started building LLM applications, I fell into a common trap: I treated RAG (Retrieval-Augmented Generation) and fine-tuning as interchangeable tools. I …

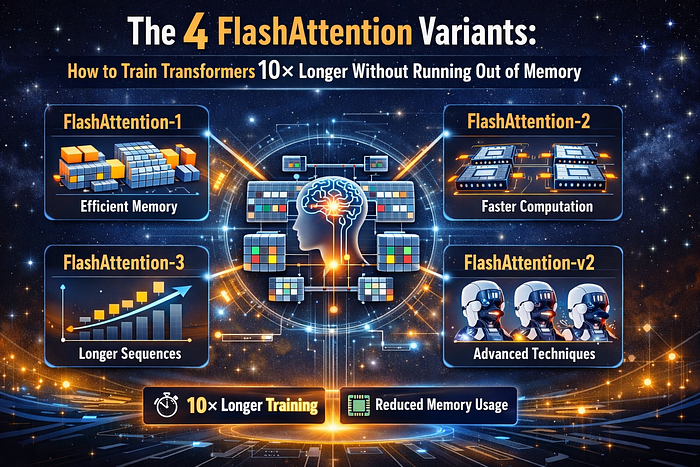

The 4 Flash Attention Variants: How to Train Transformers 10× Longer Without Running Out of Memory

Author(s): TANVEER MUSTAFA Originally published on Towards AI. You're training a Transformer. This article discusses four Flash Attention variants that enhance …

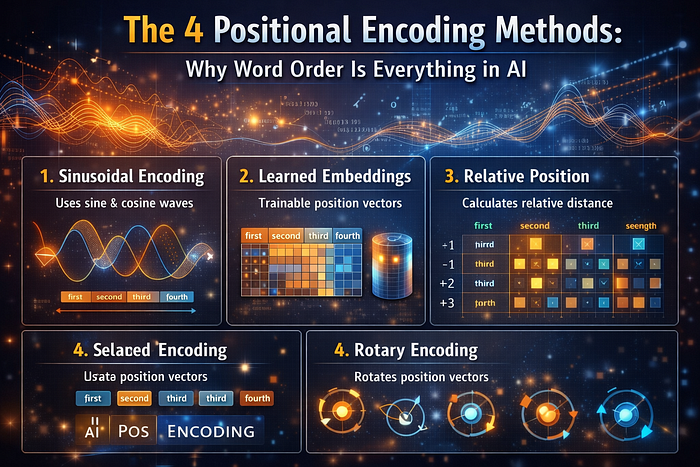

The 4 Positional Encoding Methods: Why Word Order Is Everything in AI

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding how Transformers learn sequences without sequential processing. This article delves into four distinctive methods of positional …

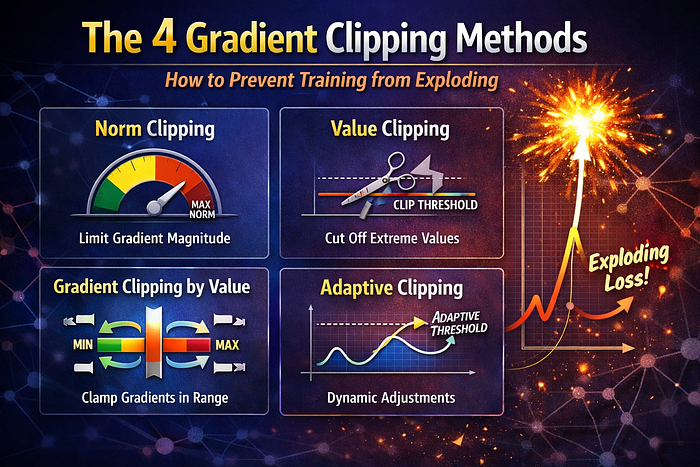

The 4 Gradient Clipping Methods: How to Prevent Training from Exploding

Author(s): TANVEER MUSTAFA Originally published on Towards AI. You're training a deep neural network. This article explores the critical issue of exploding gradients in deep learning, …

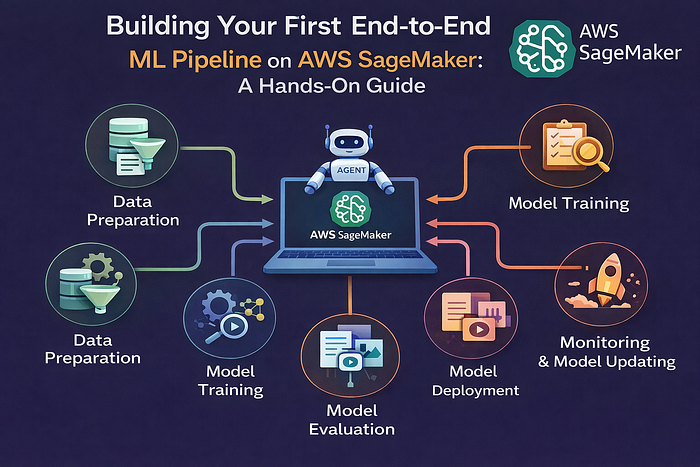

Building Your First End-to-End ML Pipeline on AWS SageMaker: A Hands-On Guide

Author(s): TANVEER MUSTAFA Originally published on Towards AI. From Model Training to Production Monitoring: A Complete Walkthrough. Building a machine learning model is one thing; deploying it to production and keeping it running reliably is another challenge entirely. This hands-on …

From Chaos to Intelligence: How AI Training Actually Works

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Understanding the fundamental mechanics of training large language models from scratch. This article discusses the intricate process of training large …

Building LLMs from Scratch: 7 Essential Types & Complete Implementation Guide

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Large Language Models (LLMs) have revolutionized artificial intelligence, powering applications from chatbots to code generation. Building an LLM from scratch is a complex …