Anomaly Detection: A Comprehensive Guide

Author(s): Alok Choudhary. Originally published on Towards AI. Anomaly detection is one of those concepts in machine learning that looks deceptively simple but has a huge impact on real-world applications, from fraud prevention to equipment maintenance, …

Why Every Developer Should Learn Prompt Engineering This Year

Author(s): TCE Tech Jankari. Originally published on Towards AI. If there is one skill that separates fast-moving developers from the rest in 2025, it is not a new framework, a backend library, or a cloud certification. …

Cookiecutter Data Science: A Standardized, Flexible Approach for Modern Data Projects

Author(s): Abinaya Subramaniam. Originally published on Towards AI. In the ever-evolving world of data science, one of the biggest challenges isn’t the algorithms or tools; it’s project organization. Whether you are working solo or collaborating with a team, maintaining a clean, reproducible, …

Fine-Tuning a Quantized LLM with LoRA: The Phi-3 Mini Walkthrough

Author(s): Akash Verma. Originally published on Towards AI. In this post, we’ll take our first steps toward efficient large language model (LLM) experimentation: setting up the environment, understanding quantization, and loading …

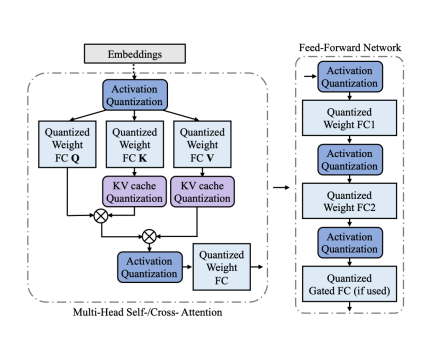

Quantization: How to Accelerate Big AI Models

Author(s): Burak Degirmencioglu. Originally published on Towards AI. In the world of deep learning, we are in an arms race for bigger, more powerful models. While this has led to incredible capabilities, it has also created a significant problem: these models are …

How I Fine-Tuned a 7B AI Model on My Laptop (and What I Learned)

Author(s): Manash Pratim. Originally published on Towards AI. Most people think training large language models requires data centers, huge GPUs, and complex hardware setups. A year ago, that …

Fine-Tuning a Small LLM with QLoRA: A Complete Practical Guide (Even on a Single GPU)

Author(s): Manash Pratim. Originally published on Towards AI. Large Language Models are amazing, but what if you could turn one into your own domain expert? Large Language Models (LLMs) like GPT-4 or Llama 3 are incredible generalists. They can write essays, answer …

Transformer in Action: Optimizing Self-Attention with Attention Approximation

Author(s): Kuriko Iwai. Originally published on Towards AI. Discover self-attention mechanisms and attention approximation techniques with practical examples. The Transformer architecture, introduced in the “Attention Is All You Need” paper, has revolutionized Natural Language Processing (NLP). This …

Claude Projects, Sub-Agents, or Skills? Here’s How to Actually Choose

Author(s): Mayank Bohra. Originally published on Towards AI. Most people pick the wrong Claude tool for their task and wonder why AI isn’t working. Here’s the decision framework that eliminates guesswork, from someone who’s tested all three in production. I watched a …

Why Language Models Are “Lost in the Middle”

Author(s): Mohit Sewak, Ph.D. Originally published on Towards AI. Our most powerful AIs can read a library of information, but they often forget what’s in the middle chapters. Let’s talk about the weirdest, most hilarious, and, frankly, most important problem in AI …