GLM-4.7-Flash: Z.ai’s Free Coding Model and What the Benchmarks Say

Author(s): JP Caparas Originally published on Towards AI. A look at the 30B MoE model that just dropped with unlimited free API access. Z.ai announced GLM-4.7-Flash a few hours ago. The model is free. Not “free tier with limits” free, but actually …

What Amodei and Hassabis Said About AGI Timelines, Jobs, and China at Davos

Author(s): JP Caparas Originally published on Towards AI. Engineers who don’t write code, models that learn to deceive, and a “country of geniuses in a data centre”. At Davos this week, something unusual happened. Dario Amodei, CEO of Anthropic, and Demis Hassabis, …

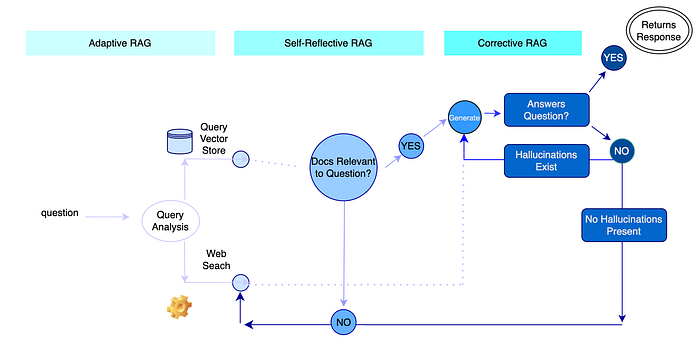

Build Advanced RAG with LangGraph

Author(s): tanta base Originally published on Towards AI. We all know and love Retrieval-Augmented Generation (RAG). The simplest implementation of RAG is a vector store with documents connected to a Large Language Model to generate a response …

KV Cache in LLM Inference

Author(s): Ayoub Nainia Originally published on Towards AI. If you’ve ever tried to run a model with a longer prompt, increased batch size, or enabled beam search and suddenly hit CUDA out-of-memory, there’s a high chance the culprit wasn’t the model weights. …

Persistence in LangGraph — Deep, Practical Guide

Author(s): Rashmi Originally published on Towards AI. Persistence in LangGraph means storing and restoring graph state so an agent/workflow can: … The article explores the importance of persistence in LangGraph, …

Why MCP Matters: A Deep Dive into Model Context Protocol

Author(s): Rashmi Originally published on Towards AI. MCP is a standard protocol that lets AI apps and agents connect to external tools and data sources in a consistent way. Instead of building a custom …

Stop Googling Your Clients: How to Build an Auto-Updating “Dossier” System for Every Meeting

Author(s): Anna Jey Originally published on Towards AI. A step-by-step guide to using Make.com and Perplexity to automate your pre-meeting research and reclaim your mental energy. We have all been there. It is 1:58 PM. You have a Zoom call at 2:00 …

The Hidden Attack Surface in Every LLM: How Special Tokens Enable 96% Jailbreak Success Rates

Author(s): Suchitra Malimbada Originally published on Towards AI. Understanding how reserved symbols designed to structure AI conversations become weapons for prompt injection. When OpenAI’s tokenizer encounters <|im_start|>, it doesn’t see twelve characters. It sees a single atomic …

A Practical Guide to Vibe Engineering

Author(s): Kamen Zhekov Originally published on Towards AI. I’ve had LLMs write me entire features in minutes and I’ve had them generate thousands of lines of unusable garbage. The difference is usually not the model, but rather whether I treated it …

The 7 Essential Types of LLM Benchmarking: A Complete Guide to Evaluating AI Language Models

Author(s): TANVEER MUSTAFA Originally published on Towards AI. As Large Language Models (LLMs) become integral to business operations and everyday applications, understanding their true capabilities has never …