KV Cache in LLM Inference

Author(s): Ayoub Nainia. Originally published on Towards AI. If you’ve ever tried to run a model with a longer prompt, increased batch size, or enabled beam search and suddenly hit CUDA out-of-memory, there’s a high chance the culprit wasn’t the model weights. …

Learning CUDA From First Principles

Author(s): Ayoub Nainia. Originally published on Towards AI. As a PhD student working on AI and NLP, I’ve spent quite some time using PyTorch and other high-level frameworks that abstract away the GPU. But recent discussions about whether I should learn CUDA …

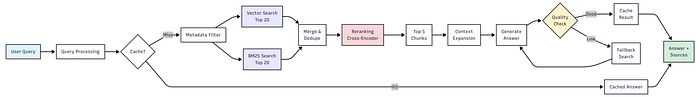

Production RAG: The Chunking, Retrieval, and Evaluation Strategies That Actually Work

Author(s): Ayoub Nainia. Originally published on Towards AI. RAG isn’t a retrieval problem; it’s a system design problem. The sooner you start treating it like one, the sooner it will stop breaking. If you’ve built your first RAG (Retrieval-Augmented Generation) system, you’ve …

LLM Evaluation Is Broken: Why BLEU and ROUGE Don’t Measure Real Understanding

Author(s): Ayoub Nainia. Originally published on Towards AI. Large Language Models can now summarize research papers, analyze data, and even draft academic arguments. Yet behind the flood of progress reports and leaderboard charts, one question remains stubbornly neglected: How do we actually …