How To Use Counterfactual Evaluation To Estimate Online AB Test Results

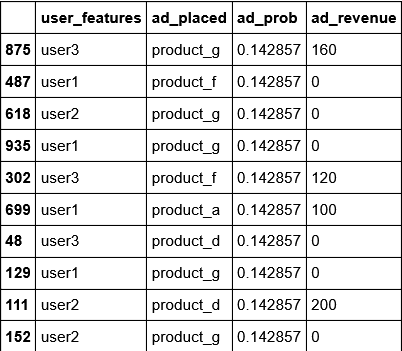

Author(s): ___. Originally published on Towards AI. Definition: In this article, I will explain a principled approach to estimating the expected performance of a model in an online AB test using only offline data. This is very useful to help decide which …

Random Walk in Node Embeddings (DeepWalk, node2vec, LINE, and GraphSAGE)

Author(s): Edward Ma. Originally published on Towards AI. Graph Embeddings. Photo by Steven Wei on Unsplash. Instead of traditional machine learning classification, we can consider using a graph neural network (GNN) to solve node classification problems. By providing an …

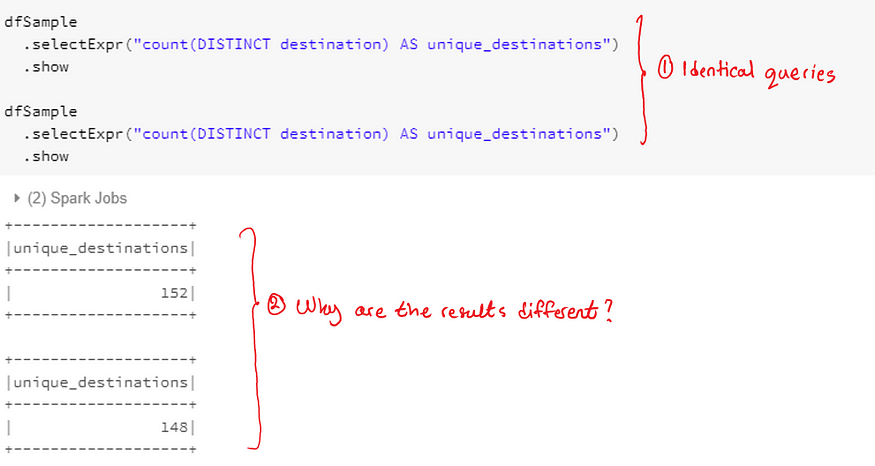

A Practical Tip When Working With Random Samples On Spark

Author(s): ___. Originally published on Towards AI. In this article, I will share a crucial tip for using Spark to analyze a random sample of a data frame. The code to reproduce the results can be found here. It’s an HTML version …

When Variance and Standard Deviation Fail to Explain Variability!

Author(s): Astha Puri. Originally published on Towards AI. We all know the definition of variance: it helps us understand how dispersed the data points are around the mean. If the data points are far off from the mean, we have larger …

Top Restaurant Finder Nearby

Author(s): Chittal Patel. Originally published on Towards AI. Photo by Jay Wennington on Unsplash. Introduction: In this project, I created a basic data science project, Top Restaurant Finder, which returns the top restaurants near your address. I did explore the …

Generate Quotes with Web Scraping, GloVe Embeddings, and LSTM in PyTorch

Author(s): Lakshmi Narayana Santha. Originally published on Towards AI. Introduction: With advances in NLP research, especially in language models, text generation is a classical machine learning task that is solved using recurrent networks. In this article, we walk through …

Building a Spam Detector Using Python’s NLTK Package

Author(s): Bindhu Balu. Originally published on Towards AI. NLTK: Natural Language Toolkit. In this part, we will go through an end-to-end walkthrough of building a very simple text classifier in Python 3. Our goal is to build a predictive …

Why Batch Normalization Matters?

Author(s): Aman Sawarn. Originally published on Towards AI. Understanding why batch normalization works, along with its intuitive elegance. Batch Normalization (BN) has been state of the art since its inception. It enables us to opt for higher learning rates and use sigmoid activation functions even …