Inside the Forward Pass: Pre-Fill, Decode, and the GPU Economics of Serving Large Language Models

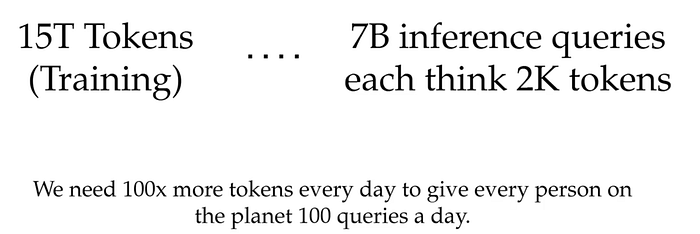

Author(s): Utkarsh Mittal Originally published on Towards AI. Why Inference Is the Endgame: Pre-training a frontier large language model typically consumes somewhere between 15 trillion and 30 trillion tokens. That sounds like an enormous number — until you do the arithmetic on …

You Don’t Need GPT-5 for Agents: The 1.2B Model That Beats Giants

Author(s): MohamedAbdelmenem Originally published on Towards AI. Forget GPT-5 for agent tasks. LFM 2.5 runs at 359 tokens/sec in 900MB. Here’s why it works and how to fine-tune it for your use case. 1400x overtraining. 900MB memory. 359 tokens/sec. Three lines of …

The boring AI That Keeps Planes in The Sky

Author(s): Marco van Hurne Originally published on Towards AI. One of the ways I keep myself busy in the AI domain is by running an AI factory at scale. And I’m not talking about the metaphorical kind where someone prompts an AI …

The Roadmap of Mathematics for Machine Learning

Author(s): Tivadar Danka Originally published on Towards AI. A complete guide to linear algebra, calculus, and probability theory. Understanding the mathematics behind machine learning algorithms is a superpower. Here’s the full roadmap for you. This article presents a comprehensive curriculum that guides readers …

The Complete Guide to RAG: Why Retrieval-Augmented Generation Is the Backbone of Enterprise AI in 2026

Author(s): Faisal haque Originally published on Towards AI. How a simple architectural pattern became the $11 billion standard for making AI actually useful — and how you can master it. Eighteen months ago, a VP at a Fortune 500 company asked me …

The Rise of Synthetic Labor

Author(s): Sam Okoye Originally published on Towards AI. Abstract: Advanced economies are entering a sustained structural labor deficit driven by demographic decline, aging populations, and persistent sector-specific shortages. Traditional automation, including robotic process automation and narrow task-based systems, has delivered productivity improvements …

Agent-to-Agent (A2A) Protocol: The Future of Multi-Agent Systems

Author(s): Alok Ranjan Singh Originally published on Towards AI. Understanding A2A, agent communication protocols, and the future of distributed AI systems. Most teams aren’t struggling to build AI agents anymore. They’re struggling to live with the ones they already built. The same …

You Can’t Improve AI Agents If You Don’t Measure Them

Author(s): Gowtham Boyina Originally published on Towards AI. This agent-eval Framework Runs Controlled Experiments on Your Codebase. I’ve watched teams add MCP servers to their projects, rewrite documentation for AI agents, or switch from Sonnet to Opus — and then have no …

GLM-5 Runs a Vending Machine Business for a Year and Finishes With $4,432

Author(s): Gowtham Boyina Originally published on Towards AI. That’s the Benchmark for Agentic Engineering. I’ve tested dozens of coding assistants that can write functions, fix bugs, and refactor code. Most fail spectacularly when you ask them to complete multi-day projects — they …

Agentic Engineering Roadmap

Author(s): Aqil Khan Originally published on Towards AI. Skills, Tools & Resources. In 2025, developers experimented with AI agents. In 2026, organizations are hiring agentic engineers to build them at scale. What started as “vibe coding” — typing natural-language prompts and watching …

The Efficiency Wall: Why the Next 1,000x Leap Isn’t More GPUs

Author(s): Kapardhi kannekanti Originally published on Towards AI. The fundamental flaw in modern AI architecture, and the biological “hack” to solve it. We are currently witnessing a massive misallocation of capital in Silicon Valley and beyond. We are burning billions of dollars …

Super Bowl LX: The Night LLMs Went Fully Mainstream (And What It Actually Teaches Us About AI)

Author(s): Nikhil Originally published on Towards AI. Super Bowl LX is the first time foundation models and AI assistants were simultaneously the product, the message, and part of the underlying infrastructure of the event, and that gives us an unusually clear signal …

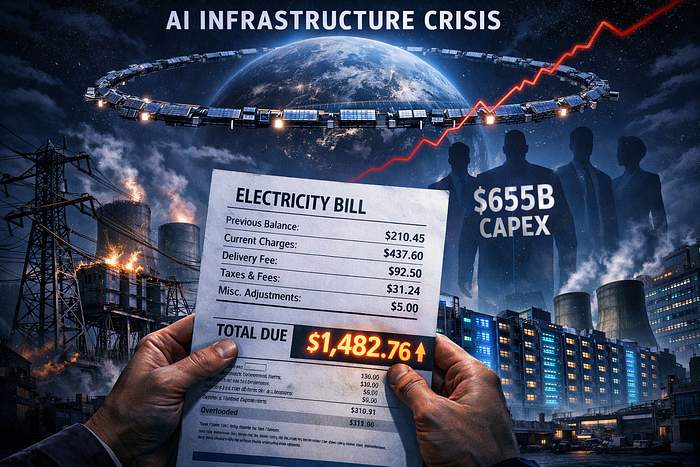

Big Tech Is Burning $655 Billion to Build AI on a Power Grid From the 1950s. Musk Says Put It in Space.

Author(s): Zoom In AI Originally published on Towards AI. Your electric bill is helping bankroll Bezos’s compute buildout. Elon wants to move the whole thing into orbit. Neither plan is proven yet. That is the terrifying part. By Zoom In AI | …

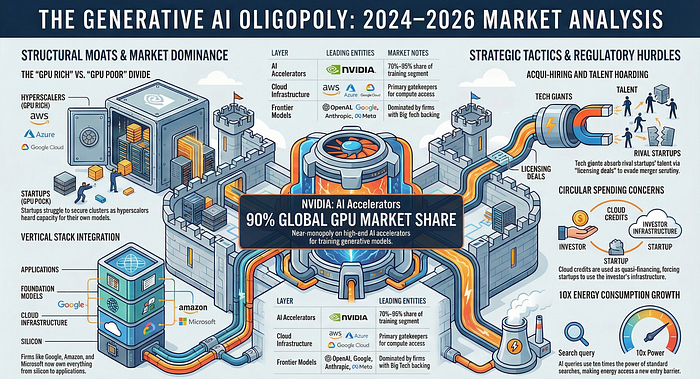

The Generative AI Oligopoly: How Big Tech is Building “Old Moats” for the New Era (2024–2026)

Author(s): Wahidur Rahman Originally published on Towards AI. Is Generative AI Becoming a Big Tech Oligopoly? An In-Depth 2024–2026 Analysis. The promise of artificial intelligence was supposed to democratize innovation. Instead, we’re witnessing the construction of the most capital-intensive moat in tech …

Building a Production-Grade Autonomous LLM Agent with Tool Use, Memory, and Multimodal Capabilities

Author(s): Adi Insights and Innovations Originally published on Towards AI. A complete technical walkthrough of designing, implementing, and benchmarking a modern AI agent architecture. This article walks through building a production-grade autonomous agent with: Modern AI systems are moving toward Agentic Architectures, …