Inside Vector Databases: Engineering High-Dimensional Search for Modern AI Systems

Author(s): Rizwanhoda Originally published on Towards AI. The real bottleneck in modern AI systems is not the LLM. Photo by Huzeyfe Turan on Unsplash. Vector databases serve as specialized infrastructure for managing high-dimensional …

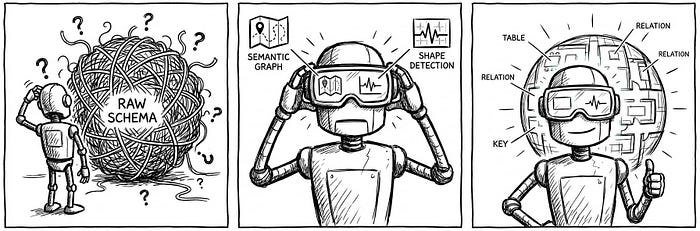

Engineering the Semantic Layer: Why LLMs Need “Data Shape,” Not Just “Data Schema”

Author(s): Shreyash Shukla Originally published on Towards AI. Image Source: Google Gemini. The “Context Window” Economy. In the world of Large Language Models (LLMs), attention is a finite currency. While context windows are expanding, the “Lost in the Middle” phenomenon remains a …

The “Strawberry” Signal: OpenAI’s Next Model Will Eat Its Platform

Author(s): MohamedAbdelmenem Originally published on Towards AI. OpenAI’s Frontier platform locks in today’s AI workflows. Its “Strawberry” research will make them obsolete. Here’s your strategic hedge. If you are a strategist, CTO, or investor tracking the enterprise AI stack, this is the …

I Built an AI App That Trains SQL Like a Personal Trainer

Author(s): Mukundan Sankar Originally published on Towards AI. I built a Streamlit SQL coach that grades your queries and fixes your form without giving away the answer. I use SQL a lot. I made this app to help me when my queries …

The Great Bifurcation: How Hardware Root-of-Trust Determines Whether AI Leads to Reality or Illusion

Author(s): Simplified Complexity Originally published on Towards AI. As the world teeters between a verifiable “Reality” and a synthetic “Illusion,” the difference lies in the silicon. Here is why the United Kingdom’s legal and engineering heritage mandates a shift toward Hardware Root …

What Are World Models? The Blueprint for the Next Decade of AI

Author(s): Ampatishan Sivalingam Originally published on Towards AI. We built machines that can talk. Now we’re building machines that can think, plan, and imagine, before they ever act. A toddler reaches for a stack of wooden blocks. She doesn’t just see the …

What OpenClaw’s Security Disasters Teach Us About the Future of AI Agents

Author(s): thamilvendhan Originally published on Towards AI. In January 2026, a weekend project by Austrian developer Peter Steinberger broke the internet. OpenClaw (originally called Clawdbot) — a self-hosted AI agent that lives in your WhatsApp, Telegram, and Slack — racked up 9,000 …

Agent Triangle: 3 Paths to AI Workforce in 2026

Author(s): Aqil Khan Originally published on Towards AI. The agentic AI revolution is no longer a prediction; it’s happening right now. Gartner predicts that up to 40% of enterprise applications will include integrated …

AI’s Diminishing Returns: Avoiding the Overreliance Trap

Author(s): Prasoon Mukherjee Originally published on Towards AI. “I find it hard to see, how there can be a good return on investment given, the current math.” This warning about AI infrastructure economics is not just for tech investors, but it has …

TAI #192: AI Enters the Scientific Discovery Loop

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI, by Louie. This week, LLMs crossed from tools into participants in scientific discovery. OpenAI released a preprint, “Single-minus gluon tree amplitudes are nonzero,” in which GPT-5.2 …

OpenAI Hires OpenClaw Creator: The Illusion of the “Open” Agentic Future

Author(s): Mandar Karhade, MD. PhD. Originally published on Towards AI. When the architect of the open-source agent revolution joins the closed-source giant, we have to ask if innovation is being fostered or fenced in. This is a fast one, short …

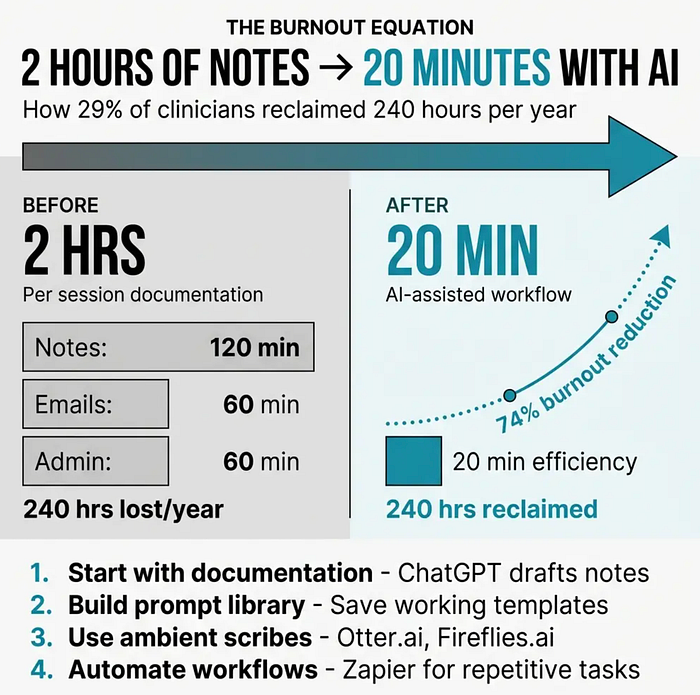

The Admin Work Killing Your Practice Has a Simple Fix You’re Probably Ignoring

Author(s): Bobby Tredinnick Originally published on Towards AI. Article authored by Bobby Tredinnick, LMSW-CASAC, CEO & Lead Clinician at Interactive Health Companies, including Coast Health Consulting & Interactive International Solutions. Created by OpenAI. Clinicians across the field are exhausted. Not the kind …

GPU and CPU Utilization While Running Open-Source LLMs Locally using Ollama

Author(s): Muaaz Originally published on Towards AI. Large Language Models (LLMs) are powerful, but running them locally requires significant hardware resources. Many users rely on open-source models due to their accessibility, as closed-source models often come with restrictive licensing and high …

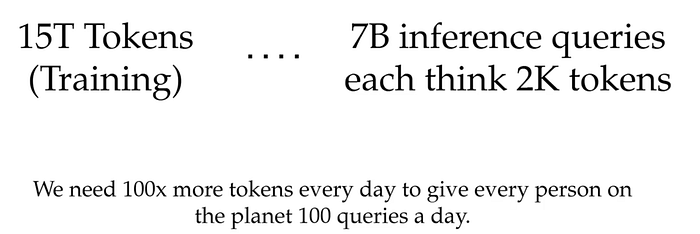

Inside the Forward Pass: Pre-Fill, Decode, and the GPU Economics of Serving Large Language Models

Author(s): Utkarsh Mittal Originally published on Towards AI. Why Inference Is the Endgame. Pre-training a frontier large language model typically consumes somewhere between 15 trillion and 30 trillion tokens. That sounds like an enormous number, until you do the arithmetic on …

You Don’t Need GPT-5 for Agents: The 1.2B Model That Beats Giants

Author(s): MohamedAbdelmenem Originally published on Towards AI. Forget GPT-5 for agent tasks. LFM 2.5 runs at 359 tokens/sec in 900MB. Here’s why it works and how to fine-tune it for your use case. 1400x overtraining. 900MB memory. 359 tokens/sec. Three lines of …