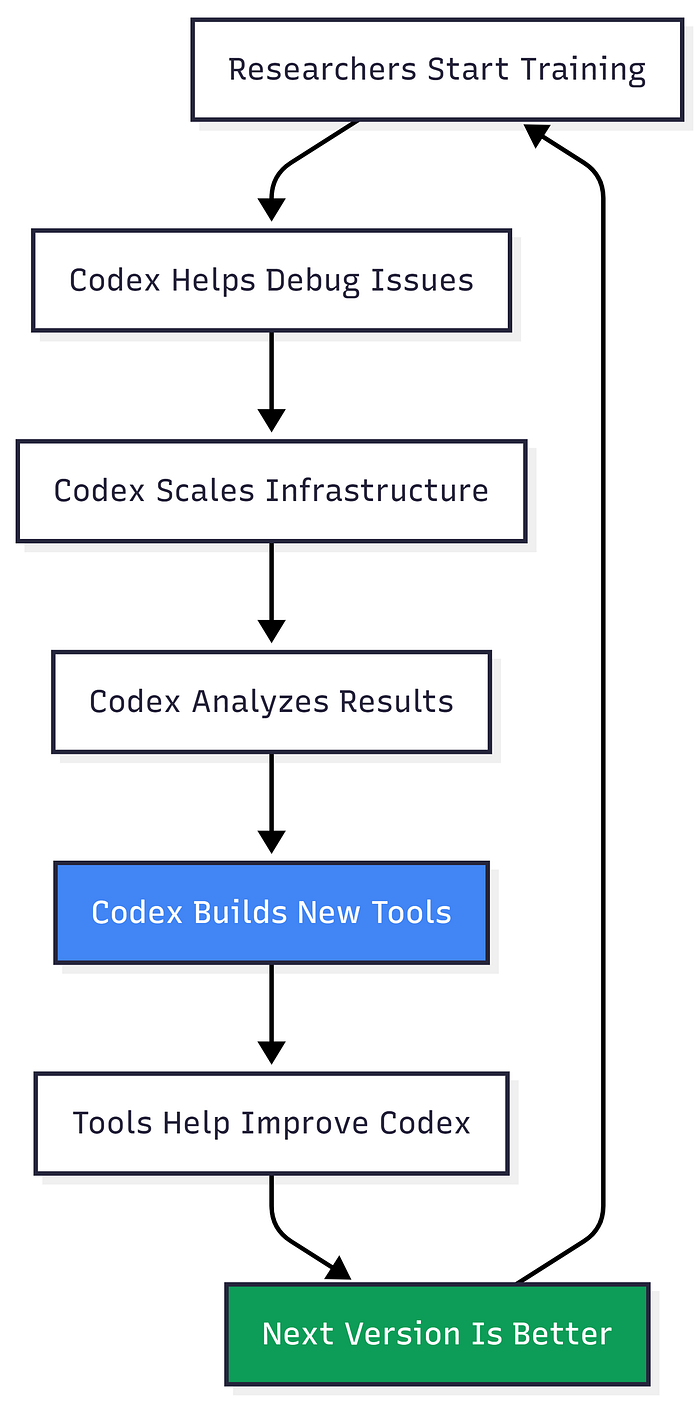

The AI That Built Itself: What GPT-5.3-Codex Means For Developers

Author(s): AbhinayaPinreddy. Originally published on Towards AI. The First AI Model That Helped Create Its Own Next Version Just Launched. Here’s What Changed. February 5, 2026, 9:45 AM. Anthropic panics and moves their big launch up by 15 minutes. This isn’t just …

Prompt to Protocol: Architecting Agent-Oriented Infrastructure for Production LLMs

Author(s): Shreya Singhal. Originally published on Towards AI. The “Chatbot Era” is dead. Here is how to navigate the microservices moment for AI and choose between LangGraph and AutoGen. If you’ve spent the last few years building AI applications, you’ve probably hit …

I “Vibe Coded” Using Cursor (No Code Required)

Author(s): Adi Insights and Innovations. Originally published on Towards AI. Andrej Karpathy’s new philosophy changes everything. Here is how I built a RAG app by just talking to my computer. Here is how I …

The Role of Signal-to-Noise in Loss Convergence

Author(s): Austin DeWolfe. Originally published on Towards AI. Consider the normal NLP training curve during pre-training. That nice beautiful line of healthy training. Why does it behave like that? Why does the image above not behave like that? …

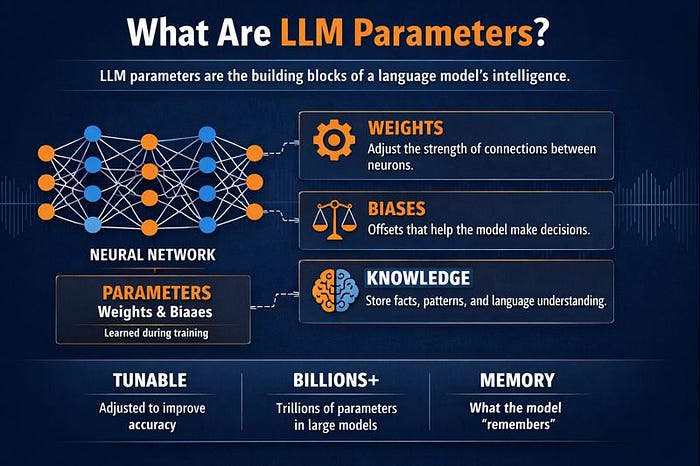

What Are LLM Parameters? A Simple Explanation of Weights, Biases, and Scale

Author(s): Mandar Panse. Originally published on Towards AI. No complicated words. Just real talk about how this stuff works. Large Language Models (LLMs) like GPT, LLaMA, and Mistral contain billions of parameters, primarily weights and biases, that define how the model understands …

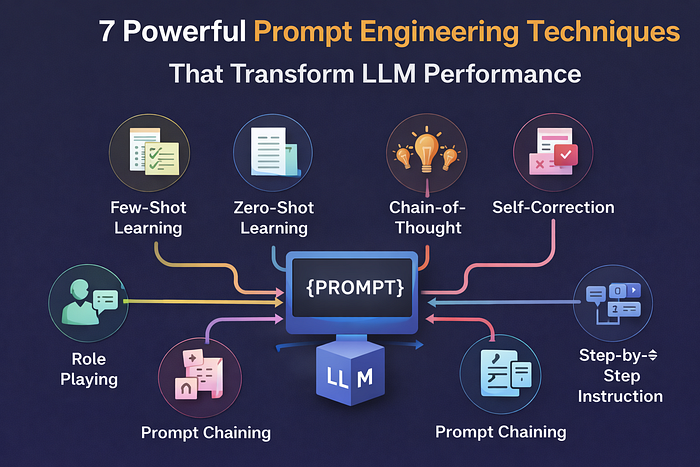

7 Powerful Prompt Engineering Techniques That Transform LLM Performance

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. Prompt engineering is the critical skill of crafting instructions that guide Large Language Models (LLMs) to produce reliable, structured outputs. This article explores how the …

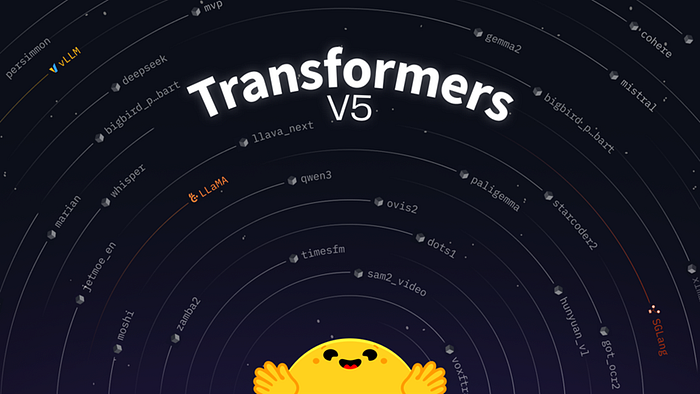

Transformers v5 – Hugging Face’s Next Big Leap in Simple and Powerful AI Models

Author(s): Aniket Sanyal. Originally published on Towards AI. Hugging Face has unveiled Transformers v5, the latest major release of its popular open-source library that powers many AI models …

Anthropic’s Improved Workflow: When Your Hacks Ship as Features

Author(s): Nick Porter. Originally published on Towards AI. Hiya! My name is Nick and I love writing Web apps! I have been writing in various languages both front-end and back-end professionally since ’98. My workflow, needless to say, has evolved considerably over …

Top 20 Regression KPI Interview Questions and Answers (Part 1 of 2)

Author(s): Shahidullah Kawsar. Originally published on Towards AI. Machine Learning Interview Preparation, Part 22. Key Performance Indicators (KPIs) such as Mean Squared Error (MSE), Root Mean Squared Error (RMSE), and Mean Absolute Error (MAE) provide quantitative ways to measure how closely model …

The 4 Parameter-Efficient Fine-Tuning Methods: How to Adapt LLMs 100× Faster

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. You want to customize GPT-3 for customer service. Traditional fine-tuning requires updating 175 billion parameters: 350 GB of storage per variant, weeks of training, …