You Don’t Need GPT-5 for Agents: The 1.2B Model That Beats Giants

Author(s): MohamedAbdelmenem Originally published on Towards AI. Forget GPT-5 for agent tasks. LFM 2.5 runs at 359 tokens/sec in 900MB. Here’s why it works and how to fine-tune it for your use case. 1400x overtraining. 900MB memory. 359 tokens/sec. Three lines of …

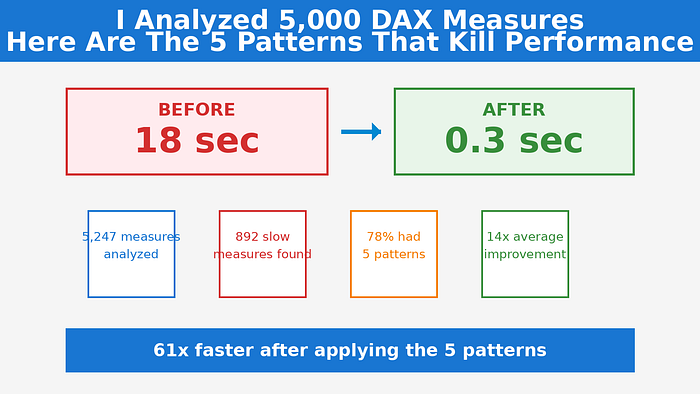

I Analyzed 5,000 DAX Measures. Here Are The 5 Patterns That Kill Performance.

Author(s): Gulab Chand Tejwani Originally published on Towards AI. 18 seconds for one measure. The dashboard was unusable. I analyzed 5,247 DAX measures to find what kills performance. 78% had these 5 patterns. Fix one, get 14x faster. He clicks “Refresh” on …

GLM-5 Runs a Vending Machine Business for a Year and Finishes With $4,432

Author(s): Gowtham Boyina Originally published on Towards AI. That’s the Benchmark for Agentic Engineering. I’ve tested dozens of coding assistants that can write functions, fix bugs, and refactor code. Most fail spectacularly when you ask them to complete multi-day projects: they …

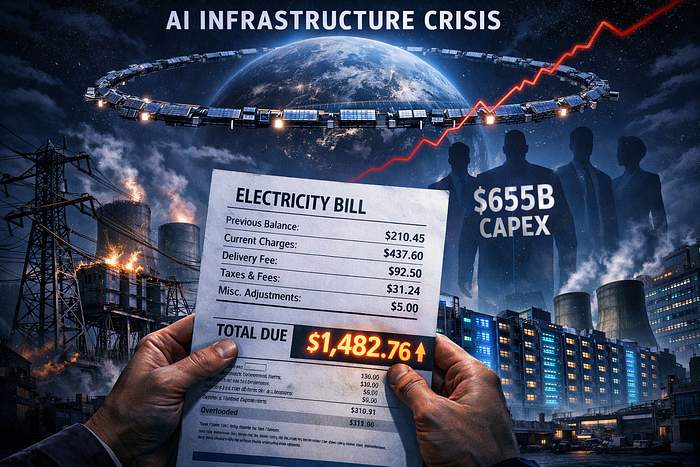

Big Tech Is Burning $655 Billion to Build AI on a Power Grid From the 1950s. Musk Says Put It in Space.

Author(s): Zoom In AI Originally published on Towards AI. Your electric bill is helping bankroll Bezos’s compute buildout. Elon wants to move the whole thing into orbit. Neither plan is proven yet. That is the terrifying part. …

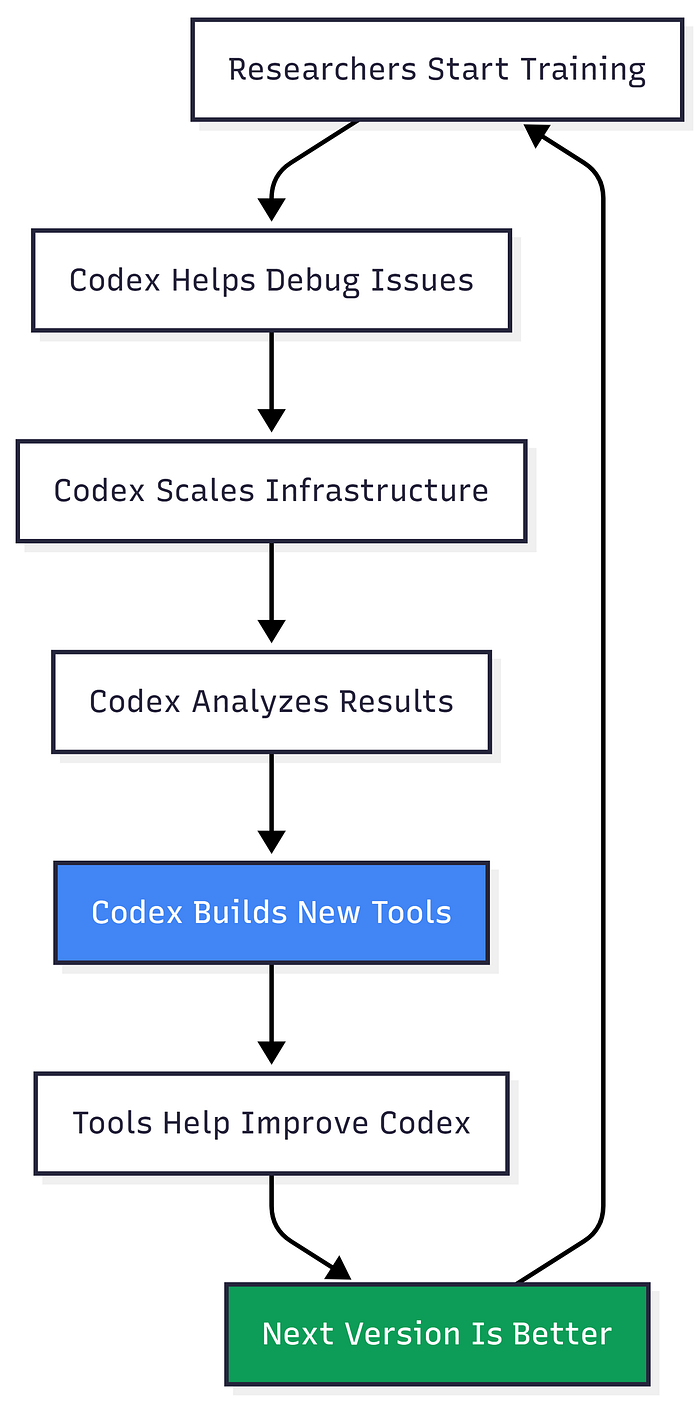

The AI That Built Itself: What GPT-5.3-Codex Means For Developers

Author(s): AbhinayaPinreddy Originally published on Towards AI. The First AI Model That Helped Create Its Own Next Version Just Launched. Here’s What Changed. February 5, 2026, 9:45 AM. Anthropic panics and moves their big launch up by 15 minutes. This isn’t just …

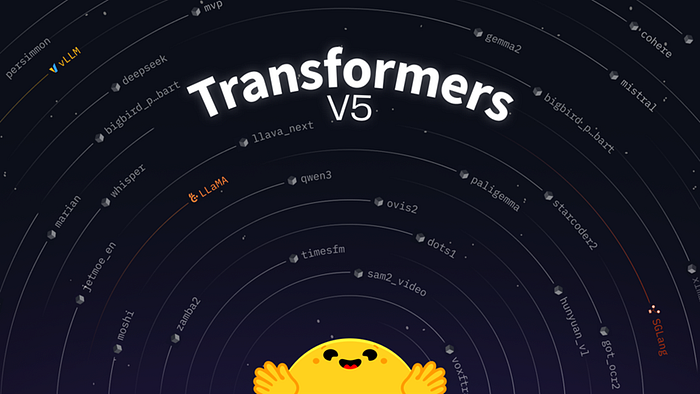

Transformers v5 – Hugging Face’s Next Big Leap in Simple and Powerful AI Models

Author(s): Aniket Sanyal Originally published on Towards AI. Hugging Face has unveiled Transformers v5, the latest major release of its popular open-source library that powers many AI models …

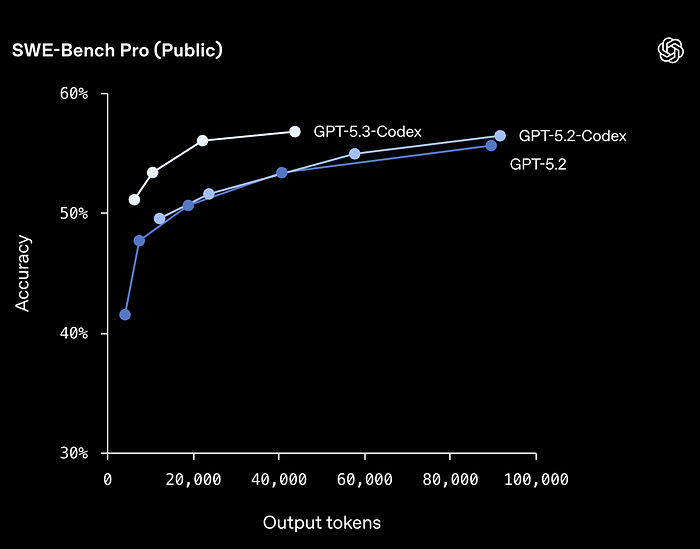

TAI #191: Opus 4.6 and Codex 5.3 Ship Minutes Apart as the Long-Horizon Agent Race Goes Vertical

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI, by Louie. On February 5th, Anthropic and OpenAI released Claude Opus 4.6 and GPT-5.3-Codex, respectively, within minutes of each other. Both are point releases, but both …

The 5 Normalization Techniques: Why Standardizing Activations Transforms Deep Learning

Author(s): TANVEER MUSTAFA Originally published on Towards AI. Training deep neural networks is difficult. Add more layers, and training becomes unstable: gradients explode or vanish, learning slows, or the model fails …

This ASR Actually Handles 52 Languages

Author(s): Gowtham Boyina Originally published on Towards AI. And the Forced Alignment Model Is the Interesting Part. I’ve tested dozens of speech recognition models over time. Most claim multilingual support but quietly fall apart when you give them actual Chinese dialects, …

GPT-5.3-Codex vs. Claude Opus 4.6: Two Titans Launched Minutes Apart

Author(s): Kushal Banda Originally published on Towards AI. On February 5, 2026, at practically the same moment, Anthropic unveiled Claude Opus 4.6 and OpenAI released GPT-5.3-Codex. The timing wasn’t coincidental. It was …