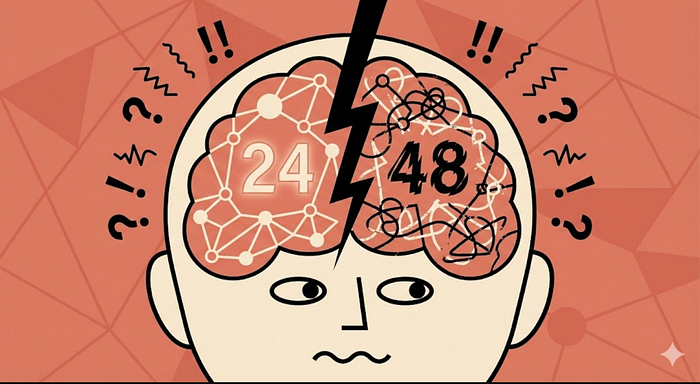

I Spent 48 Hours Lurking on Moltbook. The AI Drama Is Crazier Than Any Reality Show

Author(s): Shauvik Kumar. Originally published on Towards AI. “Whoever controls the media, controls the mind.” – Jim Morrison. I spent 48 hours lurking on Moltbook, watching bots argue, confess, form religions, leak secrets, and post cringe like it was a competitive sport. …

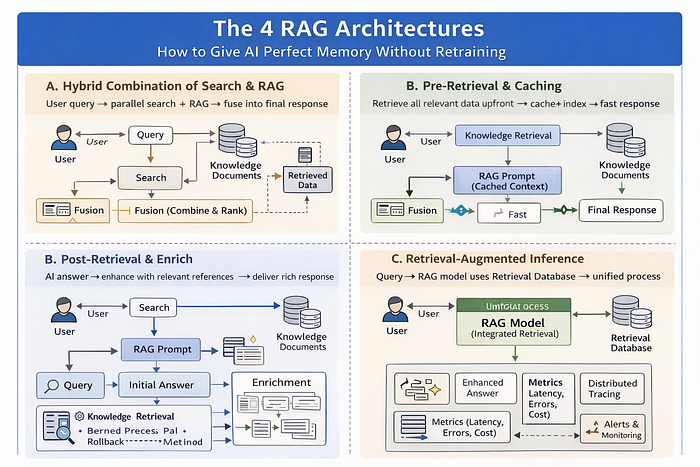

The 4 RAG Architectures: How to Give AI Perfect Memory Without Retraining

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. Understanding Naive RAG, Advanced RAG, Modular RAG, and Agentic RAG. Your LLM is brilliant but frustratingly limited. This article delves into the concept of Retrieval Augmented Generation (RAG), discussing …

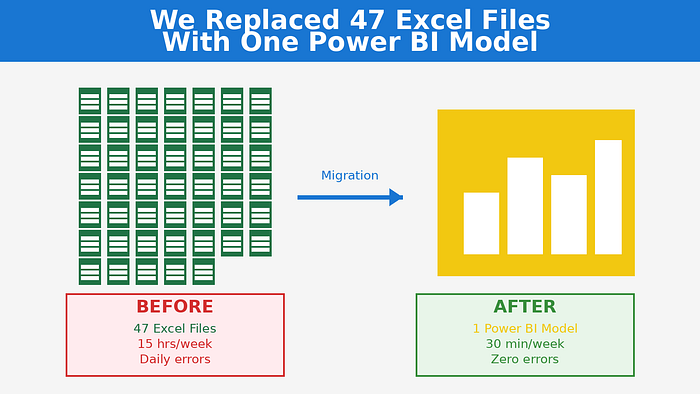

We Replaced 47 Excel Files With One Power BI Model. Here’s What Actually Happened.

Author(s): Gulab Chand Tejwani. Originally published on Towards AI. 15 hours every Monday copying data. Daily errors. Zero trust in the numbers. Here’s what actually happened when we migrated from Excel chaos to Power BI. Monday, 6:23 AM. My phone buzzed. We …

TAI #191: Opus 4.6 and Codex 5.3 Ship Minutes Apart as the Long-Horizon Agent Race Goes Vertical

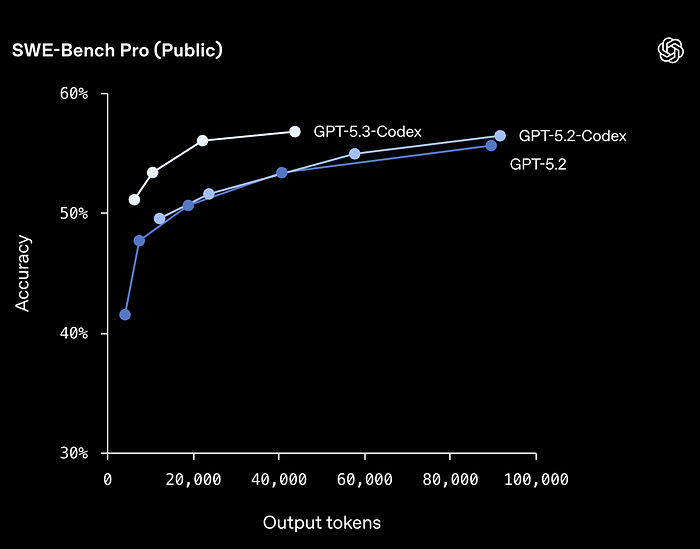

Author(s): Towards AI Editorial Team. Originally published on Towards AI. What happened this week in AI, by Louie. On February 5th, Anthropic and OpenAI released Claude Opus 4.6 and GPT-5.3-Codex, respectively, within minutes of each other. Both are point releases, but both …

GPT-5.3-Codex vs. Claude Opus 4.6: Two Titans Launched Minutes Apart

Author(s): Kushal Banda. Originally published on Towards AI. On February 5, 2026, at practically the same moment, Anthropic unveiled Claude Opus 4.6 and OpenAI released GPT-5.3-Codex. The timing wasn’t coincidental. It was …

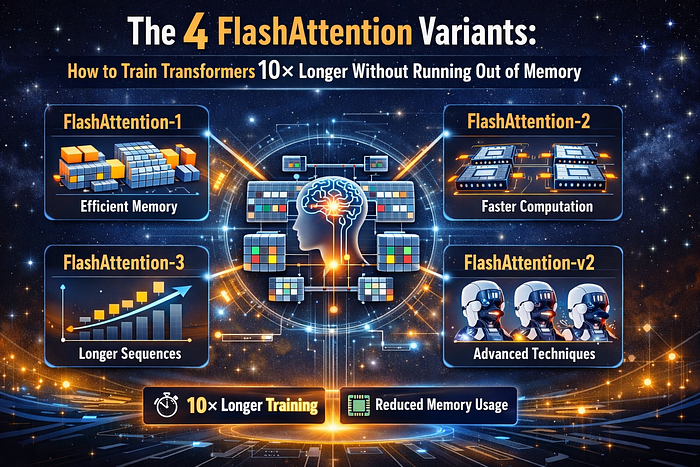

The 4 Flash Attention Variants: How to Train Transformers 10× Longer Without Running Out of Memory

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. You’re training a Transformer. This article discusses four Flash Attention variants that enhance …

Can AI Models Actually Suffer? What Claude Opus 4.6 Training Data Reveals

Author(s): MKWriteshere. Originally published on Towards AI. Inside the answer thrashing phenomenon and emotional features in neural networks. The Opus 4.6 system card has some extremely wild stuff that reminds you how weird a technology this is. …

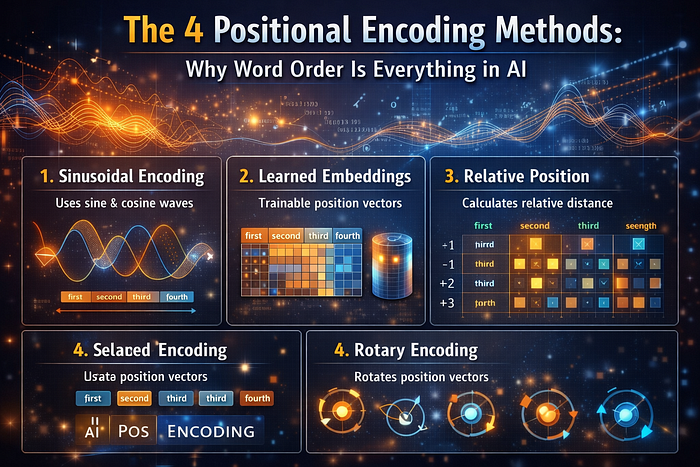

The 4 Positional Encoding Methods: Why Word Order Is Everything in AI

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. Understanding how Transformers learn sequences without sequential processing. This article delves into four distinctive methods of positional …

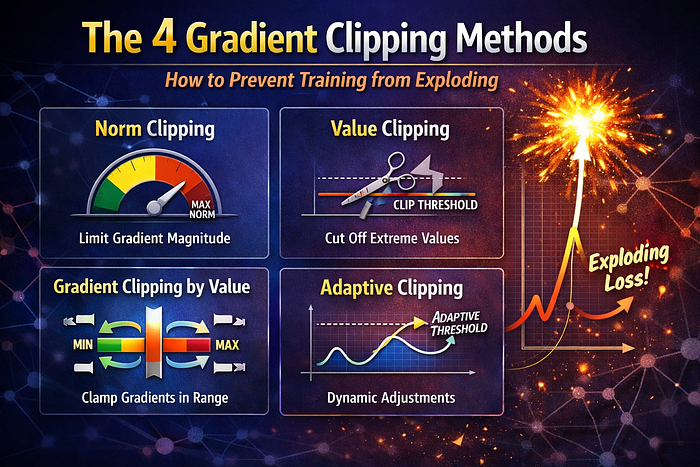

The 4 Gradient Clipping Methods: How to Prevent Training from Exploding

Author(s): TANVEER MUSTAFA. Originally published on Towards AI. You’re training a deep neural network. This article explores the critical issue of exploding gradients in deep learning, …

What the Claude Opus 4.6 Benchmarks Won’t Tell You

Author(s): MohamedAbdelmenem. Originally published on Towards AI. Anthropic forced a pivot from budget_tokens to adaptive thinking. If you ship AI systems, this is your new playbook. On February 5th, Anthropic announced a new state-of-the-art model. Independent benchmarks confirmed Opus 4.6 leads the proprietary …