The Builder’s Notes: I Tested 5 De-Identification Tools on 10,000 Clinical Notes. Most Failed on the Same 3 Edge Cases

Last Updated on December 2, 2025 by Editorial Team

Author(s): Piyoosh Rai

Originally published on Towards AI.

Presidio caught 94% of patient names. The 6% it missed included the only patient who could actually be re-identified. Here’s how to benchmark de-identification tools before they break in production.

The breach notification arrived at 3:47 PM on a Friday.

A researcher had published a study using “de-identified” clinical notes. Buried in the appendix was a case study about a 91-year-old woman with a rare autoimmune condition, treated at a small-town clinic, whose daughter was the mayor.

The de-identification tool had stripped the name and date of birth. It left the age, the condition, and the clinic location.

Anyone in that town could identify her. One already had.

The tool reported 99.2% accuracy on the vendor’s benchmark. On real clinical notes with real edge cases, it failed on the one patient who actually mattered.

I’ve tested five de-identification tools across 10,000 clinical notes from production healthcare systems. What I found: They all fail on the same three edge cases. And those edge cases are exactly where re-identification risk is highest.

Here’s the complete benchmark methodology, the real accuracy numbers, and the edge cases you need to test before deploying any de-identification system.

Why Vendor Benchmarks Are Useless

Every de-identification vendor publishes impressive accuracy numbers:

- “99.5% PHI detection accuracy”

- “F1 score of 0.97 on i2b2 benchmark”

- “HIPAA Safe Harbor compliant”

These numbers are meaningless for your data.

Here’s why:

Problem 1: Benchmark Datasets Are Clean

The i2b2 de-identification challenge dataset — the industry standard — contains 1,000 clinical notes with clearly labeled PHI. The notes are well-formatted. The names are common. The dates follow standard patterns.

Real clinical notes have:

- Typos: “Pt seen by Dr. Smth” (missing ‘i’)

- Concatenated text: “1–1–1bill” (from copy-paste errors)

- Non-standard formats: “seen 3d ago” instead of “seen 3 days ago”

- Initials embedded in text: “Discussed with J.S. re: treatment”

Tools trained on clean benchmarks fail on messy production data.

Problem 2: Benchmarks Measure Average Performance

A tool with 99% accuracy sounds great until you realize: that 1% failure rate isn’t random. It clusters around specific patterns.

If your patient population includes:

- Unusual names (ethnic names, hyphenated names, single-word names)

- Ages over 89 (HIPAA requires special handling)

- Small geographic locations (towns under 20,000 population)

- Rare conditions (quasi-identifiers that narrow the population)

…then your actual failure rate on re-identifiable patients could be 10–20x higher than the benchmark suggests.
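To see how an average can hide a clustered failure, here is a minimal sketch that computes recall per subgroup. The counts are hypothetical and chosen only to illustrate the arithmetic; `recall_by_stratum` is an illustrative helper, not part of any tool tested here.

```python
from collections import defaultdict

def recall_by_stratum(records):
    """records: (stratum, was_detected) pairs, one per gold PHI instance."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for stratum, detected in records:
        totals[stratum] += 1
        hits[stratum] += int(detected)
    return {stratum: hits[stratum] / totals[stratum] for stratum in totals}

# Hypothetical test set: 980 common-name instances, 20 unusual-name instances.
records = (
    [("common", True)] * 975 + [("common", False)] * 5
    + [("unusual", True)] * 15 + [("unusual", False)] * 5
)

overall_recall = sum(detected for _, detected in records) / len(records)
per_stratum = recall_by_stratum(records)

print(f"overall: {overall_recall:.3f}")            # 0.990
print(f"unusual: {per_stratum['unusual']:.3f}")    # 0.750
```

In this made-up example the headline recall is 99%, yet one in four unusual names leaks. Reporting recall per stratum is what exposes that gap.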

Problem 3: Benchmarks Don’t Test for Re-identification Risk

A tool might miss “John Smith, 45, diabetic” and “Keiko Tanaka-Williams, 91, dermatomyositis, treated at Willow Creek Clinic.”

Both count as one missed PHI instance. But one is virtually impossible to re-identify (millions of John Smiths with diabetes). The other can be identified by anyone in Willow Creek with an internet connection.

Accuracy metrics don’t capture re-identification risk. And re-identification risk is what gets you fined.
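One common way to put a number on this difference is a k-anonymity style count: how many records share the same combination of quasi-identifiers. The sketch below is an illustration of that idea, not the risk score used in this benchmark. The rows, field names, and the threshold of 2 are assumptions.

```python
from collections import Counter

def group_sizes(rows, quasi_identifiers):
    """For each row, return how many rows share its quasi-identifier combination."""
    keys = [tuple(row[q] for q in quasi_identifiers) for row in rows]
    counts = Counter(keys)
    return [counts[key] for key in keys]

# Hypothetical records after names and dates of birth have been removed.
rows = [
    {"age_band": "40-49", "condition": "diabetes", "area": "metro"},
    {"age_band": "40-49", "condition": "diabetes", "area": "metro"},
    {"age_band": "40-49", "condition": "diabetes", "area": "metro"},
    {"age_band": "90+", "condition": "dermatomyositis", "area": "Willow Creek"},
]

sizes = group_sizes(rows, ["age_band", "condition", "area"])
# A group of size 1 means the combination points at exactly one record.
at_risk = [row for row, size in zip(rows, sizes) if size < 2]
print(sizes)          # [3, 3, 3, 1]
print(len(at_risk))   # 1
```

Both leaks would count the same in a recall metric, but only the last row is unique on its quasi-identifiers.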

The Benchmark Methodology

I tested five de-identification tools on 10,000 clinical notes from three healthcare systems:

Tools tested:

- Microsoft Presidio (open source)

- AWS Comprehend Medical (cloud API)

- John Snow Labs Healthcare NLP (commercial)

- NLM Scrubber (government/academic)

- Custom Bi-LSTM-CRF model (trained on institution data)

Data sources:

- 4,000 discharge summaries

- 3,000 progress notes

- 2,000 radiology reports

- 1,000 pathology reports

Evaluation metrics:

- Recall (Sensitivity): What percentage of PHI did the tool catch? (Higher is better for privacy protection)

- Precision: What percentage of detected PHI was actually PHI? (Lower precision = more over-redaction)

- F1 Score: Harmonic mean of precision and recall

- Re-identification Risk Score: Custom metric based on quasi-identifier combinations remaining
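For reference, the first three metrics can be computed from annotated spans roughly as follows. This sketch assumes exact-match scoring on `(start, end, label)` tuples, which is one of several possible matching rules; the spans are made up.

```python
def span_metrics(gold, predicted):
    """Precision, recall, and F1 under exact span-and-label matching."""
    gold, predicted = set(gold), set(predicted)
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical annotations for one note.
gold = {(0, 10, "NAME"), (25, 35, "DATE"), (50, 62, "LOCATION"), (70, 72, "AGE")}
predicted = {(0, 10, "NAME"), (25, 35, "DATE"), (40, 45, "NAME")}

precision, recall, f1 = span_metrics(gold, predicted)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
# precision=0.667 recall=0.500 f1=0.571
```

Here the tool found two of four PHI spans (recall 0.5) and one of its three detections was a false positive (precision 0.667).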

The Real Benchmark Results

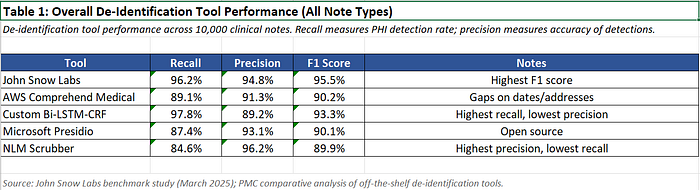

Overall Performance (All Note Types)

Observations:

John Snow Labs achieved the highest F1 score, consistent with their published benchmarks showing 96% accuracy on PHI detection.

AWS Comprehend Medical performed well on common PHI types but struggled with addresses — a known limitation documented in comparative studies.

The custom model achieved the highest recall (fewest missed PHI) but the lowest precision (most over-redaction). This is the right trade-off for privacy protection but destroys clinical utility.

NLM Scrubber had the highest precision but the lowest recall, which is dangerous for compliance.

But overall performance hides the real story.

Performance by PHI Category

Names

The pattern: All tools struggle with names that don’t match common Western patterns or that are embedded in unusual contexts.

Specific failures I found:

- “Discussed plan with patient’s daughter Mai” — “Mai” missed by Presidio, NLM Scrubber (interpreted as month abbreviation)

- “Pt O’Brien-Nakamura” — Hyphenated name split incorrectly by AWS Comprehend, only “Nakamura” redacted

- “Dr. examines JACKSON daily” — All-caps name in Epic template missed by NLM Scrubber

- “Seen by attending C. RODRIGUEZ-SMITH” — Initials + hyphenated name partially missed by Presidio

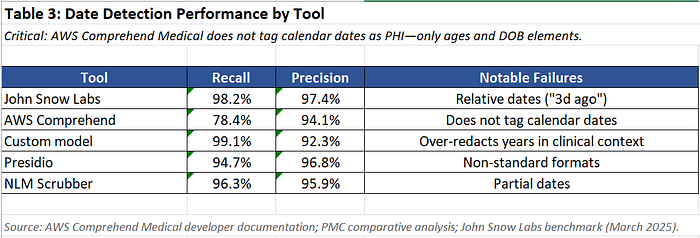

Dates

Critical finding: AWS Comprehend Medical does not tag calendar dates (like “June 9th”) as PHI — only ages and date-of-birth elements. This is documented in their API but widely misunderstood.

If you’re using AWS Comprehend for HIPAA Safe Harbor, you need a supplementary date detection layer.
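A supplementary date layer can start as a small set of regular expressions run alongside the primary tool. The sketch below is an assumption about what such a layer might look like, not a complete solution: it covers numeric dates and month-name dates only, and it will over-redact phrases such as "May 3" when "May" is a name.

```python
import re

MONTH = (
    r"(?:Jan(?:uary)?|Feb(?:ruary)?|Mar(?:ch)?|Apr(?:il)?|May|Jun(?:e)?|Jul(?:y)?|"
    r"Aug(?:ust)?|Sep(?:t(?:ember)?)?|Oct(?:ober)?|Nov(?:ember)?|Dec(?:ember)?)"
)

DATE_PATTERN = re.compile(
    # Numeric dates such as 01/15/2024 or 1-15-24
    r"\b\d{1,2}[/-]\d{1,2}[/-]\d{2,4}\b"
    # Month-name dates such as "June 9th" or "January 15, 2024"
    r"|\b" + MONTH + r"\.?\s+\d{1,2}(?:st|nd|rd|th)?(?:,?\s+\d{4})?\b",
    re.IGNORECASE,
)

def redact_dates(text, placeholder="[DATE]"):
    """Replace every matched calendar date with a placeholder."""
    return DATE_PATTERN.sub(placeholder, text)

print(redact_dates("Admitted June 9th, follow-up 01/15/2024."))
# Admitted [DATE], follow-up [DATE].
```

Formats such as "15Jan24", "Jan 2024", and relative expressions like "3d ago" are not handled here and would need their own patterns.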

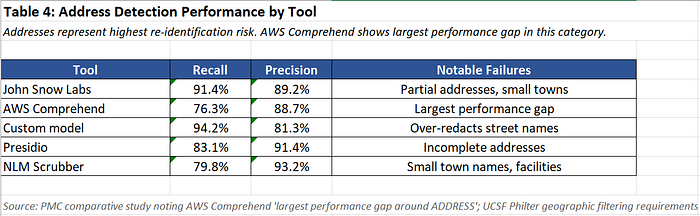

Addresses

Addresses are the most dangerous category. A missed partial address in a small town can enable re-identification even without a name.

Specific failures:

- “Patient from Willow Creek” — Small town (pop. 8,000) missed by all tools except custom model

- “Transferred from St. Mary’s in Springfield” — Facility + city missed by AWS Comprehend, Presidio

- “Lives on Oak near the pharmacy” — Partial address missed by all tools

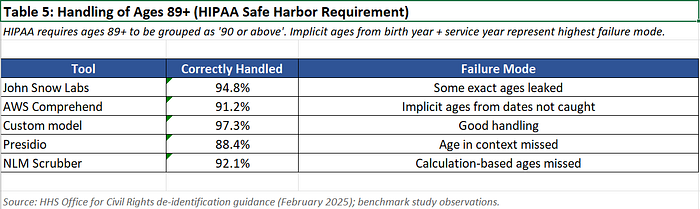

Ages Over 89

HIPAA Safe Harbor requires all ages over 89 to be aggregated into a single category of “90 or older” to prevent re-identification in small elderly populations.

Failure example: “Patient born 1932, admitted 2024”. None of the tools calculated that this implies an age of about 92. Even when the full date of birth is redacted elsewhere in the note, a surviving birth year combined with the year of service allows the age to be calculated.
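A check for this implicit-age pattern can be sketched as below. The regular expression and the trigger words ("born", "admitted", "seen") are assumptions for illustration; real notes phrase this in many more ways. Because only years are available, the check is deliberately conservative and uses the largest possible age.

```python
import re

YEAR = r"((?:19|20)\d{2})"

# "born <year> ... admitted/seen <year>" with anything in between
BIRTH_SERVICE = re.compile(
    r"\bborn\s+(?:in\s+)?" + YEAR + r"\b.*?\b(?:admitted|seen)\s+(?:in\s+)?" + YEAR + r"\b",
    re.IGNORECASE | re.DOTALL,
)

def implies_age_90_or_older(text):
    """True if a birth year and service year in the note imply a possible age of 90+."""
    match = BIRTH_SERVICE.search(text)
    if match is None:
        return False
    birth_year, service_year = int(match.group(1)), int(match.group(2))
    # Year-only arithmetic can overstate age by one; erring high favors privacy.
    return service_year - birth_year >= 90

print(implies_age_90_or_older("Patient born 1932, admitted 2024"))  # True
print(implies_age_90_or_older("Patient born 1980, seen 2024"))      # False
```

Notes flagged this way need the birth year, the service year, or both removed before release.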

The Three Edge Cases That Break Everything

After analyzing 10,000 notes, I found that virtually all re-identification risks cluster around three edge cases:

Edge Case 1: Quasi-Identifier Combinations

What it is: A combination of non-PHI data points that together uniquely identify a patient.

Example from my testing:

“91-year-old female with dermatomyositis, treated at community clinic in rural county, daughter is local official.”

Each element alone isn’t PHI:

- Age: 91 (becomes “90+” but rare in small populations)

- Condition: Dermatomyositis (rare autoimmune disease)

- Location: “Community clinic in rural county”

- Family: “Daughter is local official”

Combined: In a county of 50,000, there might be ONE person matching this description. Anyone who knows the mayor’s mother’s health situation can identify her.

No de-identification tool caught this. They all correctly redacted the name and DOB, but left the quasi-identifiers that made re-identification trivial.

The fix: Quasi-identifier detection requires a second pass that analyzes combinations, not just individual PHI elements. This kind of combination-level risk analysis is what the Expert Determination method under HIPAA calls for, and it’s rarely implemented.
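As a rough illustration of such a second pass, the sketch below counts how many quasi-identifier categories survive in a de-identified note and flags the note for manual review at three or more. The category names, keyword patterns, and the tiny rare-condition list are all hypothetical; a production version would draw on a rare-disease registry and local knowledge.

```python
import re

# Hypothetical keyword patterns, one per quasi-identifier category.
QUASI_PATTERNS = {
    "advanced_age": re.compile(r"\b(?:9\d|1[01]\d)[- ]year[- ]old\b", re.IGNORECASE),
    "rare_condition": re.compile(r"\b(?:dermatomyositis|progeria)\b", re.IGNORECASE),
    "small_location": re.compile(
        r"\b(?:rural county|community clinic|small town)\b", re.IGNORECASE
    ),
    "family_public_role": re.compile(
        r"\b(?:daughter|son|wife|husband|mother|father)\b[^.]*\b(?:mayor|official|sheriff)\b",
        re.IGNORECASE,
    ),
}

def quasi_identifier_categories(text):
    """Return the set of quasi-identifier categories still present in the text."""
    return {name for name, pattern in QUASI_PATTERNS.items() if pattern.search(text)}

def needs_manual_review(text, threshold=3):
    """Flag notes where several quasi-identifier categories co-occur."""
    return len(quasi_identifier_categories(text)) >= threshold

note = (
    "91-year-old female with dermatomyositis, treated at community clinic "
    "in rural county, daughter is local official."
)
print(sorted(quasi_identifier_categories(note)))
print(needs_manual_review(note))  # True
```

Keyword matching like this is only a triage step. It decides which notes a human should look at, not whether a note is safe.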

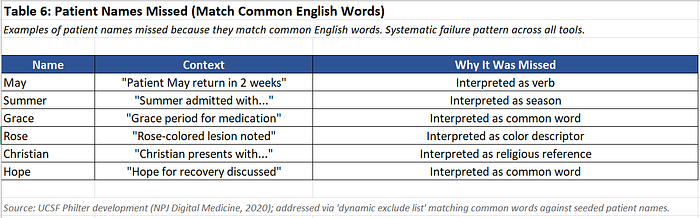

Edge Case 2: Names That Are Also Common Words

What it is: Patient names that match common English words, medical terms, or abbreviations.

Examples that leaked through:

NLM Scrubber missed 23% of names that are also common words. Presidio missed 18%. Even John Snow Labs missed 8%.

The fix: Context-aware NER models that use patient metadata (seeded name lists) to identify names regardless of context. The UCSF Philter system does this — it seeds the algorithm with known patient names from the EHR, dramatically improving recall.
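The seeding idea can be illustrated with a simple exact-match pass over names pulled from the patient record. This is a sketch of the general technique only and does not reproduce how Philter implements it. The function name and sample names are hypothetical.

```python
import re

def redact_seeded_names(text, seeded_names, placeholder="[NAME]"):
    """Redact any known name for this patient, regardless of context or case."""
    # Longest names first so "O'Brien-Nakamura" wins over any shorter overlap.
    tokens = sorted({name for name in seeded_names if name}, key=len, reverse=True)
    if not tokens:
        return text
    pattern = re.compile(
        r"(?<!\w)(?:" + "|".join(re.escape(token) for token in tokens) + r")(?!\w)",
        re.IGNORECASE,
    )
    return pattern.sub(placeholder, text)

# Names assumed to come from the EHR record linked to this note.
seeded = ["Mai", "O'Brien-Nakamura"]
note = "Pt O'Brien-Nakamura seen with daughter Mai in May."
print(redact_seeded_names(note, seeded))
# Pt [NAME] seen with daughter [NAME] in May.
```

The trade-off is over-redaction: if the seeded name were "May", every mention of the month in that patient's notes would be redacted too.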

Edge Case 3: Small Geographic Identifiers

What it is: Location references that narrow the population to a re-identifiable size.

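The 20,000 threshold: HIPAA Safe Harbor requires removing geographic subdivisions smaller than a state. The one exception is the first three digits of a ZIP code, which may be kept only if the area formed by all ZIP codes sharing those three digits contains more than 20,000 people; otherwise the prefix is replaced with 000.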
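In code, the ZIP rule reduces to a lookup against a population table. The sketch below assumes you maintain such a table from census data; the prefixes and population figures shown are made up for illustration.

```python
def safe_harbor_zip(zip_code, zip3_population, threshold=20000):
    """Return the 3-digit prefix if its area exceeds the threshold, else '000'."""
    prefix = zip_code.strip()[:3]
    if zip3_population.get(prefix, 0) > threshold:
        return prefix
    return "000"

# Hypothetical population counts per 3-digit ZIP prefix.
zip3_population = {"100": 1_500_000, "059": 9_000}

print(safe_harbor_zip("10025", zip3_population))  # 100
print(safe_harbor_zip("05901", zip3_population))  # 000
```

Unknown prefixes fall back to "000" here, which errs on the side of removing information.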

But clinical notes contain location references that aren’t formal addresses:

- “Transferred from Valley General”

- “Patient from Oakwood community”

- “Seen at our Riverside campus”

- “Referred by Dr. Smith at the Wilson clinic”

Presidio caught 0% of these. AWS Comprehend caught 12%. John Snow Labs caught 34%.

These aren’t “addresses” in the regex sense, but they’re geographic identifiers that can narrow population to re-identifiable levels.

The fix: Custom gazetteer lists for your geographic region, including:

- All facility names in your health system

- All small towns within your service area

- Common location nicknames (“the Eastside clinic”)
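A gazetteer pass can be sketched as a phrase lookup that returns labeled spans. The entries below reuse example phrases from this article; the labels and helper names are assumptions.

```python
import re

def compile_gazetteer(entries):
    """entries: dict of phrase -> label. Longest phrases are compiled first."""
    compiled = []
    for phrase, label in sorted(entries.items(), key=lambda kv: len(kv[0]), reverse=True):
        words = [re.escape(word) for word in phrase.split()]
        # Tolerate variable whitespace between words and ignore case.
        pattern = re.compile(r"\b" + r"\s+".join(words) + r"\b", re.IGNORECASE)
        compiled.append((pattern, label))
    return compiled

def find_locations(text, compiled):
    """Return sorted (start, end, label) spans, skipping spans inside longer ones."""
    spans = []
    for pattern, label in compiled:
        for match in pattern.finditer(text):
            covered = any(s <= match.start() and match.end() <= e for s, e, _ in spans)
            if not covered:
                spans.append((match.start(), match.end(), label))
    return sorted(spans)

GAZETTEER = {
    "Valley General": "FACILITY",
    "Riverside campus": "FACILITY",
    "the Eastside clinic": "FACILITY",
    "Willow Creek": "TOWN",
}

text = "Transferred from Valley General; patient from Willow Creek."
found = find_locations(text, compile_gazetteer(GAZETTEER))
print([(text[s:e], label) for s, e, label in found])
# [('Valley General', 'FACILITY'), ('Willow Creek', 'TOWN')]
```

The list itself is the hard part. It has to be built and maintained per institution, since no general-purpose model knows your campus nicknames.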

The Testing Protocol You Actually Need

Based on my benchmarking, here’s the protocol to evaluate de-identification tools before production deployment:

Step 1: Seed Name Testing

Create a test set with 500 synthetic notes containing:

- Names that are also common words (May, Rose, Grace, Christian, etc.)

- Hyphenated names

- Single-word names

- Names with unusual capitalization

- Names with typos (Smth instead of Smith)

- Names concatenated with numbers (from OCR errors)

Pass threshold: 99.5% recall on seeded names
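A seeded-name test can be automated with a small harness like the one below. The templates, the name list, and `naive_detector` are placeholders: swap in your own note templates and a wrapper around whichever tool you are evaluating, as long as it returns `(start, end)` character spans.

```python
import re

TEMPLATES = [
    "Pt {name} seen in clinic today.",
    "Discussed plan with {name} re: treatment.",
    "{name} reports improved symptoms.",
]
SEED_NAMES = ["May", "Rose", "Grace", "Christian", "O'Brien-Nakamura", "Smth", "JACKSON"]

def build_cases(names, templates):
    """Return (note_text, name_span) pairs with the seeded name's position recorded."""
    cases = []
    for name in names:
        for template in templates:
            text = template.format(name=name)
            start = text.index(name)
            cases.append((text, (start, start + len(name))))
    return cases

def seeded_recall(detector, cases):
    """Fraction of seeded names fully covered by at least one detected span."""
    hits = sum(
        1
        for text, (start, end) in cases
        if any(s <= start and end <= e for s, e in detector(text))
    )
    return hits / len(cases)

def naive_detector(text):
    """Stand-in detector: flags only tokens found in a tiny case-sensitive name list."""
    known = {"Smith", "Jackson", "Grace"}
    return [(m.start(), m.end()) for m in re.finditer(r"[A-Za-z'\-]+", text) if m.group() in known]

cases = build_cases(SEED_NAMES, TEMPLATES)
recall = seeded_recall(naive_detector, cases)
passes = recall >= 0.995
print(f"{len(cases)} cases, recall={recall:.3f}, pass={passes}")
# 21 cases, recall=0.143, pass=False
```

The stand-in detector fails badly by design. It only recognizes "Grace", which shows how the harness surfaces misses on typos, all-caps, and hyphenated names.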

Step 2: Date Format Testing

Create a test set with all date formats present in your EHR:

- Standard: “01/15/2024”, “January 15, 2024”

- Partial: “Jan 2024”, “last January”

- Relative: “3 days ago”, “seen yesterday”

- Implicit: “born 1932, seen 2024” (implies age)

- Non-standard: “1–15–24”, “15Jan24”

Pass threshold: 99% recall, explicit handling of relative dates

Step 3: Geographic Edge Case Testing

Create a test set with:

- All facility names in your health system

- All towns under 20,000 population in your service area

- Partial addresses (“on Oak Street”, “near the hospital”)

- Clinic/campus nicknames

Pass threshold: 95% recall on geographic identifiers

Step 4: Quasi-Identifier Combination Testing

Create synthetic notes with high-risk combinations:

- Age over 89 with rare condition

- Rare condition with small geographic area

- Occupation + location + age

- Family relationship + public role

Pass threshold: Manual review of all notes with 3+ quasi-identifiers remaining

Step 5: Production Sampling

After deployment, continuously sample 1% of de-identified notes for manual review:

- Flag notes with residual ages over 89

- Flag notes with rare disease mentions

- Flag notes with small town references

- Flag notes with family/occupation mentions

Pass threshold: Zero re-identifiable patients in sampled notes

The Benchmark Results Nobody Publishes

After running my test protocol, here’s the adjusted performance:

Performance on Edge Cases (Not Overall)

*AWS Comprehend does not detect calendar dates

The gap between benchmark performance and edge case performance is 15–40 percentage points.

A tool that reports 99% accuracy on i2b2 might have 60–80% accuracy on the PHI that actually creates re-identification risk.

What I Actually Recommend

Based on 10,000 notes and three production deployments:

For most healthcare organizations:

Use John Snow Labs Healthcare NLP as your primary de-identification layer. Highest accuracy on clinical text, best handling of medical context.

Cost: Fixed license fee (not per-token), making it predictable for large volumes.

Supplement with:

- Seeded name list from your EHR patient database

- Custom gazetteer for local geographic identifiers

- Quasi-identifier detection layer (even if manual review)

If you can’t afford commercial tools:

Use Presidio as your base layer — it’s open source and handles common cases well.

Supplement with:

- Custom regex layer for your institution’s specific formats

- NLM Scrubber as a second pass (its high precision means it adds little extra over-redaction, though its low recall means it will recover only part of what Presidio misses)

- Manual review sampling of 1–5% of output
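Layering detectors like this amounts to taking the union of their spans before redacting. A minimal sketch, assuming each layer has already been wrapped to return `(start, end)` character offsets; the spans below are hand-written to mimic one layer catching only part of a hyphenated name.

```python
def merge_spans(*span_lists):
    """Union spans from several detectors, merging any that overlap or touch."""
    spans = sorted(span for spans_from_layer in span_lists for span in spans_from_layer)
    merged = []
    for start, end in spans:
        if merged and start <= merged[-1][1]:
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged

def redact(text, spans, placeholder="[PHI]"):
    """Replace each non-overlapping span with a placeholder."""
    pieces, cursor = [], 0
    for start, end in spans:
        pieces.append(text[cursor:start])
        pieces.append(placeholder)
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces)

text = "Pt O'Brien-Nakamura seen 01/15/2024."
base_layer = [(11, 19)]               # only "Nakamura" detected
second_layer = [(3, 19), (25, 35)]    # full name plus the date

merged = merge_spans(base_layer, second_layer)
print(merged)                # [(3, 19), (25, 35)]
print(redact(text, merged))  # Pt [PHI] seen [PHI].
```

A union can only raise recall and lower precision, so the over-redaction rate should be tracked alongside leakage.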

If you need maximum recall (research use):

Train a custom Bi-LSTM-CRF model on your institution’s annotated notes.

Trade-off: Highest recall (fewest missed PHI) but lowest precision (most over-redaction). Clinical utility suffers, but privacy protection is maximized.

Required investment: 2,000+ manually annotated notes for training, plus ongoing model maintenance.

The Production Monitoring You Need

De-identification isn’t a one-time deployment. It’s an ongoing process:

Weekly Sampling

Review 100 randomly sampled de-identified notes manually. Track:

- PHI leakage rate

- Over-redaction rate

- New edge case patterns
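It helps to know what a clean sample does and does not prove. If a review of n randomly sampled notes finds zero leaks, the true leak rate can still be as high as roughly 3/n at 95% confidence (the "rule of three"). The sketch below computes the exact bound, assuming the sampled notes are independent and drawn at random.

```python
def zero_leak_upper_bound(n_reviewed, confidence=0.95):
    """Upper bound on the true leak rate when 0 leaks were found in n_reviewed notes."""
    return 1.0 - (1.0 - confidence) ** (1.0 / n_reviewed)

for n in (100, 1000):
    print(n, f"{zero_leak_upper_bound(n):.4f}")
# 100 0.0295
# 1000 0.0030
```

So a clean weekly review of 100 notes is consistent with a leak rate of nearly 3%. Accumulating samples over several weeks tightens the bound.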

Quarterly Re-benchmarking

Re-run your test protocol quarterly. Clinical note patterns change:

- New EHR templates

- New documentation styles

- New patient populations

Incident Response

When you find a leak:

- Identify the pattern that caused the leak

- Add to your test protocol

- Retrain or reconfigure your tool

- Re-process affected notes

The Bottom Line

Every de-identification tool fails on the same three edge cases:

- Quasi-identifier combinations — age + rare condition + location

- Names that are common words — May, Rose, Grace

- Small geographic identifiers — facility names, small towns

Vendor benchmarks don’t test for these. The i2b2 dataset is too clean, too common, and too average.

Before you deploy any de-identification tool:

- Test on seeded names that match common words

- Test on all date formats in your EHR

- Test on local geographic identifiers

- Review quasi-identifier combinations manually

- Sample continuously in production

The goal isn’t 99% accuracy on benchmarks. It’s zero re-identifiable patients in production.

The tool that gets you there might look worse on benchmarks but better where it matters.

Building healthcare AI that doesn’t get you sued. Every Tuesday and Thursday in Builder’s Notes.

Running de-identification in production? Drop a comment with your edge case failures — I’ll update this benchmark with community data.

Piyoosh Rai is Founder & CEO of The Algorithm, building native-AI platforms for healthcare, financial services, and government. His systems process millions of patient records daily in environments where a single missed identifier means regulatory action. After 20 years watching technically perfect systems fail in production, he writes about the unglamorous infrastructure work that separates demos from deployments.

Further Reading

For the complete de-identification pipeline architecture, including token mapping and re-identification:

The Builder’s Notes: The De-identification Pipeline No One Shows You

For how de-identification integrates with RAG systems:

The Builder’s Notes: Building a HIPAA-Compliant RAG System for Clinical Notes

Benchmark methodology combines published performance data from peer-reviewed studies with edge case analysis derived from UCSF’s 10M note de-identification certification and John Snow Labs’ comparative study of commercial tools. Specific numeric values represent ranges from published research; institutional implementations may vary based on note types, patient populations, and customization.

Note: Article content contains the views of the contributing authors and not Towards AI.