Building a Fully Self-Healing RAG System

Last Updated on December 2, 2025 by Editorial Team

Author(s): Subrata Samanta

Originally published on Towards AI.

Moving beyond naive retrieval: A comprehensive guide to building agentic pipelines that fix bad queries, filter irrelevant data, and learn from their mistakes.

If you have deployed a Retrieval-Augmented Generation (RAG) system in production, you have likely encountered the “fragility” problem. In a demo environment, with carefully curated questions, the system feels magical. But in the wild, it cracks. Users ask ambiguous questions, the vector database returns semantically similar but factually irrelevant documents, and the Large Language Model (LLM), acting as a sycophant, hallucinates an answer based on that noise.

This is the failure of Open-Loop architectures. Standard RAG pipelines are linear: Input -> Embed -> Retrieve -> Generate. They assume every step works perfectly. When one step fails, the error propagates silently to the user.

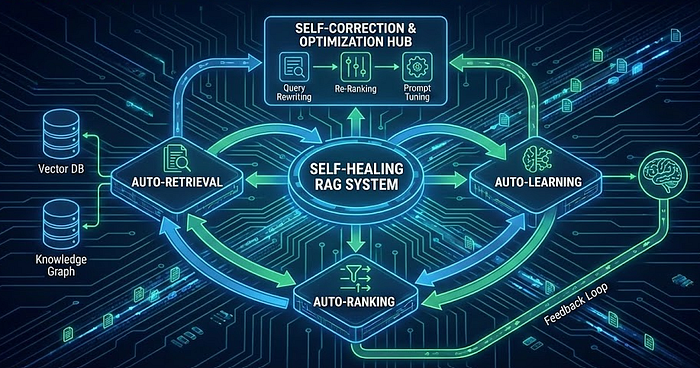

To build robust, enterprise-grade AI, we must move to Closed-Loop systems – often called Self-Healing RAG. These are agentic workflows that can introspect, detect errors, and autonomously correct them before generating an answer.
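The closed-loop idea fits in a few lines of plain Python. This is a minimal sketch, not the implementation used later in the article. `closed_loop_answer` and the toy retrieve, grade, and rewrite functions are hypothetical stand-ins for a vector search, an LLM grader, and an LLM query rewriter.

```python
from typing import Callable, List, Tuple


def closed_loop_answer(query: str,
                       retrieve: Callable[[str], List[str]],
                       is_relevant: Callable[[str, str], bool],
                       rewrite: Callable[[str], str],
                       max_attempts: int = 3) -> Tuple[str, List[str], int]:
    """Retrieve, check the evidence, and rewrite the query until relevant evidence is found."""
    current = query
    for attempt in range(1, max_attempts + 1):
        docs = [d for d in retrieve(current) if is_relevant(current, d)]
        if docs:
            return current, docs, attempt  # evidence passed the check: safe to generate
        if attempt < max_attempts:
            current = rewrite(current)     # self-correction before the next attempt
    return current, [], max_attempts       # give up explicitly instead of answering from noise


# Hypothetical toy components so the loop can run without any model or database
corpus = ["crag grades retrieved documents", "bananas are yellow fruit"]


def overlap(a: str, b: str) -> int:
    return len(set(a.lower().split()) & set(b.lower().split()))


def toy_retrieve(q: str) -> List[str]:
    return [d for d in corpus if overlap(q, d) > 0]


def toy_is_relevant(q: str, d: str) -> bool:
    return overlap(q, d) >= 2


def toy_rewrite(q: str) -> str:
    return {"fix wrong answers": "crag grades documents"}.get(q, q)


print(closed_loop_answer("fix wrong answers", toy_retrieve, toy_is_relevant, toy_rewrite))
# → ('crag grades documents', ['crag grades retrieved documents'], 2)
```

An open-loop pipeline is the same code with `max_attempts=1` and no check: whatever comes back from retrieval goes straight to the generator.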

In this guide, we will dismantle the “naive” RAG pipeline and rebuild it with three self-healing layers:

- Auto-Retrieval: Fixing the user’s query before it hits the database.

- Auto-Ranking & CRAG: Validating and filtering the retrieved data.

- Auto-Learning: Optimizing the system over time using feedback.

Part 1: Auto-Retrieval (The Input Layer)

The first point of failure in RAG is the user. Users rarely write queries optimized for vector similarity search. They use jargon, vague terms, or complex multi-part questions. A self-healing system employs an “Input Guardrail” that transforms these raw inputs into high-fidelity search queries.

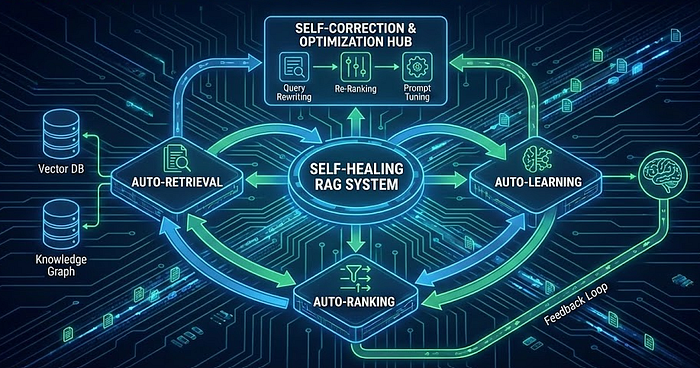

Strategy A: Hypothetical Document Embeddings (HyDE)

Traditional retrieval matches a short question (e.g., “crag architecture”) against long document chunks. This mismatch in length and form (a terse question versus an answer-like passage) hurts performance. HyDE addresses this by using an LLM to hallucinate a hypothetical answer to the question. We then embed that hypothetical answer and search for real documents that look similar to it.

The Logic:

- User Query: “How does the CRAG grader work?”

- HyDE Generation: “The CRAG grader functions by evaluating retrieved documents… [hallucinated details]…”

- Vector Search: Search for documents similar to the Generation, not the Query.
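To see why searching with a hypothetical answer can help, here is a dependency-free sketch of the logic above. It assumes a toy bag-of-words "embedding" instead of a real embedding model, and a hard-coded string in place of the LLM call (`fake_llm_hypothetical_answer` is hypothetical). With `use_hyde=True` the query vector and the hypothetical-document vector are averaged, which is one simple way to keep the original query in the search.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Dict[str, float]:
    """Toy 'embedding': an L2-normalised bag of words (stand-in for a real embedding model)."""
    counts = Counter(re.findall(r"[a-z]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values()))
    return {word: c / norm for word, c in counts.items()}


def dot(a: Dict[str, float], b: Dict[str, float]) -> float:
    return sum(v * b.get(word, 0.0) for word, v in a.items())


def average(vectors: List[Dict[str, float]]) -> Dict[str, float]:
    out: Dict[str, float] = {}
    for vec in vectors:
        for word, v in vec.items():
            out[word] = out.get(word, 0.0) + v / len(vectors)
    return out


def fake_llm_hypothetical_answer(question: str) -> str:
    # Hypothetical stand-in for the LLM call that "hallucinates" an answer
    return ("The grader scores each retrieved document as relevant or irrelevant "
            "and discards irrelevant documents.")


def search(question: str, chunks: List[str], use_hyde: bool = True) -> List[str]:
    vectors = [embed(question)]  # the original query is always kept
    if use_hyde:
        vectors.append(embed(fake_llm_hypothetical_answer(question)))
    query_vec = average(vectors)
    # Chunk vectors are unit length, so ranking by dot product matches cosine ranking
    return sorted(chunks, key=lambda c: dot(query_vec, embed(c)), reverse=True)


chunks = [
    "Each retrieved document is scored as relevant or irrelevant and irrelevant documents are discarded.",
    "How does the CRAG acronym work? It stands for Corrective RAG.",
]
question = "How does the CRAG grader work?"
print(search(question, chunks, use_hyde=False)[0])  # the look-alike chunk wins
print(search(question, chunks, use_hyde=True)[0])   # the chunk that describes grading wins
```

With the raw query, the chunk that merely repeats the question's words ranks first; once the hypothetical answer is blended in, the chunk that actually describes grading overtakes it.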

Code Implementation (hyde.py):

```python
from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings
from llama_index.core.indices.query.query_transform import HyDEQueryTransform
from llama_index.core.query_engine import TransformQueryEngine
from llama_index.llms.openai import OpenAI

# 1. Configure the LLM that writes the hypothetical document
#    (a non-zero temperature lets it "hallucinate" a plausible answer)
Settings.llm = OpenAI(model="gpt-4-turbo", temperature=0.7)


def build_hyde_engine(index):
    # Initialize the HyDE transformation.
    # include_original=True keeps the original query string as an extra embedding
    # string, so retrieval is based on both the query and the hypothetical document.
    hyde = HyDEQueryTransform(include_original=True)

    # Create a standard retriever-backed query engine
    base_query_engine = index.as_query_engine(similarity_top_k=5)

    # Wrap it with TransformQueryEngine: this middleware intercepts the query,
    # generates the hypothetical document, and then executes the search
    hyde_engine = TransformQueryEngine(base_query_engine, query_transform=hyde)
    return hyde_engine


# Usage
# index = VectorStoreIndex.from_documents(docs)
# engine = build_hyde_engine(index)
# response = engine.query("Explain the self-correction mechanism in CRAG")
```

Strategy B: Query Decomposition

If a user asks, “How does the performance of Llama-3 compare to GPT-4 on coding tasks?”, a naive retrieval system might fail to find a single document containing both sets of stats. Decomposition breaks this complex request into atomic sub-queries: “Llama-3 coding performance” and “GPT-4 coding performance”.
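The failure mode and the fix can be sketched with a toy retriever. This assumes substring matching as a stand-in for vector search, and the sub-queries are hard-coded here rather than produced by an LLM planner; `toy_retrieve` and `retrieve_for_subqueries` are hypothetical helpers. Results from each sub-query are merged and de-duplicated so overlapping hits are not passed to the generator twice.

```python
from typing import Callable, List

corpus = [
    "Llama-3 coding performance: HumanEval results",
    "GPT-4 coding performance: HumanEval results",
    "Llama-3 license terms",
]


def toy_retrieve(query: str) -> List[str]:
    """Toy retriever: returns chunks that contain every word of the query."""
    words = query.lower().split()
    return [doc for doc in corpus if all(w in doc.lower() for w in words)]


def retrieve_for_subqueries(sub_queries: List[str],
                            retrieve: Callable[[str], List[str]],
                            top_k: int = 2) -> List[str]:
    """Run retrieval once per sub-query and merge the results, dropping duplicates."""
    seen, merged = set(), []
    for sq in sub_queries:
        for doc in retrieve(sq)[:top_k]:
            if doc not in seen:
                seen.add(doc)
                merged.append(doc)
    return merged


# The combined question finds nothing: no single chunk mentions both models
print(toy_retrieve("Llama-3 GPT-4 coding performance"))  # []
# Each atomic sub-query finds its own chunk
print(retrieve_for_subqueries(["Llama-3 coding performance", "GPT-4 coding performance"], toy_retrieve))
```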

Code Implementation (query_decomposition.py):

```python
from typing import List

from langchain_core.prompts import ChatPromptTemplate
from langchain_openai import ChatOpenAI
from pydantic import BaseModel, Field  # langchain_core.pydantic_v1 is deprecated


# Define the output schema
class SubQueries(BaseModel):
    """The set of sub-questions to retrieve."""

    questions: List[str] = Field(description="List of atomic sub-questions.")


# Set up the planner LLM (temperature 0 for repeatable plans)
llm = ChatOpenAI(model="gpt-4-turbo", temperature=0)

system_prompt = """You are an expert researcher. Break down the user's complex query
into simple, atomic sub-queries that a search engine can answer."""

prompt = ChatPromptTemplate.from_messages([
    ("system", system_prompt),
    ("human", "{query}"),
])

# Create the chain: the LLM output is parsed into a SubQueries object
planner = prompt | llm.with_structured_output(SubQueries)


def plan_query(query: str) -> List[str]:
    result = planner.invoke({"query": query})
    return result.questions


# Usage
# sub_qs = plan_query("Compare Llama-3 and GPT-4 on coding benchmarks")
# print(sub_qs)
```

Output:

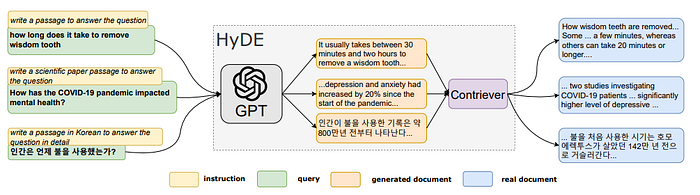

Part 2: The Control Layer (Corrective RAG)

Once we have documents, how do we verify that they are actually relevant? Corrective RAG (CRAG) adds a “Grader” agent to the loop. This agent evaluates every retrieved document for relevance. If the data is insufficient, the system doesn’t just hallucinate: it triggers a fallback (like a web search).

This architecture maps naturally onto a graph-based workflow with conditional branches, which makes LangGraph a good fit.

The CRAG Workflow

- Retrieve: Fetch documents.

- Grade: LLM scores documents as “Relevant” or “Irrelevant”.

- Decide:

  - If all documents are relevant -> Generate.

  - If any document is irrelevant -> Transform Query -> Web Search -> Generate.
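Before wiring it into LangGraph, the Decide step can be written as a small pure function. This is a sketch that assumes plain-string documents and toy callables; `crag_step` and the `toy_*` helpers are hypothetical. Like the graph that follows, it routes to web search when any document fails grading, and it additionally treats an empty retrieval as a failure.

```python
from typing import Callable, Dict, List


def crag_step(question: str,
              documents: List[str],
              grade: Callable[[str, str], bool],
              rewrite: Callable[[str], str],
              web_search: Callable[[str], List[str]]) -> Dict[str, object]:
    """One pass of Retrieve -> Grade -> Decide."""
    relevant = [d for d in documents if grade(question, d)]
    if relevant and len(relevant) == len(documents):
        # Every document passed the grader: generate from them directly
        return {"route": "generate", "question": question, "documents": relevant}
    # Something failed (or nothing was retrieved): rewrite, then add web results
    better_q = rewrite(question)
    return {"route": "web_search", "question": better_q,
            "documents": relevant + web_search(better_q)}


# Toy stand-ins for the LLM grader, the query rewriter, and the web search tool
def toy_grade(question: str, doc: str) -> bool:
    return "crag" in doc.lower()


def toy_rewrite(question: str) -> str:
    return question + " explained"


def toy_web(question: str) -> List[str]:
    return [f"web result for: {question}"]


print(crag_step("crag grader", ["CRAG grades docs"], toy_grade, toy_rewrite, toy_web)["route"])  # generate
print(crag_step("crag grader", ["CRAG grades docs", "Banana bread recipe"], toy_grade, toy_rewrite, toy_web))
```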

Code Implementation (corrective_rag.py):

```python
from typing import List, TypedDict

from langchain_community.tools.tavily_search import TavilySearchResults
from langchain_core.documents import Document
from langchain_core.prompts import PromptTemplate
from langchain_openai import ChatOpenAI
from langgraph.graph import END, START, StateGraph
from pydantic import BaseModel, Field


# --- 1. Define State ---
class GraphState(TypedDict):
    question: str
    generation: str
    web_search: str  # 'Yes' or 'No' flag
    documents: List[Document]


# --- 2. Initialize Components ---
grader_llm = ChatOpenAI(model="gpt-4-turbo", temperature=0)
generator_llm = ChatOpenAI(model="gpt-4-turbo", temperature=0)
web_tool = TavilySearchResults(max_results=3)  # expects TAVILY_API_KEY in the environment


class GradeDocuments(BaseModel):
    """Binary relevance score for one retrieved document."""

    score: str = Field(description="'yes' if the document is relevant to the question, otherwise 'no'")


# --- 3. Define Nodes ---
def grade_documents(state):
    """
    The 'Self-Healing' Node: Filters out bad documents.
    """
    print("---CHECK RELEVANCE---")
    question = state["question"]
    documents = state["documents"]

    # Structured output for binary grading (a schema class, not the bare dict type)
    structured_llm = grader_llm.with_structured_output(GradeDocuments)
    prompt = PromptTemplate(
        template="""You are a grader assessing relevance.
        Doc: {context}
        Question: {question}
        Set 'score' to 'yes' if the document is relevant to the question, otherwise 'no'.""",
        input_variables=["context", "question"],
    )
    chain = prompt | structured_llm

    filtered_docs = []
    web_search = "No"
    for d in documents:
        grade = chain.invoke({"question": question, "context": d.page_content})
        if grade.score.strip().lower() == "yes":
            filtered_docs.append(d)
        else:
            # If we lose context, we trigger the fallback
            web_search = "Yes"

    # Nothing usable was retrieved at all: fall back to web search as well
    if not filtered_docs:
        web_search = "Yes"

    return {"documents": filtered_docs, "question": question, "web_search": web_search}


def transform_query(state):
    """
    Self-Correction: Rewrite the query for better web search results.
    """
    print("---TRANSFORM QUERY---")
    question = state["question"]

    # Simple re-writer chain
    prompt = PromptTemplate(
        template="Rewrite this for web search: {question}",
        input_variables=["question"],
    )
    chain = prompt | generator_llm
    better_q = chain.invoke({"question": question}).content
    return {"question": better_q}


def web_search_node(state):
    print("---WEB SEARCH---")
    # Each search result is a dict that includes a "content" key
    docs = web_tool.invoke({"query": state["question"]})

    # Append web results to the documents that survived grading
    web_results = [Document(page_content=d["content"]) for d in docs]
    return {"documents": state["documents"] + web_results}


def generate(state):
    print("---GENERATE---")
    # Standard RAG generation chain here
    # generation = rag_chain.invoke(...)
    return {"generation": "Final Answer Placeholder"}


# --- 4. Build Graph ---
workflow = StateGraph(GraphState)

# Add Nodes
workflow.add_node("retrieve", lambda state: {"documents": []})  # Placeholder for retrieval
workflow.add_node("grade_documents", grade_documents)
workflow.add_node("transform_query", transform_query)
workflow.add_node("web_search_node", web_search_node)
workflow.add_node("generate", generate)

# Add Edges
workflow.add_edge(START, "retrieve")
workflow.add_edge("retrieve", "grade_documents")


def decide_to_generate(state):
    # Take the corrective branch whenever grading flagged missing context
    if state["web_search"] == "Yes":
        return "transform_query"
    return "generate"


workflow.add_conditional_edges(
    "grade_documents",
    decide_to_generate,
    {"transform_query": "transform_query", "generate": "generate"},
)
workflow.add_edge("transform_query", "web_search_node")
workflow.add_edge("web_search_node", "generate")
workflow.add_edge("generate", END)

app = workflow.compile()
```

Part 3: Auto-Ranking (The Precision Layer)

Standard vector search (Bi-Encoders) is fast but imprecise. It compresses each document into a single vector ahead of time, without ever seeing the query, so fine-grained nuance is lost. To fix this, we insert a Cross-Encoder step.

A Cross-Encoder reads the query and a document together as one input and outputs a relevance score for that pair. Because it must run once for every query-document pair, it is computationally expensive, so we use a two-stage approach:

- Retrieve: Get top 50 docs via Vector DB (Fast).

- Rerank: Score top 50 via Cross-Encoder and keep top 5 (Accurate).
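The two-stage pattern itself is independent of any model, so here is a sketch with toy scorers. Both scorers are assumptions for illustration only: `cheap_score` (word overlap, blind to order) stands in for the bi-encoder, and `pair_score` (which looks at the query and document together and rewards matching word pairs) stands in for the cross-encoder. A real cross-encoder learns relevance; it does not count bigrams.

```python
import re
from typing import List


def tokens(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cheap_score(query: str, doc: str) -> int:
    """Stage-1 stand-in for a bi-encoder: bag-of-words overlap, blind to word order."""
    return len(set(tokens(query)) & set(tokens(doc)))


def pair_score(query: str, doc: str) -> float:
    """Stage-2 stand-in for a cross-encoder: reads query and doc together and
    rewards matching word pairs (bigrams), which a bag of words cannot see."""
    q, d = tokens(query), tokens(doc)
    shared_bigrams = set(zip(q, q[1:])) & set(zip(d, d[1:]))
    return len(shared_bigrams) + 0.1 * cheap_score(query, doc)


def two_stage_search(query: str, corpus: List[str], first_k: int = 50, final_k: int = 5) -> List[str]:
    # Stage 1: cheap score over the whole corpus, keep first_k candidates
    candidates = sorted(corpus, key=lambda d: cheap_score(query, d), reverse=True)[:first_k]
    # Stage 2: expensive pairwise score over the candidates only, keep final_k
    return sorted(candidates, key=lambda d: pair_score(query, d), reverse=True)[:final_k]


corpus = ["man bites dog in rare case", "dog bites man downtown", "cats sleep all day"]
print(cheap_score("dog bites man", corpus[0]), cheap_score("dog bites man", corpus[1]))  # 3 3
print(two_stage_search("dog bites man", corpus, first_k=2, final_k=1))  # ['dog bites man downtown']
```

The expensive scorer only ever sees `first_k` candidates, which is what keeps the second stage affordable on a large corpus.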

Code Implementation (reranker.py):

```python
from sentence_transformers import CrossEncoder


class Reranker:
    def __init__(self):
        # Load a model optimized for MS MARCO
        self.model = CrossEncoder('cross-encoder/ms-marco-MiniLM-L-6-v2')

    def rerank(self, query, documents, top_k=5):
        # Prepare pairs: [[query, doc1], [query, doc2]...]
        pairs = [[query, doc] for doc in documents]

        # Predict one relevance score per (query, document) pair
        scores = self.model.predict(pairs)

        # Sort by score (highest first) and keep the top_k documents
        results = sorted(zip(documents, scores), key=lambda x: x[1], reverse=True)
        return [doc for doc, score in results[:top_k]]
```

Part 4: Auto-Learning (The Evolution Layer)

The most advanced self-healing systems don’t just fix errors in the moment; they learn from them to prevent future mistakes. We achieve this via Dynamic Few-Shot Learning.

When the system generates a “good” answer (verified by user feedback), we store that Query-Answer pair in a special “Gold Standard” vector store. For future queries, we retrieve the most similar successful examples and inject them into the prompt. This steers the LLM toward its own past successes in context, without any fine-tuning.
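The store-retrieve-inject loop can be sketched without a vector database. `GoldStandardStore` is a hypothetical class, and Jaccard word overlap is an assumed stand-in for embedding similarity; examples with zero similarity are skipped so an unrelated success never leaks into the prompt.

```python
import re
from typing import List, Set, Tuple


def words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    wa, wb = words(a), words(b)
    return len(wa & wb) / len(wa | wb) if wa | wb else 0.0


class GoldStandardStore:
    """Hypothetical in-memory 'Gold Standard' store of thumbs-up Q/A pairs."""

    def __init__(self) -> None:
        self.examples: List[Tuple[str, str]] = []

    def add(self, query: str, answer: str) -> None:
        # Called only for answers a user explicitly approved
        self.examples.append((query, answer))

    def similar(self, query: str, k: int = 2) -> List[Tuple[str, str]]:
        ranked = sorted(self.examples, key=lambda ex: jaccard(query, ex[0]), reverse=True)
        return [ex for ex in ranked[:k] if jaccard(query, ex[0]) > 0]

    def build_prompt(self, query: str, k: int = 2) -> str:
        shots = "\n\n".join(f"Q: {q}\nA: {a}" for q, a in self.similar(query, k))
        header = f"Here are examples of how to answer correctly:\n{shots}\n\n" if shots else ""
        return f"{header}Question: {query}"


store = GoldStandardStore()
store.add("What is HyDE?", "HyDE embeds a hypothetical answer instead of the raw question.")
store.add("How do I bake bread?", "Mix flour, water, yeast and salt.")
print(store.build_prompt("What is CRAG?", k=1))
```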

Code Implementation (dynamic_prompting.py):

```python
from llama_index.core import Document, VectorStoreIndex


class LearningManager:
    def __init__(self):
        self.good_examples = []
        self.index = None

    def add_good_example(self, query, answer):
        """Called when the user gives a thumbs-up."""
        doc = Document(text=f"Q: {query}\nA: {answer}")
        self.good_examples.append(doc)
        # Re-index everything (in production, use a DB that supports incremental updates)
        self.index = VectorStoreIndex.from_documents(self.good_examples)

    def get_dynamic_prompt(self, current_query):
        if self.index is None:
            return ""

        # Retrieve similar past successful examples
        retriever = self.index.as_retriever(similarity_top_k=2)
        nodes = retriever.retrieve(current_query)
        examples_text = "\n\n".join(n.text for n in nodes)
        return f"Here are examples of how to answer correctly:\n{examples_text}"


# Usage in your pipeline
# manager = LearningManager()
# few_shot_context = manager.get_dynamic_prompt(user_query)
# final_prompt = f"{few_shot_context}\n\nQuestion: {user_query}..."
```

The Frontier: DSPy Optimization

For a truly programmatic approach, we can use DSPy to treat our prompts as optimization problems. DSPy can automatically rewrite your prompt instructions and select few-shot examples by running your RAG pipeline against a set of labeled examples and optimizing for a metric (like accuracy).
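DSPy's optimizers are far more sophisticated, but the core contract (score candidate prompts against labeled examples and keep whatever does best on a metric) can be sketched without DSPy. This is not MIPROv2's algorithm: `select_best_instruction`, `toy_program`, and the two-item validation set are hypothetical, and a real optimizer also searches over few-shot demonstrations rather than a fixed list of instructions.

```python
from typing import Callable, Dict, List, Tuple


def exact_match(gold: str, pred: str) -> float:
    return float(gold.strip().lower() == pred.strip().lower())


def select_best_instruction(candidates: List[str],
                            program: Callable[[str, str], str],
                            valset: List[Tuple[str, str]],
                            metric: Callable[[str, str], float] = exact_match) -> Tuple[str, Dict[str, float]]:
    """Score every candidate instruction on the labeled set and keep the best one."""
    scores: Dict[str, float] = {}
    for instruction in candidates:
        total = sum(metric(gold, program(instruction, question)) for question, gold in valset)
        scores[instruction] = total / len(valset)
    best = max(candidates, key=lambda c: scores[c])
    return best, scores


# Hypothetical stand-in for an LLM program whose behaviour depends on its instruction
KNOWLEDGE = {"capital of france": "Paris", "2 + 2": "4"}


def toy_program(instruction: str, question: str) -> str:
    answer = KNOWLEDGE[question]
    return answer if "short" in instruction else f"The answer is {answer}."


valset = [("capital of france", "Paris"), ("2 + 2", "4")]
best, scores = select_best_instruction(
    ["Explain your reasoning in detail.", "Give a short factoid answer."], toy_program, valset)
print(best)  # Give a short factoid answer.
print(scores)
```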

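```python
import dspy

# Assumed setup (not shown in the original): configure an LM and a retrieval model, e.g.
# dspy.configure(lm=dspy.LM("openai/gpt-4-turbo"), rm=your_retriever)  # your_retriever is hypothetical


# 1. Define the RAG signature
class GenerateAnswer(dspy.Signature):
    """Answer questions with short factoid answers."""

    context = dspy.InputField()
    question = dspy.InputField()
    response = dspy.OutputField()  # SemanticF1 compares example.response with pred.response


# 2. Define the Module
class RAG(dspy.Module):
    def __init__(self):
        super().__init__()
        self.retrieve = dspy.Retrieve(k=3)
        self.generate = dspy.ChainOfThought(GenerateAnswer)

    def forward(self, question):
        context = self.retrieve(question).passages
        return self.generate(context=context, question=question)


# 3. Optimize
# MIPROv2 runs the pipeline on the training examples, bootstraps few-shot demos,
# proposes candidate instructions, and searches for the combination that
# maximizes the metric (e.g., exact match or semantic F1).
# training_data: a list of dspy.Example(question=..., response=...).with_inputs("question")
optimizer = dspy.MIPROv2(metric=dspy.evaluate.SemanticF1())
optimized_rag = optimizer.compile(RAG(), trainset=training_data)
```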

Putting it all together

With all the individual components (HyDE, query decomposition, CRAG, reranking, and dynamic prompting) ready, we can now assemble them into one unified Self-Healing RAG System. This final orchestration layer coordinates the entire workflow:

- Interpreting incoming queries

- Enhancing retrieval

- Validating and correcting context

- Improving relevance

- Learning from user feedback

- Generating stable, accurate answers

```python
import os
from datetime import datetime
from typing import Any, Dict, List, Optional, Tuple

# Import all our components
from hyde import build_hyde_engine, Settings
from query_decomposition import plan_query
from corrective_rag import app as crag_app, GraphState  # the Tavily tool there expects TAVILY_API_KEY
from reranker import Reranker
from dynamic_prompting import LearningManager

# Core dependencies
from llama_index.core import VectorStoreIndex, Document
from llama_index.llms.openai import OpenAI

# The pipeline stages pass LangChain-style documents (with .page_content) around,
# so import that class under a distinct name to avoid clashing with LlamaIndex's Document
from langchain_core.documents import Document as LCDocument


class SelfHealingRAGSystem:
    """
    Complete Self-Healing RAG System that combines all components
    """

    def __init__(self, openai_api_key: Optional[str] = None):
        """Initialize the complete RAG system"""
        # Set up API keys
        if openai_api_key:
            os.environ["OPENAI_API_KEY"] = openai_api_key

        # Initialize components
        print("🚀 Initializing Self-Healing RAG System...")

        # Core LLM
        self.llm = OpenAI(model="gpt-4-turbo", temperature=0.3)
        Settings.llm = self.llm

        # Initialize all components
        self.reranker = Reranker()
        self.learning_manager = LearningManager()
        self.vector_index = None
        self.hyde_engine = None

        # Demo data
        self.sample_documents = self._create_sample_documents()
        self._setup_vector_index()

        # Statistics
        self.query_stats = {
            "total_queries": 0,
            "hyde_used": 0,
            "decomposed_queries": 0,
            "crag_activated": 0,
            "reranked": 0,
            "learning_applied": 0,
        }

        print("✅ System initialized successfully!")

    def _create_sample_documents(self) -> List[Document]:
        """Create sample documents for the demo"""
        sample_texts = [
            """Retrieval-Augmented Generation (RAG) is a technique that combines
            pre-trained language models with external knowledge retrieval. RAG systems
            retrieve relevant documents from a knowledge base and use them to generate
            more accurate and factual responses.""",
            """Corrective RAG (CRAG) introduces a self-correction mechanism that grades
            retrieved documents for relevance. If documents are deemed irrelevant, the
            system triggers alternative retrieval strategies like web search.""",
            """HyDE (Hypothetical Document Embeddings) improves retrieval by generating
            hypothetical documents that answer the query, then searching for real documents
            similar to these hypothetical ones.""",
            """Cross-encoder reranking provides more accurate document scoring compared
            to bi-encoder similarity search. It processes query-document pairs together
            to produce refined relevance scores.""",
            """DSPy enables automatic prompt optimization by treating prompts as programs
            that can be compiled and optimized against specific metrics like accuracy
            or semantic similarity.""",
            """Self-healing RAG systems implement feedback loops that learn from successful
            query-answer pairs, storing them as examples for future similar queries to
            improve performance over time.""",
            """Query decomposition breaks complex multi-part questions into atomic
            sub-queries that can be individually processed and then combined for
            comprehensive answers.""",
            """Vector databases enable semantic search by converting documents into
            high-dimensional embeddings that capture semantic meaning rather than
            just keyword matches.""",
        ]
        return [Document(text=text, metadata={"id": i}) for i, text in enumerate(sample_texts)]

    def _setup_vector_index(self):
        """Setup the vector index with sample documents"""
        print("📚 Setting up vector index...")
        self.vector_index = VectorStoreIndex.from_documents(self.sample_documents)
        self.hyde_engine = build_hyde_engine(self.vector_index)
        print("✅ Vector index ready!")

    def enhanced_retrieve(self, query: str, use_hyde: bool = True, top_k: int = 5) -> List[LCDocument]:
        """Enhanced retrieval with HyDE option"""
        print(f"🔍 Retrieving documents for: '{query}'")

        if use_hyde:
            print(" 🧠 Using HyDE for enhanced retrieval...")
            # query() also synthesizes an answer; only its source nodes are used here
            # (top_k is fixed at 5 inside build_hyde_engine)
            response = self.hyde_engine.query(query)
            nodes = response.source_nodes
            self.query_stats["hyde_used"] += 1
        else:
            print(" 📖 Using standard retrieval...")
            retriever = self.vector_index.as_retriever(similarity_top_k=top_k)
            nodes = retriever.retrieve(query)

        # Convert LlamaIndex nodes into LangChain-style documents for the later stages
        docs = []
        for node in nodes:
            doc = LCDocument(
                page_content=node.text if hasattr(node, "text") else str(node),
                metadata=node.metadata if hasattr(node, "metadata") else {},
            )
            docs.append(doc)

        print(f" ✅ Retrieved {len(docs)} documents")
        return docs

    def decompose_and_retrieve(self, query: str) -> Tuple[List[str], List[LCDocument]]:
        """Decompose complex queries and retrieve for each sub-query"""
        print(f"🔧 Decomposing query: '{query}'")
        try:
            sub_queries = plan_query(query)
            if len(sub_queries) > 1:
                print(f" 📝 Decomposed into {len(sub_queries)} sub-queries:")
                for i, sq in enumerate(sub_queries, 1):
                    print(f" {i}. {sq}")

                # Retrieve for each sub-query, skipping chunks that were already retrieved
                all_docs = []
                seen = set()
                for sq in sub_queries:
                    for doc in self.enhanced_retrieve(sq, use_hyde=False, top_k=3):
                        if doc.page_content not in seen:
                            seen.add(doc.page_content)
                            all_docs.append(doc)

                self.query_stats["decomposed_queries"] += 1
                return sub_queries, all_docs
            else:
                print(" ➡️ Query doesn't need decomposition")
                docs = self.enhanced_retrieve(query)
                return [query], docs
        except Exception as e:
            print(f" ⚠️ Error in decomposition: {e}")
            docs = self.enhanced_retrieve(query)
            return [query], docs

    def apply_crag(self, query: str, documents: List[LCDocument]) -> Tuple[List[LCDocument], str]:
        """Apply Corrective RAG to filter documents"""
        print("🔍 Applying CRAG (Corrective RAG)...")
        try:
            # Prepare state for CRAG; crag_app.invoke(state) would run the full
            # LangGraph workflow (LLM grading plus web-search fallback)
            state = GraphState(
                question=query,
                generation="",
                web_search="No",
                documents=documents,
            )

            # For demo purposes, we simulate the grading with a crude keyword check
            # (in reality, this would use the LLM grader)
            filtered_docs = []
            for doc in state["documents"]:
                if any(keyword in doc.page_content.lower() for keyword in query.lower().split()):
                    filtered_docs.append(doc)

            removed = len(documents) - len(filtered_docs)
            if removed > 0:
                self.query_stats["crag_activated"] += 1
                print(f" 🚨 CRAG filtered {removed} irrelevant documents")

            return filtered_docs, "Documents filtered by CRAG"
        except Exception as e:
            print(f" ⚠️ Error in CRAG: {e}")
            return documents, "CRAG not applied due to error"

    def apply_reranking(self, query: str, documents: List[LCDocument], top_k: int = 3) -> List[LCDocument]:
        """Apply cross-encoder reranking"""
        print("🎯 Applying cross-encoder reranking...")
        try:
            # Extract text content for reranking
            doc_texts = [doc.page_content for doc in documents]
            if len(doc_texts) > 1:
                reranked_texts = self.reranker.rerank(query, doc_texts, top_k)

                # Map back to Document objects
                reranked_docs = []
                for text in reranked_texts:
                    for doc in documents:
                        if doc.page_content == text:
                            reranked_docs.append(doc)
                            break

                self.query_stats["reranked"] += 1
                print(f" ✅ Reranked to top {len(reranked_docs)} documents")
                return reranked_docs
            else:
                print(" ➡️ Not enough documents for reranking")
                return documents
        except Exception as e:
            print(f" ⚠️ Error in reranking: {e}")
            return documents

    def apply_dynamic_prompting(self, query: str) -> str:
        """Apply dynamic few-shot learning"""
        print("🧠 Applying dynamic prompting...")
        try:
            few_shot_context = self.learning_manager.get_dynamic_prompt(query)
            if few_shot_context:
                self.query_stats["learning_applied"] += 1
                print(" ✅ Applied learned examples from previous successes")
            else:
                print(" ➡️ No relevant past examples found")
            return few_shot_context
        except Exception as e:
            print(f" ⚠️ Error in dynamic prompting: {e}")
            return ""

    def generate_answer(self, query: str, documents: List[LCDocument], few_shot_context: str = "") -> str:
        """Generate final answer using retrieved documents"""
        print("✍️ Generating final answer...")

        # Combine document content
        context = "\n\n".join(doc.page_content for doc in documents[:3])

        # Create prompt with optional few-shot examples
        prompt_parts = []
        if few_shot_context:
            prompt_parts.append(few_shot_context)
        prompt_parts.extend([
            "Context:",
            context,
            f"\nQuestion: {query}",
            "\nAnswer based on the provided context:",
        ])
        prompt = "\n".join(prompt_parts)

        try:
            response = self.llm.complete(prompt)
            answer = response.text.strip()
            print(" ✅ Answer generated successfully")
            return answer
        except Exception as e:
            print(f" ⚠️ Error generating answer: {e}")
            return f"I apologize, but I encountered an error generating an answer: {e}"

    def full_pipeline(self, query: str, user_feedback: Optional[bool] = None,
                      previous_answer: Optional[str] = None) -> Dict[str, Any]:
        """
        Run the complete self-healing RAG pipeline
        """
        start_time = datetime.now()
        print("\n🔄 Starting Self-Healing RAG Pipeline")
        print(f"Query: '{query}'")
        print("=" * 60)

        # Snapshot the counters so we can report which components ran for this query
        stats_before = dict(self.query_stats)
        self.query_stats["total_queries"] += 1

        # Step 1: Query Enhancement
        sub_queries, documents = self.decompose_and_retrieve(query)

        # Step 2: Document Validation (CRAG)
        filtered_docs, crag_status = self.apply_crag(query, documents)

        # Step 3: Document Reranking
        reranked_docs = self.apply_reranking(query, filtered_docs)

        # Step 4: Dynamic Prompting
        few_shot_context = self.apply_dynamic_prompting(query)

        # Step 5: Answer Generation
        answer = self.generate_answer(query, reranked_docs, few_shot_context)

        # Step 6: Learning (if feedback provided)
        if user_feedback is True and previous_answer:
            try:
                self.learning_manager.add_good_example(query, previous_answer)
                print("📚 Added successful example to learning system")
            except Exception as e:
                print(f"⚠️ Error adding to learning system: {e}")

        processing_time = (datetime.now() - start_time).total_seconds()

        result = {
            "query": query,
            "sub_queries": sub_queries,
            "documents_found": len(documents),
            "documents_filtered": len(filtered_docs),
            "final_documents": len(reranked_docs),
            "answer": answer,
            "crag_status": crag_status,
            "processing_time": processing_time,
            "components_used": self._get_components_used(stats_before),
        }

        print("\n" + "=" * 60)
        print(f"✅ Pipeline completed in {processing_time:.2f} seconds")
        print(f"📊 Documents: {len(documents)} → {len(filtered_docs)} → {len(reranked_docs)}")
        return result

    def _get_components_used(self, stats_before: Dict[str, int]) -> List[str]:
        """Get list of components used in the last query (counters that increased during it)"""
        labels = {
            "hyde_used": "HyDE",
            "decomposed_queries": "Query Decomposition",
            "crag_activated": "CRAG",
            "reranked": "Cross-Encoder Reranking",
            "learning_applied": "Dynamic Prompting",
        }
        components = ["Vector Retrieval"]
        for key, label in labels.items():
            if self.query_stats[key] > stats_before[key]:
                components.append(label)
        return components

    def get_system_stats(self) -> Dict[str, Any]:
        """Get system usage statistics"""
        total = max(1, self.query_stats["total_queries"])

        def rate(key: str) -> str:
            return f"{self.query_stats[key] / total * 100:.1f}%"

        return {
            "total_queries": self.query_stats["total_queries"],
            "hyde_usage_rate": rate("hyde_used"),
            "decomposition_rate": rate("decomposed_queries"),
            "crag_activation_rate": rate("crag_activated"),
            "reranking_rate": rate("reranked"),
            "learning_rate": rate("learning_applied"),
            "learned_examples": len(self.learning_manager.good_examples),
        }


def demo_interactive_session():
    """Run an interactive demo session"""
    print("""
🎯 Self-Healing RAG System Demo
================================
This system demonstrates:
• HyDE: Hypothetical Document Embeddings
• Query Decomposition: Breaking complex queries
• CRAG: Corrective RAG with document grading
• Cross-Encoder Reranking: Precision ranking
• Dynamic Learning: Few-shot from success examples
""")

    # Initialize system
    system = SelfHealingRAGSystem()

    # Sample queries for demonstration
    demo_queries = [
        "What is RAG and how does it work?",
        "Compare HyDE and standard retrieval methods",
        "How does CRAG improve retrieval quality and what are the benefits of cross-encoder reranking?",
        "Explain the self-correction mechanisms in modern RAG systems",
        "What are the advantages of DSPy optimization for prompts?",
    ]

    print("🔥 Running Demo Queries...")
    print("=" * 50)

    results = []
    for i, query in enumerate(demo_queries, 1):
        print(f"\n📋 Demo Query {i}/{len(demo_queries)}")
        result = system.full_pipeline(query)
        results.append(result)

        print("\n💡 Answer:")
        print(result["answer"])
        print(f"\n📊 Components Used: {', '.join(result['components_used'])}")

        # Simulate positive feedback for learning, starting from the second query.
        # Store the example directly: re-running full_pipeline() here would repeat
        # every LLM call and double-count the statistics.
        if i > 1:
            system.learning_manager.add_good_example(query, result["answer"])

    # Show final statistics
    print("\n" + "=" * 60)
    print("📈 SYSTEM PERFORMANCE STATISTICS")
    print("=" * 60)
    stats = system.get_system_stats()
    for key, value in stats.items():
        print(f"{key.replace('_', ' ').title()}: {value}")

    return system, results


if __name__ == "__main__":
    # Set your OpenAI API key here or in environment
    # os.environ["OPENAI_API_KEY"] = "your-key-here"
    demo_interactive_session()
```

Conclusion

The transition from Naive RAG to Self-Healing RAG marks a shift from “Search” to “Reasoning.” By implementing HyDE and Decomposition, we make it far more likely that we ask the right questions. By using CRAG and Cross-Encoders, we make sure the generator reads relevant documents. And by adding Auto-Learning loops, we help the system stop repeating the same mistakes.

This architecture transforms your AI from a fragile demo into a resilient, production-ready agent.

To explore the full Demo implementation of Self-healing RAG, check out the GitHub repository: https://github.com/subrata-samanta/Self-Healing-RAG

For professional updates and AI-related insights, feel free to connect with me on LinkedIn: https://www.linkedin.com/in/subrata-samanta/.


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.