Unsupervised Clustering: Can We Identify Clusters in the Descriptions of Sounds in Music?

Last Updated on June 4, 2024 by Editorial Team

Author(s): Greg Postalian-Yrausquin

Originally published on Towards AI.

The data is tricky: it is a list of Spotify songs, each assigned numeric values that describe its sound. The goal here is to see whether those descriptions can be used to identify the music genre or the artist.

import numpy as np

import pandas as pd

from sklearn.decomposition import PCA

from sklearn.cluster import KMeans

from sklearn.cluster import AgglomerativeClustering

from sklearn.cluster import Birch

from sklearn.cluster import OPTICS

from sklearn.cluster import DBSCAN

from sklearn.metrics import silhouette_samples, silhouette_score, calinski_harabasz_score

from sklearn.preprocessing import StandardScaler

import matplotlib.pyplot as plt

import matplotlib.cm as cm

import seaborn as sns

import warnings

import ast

Load dataset and show a quick description and sample

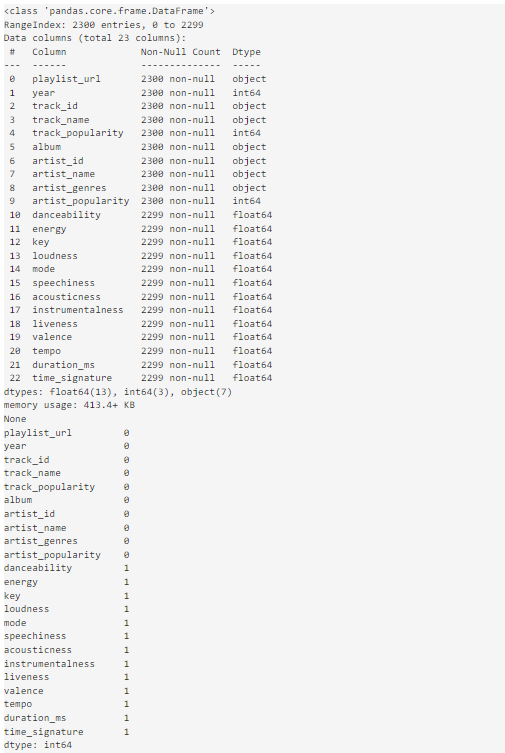

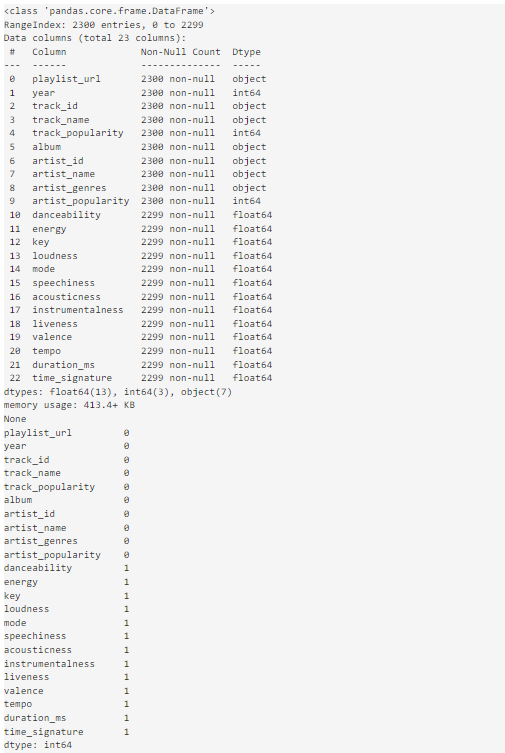

dataset = pd.read_csv("playlist_2010to2022.csv")

print(dataset.info())

print(dataset.isna().sum())

print(dataset.describe())

dataset.head(10)

From this dataset I will select the sound attributes of the songs. I am also saving the metadata separately.

dataset = dataset.set_index('track_id')

dataset = dataset.dropna()

metadata = dataset[['track_name','album','artist_id','artist_name','artist_genres','year']]

dataset = dataset[['danceability','energy','key','loudness','speechiness','acousticness','instrumentalness','liveness','valence','tempo','duration_ms','time_signature']]

The following code runs a standard scaler on each column and then applies PCA to reduce the number of variables, keeping the components that together explain 85% of the variance. PCA might sound like overkill for this example, but in my experience clustering sometimes works better on principal components than on the raw values.

def varred(simio):
    # Keep the principal components that explain 85% of the variance
    scaler = PCA(n_components=0.85, svd_solver='full')
    resultsWordstrans = simio.copy()
    resultsWordstrans = scaler.fit_transform(resultsWordstrans)
    resultsWordstrans = pd.DataFrame(resultsWordstrans)
    resultsWordstrans.index = simio.index
    # Component columns are named "0", "1", ... (as strings)
    resultsWordstrans.columns = resultsWordstrans.columns.astype(str)
    return resultsWordstrans

def properscaler(simio):
    # Standardize every column to zero mean and unit variance
    scaler = StandardScaler()
    resultsWordstrans = scaler.fit_transform(simio)
    resultsWordstrans = pd.DataFrame(resultsWordstrans)
    resultsWordstrans.index = simio.index
    resultsWordstrans.columns = simio.columns
    return resultsWordstrans

datasetR = properscaler(dataset)

datasetR = varred(datasetR)
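As a quick check on what `n_components=0.85` does, here is a minimal sketch on synthetic data (the array `X_demo` and its shape are made up for illustration and are not taken from the playlist file). With a float between 0 and 1 and `svd_solver='full'`, scikit-learn keeps the smallest number of components whose cumulative explained variance exceeds that fraction.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic stand-in for the audio features: 200 rows, 6 columns,
# where the last three columns are noisy copies of the first three.
base = rng.normal(size=(200, 3))
X_demo = np.hstack([base, base + 0.1 * rng.normal(size=(200, 3))])

# Same order as in the article: standardize first, then PCA.
X_scaled = StandardScaler().fit_transform(X_demo)
pca = PCA(n_components=0.85, svd_solver="full").fit(X_scaled)

cumulative = np.cumsum(pca.explained_variance_ratio_)
print("components kept:", pca.n_components_)
print("cumulative explained variance:", cumulative.round(3))
```

Printing `pca.n_components_` on the real data is a cheap way to see how many columns `datasetR` ends up with before clustering.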

First, I will compute the silhouette and Calinski-Harabasz scores for 2 to 19 clusters (the loop stops just short of 20) to help choose the number of clusters for each model.

a = []
X = datasetR.to_numpy(dtype='float')

for ncl in np.arange(2, 20, 1):
    # Agglomerative clustering
    clusterer = AgglomerativeClustering(n_clusters=int(ncl))
    cluster_labels1 = clusterer.fit_predict(X)
    silhouette_avg1 = silhouette_score(X, cluster_labels1)
    calinski1 = calinski_harabasz_score(X, cluster_labels1)

    # KMeans (warnings are silenced during the fit)
    clusterer = KMeans(n_clusters=int(ncl))
    with warnings.catch_warnings():
        warnings.simplefilter("ignore")
        cluster_labels2 = clusterer.fit_predict(X)
    silhouette_avg2 = silhouette_score(X, cluster_labels2)
    calinski2 = calinski_harabasz_score(X, cluster_labels2)

    # Birch
    clusterer = Birch(n_clusters=int(ncl))
    cluster_labels3 = clusterer.fit_predict(X)
    silhouette_avg3 = silhouette_score(X, cluster_labels3)
    calinski3 = calinski_harabasz_score(X, cluster_labels3)

    # One row of scores per number of clusters
    row = pd.DataFrame({"ncl": [ncl],
                        "silAggCl": [silhouette_avg1], "c_hAggCl": [calinski1],
                        "silKMeans": [silhouette_avg2], "c_hKMeans": [calinski2],
                        "silBirch": [silhouette_avg3], "c_hBirch": [calinski3],
                        })
    a.append(row)

scores = pd.concat(a, ignore_index=True)

plt.style.use('bmh')

fig, [ax_sil, ax_ch] = plt.subplots(1,2,figsize=(15,7))

ax_sil.plot(scores["ncl"], scores["silAggCl"], 'g-')

ax_sil.plot(scores["ncl"], scores["silKMeans"], 'b-')

ax_sil.plot(scores["ncl"], scores["silBirch"], 'r-')

ax_ch.plot(scores["ncl"], scores["c_hAggCl"], 'g-', label='Agg Clust')

ax_ch.plot(scores["ncl"], scores["c_hKMeans"], 'b-', label='KMeans')

ax_ch.plot(scores["ncl"], scores["c_hBirch"], 'r-', label='Birch')

ax_sil.set_title("Silhouette curves")

ax_ch.set_title("Calinski Harabasz curves")

ax_sil.set_xlabel('clusters')

ax_sil.set_ylabel('silhouette_avg')

ax_ch.set_xlabel('clusters')

ax_ch.set_ylabel('calinski_harabasz')

ax_ch.legend(loc="upper right")

plt.show()
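The curves are read by eye here, but a table laid out like `scores` can also be queried for its peak. The sketch below is one way to do that, on three synthetic blobs rather than the playlist data; `X_demo`, `demo_scores` and `best_k` are illustrative names, and `random_state` and `n_init` are set only to make the toy run repeatable. A higher average silhouette means tighter, better separated clusters, so the row with the maximum is a natural candidate.

```python
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Three well-separated synthetic blobs (illustrative data only).
X_demo, _ = make_blobs(
    n_samples=150,
    centers=[[0, 0], [10, 10], [-10, 10]],
    cluster_std=0.5,
    random_state=0,
)

# Same table layout as in the article: one row per number of clusters.
rows = []
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X_demo)
    rows.append({"ncl": k, "silKMeans": silhouette_score(X_demo, labels)})
demo_scores = pd.DataFrame(rows)

# Number of clusters in the row with the highest average silhouette.
best_k = int(demo_scores.loc[demo_scores["silKMeans"].idxmax(), "ncl"])
print(demo_scores)
print("best k by silhouette:", best_k)
```

On real data the peak is rarely this clean, so the maximum is better treated as a starting point than as a final answer.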

ncl_AggCl = 11

ncl_KMeans = 17

ncl_Birch = 9

X = datasetR.to_numpy(dtype='float')

clusterer1 = AgglomerativeClustering(n_clusters=int(ncl_AggCl))

cluster_labels1 = clusterer1.fit_predict(X)

n_clusters1 = max(cluster_labels1)

silhouette_avg1 = silhouette_score(X, cluster_labels1)

sample_silhouette_values1 = silhouette_samples(X, cluster_labels1)

with warnings.catch_warnings():
    warnings.simplefilter("ignore")
    clusterer2 = KMeans(n_clusters=int(ncl_KMeans))
    cluster_labels2 = clusterer2.fit_predict(X)

n_clusters2 = max(cluster_labels2)

silhouette_avg2 = silhouette_score(X, cluster_labels2)

sample_silhouette_values2 = silhouette_samples(X, cluster_labels2)

clusterer3 = Birch(n_clusters=int(ncl_Birch))

cluster_labels3 = clusterer3.fit_predict(X)

n_clusters3 = max(cluster_labels3)

silhouette_avg3 = silhouette_score(X, cluster_labels3)

sample_silhouette_values3 = silhouette_samples(X, cluster_labels3)

clusterer4 = OPTICS(min_samples=2)

cluster_labels4 = clusterer4.fit_predict(X)

n_clusters4 = max(cluster_labels4)

silhouette_avg4 = silhouette_score(X, cluster_labels4)

sample_silhouette_values4 = silhouette_samples(X, cluster_labels4)

clusterer5 = DBSCAN(eps=1, min_samples=2)

cluster_labels5 = clusterer5.fit_predict(X)

n_clusters5 = max(cluster_labels5)

silhouette_avg5 = silhouette_score(X, cluster_labels5)

sample_silhouette_values5 = silhouette_samples(X, cluster_labels5)
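One detail worth keeping in mind with the two density-based models: in scikit-learn, `DBSCAN` and `OPTICS` give the label -1 to points they treat as noise, and real clusters are numbered from 0. That means `max(labels)` is one less than the number of clusters, and the -1 group is not a cluster at all. A minimal sketch on toy points (the array `X_demo` is invented for illustration):

```python
import numpy as np
from sklearn.cluster import DBSCAN

# Two tight groups plus one far-away point (toy data for illustration).
X_demo = np.array([
    [0.0, 0.0], [0.0, 0.5], [0.5, 0.0],
    [10.0, 10.0], [10.0, 10.5], [10.5, 10.0],
    [50.0, 50.0],
])

labels = DBSCAN(eps=1, min_samples=2).fit_predict(X_demo)

# Points labelled -1 are noise, not members of a cluster.
n_noise = int(np.sum(labels == -1))
# Count the distinct labels, leaving out the noise label.
n_found = len(set(labels.tolist()) - {-1})

print("labels:", labels)
print("clusters:", n_found, "noise points:", n_noise, "max label:", labels.max())
```

In the silhouette plots and heatmaps, a band or column labelled -1 for Optics or DBScan should therefore be read as "unassigned" songs.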

finalDF = datasetR.copy()

finalDF["clAggCl"] = cluster_labels1

finalDF["clKMeans"] = cluster_labels2

finalDF["clBirch"] = cluster_labels3

finalDF["clOptics"] = cluster_labels4

finalDF["clDbscan"] = cluster_labels5

finalDF["silAggCl"] = sample_silhouette_values1

finalDF["silKMeans"] = sample_silhouette_values2

finalDF["silBirch"] = sample_silhouette_values3

finalDF["silOptics"] = sample_silhouette_values4

finalDF["silDbscan"] = sample_silhouette_values5

finalDFf = pd.merge(finalDF, metadata, left_index=True, right_index=True)

finalDFf['artist_genres'] = finalDFf['artist_genres'].apply(lambda x: ast.literal_eval(x))

fig, [ax1, ax2, ax3, ax4, ax5] = plt.subplots(1,5,figsize=(20,20))

ax1.set_xlim([-0.1, 1])

ax1.set_ylim([0, len(X) + (n_clusters1 + 1) * 10])

y_lower = 10

for i in range(min(cluster_labels1),max(cluster_labels1)+1):
    ith_cluster_silhouette_values = sample_silhouette_values1[cluster_labels1 == i]
    ith_cluster_silhouette_values.sort()
    size_cluster_i = ith_cluster_silhouette_values.shape[0]
    y_upper = y_lower + size_cluster_i
    color = cm.nipy_spectral(float(i) / n_clusters1)
    ax1.fill_betweenx(
        np.arange(y_lower, y_upper),
        0,
        ith_cluster_silhouette_values,
        facecolor=color,
        edgecolor=color,
        alpha=0.7,
    )
    ax1.text(-0.05, y_lower + 0.5 * size_cluster_i, str(i))
    y_lower = y_upper + 10 # 10 for the 0 samples

ax1.set_title("Silhouette plot for Agg. Clustering")

ax1.set_xlabel("Silhouette coefficient values")

ax1.set_ylabel("Cluster labels")

ax1.axvline(x=silhouette_avg1, color="red", linestyle="--")

ax1.set_yticks([]) # Clear the yaxis labels / ticks

ax1.set_xticks([-0.1, 0, 0.2, 0.4, 0.6, 0.8, 1])

ax2.set_xlim([-0.1, 1])

ax2.set_ylim([0, len(X) + (n_clusters2 + 1) * 10])

y_lower = 10

for i in range(min(cluster_labels2),max(cluster_labels2)+1):
    ith_cluster_silhouette_values = sample_silhouette_values2[cluster_labels2 == i]
    ith_cluster_silhouette_values.sort()
    size_cluster_i = ith_cluster_silhouette_values.shape[0]
    y_upper = y_lower + size_cluster_i
    color = cm.nipy_spectral(float(i) / n_clusters2)
    ax2.fill_betweenx(
        np.arange(y_lower, y_upper),
        0,
        ith_cluster_silhouette_values,
        facecolor=color,
        edgecolor=color,
        alpha=0.7,
    )
    ax2.text(-0.05, y_lower + 0.5 * size_cluster_i, str(i))
    y_lower = y_upper + 10 # 10 for the 0 samples

ax2.set_title("Silhouette plot for KMeans")

ax2.set_xlabel("Silhouette coefficient values")

ax2.set_ylabel("Cluster labels")

ax2.axvline(x=silhouette_avg2, color="red", linestyle="--")

ax2.set_yticks([]) # Clear the yaxis labels / ticks

ax2.set_xticks([-0.1, 0, 0.2, 0.4, 0.6, 0.8, 1])

ax3.set_xlim([-0.1, 1])

ax3.set_ylim([0, len(X) + (n_clusters3 + 1) * 10])

y_lower = 10

for i in range(min(cluster_labels3),max(cluster_labels3)+1):
    ith_cluster_silhouette_values = sample_silhouette_values3[cluster_labels3 == i]
    ith_cluster_silhouette_values.sort()
    size_cluster_i = ith_cluster_silhouette_values.shape[0]
    y_upper = y_lower + size_cluster_i
    color = cm.nipy_spectral(float(i) / n_clusters3)
    ax3.fill_betweenx(
        np.arange(y_lower, y_upper),
        0,
        ith_cluster_silhouette_values,
        facecolor=color,
        edgecolor=color,
        alpha=0.7,
    )
    ax3.text(-0.05, y_lower + 0.5 * size_cluster_i, str(i))
    y_lower = y_upper + 10 # 10 for the 0 samples

ax3.set_title("Silhouette plot for Birch")

ax3.set_xlabel("Silhouette coefficient values")

ax3.set_ylabel("Cluster labels")

ax3.axvline(x=silhouette_avg3, color="red", linestyle="--")

ax3.set_yticks([]) # Clear the yaxis labels / ticks

ax3.set_xticks([-0.1, 0, 0.2, 0.4, 0.6, 0.8, 1])

ax4.set_xlim([-0.1, 1])

ax4.set_ylim([0, len(X) + (n_clusters4 + 1) * 10])

y_lower = 10

for i in range(min(cluster_labels4),max(cluster_labels4)+1):
    ith_cluster_silhouette_values = sample_silhouette_values4[cluster_labels4 == i]
    ith_cluster_silhouette_values.sort()
    size_cluster_i = ith_cluster_silhouette_values.shape[0]
    y_upper = y_lower + size_cluster_i
    color = cm.nipy_spectral(float(i) / n_clusters4)
    ax4.fill_betweenx(
        np.arange(y_lower, y_upper),
        0,
        ith_cluster_silhouette_values,
        facecolor=color,
        edgecolor=color,
        alpha=0.7,
    )
    ax4.text(-0.05, y_lower + 0.5 * size_cluster_i, str(i))
    y_lower = y_upper + 10 # 10 for the 0 samples

ax4.set_title("Silhouette plot for Optics")

ax4.set_xlabel("Silhouette coefficient values")

ax4.set_ylabel("Cluster labels")

ax4.axvline(x=silhouette_avg4, color="red", linestyle="--")

ax4.set_yticks([]) # Clear the yaxis labels / ticks

ax4.set_xticks([-0.1, 0, 0.2, 0.4, 0.6, 0.8, 1])

ax5.set_xlim([-0.1, 1])

ax5.set_ylim([0, len(X) + (n_clusters5 + 1) * 10])

y_lower = 10

for i in range(min(cluster_labels5),max(cluster_labels5)+1):
    ith_cluster_silhouette_values = sample_silhouette_values5[cluster_labels5 == i]
    ith_cluster_silhouette_values.sort()
    size_cluster_i = ith_cluster_silhouette_values.shape[0]
    y_upper = y_lower + size_cluster_i
    color = cm.nipy_spectral(float(i) / n_clusters5)
    ax5.fill_betweenx(
        np.arange(y_lower, y_upper),
        0,
        ith_cluster_silhouette_values,
        facecolor=color,
        edgecolor=color,
        alpha=0.7,
    )
    ax5.text(-0.05, y_lower + 0.5 * size_cluster_i, str(i))
    y_lower = y_upper + 10 # 10 for the 0 samples

ax5.set_title("Silhouette plot for DBScan")

ax5.set_xlabel("Silhouette coefficient values")

ax5.set_ylabel("Cluster labels")

ax5.axvline(x=silhouette_avg5, color="red", linestyle="--")

ax5.set_yticks([]) # Clear the yaxis labels / ticks

ax5.set_xticks([-0.1, 0, 0.2, 0.4, 0.6, 0.8, 1])

Since I need to select genres for the next part of the exercise, I first need to unpack the artist_genres column, which holds a list that is stored as text. I parse it back into a list, expand it to one row per song and genre, and count the rows per genre. This shows the unique genres and how often each one appears.

finalDFf = pd.merge(finalDF, metadata, left_index=True, right_index=True)

finalDFf['artist_genres'] = finalDFf['artist_genres'].apply(lambda x: ast.literal_eval(x))

genres = pd.DataFrame(finalDFf['artist_genres'].explode())

finalDFgen = pd.merge(finalDF, genres, left_index=True, right_index=True)

finalDFgen = finalDFgen.drop_duplicates()

genrestbl = pd.DataFrame(finalDFgen.groupby('artist_genres')['artist_genres'].count()).reset_index(names="genre").sort_values(['artist_genres'], ascending=False)

print(genrestbl.head(100).to_string())
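The parse-and-explode step is easier to follow on a tiny example. The sketch below uses a made-up three-row table (the track ids `t1` to `t3` and their genres are invented) and `value_counts` as a shorter stand-in for the groupby count used above.

```python
import ast
import pandas as pd

# Toy metadata; the genre lists are stored as text, as in the CSV.
demo = pd.DataFrame(
    {"artist_genres": ["['pop', 'dance pop']", "['pop']", "['rock', 'pop']"]},
    index=["t1", "t2", "t3"],
)

# Turn each string back into a real Python list.
demo["artist_genres"] = demo["artist_genres"].apply(ast.literal_eval)

# One row per (track, genre) pair; the track id is repeated in the index.
exploded = demo["artist_genres"].explode()

# Number of tracks carrying each genre.
genre_counts = exploded.value_counts()

print(exploded)
print(genre_counts)
```

Because the index is repeated after `explode`, merging the result back onto the per-song table gives one row per song and genre, which is why a song with several genres can appear more than once in the later plots.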

List of genres selected for the next section

selectedgenres = ['rock','reggaeton','house','hip pop','electro house','trap latino', 'punk', 'nu metal', 'pop dance']

filtered = finalDFgen[finalDFgen['artist_genres'].isin(selectedgenres)]

fig, [ax1, ax2, ax3, ax4, ax5] = plt.subplots(5,1, figsize=(10,20))

sns.scatterplot(data=filtered, x="0", y="1", hue="clAggCl", style="artist_genres",s = 200, alpha = 1, palette="Set3", ax=ax1)

sns.scatterplot(data=filtered, x="0", y="1", hue="clKMeans", style="artist_genres",s = 200, alpha = 1, palette="Set3", ax=ax2)

sns.scatterplot(data=filtered, x="0", y="1", hue="clBirch", style="artist_genres",s = 200, alpha = 1, palette="Set3", ax=ax3)

sns.scatterplot(data=filtered, x="0", y="1", hue="clOptics", style="artist_genres",s = 200, alpha = 1, palette="Set3", ax=ax4)

sns.scatterplot(data=filtered, x="0", y="1", hue="clDbscan", style="artist_genres",s = 200, alpha = 1, palette="Set3", ax=ax5)

ax1.set_title("Agg. Clustering")

ax2.set_title("KMeans")

ax3.set_title("Birch")

ax4.set_title("Optics")

ax5.set_title("DBScan")

ax1.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax2.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax3.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax4.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax5.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

Heatmap between genre and clusters

artistheatAggCl = pd.DataFrame(filtered.groupby(['artist_genres','clAggCl'])['artist_genres'].count()).reset_index(names=["genre","cluster"])

artistheatAggCl = artistheatAggCl.pivot(index="genre", columns="cluster", values="artist_genres")

artistheatKMeans = pd.DataFrame(filtered.groupby(['artist_genres','clKMeans'])['artist_genres'].count()).reset_index(names=["genre","cluster"])

artistheatKMeans = artistheatKMeans.pivot(index="genre", columns="cluster", values="artist_genres")

artistheatBirch = pd.DataFrame(filtered.groupby(['artist_genres','clBirch'])['artist_genres'].count()).reset_index(names=["genre","cluster"])

artistheatBirch = artistheatBirch.pivot(index="genre", columns="cluster", values="artist_genres")

artistheatOptics = pd.DataFrame(filtered.groupby(['artist_genres','clOptics'])['artist_genres'].count()).reset_index(names=["genre","cluster"])

artistheatOptics = artistheatOptics.pivot(index="genre", columns="cluster", values="artist_genres")

artistheatDbscan = pd.DataFrame(filtered.groupby(['artist_genres','clDbscan'])['artist_genres'].count()).reset_index(names=["genre","cluster"])

artistheatDbscan = artistheatDbscan.pivot(index="genre", columns="cluster", values="artist_genres")

fig, axes = plt.subplots(3,2, figsize=(20,20))

ax1, ax2, ax3, ax4, ax5, ax6 = axes.flatten()

sns.heatmap(artistheatAggCl, cmap="YlOrBr", ax=ax1)

sns.heatmap(artistheatKMeans, cmap="YlOrBr", ax=ax2)

sns.heatmap(artistheatBirch, cmap="YlOrBr", ax=ax3)

sns.heatmap(artistheatOptics, cmap="YlOrBr", ax=ax4)

sns.heatmap(artistheatDbscan, cmap="YlOrBr", ax=ax5)

ax1.set_title("Agg. Clustering")

ax2.set_title("KMeans")

ax3.set_title("Birch")

ax4.set_title("Optics")

ax5.set_title("DBScan")
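The genre-by-cluster count tables above are built with a groupby followed by a pivot, which leaves NaN wherever a genre never lands in a cluster. As an alternative sketch, `pd.crosstab` produces the same kind of table with zeros in those cells, and `normalize="index"` turns counts into row shares so that genres with very different numbers of songs can be compared. The small table below is invented for illustration; only the column names mirror the article.

```python
import pandas as pd

# Toy stand-in for the filtered table: one row per song and genre.
demo = pd.DataFrame({
    "artist_genres": ["rock", "rock", "house", "house", "house"],
    "clKMeans": [0, 1, 1, 1, 0],
})

# Raw counts of songs per genre and cluster (missing pairs become 0).
counts = pd.crosstab(demo["artist_genres"], demo["clKMeans"])

# Share of each genre's songs that falls in each cluster (rows sum to 1).
shares = pd.crosstab(demo["artist_genres"], demo["clKMeans"], normalize="index")

print(counts)
print(shares.round(2))
```

Either table can be passed to `sns.heatmap` in the same way as the pivoted frames.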

It is possible to do the same exploration by artist instead

artisttbl = pd.DataFrame(finalDFf.groupby('artist_name')['artist_name'].count()).reset_index(names="artist").sort_values(['artist_name'], ascending=False)

print(artisttbl.head(100).to_string())

I selected the artists to explore

selectedartists = ['Jennifer Lopez','50 Cent','Avril Lavigne','Ariana Grande','David Guetta','Adele','Amy Winehouse']

filtered = finalDFf[finalDFf['artist_name'].isin(selectedartists)]

fig, [ax1, ax2, ax3, ax4, ax5] = plt.subplots(5,1, figsize=(10,20))

sns.scatterplot(data=filtered, x="0", y="1", hue="clAggCl", style="artist_name",s = 200, alpha = 1, palette="Set3", ax=ax1)

sns.scatterplot(data=filtered, x="0", y="1", hue="clKMeans", style="artist_name",s = 200, alpha = 1, palette="Set3", ax=ax2)

sns.scatterplot(data=filtered, x="0", y="1", hue="clBirch", style="artist_name",s = 200, alpha = 1, palette="Set3", ax=ax3)

sns.scatterplot(data=filtered, x="0", y="1", hue="clOptics", style="artist_name",s = 200, alpha = 1, palette="Set3", ax=ax4)

sns.scatterplot(data=filtered, x="0", y="1", hue="clDbscan", style="artist_name",s = 200, alpha = 1, palette="Set3", ax=ax5)

ax1.set_title("Agg. Clustering")

ax2.set_title("KMeans")

ax3.set_title("Birch")

ax4.set_title("Optics")

ax5.set_title("DBScan")

ax1.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax2.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax3.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax4.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

ax5.legend(bbox_to_anchor=(1.35, 1), borderaxespad=0)

Heatmap between artist and clusters

artistheatAggCl = pd.DataFrame(filtered.groupby(['artist_name','clAggCl'])['artist_name'].count()).reset_index(names=["artist","cluster"])

artistheatAggCl = artistheatAggCl.pivot(index="artist", columns="cluster", values="artist_name")

artistheatKMeans = pd.DataFrame(filtered.groupby(['artist_name','clKMeans'])['artist_name'].count()).reset_index(names=["artist","cluster"])

artistheatKMeans = artistheatKMeans.pivot(index="artist", columns="cluster", values="artist_name")

artistheatBirch = pd.DataFrame(filtered.groupby(['artist_name','clBirch'])['artist_name'].count()).reset_index(names=["artist","cluster"])

artistheatBirch = artistheatBirch.pivot(index="artist", columns="cluster", values="artist_name")

artistheatOptics = pd.DataFrame(filtered.groupby(['artist_name','clOptics'])['artist_name'].count()).reset_index(names=["artist","cluster"])

artistheatOptics = artistheatOptics.pivot(index="artist", columns="cluster", values="artist_name")

artistheatDbscan = pd.DataFrame(filtered.groupby(['artist_name','clDbscan'])['artist_name'].count()).reset_index(names=["artist","cluster"])

artistheatDbscan = artistheatDbscan.pivot(index="artist", columns="cluster", values="artist_name")

fig, axes = plt.subplots(3,2, figsize=(20,20))

ax1, ax2, ax3, ax4, ax5, ax6 = axes.flatten()

sns.heatmap(artistheatAggCl, cmap="YlOrBr", ax=ax1)

sns.heatmap(artistheatKMeans, cmap="YlOrBr", ax=ax2)

sns.heatmap(artistheatBirch, cmap="YlOrBr", ax=ax3)

sns.heatmap(artistheatOptics, cmap="YlOrBr", ax=ax4)

sns.heatmap(artistheatDbscan, cmap="YlOrBr", ax=ax5)

ax1.set_title("Agg. Clustering")

ax2.set_title("KMeans")

ax3.set_title("Birch")

ax4.set_title("Optics")

ax5.set_title("DBScan")
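The scatterplots and heatmaps answer the title question visually. If a single number is wanted as well, one option (not used in the analysis above) is the adjusted Rand index from scikit-learn, which compares two labelings of the same items and does not care how the groups are named. The sketch below uses four invented songs: a score of 1 means the clusters reproduce the genres exactly, and values near or below 0 mean the agreement is no better than chance.

```python
from sklearn.metrics import adjusted_rand_score

# Toy labels for four songs (invented for illustration).
genres = ["rock", "rock", "house", "house"]

# Clusters that separate the two genres perfectly (label names differ).
clusters_match = [1, 1, 0, 0]
# Clusters that split every genre in half.
clusters_mixed = [0, 1, 0, 1]

ari_match = adjusted_rand_score(genres, clusters_match)
ari_mixed = adjusted_rand_score(genres, clusters_mixed)

print("matching clusters:", ari_match)
print("mixed clusters:", ari_mixed)
```

For the playlist data this would need one label per song, so a song with several genres would first have to be reduced to a single genre, which is a modelling choice of its own.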

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.