Flash Attention: Underlying Principles Explained

Last Updated on December 21, 2023 by Editorial Team

Author(s): Florian

Originally published on Towards AI.

Flash Attention, proposed in 2022, is a fast and exact technique for accelerating attention in Transformer models. Because it is IO-aware, explicitly accounting for reads and writes between the levels of GPU memory, FlashAttention runs 2–4 times faster than the standard attention implemented in PyTorch while requiring only 5%–20% of the memory.

This article explains the underlying principles of Flash Attention, showing how it speeds up computation and saves memory without compromising the accuracy of attention.
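The "without compromising accuracy" claim can be made concrete with a small sketch. The code below is not the author's implementation or the FlashAttention CUDA kernel; it is a pure-Python illustration, assuming single-head attention with no masking or dropout, of the tiling plus online-softmax idea from the FlashAttention paper. Keys and values are visited block by block, and each query row keeps only a running maximum, a running softmax denominator, and a running output, so a full row of scores is never held at once. The names `standard_attention`, `tiled_attention`, and `block_size` are hypothetical.

```python
import math


def standard_attention(Q, K, V):
    """Reference softmax(Q K^T / sqrt(d)) V that builds each score row in full."""
    scale = 1.0 / math.sqrt(len(Q[0]))
    out = []
    for q in Q:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in K]
        m = max(scores)  # subtract the row max for numerical stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w * v[j] for w, v in zip(weights, V)) / total
                    for j in range(len(V[0]))])
    return out


def tiled_attention(Q, K, V, block_size=2):
    """Same result, but K and V are processed one block at a time.
    Per query row we keep only: running max, running denominator,
    and a running unnormalized output (online softmax)."""
    scale = 1.0 / math.sqrt(len(Q[0]))
    out = []
    for q in Q:
        row_max = -math.inf
        denom = 0.0
        acc = [0.0] * len(V[0])
        for start in range(0, len(K), block_size):
            K_blk = K[start:start + block_size]
            V_blk = V[start:start + block_size]
            s_blk = [scale * sum(a * b for a, b in zip(q, k)) for k in K_blk]
            new_max = max(row_max, max(s_blk))
            # exp(old_max - new_max) rescales statistics computed under the old max
            correction = math.exp(row_max - new_max)
            p_blk = [math.exp(s - new_max) for s in s_blk]
            denom = denom * correction + sum(p_blk)
            acc = [a * correction + sum(p * v[j] for p, v in zip(p_blk, V_blk))
                   for j, a in enumerate(acc)]
            row_max = new_max
        out.append([a / denom for a in acc])  # normalize once at the end
    return out


# Toy inputs: 3 queries, 5 keys/values, head dimension 2
Q = [[0.1, 0.2], [0.3, -0.1], [0.0, 0.5]]
K = [[0.2, 0.1], [-0.3, 0.4], [0.5, 0.5], [0.1, -0.2], [0.0, 0.3]]
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [2.0, -1.0], [0.3, 0.7]]

ref = standard_attention(Q, K, V)
tiled = tiled_attention(Q, K, V, block_size=2)
max_diff = max(abs(a - b) for r, t in zip(ref, tiled) for a, b in zip(r, t))
print(max_diff < 1e-12)  # True: results agree up to floating-point rounding
```

The rescaling by `exp(old_max - new_max)` is what makes the blockwise result exact rather than approximate: earlier partial sums are corrected whenever a larger score appears in a later block.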

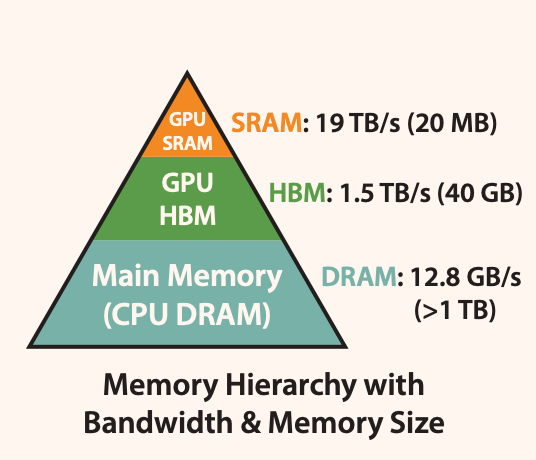

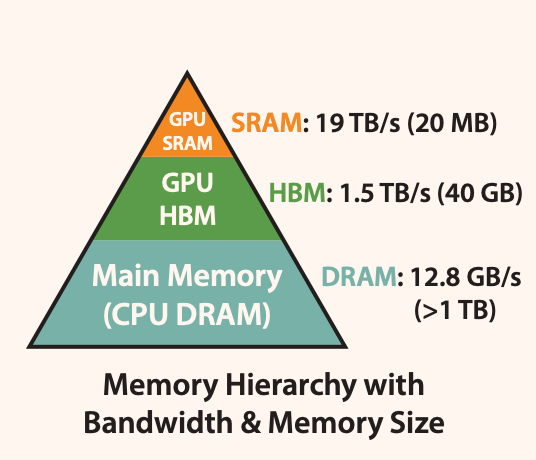

As shown in Figure 1, the memory of a GPU consists of multiple memory modules with different sizes and read/write speeds. Smaller memory modules have faster read/write speeds.

Figure 1: GPU Memory Hierarchy. Source: [1]

On the A100 GPU, SRAM is distributed across 108 streaming multiprocessors (SMs), each with 192KB. This adds up to 192KB × 108 ≈ 20MB. High Bandwidth Memory (HBM), commonly referred to as video memory or GPU memory, has a capacity of either 40GB or 80GB.
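The capacity arithmetic above can be checked directly. This sketch takes the quoted A100 figures as given and treats 1 KB as 1024 bytes (an assumption about the units); the 4096 × 4096 FP16 attention score matrix is a hypothetical example chosen only to show scale.

```python
# Figures quoted in the text for the A100 (taken as given, not measured here)
NUM_SMS = 108          # streaming multiprocessors
SRAM_PER_SM_KB = 192   # on-chip SRAM per SM, in KB (1 KB = 1024 bytes assumed)
HBM_GB_OPTIONS = (40, 80)

total_sram_kb = NUM_SMS * SRAM_PER_SM_KB   # 20,736 KB
total_sram_mb = total_sram_kb / 1024       # 20.25 MB, the "20MB" in the text
print(f"Total SRAM: {total_sram_kb:,} KB = {total_sram_mb:.2f} MB")

# How many times larger HBM is than all SRAM combined
for hbm_gb in HBM_GB_OPTIONS:
    print(f"{hbm_gb}GB HBM is about {hbm_gb * 1024 / total_sram_mb:,.0f}x total SRAM")

# For scale: a hypothetical 4096 x 4096 attention score matrix in FP16 (2 bytes)
n = 4096
score_bytes = n * n * 2
print(f"Score matrix: {score_bytes / 2**20:.0f} MB, "
      f"fits in total SRAM: {score_bytes <= total_sram_kb * 1024}")
```

Even summed over all 108 SMs, SRAM cannot hold one such matrix, and each SM only has its own 192KB slice, which is why an SRAM-friendly attention kernel has to work on tiles.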

The read/write bandwidth of SRAM is 19TB/s, while HBM’s read/write bandwidth is only 1.5TB/s, less than 1/10th of SRAM’s.
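To put the bandwidth gap in concrete terms, the sketch below computes an idealized lower bound on the time to stream a fixed amount of data once at each quoted bandwidth (bytes divided by peak bandwidth; real transfers do not reach peak). The 4096 × 4096 FP16 matrix is a hypothetical workload, and `transfer_time_us` is a hypothetical helper.

```python
HBM_BW = 1.5e12   # bytes/s, HBM figure quoted in the text
SRAM_BW = 19e12   # bytes/s, SRAM figure quoted in the text


def transfer_time_us(num_bytes, bandwidth):
    """Idealized lower bound in microseconds: bytes moved / peak bandwidth."""
    return num_bytes / bandwidth * 1e6


# Hypothetical workload: one 4096 x 4096 score matrix in FP16 (2 bytes/element)
n = 4096
num_bytes = n * n * 2
hbm_us = transfer_time_us(num_bytes, HBM_BW)
sram_us = transfer_time_us(num_bytes, SRAM_BW)
print(f"HBM:  {hbm_us:.1f} us")
print(f"SRAM: {sram_us:.2f} us")
print(f"HBM bandwidth as a fraction of SRAM's: {HBM_BW / SRAM_BW:.3f}")
```

For this 32MB matrix the bound is roughly 22 µs from HBM versus under 2 µs from SRAM, and standard attention moves a matrix of this size through HBM more than once.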

Because computational speed has improved faster than memory speed, operations are increasingly bottlenecked by memory (HBM) access. Therefore, reducing the number of read/write operations to HBM and effectively utilizing the faster SRAM…
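A rough roofline-style estimate shows why standard attention ends up limited by HBM access. The sketch assumes the three-step standard procedure described in the FlashAttention paper, where the N × N scores S and probabilities P are each written to HBM and read back. The FLOP counts, the guess of 5 FLOPs per softmax element, and the 312 TFLOP/s peak (NVIDIA's published dense FP16 Tensor Core figure for the A100) are assumptions; `step_intensities` is a hypothetical helper. A step whose arithmetic intensity (FLOPs per byte of HBM traffic) is below peak compute divided by bandwidth is memory-bound.

```python
# Assumed hardware figures: HBM bandwidth from the text; 312 TFLOP/s is
# NVIDIA's published dense FP16 Tensor Core peak for the A100.
PEAK_FLOPS = 312e12
HBM_BW = 1.5e12
RIDGE = PEAK_FLOPS / HBM_BW  # FLOPs/byte needed to be compute-bound (208)

BYTES = 2  # FP16 element size


def step_intensities(n, d, softmax_flops_per_elem=5):
    """Rough FLOPs / HBM bytes for each step of standard attention.
    Every step reads or writes an N x N matrix through HBM."""
    steps = {
        # read Q and K (N x d each), write S (N x N)
        "S = Q K^T": (2 * n * n * d, (2 * n * d + n * n) * BYTES),
        # read S, write P (both N x N); FLOPs per element is a rough guess
        "P = softmax(S)": (softmax_flops_per_elem * n * n, 2 * n * n * BYTES),
        # read P (N x N) and V (N x d), write O (N x d)
        "O = P V": (2 * n * n * d, (n * n + 2 * n * d) * BYTES),
    }
    return {name: flops / nbytes for name, (flops, nbytes) in steps.items()}


for name, ai in step_intensities(n=4096, d=64).items():
    bound = "memory-bound" if ai < RIDGE else "compute-bound"
    print(f"{name:15s} {ai:7.2f} FLOPs/byte -> {bound}")
```

With N = 4096 and d = 64, the two matrix multiplications come out around 62 FLOPs per byte and the softmax step at 1.25, all well below the roughly 208 FLOPs per byte needed to keep the arithmetic units busy, so time goes to moving the N × N matrices rather than computing on them.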

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.