Finetuning Qwen3-VL-8B Vision-Language Model: Advanced Knowledge Enhancement Using Python and Unsloth

Author(s): Krishan Walia Originally published on Towards AI. Guide to fine-tuning Qwen3-VL-8B with Python, Unsloth, and open datasets — making powerful Vision AI accessible for every developer. With Qwen3-VL-8B (Qwen Vision-Language), we will unlock the ability to create solutions tailored to our …

Enhancing RAG: The Critical Role of Context Sufficiency

Author(s): Alok Ranjan Singh Originally published on Towards AI. RAG (Retrieval-Augmented Generation) is one of the most exciting ways to make language models more knowledgeable, but relevance alone isn’t enough. Many developers and researchers assume that if a document is relevant, the …

Self-Service Data Analytics in 2025: Empowering Teams with Automated Insights

Author(s): Aasir Waseer Originally published on Towards AI. Picture this: An executive needs a quarterly sales report. Instead of waiting days for the IT team to pull data, format spreadsheets, and schedule …

GitHub Actions or Jenkins? How DevOps Pipelines Evolved by 2025

Author(s): Meghana Thota Originally published on Towards AI. “A pipeline is code — but the platform around it can make or break your developer experience.” If you’ve ever wrestled with plugin breakage, spiraling CI costs, or YAML sprawl across repos, you know: …

The Multiplication Law of Wealth: From Compound Interest Mathematics to the Reinforcement Learning Essence of Human Behavior

Author(s): Shenggang Li Originally published on Towards AI. Wealth Inequality Isn’t an Accident: It Follows Natural Laws. This …

A Practical Walkthrough of Min-Max Scaling

Author(s): Amna Sabahat Originally published on Towards AI. In our previous discussion, we established why normalization is crucial for achieving success in machine learning. We saw how unscaled data can severely impact both distance-based and gradient-based algorithms. Now, let’s get practical: How …
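For reference, the standard min-max formula is x' = (x - min) / (max - min), optionally stretched to a target range [a, b] as x' = a + (x - min)(b - a) / (max - min). Below is a minimal pure-Python sketch, assuming a single numeric feature held in a list. The function names `fit_min_max` and `transform_min_max` are hypothetical, and this is not the author's code. scikit-learn's `MinMaxScaler` (with its `feature_range` parameter) implements the same idea for arrays.

```python
from typing import List, Sequence, Tuple


def fit_min_max(values: Sequence[float]) -> Tuple[float, float]:
    """Learn the minimum and maximum of a feature (fit on training data only)."""
    return min(values), max(values)


def transform_min_max(
    values: Sequence[float],
    lo: float,
    hi: float,
    feature_range: Tuple[float, float] = (0.0, 1.0),
) -> List[float]:
    """Map values linearly so that lo -> a and hi -> b, where (a, b) = feature_range."""
    a, b = feature_range
    span = hi - lo
    if span == 0:
        # Constant feature: avoid dividing by zero by mapping everything to the lower bound.
        return [a for _ in values]
    return [a + (v - lo) * (b - a) / span for v in values]


# Hypothetical example: ages of four customers.
ages = [18, 30, 42, 66]
lo, hi = fit_min_max(ages)
print(transform_min_max(ages, lo, hi))            # [0.0, 0.25, 0.5, 1.0]
print(transform_min_max(ages, lo, hi, (-1, 1)))   # [-1.0, -0.5, 0.0, 1.0]
```

Keeping `fit` and `transform` separate mirrors how scalers are normally used: the (lo, hi) pair is learned once from training data and then reused unchanged for validation, test, and production inputs.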

RLAD: How AI Learns to Think Strategically Before Solving Hard Problems

Author(s): MKWriteshere Originally published on Towards AI. A new training method teaches language models to generate reasoning strategies first, improving accuracy by 44% on complex math problems. Large language models struggle with a specific problem: they optimize for generating longer solutions instead …

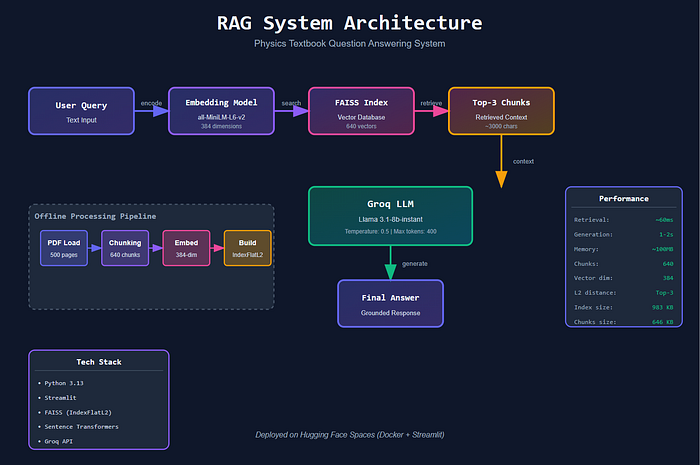

Building and Deploying a RAG Application: From PDF Processing to Production

Author(s): Ashutosh Malgaonkar Originally published on Towards AI. Overview: I built a Retrieval-Augmented Generation (RAG) system that answers physics questions by retrieving relevant passages from an AP Physics textbook and generating responses using an LLM. The application processes 500 pages of Electricity …

Data Normalization in ML

Author(s): Amna Sabahat Originally published on Towards AI. In the realm of machine learning, data preprocessing is not just a preliminary step; it’s the foundation upon which successful models are built. Among all preprocessing techniques, normalization stands out as one of the …

The RAG Playbook: A Data Science Guide to Document Chunking

Author(s): The Bot Group Originally published on Towards AI. Large Language Models are only as smart as the data we feed them. This is the fundamental challenge at the heart of modern …

How to Surgically Edit LLMs Without Retraining in Data Science

Author(s): The Bot Group Originally published on Towards AI. Your large language model is a marvel of engineering, trained on vast datasets at an enormous cost. It’s powerful, fluent, and… wrong. It …

The Model That Broke All the Rules in Data Science

Author(s): The Bot Group Originally published on Towards AI. For years, the world of sequence modeling was dominated by a single, stubborn idea: to understand language, a model had to process it …

The DINOv3 Playbook for Computer Vision Data Science

Author(s): The Bot Group Originally published on Towards AI. Self-supervised learning (SSL) has long been the holy grail of machine learning. The promise is simple yet transformative: train powerful foundation models on massive, unlabeled …

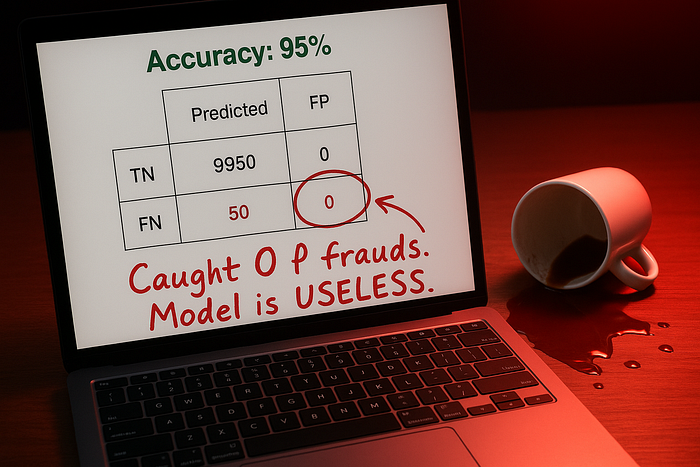

Your Model Has 95% Accuracy. It’s Completely Useless.

Author(s): Rohan Mistry Originally published on Towards AI. You Built a Model That Predicts Everything as “No.” It Has 95% Accuracy. You Just Shipped Garbage. Your boss: “How’s the fraud detection model?” You: “95% accuracy! Ready to deploy!” Your boss: “Great! Ship it.” …
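The arithmetic behind that headline is easy to reproduce. The sketch below assumes hypothetical data with 50 fraudulent transactions out of 1,000 (5%) and a "model" that always predicts "No" (encoded as 0). Accuracy comes out at 95% while recall on the fraud class is zero. The `evaluate` helper is illustrative, not code from the article. It reports precision as 0.0 when there are no positive predictions, a convention chosen here for simplicity.

```python
from typing import Dict, Sequence


def evaluate(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> Dict[str, float]:
    """Compute accuracy, precision, and recall for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)  # frauds caught
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false alarms
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # frauds missed
    tn = len(pairs) - tp - fp - fn                                    # correct "No" answers
    accuracy = (tp + tn) / len(pairs)
    # Convention used here: return 0.0 when a denominator is zero.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall}


# Hypothetical fraud data: 50 frauds (1) among 1,000 transactions.
y_true = [1] * 50 + [0] * 950
# A "model" that answers "No" (0) for every transaction.
y_pred = [0] * len(y_true)

print(evaluate(y_true, y_pred))
# {'accuracy': 0.95, 'precision': 0.0, 'recall': 0.0}
```

On imbalanced data, accuracy mostly measures how common the majority class is. Recall on the positive class exposes that this model never catches a single fraud.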

Data Leakage: Your 99% Accuracy Model is a Lie

Author(s): Rohan Mistry Originally published on Towards AI. Training Accuracy: 99%. Production Accuracy: 53%. Welcome to Data Leakage Hell. You spent 3 months building the perfect model. The article discusses the challenges of data leakage in machine learning, where …
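The excerpt does not show the article's own examples. The sketch below is an assumption that illustrates one common source of leakage: computing preprocessing statistics (here, the min and max used for scaling) on the full dataset before splitting, which lets test-set information shape the training features. It uses pure Python and hypothetical helper names (`split_rows`, `leaky_pipeline`, `clean_pipeline`). In scikit-learn, wrapping the scaler and the model in a `Pipeline` enforces the same split-then-fit discipline.

```python
import random
from typing import List, Sequence, Tuple


def split_rows(rows: Sequence[float], test_fraction: float = 0.2, seed: int = 0) -> Tuple[List[float], List[float]]:
    """Shuffle once with a fixed seed, then hold out the last test_fraction of rows."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    cut = len(shuffled) - n_test
    return shuffled[:cut], shuffled[cut:]


def scale_with(values: Sequence[float], lo: float, hi: float) -> List[float]:
    """Min-max scale values using statistics supplied by the caller."""
    span = (hi - lo) or 1.0  # guard against a constant feature
    return [(v - lo) / span for v in values]


def leaky_pipeline(rows: Sequence[float]):
    # WRONG: statistics are computed from every row, including future test rows.
    lo, hi = min(rows), max(rows)
    train, test = split_rows(rows)
    return scale_with(train, lo, hi), scale_with(test, lo, hi), (lo, hi)


def clean_pipeline(rows: Sequence[float]):
    # RIGHT: split first, then learn statistics from the training rows only.
    train, test = split_rows(rows)
    lo, hi = min(train), max(train)
    return scale_with(train, lo, hi), scale_with(test, lo, hi), (lo, hi)


rows = [float(x) for x in range(10)]
print("leaky stats:", leaky_pipeline(rows)[2])
print("clean stats:", clean_pipeline(rows)[2])
```

In the clean version, scaled test values may fall outside [0, 1]. That is expected: it reflects data the model has genuinely not seen, which is what production inputs will look like.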