Your Camera Doesn’t Just See — It Explains

Author(s): Lindo St. Angel Originally published on Towards AI. Image by the author (unknown person was me out of the scene). Smart-home cameras are noisy storytellers. A leaf moves, a light flickers, and your phone buzzes again. What you get is motion …

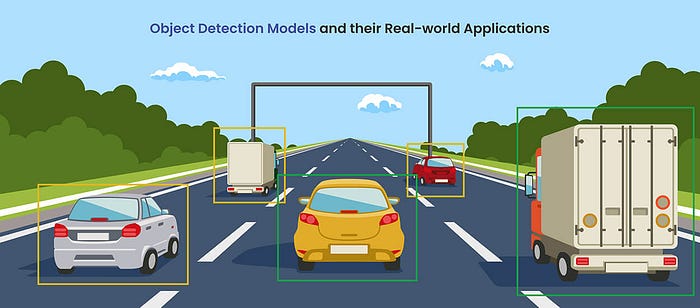

Breaking Down YOLO: How Real Time Object Detection Works Step by Step

Author(s): Abinaya Subramaniam Originally published on Towards AI. Object detection is one of the most interesting areas of computer vision. It is the process of identifying and locating objects in an image. Popular examples include detecting cars on a road, identifying products …

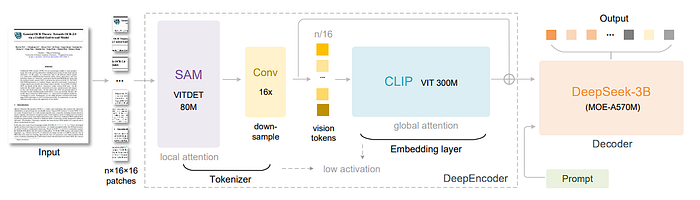

DeepSeek-OCR: Contexts Optical Compression (Paper Review)

Author(s): Hira Ahmad Originally published on Towards AI. The Shift from Recognition to Understanding. From recognizing letters to reasoning through meaning, DeepSeek-OCR redefines what it means for machines to read. DeepSeek-OCR revolutionizes optical character recognition by integrating comprehension and contextual reasoning …

The Evolving Vision: From Block World to Intelligent Perception

Author(s): Hira Ahmad Originally published on Towards AI. In the vast history of artificial intelligence, vision has remained one of its most profound and persistent pursuits: not merely to capture what humans see, …

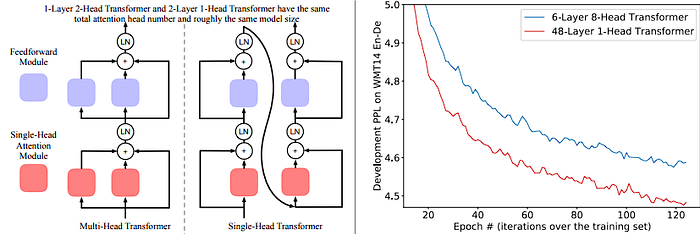

When Transformers Multiply Their Heads: What Increasing Multi-Head Attention Really Does

Author(s): Hira Ahmad Originally published on Towards AI. Transformers have become the backbone of many AI breakthroughs in NLP, vision, speech, etc. A central component is multi-head self-attention: the notion that …

The DINOv3 Playbook for Computer Vision Data Science

Author(s): The Bot Group Originally published on Towards AI. Self-supervised learning (SSL) has long been the holy grail of machine learning. The promise is simple yet transformative: train powerful foundation models on massive, unlabeled …

Multimodal AI Is Just Tensor Algebra: The Linear Algebra Truth Behind Vision-Language Models

Author(s): DrSwarnenduAI Originally published on Towards AI. The Mathematical Symphony That Powers Billion-Dollar AI Systems. After reverse-engineering the mathematical foundations of GPT-4V, DALL-E, and Claude 3, I’ve discovered something profound: these systems that seem to “understand” images and text are performing a …

MAP in Object Detection: I Bet You’ll Remember This Forever!

Author(s): Debasish Das Originally published on Towards AI. Hey there! 👋 Ever trained an object detection model and wondered, “Is this thing actually any good?” Welcome to the club! If terms like MAP, AP, and IoU make your brain go “404 error,” …

DoodlAI- Build a Real-Time Doodle Recognition AI with CNN

Author(s): Abinaya Subramaniam Originally published on Towards AI. Have you ever wondered if a computer could recognize your doodles of cats, trees, cars, or even clocks, as you draw them? That’s exactly what DoodlAI does. In this blog, I’ll take you step …

ARGUS: Vision-Centric Reasoning with Grounded Chain-of-Thought

Author(s): Yash Thube Originally published on Towards AI. Existing Multimodal LLMs, primarily driven by advancements in large language models (LLMs), often underperform when accurate visual perception and understanding of specific regions-of-interest (RoIs) are crucial for successful reasoning. Argus tackles this by proposing …

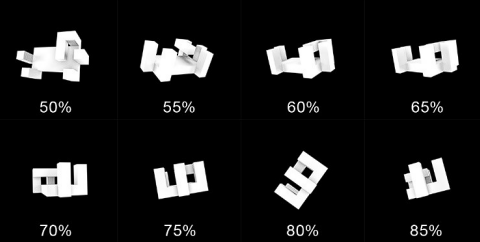

From Pixels to Understanding: A Better Way for AI to See

Author(s): Kaushik Rajan Originally published on Towards AI. How a new “denoising” technique is making on-device computer vision faster, smarter, and ready for your next app. Computer vision on mobile devices is a quiet miracle. It powers the face-unlock on your phone, …

“Building Vision Transformers from Scratch: A Comprehensive Guide”

Author(s): Ajay Kumar mahto Originally published on Towards AI. A Vision Transformer (ViT) is a deep learning model architecture that applies the Transformer framework, originally designed for natural language processing (NLP), to computer vision …

Harness DINOv2 Embeddings for Accurate Image Classification

Author(s): Lihi Gur Arie, PhD Originally published on Towards AI. Training a high-performing image classifier typically requires large amounts of labeled data. But what if you could …