Mixtral-8x7B + GPT-3 + LLAMA2 70B = The Winner

Author(s): Gao Dalie (高達烈) Originally published on Towards AI. While everyone’s focused on the release of Google Gemini, quietly in the background Mistral AI released its open-source Mixtral 8x7B model. So, in this article, we’re diving into some of the latest AI developments …

Inside FunSearch: Google DeepMind’s New LLM that is Able to Discover New Math and Computer Science Algorithms

Author(s): Jesus Rodriguez Originally published on Towards AI. I recently started an AI-focused educational newsletter that already has over 160,000 subscribers. TheSequence is a no-BS (meaning no hype, no news, etc.) ML-oriented newsletter that takes 5 minutes to read. …

Off the Mark: The Pitfalls of Metrics Gaming in AI Progress Races

Author(s): Tabrez Syed Originally published on Towards AI. One of my favorite cautionary tales of misaligned incentives is the urban legend of the Soviet nail factory. As the story goes, during a nail shortage in Lenin’s time, Soviet factories were given bonuses …

GNoME, An AI that Advances Humanity by 800 Years

Author(s): Ignacio de Gregorio Originally published on Towards AI. Scaling Material Discovery with AI. Rarely do we humans witness a technology that pushes our knowledge boundaries by months, or even years. Some would argue that ChatGPT has brought to us things …

A Layman’s Guide to Whether AI Could Really Kill Us All

Author(s): Chandrayan Gupta Originally published on Towards AI. Are reports of the demise of humankind greatly exaggerated? I have a dual degree in law and business management and my areas of speciality are mental health, writing tips, self-improvement, …

Learn AI Together — Towards AI Community Newsletter #5

Author(s): Towards AI Editorial Team Originally published on Towards AI. Good morning, AI enthusiasts! This week’s podcast episode is a must-listen and stands out as the best among all 24 episodes so far. Greg shares incredible insights relevant not only for entrepreneurs …

Make Any* LLM fit Any GPU in 10 Lines of Code

Author(s): Dr. Mandar Karhade, MD. PhD. Originally published on Towards AI. An ingenious way of running models larger than the VRAM of the GPU. It may be slow but it freaking works! Who has enough money to spend on a GPU with …

Driving in the AI Era: Will Fear of AI Change Cost Lives?

Author(s): Pranath Fernando | AI Consultant Originally published on Towards AI. When your preferences cause more deaths, it might be time to reflect. You know that, sadly, many people die every year from driving accidents. But do …

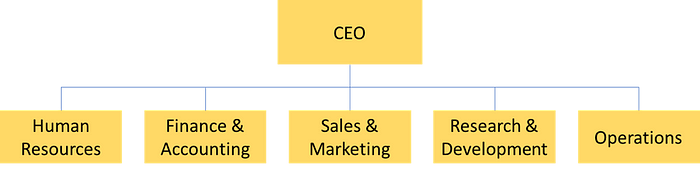

Succeed on LinkedIn with 2020 and 2021 top voice Greg Coquillo

Author(s): Louis Bouchard Originally published on Towards AI. Episode 24 of the What’s AI Podcast. I had a fascinating conversation with Greg Coquillo, a recognized LinkedIn top voice, senior product manager, and investor in AI startups. I surely took this opportunity to …

This AI newsletter is all you need #77

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI, by Louie. This week in AI, the news was dominated by new Large Language Model releases from Google (Gemini) and Mistral (8x7B). The highly divergent approach …

(New Approach🔥) LLMs + Knowledge Graph: Handling Large Documents and Data or any Industry — Advanced

Author(s): Vaibhawkhemka Originally published on Towards AI. Most real-world data resembles a knowledge graph. In this messy world, one will hardly find linear data …

How “Towards AI” detects AI-Generated Articles

Author(s): Ahmad Mustapha Originally published on Towards AI. The last time I submitted an article to the “Towards AI” publication, I was surprised by their reply. It said: Your blog has been flagged as containing AI-generated …

What We Know About Mixtral 8x7B: Mistral’s New Open Source LLM

Author(s): Jesus Rodriguez Originally published on Towards AI. I recently started an AI-focused educational newsletter that already has over 160,000 subscribers. TheSequence is a no-BS (meaning no hype, no news, etc.) ML-oriented newsletter that takes 5 minutes to read. …

Mistral AI (8x7B) Releases First Ever Open-Source Mixture of Experts (MoE) Model

Author(s): Dr. Mandar Karhade, MD. PhD. Originally published on Towards AI. Mistral continues their commitment to the Open Source World by releasing the first 56 billion parameter model (8 experts, nominally 7 billion parameters each) via a torrent! A few days ago, …

Why OpenHermes-2.5 Is So Much Better Than GPT-4 And LLama2 13B — Here The Result

Author(s): Gao Dalie (高達烈) Originally published on Towards AI. The AI news in the past 7 days has been insane, with so much happening in the world of AI. So, in this article, we’re diving into some of the latest AI developments …