Beyond “Looks Good to Me”: How to Quantify LLM Performance with Google’s GenAI Evaluation Service

Author(s): Jasleen Originally published on Towards AI. The Production Hurdle: The greatest challenge the industry faces today is taking a solution from demo to production, and the main reason is a lack of confidence in the results. The evaluation dataset and metrics that …

Understanding LLM Sampling: Top-K, Top-P, and Temperature

Author(s): Sai Bhargav Rallapalli Originally published on Towards AI. Mastering Creativity and Control with Temperature, Top-K, and Top-P: LLM sampling is how a model decides the next word to generate from a list of possibilities. Rather than simply picking the most likely …
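As a rough illustration of the three controls named in this teaser, here is a minimal Python sketch. It is not the article's own code. The function names `filter_candidates` and `sample_next_token`, the dict-of-logits input, and the order of operations (temperature, then top-k, then top-p) are assumptions made for this example; real libraries differ in the details.

```python
import math
import random


def filter_candidates(logits, temperature=1.0, top_k=None, top_p=None):
    """Return (token, probability) pairs that survive the filters, most likely first.

    `logits` is a hypothetical dict mapping each candidate token to its raw score.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")

    # Temperature: dividing by a value below 1 sharpens the distribution,
    # dividing by a value above 1 flattens it.
    scaled = {tok: score / temperature for tok, score in logits.items()}

    # Softmax, shifted by the maximum for numerical stability.
    peak = max(scaled.values())
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    ranked = sorted(
        ((tok, e / total) for tok, e in exps.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )

    # Top-k: keep only the k most likely tokens.
    if top_k is not None:
        ranked = ranked[:top_k]

    # Top-p: keep the smallest prefix whose cumulative probability reaches p.
    if top_p is not None:
        kept, cumulative = [], 0.0
        for tok, p in ranked:
            kept.append((tok, p))
            cumulative += p
            if cumulative >= top_p:
                break
        ranked = kept

    return ranked


def sample_next_token(logits, temperature=1.0, top_k=None, top_p=None, rng=random):
    """Draw one token from the filtered candidates, weighted by probability."""
    ranked = filter_candidates(logits, temperature, top_k, top_p)
    tokens = [tok for tok, _ in ranked]
    weights = [p for _, p in ranked]
    # random.choices accepts unnormalized weights, so no renormalization is needed.
    return rng.choices(tokens, weights=weights, k=1)[0]
```

Calling `sample_next_token({"cat": 2.0, "dog": 1.0, "fish": 0.1}, top_k=1)` always returns the single most likely token, which is greedy decoding expressed as a special case of sampling.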

Beyond pre-trained LLMs: Augmenting LLMs through vector databases to create a chatbot on organizational data

Author(s): Leapfrog Technology Originally published on Towards AI. In the ever-evolving realm of AI-driven applications, the power of Large Language Models (LLMs) like OpenAI’s GPT and Meta’s Llama2 cannot be overstated. In our previous article, we introduced you to the fascinating world …

17 Mistakes to Avoid When Using AI for SEO

Author(s): Shauvik Kumar Originally published on Towards AI. “A life spent making mistakes is more useful than a life spent doing nothing.” — George Bernard Shaw Mistakes are part of growth, especially with new technology. I’ve made nearly all of them myself. …

TAI #171: How is AI Actually Being Used? Frontier Ambitions Meet Real-World Adoption Data

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI, by Louie. This week, AI models continued to push the frontiers of capability, with both OpenAI and DeepMind achieving gold-medal-level results at the 2025 ICPC World …

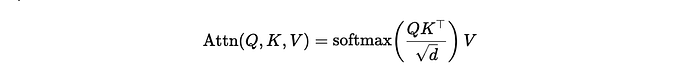

Attention = Soft k-NN

Author(s): Joseph Robinson, Ph.D. Originally published on Towards AI. Transformers = soft k-NN. One query asks, “Who’s like me?” Softmax votes, and the neighbors whisper back a weighted average. That’s an attention head; a soft, differentiable k-NN: measure similarity → turn scores …
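The "soft k-NN" reading of an attention head can be sketched in a few lines of plain Python. This is an illustrative example, not the article's code. The function name `soft_knn_attention` is hypothetical, and scaled dot-product similarity is assumed because it is the usual choice in transformers; the teaser does not specify the similarity measure.

```python
import math


def soft_knn_attention(query, keys, values):
    """Single-query attention viewed as a soft nearest-neighbor lookup.

    Returns (weights, output): one weight per neighbor and the
    weighted average of the neighbors' values.
    """
    d = len(query)

    # 1. Measure similarity between the query and every key
    #    (scaled dot product, an assumption of this sketch).
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]

    # 2. Softmax turns the scores into votes that sum to 1.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]

    # 3. The neighbors answer with a weighted average of their values.
    dim = len(values[0])
    output = [
        sum(w * value[j] for w, value in zip(weights, values))
        for j in range(dim)
    ]
    return weights, output
```

Unlike a hard k-NN, which picks a fixed set of neighbors, every key here receives a nonzero weight, which is what keeps the lookup differentiable.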

Mastering LoRA: A Gentle Path to Custom Large-Language Models

Author(s): Harshit Kandoi Originally published on Towards AI. Imagine owning something magnificent and state-of-the-art, beyond comparison. Think of an unbridled artist capable of preparing any of the world’s best cuisines. It bakes, roasts, sautés, and …

ATOKEN: A Unified Tokenizer for Vision Finally Solves AI’s Biggest Problem

Author(s): MKWriteshere Originally published on Towards AI. How Apple eliminated the need for separate visual AI systems with one tokenizer that handles all content types While competitors grabbed headlines with flashy AI demos, Apple’s researchers were quietly solving visual AI’s most fundamental …

Building an AI Debate Panel: Agents that Argue and Give a Final Conclusion

Author(s): Michalzarnecki Originally published on Towards AI. A single LLM prompt or a plain ReAct (reasoning and acting) agent often gives you a plausible answer – sometimes great, …

The ABC of Retrieval Augmented Generation (RAG) with Implementation— Part 1

Author(s): Shweta Originally published on Towards AI. This series of articles covers the basics of Retrieval Augmented Generation (RAG) and its different building blocks, with implementation …