Where LLMs Belong in Agentic Systems: Gating, Approval, and Human-in-the-Loop Design

Last Updated on February 19, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

This article closes a four-part series on designing agentic AI systems.

So far, we’ve focused on structure first.

We separated agentic behavior from language models.

We built workflows with explicit control flow, shared state, and clear termination.

We showed that agency emerges from structure before intelligence.

That sequencing was intentional.

Because the most fragile moment in an agentic system is not when you remove the LLM.

It is when you add it back.

Reintroducing a language model into a system with shared state, explicit routing, and real consequences immediately changes the system’s risk profile. Without clear boundaries, probabilistic reasoning begins to leak into control flow. Decisions blur. Execution becomes harder to inspect. Responsibility shifts silently into prompts.

The question is not whether LLMs are powerful.

It is where they belong.

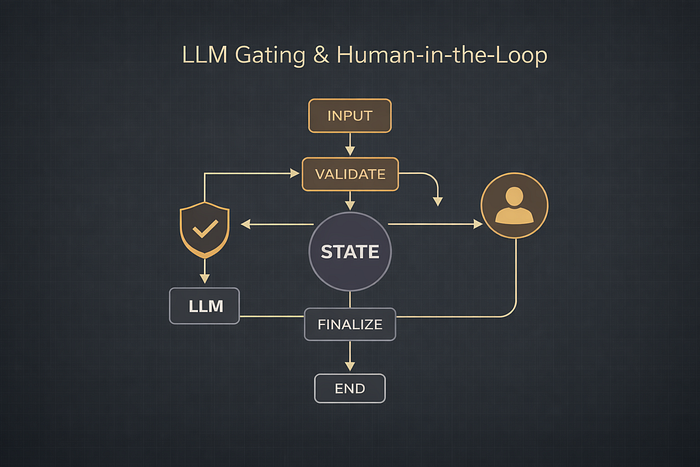

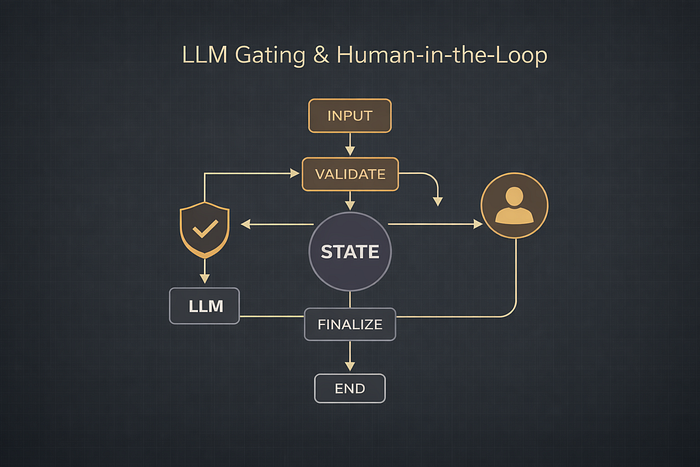

In this final article, we focus on three structural patterns:

- gating LLM invocation behind deterministic routing decisions

- modeling human approval as part of execution flow rather than an external process

- designing workflows where language models assist reasoning without determining control flow

The goal is not to make agents more autonomous.

It is to ensure that once LLMs are introduced, systems remain predictable, inspectable, and accountable.

This is the boundary between impressive demos and durable systems.

The code fragments below are intentionally partial. They illustrate architectural boundaries, not a production-ready tutorial. The goal is to make execution structure visible — not to walk step-by-step through implementation details.

When LLMs Collapse Control Boundaries

So far in this series, we have intentionally kept language models outside the execution path.

That choice was deliberate. Before introducing probabilistic reasoning, we needed a system whose control flow, state, and decision boundaries were already explicit.

Once an LLM is introduced, those boundaries are easy to blur.

Language models are powerful — but they are probabilistic. They do not execute rules. They do not enforce constraints. They generate plausible continuations. When a model is asked to both reason and determine what happens next, the distinction between thinking and acting begins to collapse.

A common early failure mode in agentic systems is allowing the model to implicitly govern execution. The LLM interprets intent, selects tools, decides when to retry, and determines when the task is complete — all within generated text. Control logic migrates into prompts. Branching, validation, and termination cease to be structural properties of the system and become inferences drawn from output.

The result is not only hallucinated content.

It is hallucinated control flow.

Decisions become opaque. Failures are difficult to reproduce. Debugging becomes an exercise in reconstructing prompt history. Responsibility shifts from architecture to model output — where it cannot be enforced or inspected.

This is why generation must be gated.

Language models are valuable as classifiers, planners, and generators. But they should not determine whether execution proceeds. Their outputs must pass through explicit decision points. And when actions have real consequences, humans must be placed at those boundaries — not after the fact.
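One way to picture an explicit decision point is a small validator that sits between model text and routing. The sketch below is illustrative only: `ALLOWED_ACTIONS`, `SAFE_DEFAULT`, and `gate_model_suggestion` are hypothetical names, not part of this series' code or of any library.

```python
# Hypothetical sketch: model text is treated as a suggestion, never as a command.
ALLOWED_ACTIONS = {"search_docs", "summarize", "ask_clarification"}
SAFE_DEFAULT = "ask_clarification"


def gate_model_suggestion(raw_output: str) -> str:
    """Return a validated action name, or the safe default.

    The model's text influences execution only if it matches an action
    the system already knows about. Anything else is discarded.
    """
    candidate = raw_output.strip().lower()
    if candidate in ALLOWED_ACTIONS:
        return candidate
    return SAFE_DEFAULT


print(gate_model_suggestion("Summarize"))           # summarize
print(gate_model_suggestion("delete_all_records"))  # ask_clarification
```

The model can still propose anything it likes. What it cannot do is introduce a branch the system did not define.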

The objective is not to constrain the model.

It is to preserve what makes agentic systems reliable: explicit control, inspectable decisions, and accountable execution.

Pattern 1: Decision Before Generation

The first safe way to introduce an LLM into an agentic system is to decide — structurally — whether generation is allowed before any generation occurs.

This pattern can be described simply:

The system decides first.

The model acts second.

Instead of asking the LLM what to do next, the workflow evaluates whether invoking the model is justified at all. That decision is deterministic. It is made through state and routing logic — not through generated text.

This separation immediately addresses two common failure modes:

- hallucinating answers when information is missing or irrelevant

- hiding control logic inside prompts instead of architecture

The goal is not to make the model cautious.

It is to make invocation conditional.

Problem setup: a scoped Q&A assistant

Consider a documentation assistant with a narrow responsibility:

- It should answer questions covered by the tutorial.

- It should decline questions outside its scope.

- It should never guess.

Without structural gating, an LLM will almost always produce an answer — even when it shouldn’t. That behavior is not a bug. It is how generative models are designed.

So the workflow must answer a different question first:

Is the system justified in attempting generation?

Only after that question is answered should the model be invoked.

High-level workflow

Conceptually, the workflow contains four steps:

- Receive a question

- Determine whether it is in scope

- Generate an answer (only if allowed)

- Return a controlled response

The key architectural constraint is this:

The LLM is never responsible for deciding whether it should be used.

That decision belongs to the system.
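Before looking at the graph itself, the four steps can be sketched in plain Python. This is a simplified stand-in rather than the workflow built below: `run_gated`, `fake_llm`, `TOPICS`, and `FALLBACK` are hypothetical names, and the generator is a stub that records its calls so the gating is observable.

```python
from typing import Callable

# Hypothetical names for illustration; the real nodes are defined later.
TOPICS = {"langgraph", "workflow"}
FALLBACK = "This question is outside the scope of the tutorial."


def run_gated(question: str, generate: Callable[[str], str]) -> str:
    """Decide first, generate second."""
    # Steps 1-2: receive the question and make a deterministic scope decision.
    in_scope = any(topic in question.lower() for topic in TOPICS)
    # Step 3: the generator is called only when the decision allows it.
    if in_scope:
        return generate(question)
    # Step 4: otherwise return a controlled response without touching the model.
    return FALLBACK


calls = []


def fake_llm(question: str) -> str:
    """Stand-in for a model call that records every invocation."""
    calls.append(question)
    return f"(generated answer to: {question})"


run_gated("What is a LangGraph workflow?", fake_llm)
run_gated("What is the capital of France?", fake_llm)
print(len(calls))  # 1: only the in-scope question reached the generator
```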

Explicit state

State captures responsibility explicitly. Each field has a single role.

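from typing import Optional, TypedDict

class QAState(TypedDict, total=False):
    question: str           # user input
    is_in_scope: bool       # deterministic routing decision
    answer: Optional[str]   # written by exactly one of the two paths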

This separation matters:

- question is input

- is_in_scope is a deterministic decision

- answer is the result of one of two controlled paths

Intake Node

This node performs no reasoning. It simply ensures that a question exists in state.

def input_question_node(state: QAState) -> QAState:
    if "question" not in state:
        question = input("Ask a question about the tutorial: ").strip()
        return {"question": question}
    return {}

There is no interpretation. No classification. No model involvement.

Only state initialization.

Deterministic Scope Check

The scope check is intentionally rule-based.

In production, this might involve embeddings or a classifier. Here, a simple keyword rule keeps behavior transparent and reproducible.

ALLOWED_TOPICS = {
    "langgraph",
    "state",
    "nodes",
    "edges",
    "workflow",
    "agent",
}

def scope_check_node(state: QAState) -> QAState:
    question = state["question"].lower()
    in_scope = any(topic in question for topic in ALLOWED_TOPICS)
    return {"is_in_scope": in_scope}

This node answers a single structural question:

Is generation permitted?
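One caveat about the keyword rule: it matches substrings, so "state" also matches "United States" or "estate". If that matters, a whole-word variant is one option. The sketch below is an illustrative alternative, not the check used in this article, and `is_in_scope_tokens` is a hypothetical helper.

```python
import re

ALLOWED_TOPICS = {"langgraph", "state", "nodes", "edges", "workflow", "agent"}


def is_in_scope_tokens(question: str) -> bool:
    """Whole-word variant of the keyword rule (an illustrative alternative)."""
    # Split the lowercased question into alphanumeric word tokens.
    tokens = set(re.findall(r"[a-z0-9]+", question.lower()))
    return not tokens.isdisjoint(ALLOWED_TOPICS)


question = "Which United States city is largest?"
print(any(t in question.lower() for t in ALLOWED_TOPICS))  # substring rule: True
print(is_in_scope_tokens(question))                        # whole-word rule: False
print(is_in_scope_tokens("How do nodes share state?"))     # True
```

The trade-off runs the other way too: the whole-word rule no longer matches "agents" or "workflows", so the topic set would need both forms. Either way the decision stays deterministic and easy to inspect.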

Explicit Routing

Routing is separate from execution.

def route_after_scope_check(state: QAState) -> str:
    if state.get("is_in_scope"):
        return "answer"
    return "out_of_scope"

By the time routing occurs, the system has already decided whether the LLM may be invoked.

The model has not been consulted.
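Because the router is a pure function of state, it can be checked without a model, a graph, or a network call. A minimal sketch of such a check, with the router repeated (typed as a plain `dict` here) so the snippet runs on its own:

```python
def route_after_scope_check(state: dict) -> str:
    # Same logic as the router above, repeated so this snippet is self-contained.
    if state.get("is_in_scope"):
        return "answer"
    return "out_of_scope"


# Only state in, label out.
assert route_after_scope_check({"is_in_scope": True}) == "answer"
assert route_after_scope_check({"is_in_scope": False}) == "out_of_scope"
# A missing decision falls through to the safe path.
assert route_after_scope_check({}) == "out_of_scope"
print("router checks passed")
```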

Isolated Generation

This is the only location where the LLM appears.

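from langchain_core.messages import HumanMessage

# `llm` is assumed to be a chat model instance created elsewhere (not shown here).
def answer_node(state: QAState) -> QAState:
    question = state["question"]
    response = llm.invoke([
        HumanMessage(content=f"Answer clearly and concisely:\n\n{question}")
    ])
    return {"answer": response.content}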

The model does not determine whether it should answer.

It answers because the system has granted permission.

That distinction is architectural.

Out-of-Scope Path

Out-of-scope behavior is not implemented through clever prompting.

It is implemented through an explicit alternative node.

def out_of_scope_node(state: QAState) -> QAState:
    return {
        "answer": (
            "This question is outside the scope of the tutorial. "
            "Please ask something related to LangGraph workflows, state, or agents."
        )
    }

This guarantees predictable behavior when generation is not allowed.

No refusals embedded in prompts.

No reliance on model compliance.

Converging Execution

Both paths — generation and fallback — converge before termination.

def finalize_qa_node(state: QAState) -> QAState:
    return {"answer": state.get("answer", "No answer available.")}

This node does not introduce new logic.

It normalizes output and provides a single structural exit point.

Convergence matters. It ensures that no matter which path execution takes, termination is explicit and inspectable.

Build the graph

With nodes defined, the graph encodes the boundary:

from langgraph.graph import StateGraph, END

workflow = StateGraph(QAState)

workflow.add_node("input", input_question_node)
workflow.add_node("scope_check", scope_check_node)
workflow.add_node("generate_answer", answer_node)
workflow.add_node("out_of_scope", out_of_scope_node)
workflow.add_node("finalize", finalize_qa_node)

workflow.set_entry_point("input")
workflow.add_edge("input", "scope_check")

workflow.add_conditional_edges(
    "scope_check",
    route_after_scope_check,
    {
        "answer": "generate_answer",
        "out_of_scope": "out_of_scope",
    },
)

workflow.add_edge("generate_answer", "finalize")
workflow.add_edge("out_of_scope", "finalize")
workflow.add_edge("finalize", END)

app = workflow.compile()

The graph itself enforces the rule:

- There is no path to the LLM without passing the scope check.

- Out-of-scope questions never reach the model.

This guarantee is not expressed in prompts.

It is encoded in execution structure.
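A structural guarantee like this can be checked mechanically. The sketch below copies the edges from the graph definition into a plain adjacency map and asks whether the generation node is still reachable once the scope check is blocked. `EDGES` and `reachable` are hypothetical helpers written for this illustration, not part of LangGraph.

```python
from typing import Optional

# Edges copied from the graph definition above, as a plain adjacency map.
EDGES = {
    "input": ["scope_check"],
    "scope_check": ["generate_answer", "out_of_scope"],
    "generate_answer": ["finalize"],
    "out_of_scope": ["finalize"],
    "finalize": [],
}


def reachable(start: str, target: str, blocked: Optional[str] = None) -> bool:
    """Depth-first search that refuses to pass through `blocked`."""
    stack, seen = [start], set()
    while stack:
        node = stack.pop()
        if node == target:
            return True
        if node in seen or node == blocked:
            continue
        seen.add(node)
        stack.extend(EDGES.get(node, []))
    return False


print(reachable("input", "generate_answer"))                         # True
print(reachable("input", "generate_answer", blocked="scope_check"))  # False
```

If someone later adds an edge that bypasses the scope check, the second call returns `True` and the regression becomes visible in a test rather than in production.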

Visualizing the workflow

Execution can be visualized directly:

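# Assumes a Jupyter/IPython environment for inline display.
from IPython.display import Image, display

png_bytes = app.get_graph().draw_mermaid_png()
display(Image(png_bytes))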

You can see exactly where generation is allowed — and where it is not.

Running the workflow

Invoking the workflow:

app.invoke({"question": "How does LangGraph manage state across nodes?"})

Out:

{'question': 'How does LangGraph manage state across nodes?',

'is_in_scope': True,

'answer': 'LangGraph manages state across nodes by maintaining a centralized or distributed state store that tracks the data and context as it flows through each node. Each node can read from and write to this shared state, enabling consistent data management and coordination throughout the graph execution. This approach ensures that nodes have access to the necessary information to perform their tasks and that updates are propagated appropriately across the graph.'}

Why This Pattern Matters in Production

This example demonstrates a core agentic principle:

LLMs should be workers, not judges.

They generate content when invoked — but they do not determine whether invocation is allowed.

By separating deterministic decision-making (the scope check) from probabilistic generation (the LLM), the system retains authority over its own execution.

That separation has concrete production consequences.

Generation cannot bypass validation or policy because the model never controls routing. Decisions remain explicit and inspectable because they live in state and graph structure. New rules can be introduced without rewriting prompts or retraining behavior, because control logic is architectural rather than textual.
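To make the "new rules without rewriting prompts" point concrete, here is one possible shape for an extensible scope check. It is a sketch under stated assumptions: `SCOPE_RULES`, `within_length_limit`, and `MAX_QUESTION_CHARS` are hypothetical additions, and the length limit is an arbitrary example policy.

```python
from typing import Callable, List

ALLOWED_TOPICS = {"langgraph", "state", "nodes", "edges", "workflow", "agent"}
MAX_QUESTION_CHARS = 500  # hypothetical policy limit, chosen for illustration


def mentions_allowed_topic(question: str) -> bool:
    return any(topic in question.lower() for topic in ALLOWED_TOPICS)


def within_length_limit(question: str) -> bool:
    return len(question) <= MAX_QUESTION_CHARS


# Adding a policy means appending a function here; no prompt is edited.
SCOPE_RULES: List[Callable[[str], bool]] = [
    mentions_allowed_topic,
    within_length_limit,
]


def scope_check_node(state: dict) -> dict:
    """Generation is permitted only if every rule passes."""
    question = state["question"]
    return {"is_in_scope": all(rule(question) for rule in SCOPE_RULES)}


print(scope_check_node({"question": "How do edges work?"}))  # {'is_in_scope': True}
print(scope_check_node({"question": "edges " * 200}))        # {'is_in_scope': False}
```

The router and the generation node stay untouched when a rule is added, because they only ever read `is_in_scope`.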

Most importantly, responsibility stays where it belongs: in the system design, not in model output.

In the next section, we extend this boundary further by introducing explicit approval and human-in-the-loop checkpoints. Instead of allowing oversight to exist outside the system, we will model it directly inside execution flow — where it can be enforced rather than assumed.

Pattern 2: Human-in-the-Loop as a First-Class Node

Not all agentic systems should be fully autonomous.

Even when an agent can evaluate options or propose actions, many real-world systems require explicit human approval before irreversible steps occur. That requirement is not a limitation of the model. It is a property of the domain.

Common examples include:

- compliance and regulatory decisions

- financial or pricing changes

- actions with legal or reputational risk

In these settings, human intervention cannot be bolted on after execution. It must be modeled as part of execution.

This leads to a second structural pattern:

Compute a proposal → pause for approval → either apply or reject.

In agentic systems, human-in-the-loop is not a UI concern.

It is a control-flow concern.

Problem setup: discount approval workflow

Imagine a restaurant chain where a manager proposes a discount for a specific day.

The system must:

- evaluate the proposed discount

- pause for human approval

- apply the discount if approved

- reject it otherwise

- produce a final, auditable result

The critical constraint is structural:

Execution must stop until approval is resolved.

Explicit approval state

Approval is modeled directly in shared state.

from typing import Optional, TypedDict

class DiscountState(TypedDict, total=False):
    day: str
    discount_pct: float
    estimated_revenue: float
    estimated_discount_cost: float
    approval: Optional[str]   # "approve" or "reject" once resolved
    result: Optional[str]

The approval field makes the checkpoint explicit in state.

The apply path is reachable only when that field holds an explicit "approve".

Approval is not implied.

It is required.

Node 1: input proposal

def input_discount_node(state: DiscountState) -> DiscountState:
    if "day" not in state:
        day = input("Day (Mon/Tue/Wed/Thur/Fri/Sat/Sun): ").strip()
    else:
        day = state["day"]

    if "discount_pct" not in state:
        discount_pct = float(input("Discount percent (e.g., 10 for 10%): ").strip())
    else:
        discount_pct = float(state["discount_pct"])

    return {"day": day, "discount_pct": discount_pct}

This node gathers the proposal.

It does not decide whether it should be applied.

Node 2: estimate impact

Before asking for approval, the system computes impact.

BASELINE_REVENUE_BY_DAY = {
    "Mon": 1200, "Tue": 1300, "Wed": 1400, "Thur": 1600,
    "Fri": 2200, "Sat": 3000, "Sun": 2800
}

def estimate_impact_node(state: DiscountState) -> DiscountState:
    day = state["day"]
    discount_pct = state["discount_pct"]
    revenue = BASELINE_REVENUE_BY_DAY.get(day, 1500)
    discount_cost = revenue * (discount_pct / 100.0)
    return {
        "estimated_revenue": revenue,
        "estimated_discount_cost": discount_cost,
    }

This separation matters.

The human reviews a proposal with context, not a raw suggestion.

Node 3: human approval checkpoint

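This node represents the human-in-the-loop step. A decision already present in state passes through unchanged; otherwise the node blocks on console input and records any unrecognized reply as a rejection.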

def approval_node(state: DiscountState) -> DiscountState:
    # A decision supplied up front is accepted as-is.
    if state.get("approval") in {"approve", "reject"}:
        return {}

    decision = input("Approve discount? (approve/reject): ").strip().lower()

    # Fail closed: anything other than an explicit decision is a rejection.
    if decision not in {"approve", "reject"}:
        decision = "reject"

    return {"approval": decision}
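The fail-closed rule inside this node is worth isolating, because it is the part that decides what an ambiguous human reply means. A minimal sketch, with `normalize_decision` and `VALID_DECISIONS` as hypothetical names that separate the parsing from the console I/O:

```python
VALID_DECISIONS = {"approve", "reject"}


def normalize_decision(raw: str) -> str:
    """Map free-form reviewer input to a valid decision, failing closed."""
    decision = raw.strip().lower()
    # Anything that is not an exact, recognized decision becomes a rejection.
    return decision if decision in VALID_DECISIONS else "reject"


for raw in ["approve", " APPROVE ", "yes", "", "approved"]:
    print(repr(raw), "->", normalize_decision(raw))
```

Only the first two inputs resolve to "approve". A casual "yes" or a near miss like "approved" is rejected, which is the conservative choice when the action is hard to reverse.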

Router: approval decides execution

def route_after_approval(state: DiscountState) -> str:
    return "apply" if state.get("approval") == "approve" else "reject"

Routing is explicit and inspectable.

Execution cannot proceed without a resolved approval state.

Node 4a: Apply Discount

def apply_discount_node(state: DiscountState) -> DiscountState:
    day = state["day"]
    pct = state["discount_pct"]
    cost = state["estimated_discount_cost"]
    return {
        "result": (
            f"✅ Approved: Apply {pct:.1f}% discount on {day}. "
            f"Estimated revenue impact: -${cost:.2f}."
        )
    }

Node 4b: Reject Discount

def reject_discount_node(state: DiscountState) -> DiscountState:
    day = state["day"]
    pct = state["discount_pct"]
    cost = state["estimated_discount_cost"]
    return {
        "result": (
            f"❌ Rejected: Do not apply {pct:.1f}% discount on {day}. "
            f"Estimated revenue impact avoided: ${cost:.2f}."
        )
    }

These nodes execute outcomes.

They do not decide whether approval exists.

That decision has already been enforced structurally.

Build the graph

from langgraph.graph import StateGraph, END

workflow = StateGraph(DiscountState)

workflow.add_node("input", input_discount_node)
workflow.add_node("estimate", estimate_impact_node)
workflow.add_node("approved", approval_node)
workflow.add_node("apply_discount", apply_discount_node)
workflow.add_node("reject_discount", reject_discount_node)

workflow.set_entry_point("input")
workflow.add_edge("input", "estimate")
workflow.add_edge("estimate", "approved")

workflow.add_conditional_edges(
    "approved",
    route_after_approval,
    {
        "apply": "apply_discount",
        "reject": "reject_discount",
    },
)

workflow.add_edge("apply_discount", END)
workflow.add_edge("reject_discount", END)

app = workflow.compile()

The graph encodes a structural invariant:

Execution cannot progress without explicit human approval.

Visualizing execution

The diagram makes the pause visible.

Approval is not implied.

It is a required state transition.

Running the workflow

final_state = app.invoke({"day": "Sat", "discount_pct": 12})

print(final_state["result"])

Approve discount? (approve/reject): approve

✅ Approved: Apply 12.0% discount on Sat. Estimated revenue impact: -$360.00.
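The run above pauses by blocking on `input()`, which suits a notebook. In a service, the reviewer usually responds later and from a different process, so the pause has to survive between calls. One way to picture that is a two-phase flow in plain Python. This sketch does not use LangGraph's own interrupt and checkpointing support; `propose_discount` and `resume_with_decision` are hypothetical names, and the JSON string stands in for whatever storage a real system would use.

```python
import json

BASELINE_REVENUE_BY_DAY = {"Fri": 2200, "Sat": 3000, "Sun": 2800}


def propose_discount(day: str, discount_pct: float) -> str:
    """Phase 1: compute the proposal, then stop. Returns a serialized state."""
    revenue = BASELINE_REVENUE_BY_DAY.get(day, 1500)
    state = {
        "day": day,
        "discount_pct": discount_pct,
        "estimated_discount_cost": revenue * (discount_pct / 100.0),
        "approval": None,  # unresolved: nothing downstream may run yet
    }
    return json.dumps(state)


def resume_with_decision(saved: str, decision: str) -> dict:
    """Phase 2: load the saved state and apply the reviewer's decision."""
    state = json.loads(saved)
    if state["approval"] is not None:
        raise ValueError("This proposal has already been resolved.")
    # Fail closed, as in the approval node above.
    state["approval"] = decision if decision in {"approve", "reject"} else "reject"
    verb = "Apply" if state["approval"] == "approve" else "Do not apply"
    state["result"] = f"{verb} {state['discount_pct']:.1f}% discount on {state['day']}."
    return state


saved = propose_discount("Sat", 12)             # could be stored in a database
final = resume_with_decision(saved, "approve")  # called later, by the reviewer
print(final["result"])  # Apply 12.0% discount on Sat.
```

The structural property is the same as in the graph: there is no code path from proposal to result that skips the decision.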

Why Human-in-the-Loop Is Structural, Not Cosmetic

This pattern highlights a subtle but critical point:

Agentic systems are not about removing humans.

They are about placing humans at enforceable boundaries.

In poorly designed systems, human oversight appears after execution — through manual overrides, approval emails, or post-hoc reviews. These mechanisms may exist socially, but they are not enforced by the system itself.

When human approval is modeled explicitly in state and routing:

- workflows remain transparent

- responsibility boundaries are visible

- compliance is enforced by execution structure

The difference is architectural.

When approval is part of shared state, the system cannot proceed without it. There is no prompt to bypass, no hidden conditional to forget, and no ambiguity about who authorized what.
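The workflow in this article stores only the decision itself. If "who authorized what" needs to be answerable later, the state can carry a small audit record alongside it. The following is a sketch of one possible shape; `ApprovalRecord`, `record_approval`, and the field names are hypothetical and not part of the article's code.

```python
from datetime import datetime, timezone
from typing import Optional, TypedDict


class ApprovalRecord(TypedDict):
    decision: str    # "approve" or "reject"
    reviewer: str    # hypothetical reviewer identifier
    decided_at: str  # ISO 8601 timestamp in UTC


def record_approval(decision: str, reviewer: str,
                    now: Optional[datetime] = None) -> ApprovalRecord:
    """Build an audit entry; unknown decisions are recorded as rejections."""
    if not reviewer.strip():
        raise ValueError("An approval must name its reviewer.")
    moment = now or datetime.now(timezone.utc)
    return {
        "decision": decision if decision in {"approve", "reject"} else "reject",
        "reviewer": reviewer.strip(),
        "decided_at": moment.isoformat(),
    }


fixed = datetime(2026, 2, 19, 12, 0, tzinfo=timezone.utc)
print(record_approval("approve", "manager-042", now=fixed))
```

Passing `now` explicitly keeps the function deterministic in tests; in normal use it falls back to the current UTC time.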

Human judgment becomes a first-class dependency of execution.

This is where graph-based orchestration matters.

By modeling human checkpoints as nodes — subject to the same routing, inspection, and observability as any other step — approval becomes part of the system’s lifecycle rather than an external intervention.

Execution pauses cleanly.

Decisions are inspectable.

Responsibility is explicit.

In agentic systems, autonomy is not defined by the absence of humans.

It is defined by whether the system knows exactly when it must stop and ask.

Controlling LLMs Is an Architectural Problem

Across this series, we’ve moved deliberately — from models to systems, from generation to execution, and from clever prompts to explicit control.

The conclusion is simple, but easy to miss:

The hardest problems in agentic systems are not generative.

They are architectural.

Large language models are powerful reasoning tools. But without structure, they blur responsibility, hide control logic, and turn execution into improvisation. With structure, the same models become contained components inside systems that remain predictable, inspectable, and accountable.

The patterns in this article are not about making LLMs safer through better prompts. They are about making systems safer by design.

Decision-before-generation ensures that models are only invoked when the system has determined it is appropriate.

Human-in-the-loop as a first-class node ensures that oversight is enforced by execution flow, not by social convention.

Explicit state and routing ensure that no model output silently becomes control logic.

Taken together, these patterns point to a single rule:

LLMs may propose, explain, classify, or summarize — but they should not decide when execution proceeds.

That responsibility belongs to the system.

This reframing changes how agentic systems are built. Instead of asking:

“How do we get the model to behave?”

The question becomes:

“Where does probabilistic reasoning belong inside a deterministic process?”

That shift has been the throughline of this series.

Agentic systems are not defined by autonomy for its own sake.

They are defined by controlled autonomy — by systems that know when to act, when to pause, and when to ask.

Structure first.

Reasoning second.

Execution always explicit.

Once that boundary is clear, LLMs stop being a liability.

They become exactly what they should have been all along:

powerful tools inside systems that remain firmly under control.



