Building an Agentic Workflow in LangGraph (No LLM Required)

Last Updated on February 19, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

Most introductions to agentic AI start with a language model.

This one doesn’t.

That choice is deliberate.

If you begin with an LLM, it’s easy to mistake intelligence for agency. You end up tuning prompts, adding tools, and hoping the model behaves — without ever making execution structure explicit. The result often looks intelligent, but behaves unpredictably.

Agentic systems are not defined by generation.

They are defined by how decisions are made, how state evolves, and how execution continues over time.

This article focuses on that foundation.

Instead of starting with a model, we will build a small but complete agentic workflow using LangGraph, with no language model involved at all. The goal is not to avoid LLMs, but to show that agentic behavior emerges from structure first.

State persists.

Routing is explicit.

Execution can loop, branch, converge, and terminate — and be inspected after the fact.

Only once that structure exists does it make sense to introduce probabilistic reasoning.

Why Start Without an LLM?

Starting without a language model is not about limitation. It is about visibility.

When an LLM is introduced too early, it becomes easy to mistake fluent output for coherent execution. Reasoning, control flow, validation, and retries collapse into a single probabilistic step. When something goes wrong, it is difficult to tell whether the failure came from the model, the prompt, or the surrounding system.

By removing the model entirely, the mechanics of execution are exposed.

State must be explicit.

Decisions must be represented structurally.

Control flow must be modeled rather than implied.

This forces a different design posture. Instead of asking what the model should say next, you are forced to ask what the system should do next — and under what conditions execution should continue, loop, or stop.

This clarity is difficult to achieve once probabilistic reasoning is in the loop.

Starting without an LLM also makes an important point concrete: agentic behavior does not emerge from intelligence alone. It emerges from how execution is structured over time. Even a fully deterministic workflow can exhibit core agentic properties — persistence, adaptation, and recoverability — when state and control flow are explicit.

Once that structure exists, introducing an LLM becomes a contained decision rather than a foundational one. The model can assist with reasoning, classification, or generation, but it operates inside a system that already knows how to continue, recover, and terminate.

This sequencing is intentional.

Before adding intelligence, the system must know how to run.

Problem Framing: Order Issue Triage

To make agentic execution concrete, we’ll work with a deliberately modest problem: order issue triage.

This is not a chatbot and not a prompt–completion task. It is a small but complete process — one that requires validation, branching, retries, and an explicit end state.

The objective is simple: take a customer’s order issue and route it to the correct handling path.

What makes the problem interesting is not the domain, but the execution requirements.

The system must operate on a set of inputs that may be incomplete or invalid:

- order_id (expected format: ORD-12345)

- issue_type (damaged, late, wrong_item)

- resolution_preference (refund or replacement)

From there, the system cannot simply generate a response and stop. It must manage execution over time:

- collect missing information

- validate inputs against known constraints

- retry when validation fails

- route execution when validation succeeds

- converge on a final, structured outcome

There is no single “correct answer” here. What happens next depends on the current state of the system, and execution may need to revisit earlier steps before it can progress.

This makes the example intentionally well-suited for examining agentic structure. The problem is not about language generation — it is about control flow. Execution must be driven by state, not by a fixed sequence of steps.

With the problem framed, the next step is to make that state explicit.

State as the Contract Between Nodes

In LangGraph, state is not an implementation detail.

It is the contract that binds the system together.

Rather than passing parameters directly between functions, the workflow operates on a shared state object. Every node reads from this state and returns updates to it. Control flow decisions are made by inspecting state — not by inferring what happened inside a previous step.

This changes how workflows are designed.

Instead of asking which function calls which, the more fundamental question becomes:

What does the system know right now — and what is it responsible for producing next?

That answer is encoded directly in the state schema.

State defines:

- what information persists across steps

- what decisions can be made

- when execution can continue, loop, or terminate

Nodes do not coordinate with each other.

They do not manage retries.

They do not decide where execution goes next.

They operate entirely through state.

Making State Explicit

For the order triage workflow, the shared state captures both inputs and progress. A simplified version might look like this:

    from typing import TypedDict, Optional, Literal

    IssueType = Literal["damaged", "late", "wrong_item"]
    Resolution = Literal["refund", "replacement"]

    class TriageState(TypedDict, total=False):
        # Inputs, which may be missing or invalid at the start
        order_id: Optional[str]
        issue_type: Optional[IssueType]
        resolution_preference: Optional[Resolution]
        # Progress recorded by the validation step
        is_valid: bool
        errors: list[str]
        # Terminal outcome
        result: Optional[str]

The state schema makes responsibility explicit. It defines what the system expects to know, what it may still be missing, and what constitutes a terminal outcome. Nothing is hidden inside prompts, function arguments, or transient variables.

As execution progresses, different nodes update different parts of this shared state:

- an input node fills missing fields

- a validation node evaluates constraints and records errors

- routing logic inspects state to choose the next path

- a final node produces a terminal result

No node needs to “know” the whole system. Coordination happens through shared state rather than direct coupling.
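As an illustration of a node that only reads state and returns updates, here is a minimal sketch of what a validation node could look like. The article does not show its node implementations, so the function body, the regular expression (which assumes "ORD-" followed by exactly five digits), and the error messages are all assumptions. Only the field names and allowed values come from the schema above.

```python
import re
from typing import Any

# Assumed pattern for the documented format ORD-12345 (prefix plus five digits).
ORDER_ID_PATTERN = re.compile(r"^ORD-\d{5}$")
ALLOWED_ISSUES = {"damaged", "late", "wrong_item"}
ALLOWED_RESOLUTIONS = {"refund", "replacement"}


def validate_node(state: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical validation node: reads state, returns only the keys it updates."""
    errors: list[str] = []

    order_id = state.get("order_id")
    if not order_id or not ORDER_ID_PATTERN.match(order_id):
        errors.append("order_id must look like ORD-12345")

    if state.get("issue_type") not in ALLOWED_ISSUES:
        errors.append("issue_type must be damaged, late, or wrong_item")

    if state.get("resolution_preference") not in ALLOWED_RESOLUTIONS:
        errors.append("resolution_preference must be refund or replacement")

    # The node does not decide what happens next; it only records what it found.
    return {"is_valid": not errors, "errors": errors}


ok = validate_node({"order_id": "ORD-12345", "issue_type": "late",
                    "resolution_preference": "refund"})
bad = validate_node({"order_id": "12345", "issue_type": "late"})
print(ok)             # {'is_valid': True, 'errors': []}
print(bad["errors"])  # two messages: bad order_id, missing resolution_preference
```

The node returns a partial update rather than a full copy of the state, which keeps its responsibility narrow: it owns `is_valid` and `errors` and nothing else.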

State Enables Retries and Loops

This framing is what makes retries and branching possible without hidden logic.

When validation fails, execution loops — not because a function throws an exception, but because the state explicitly indicates the system is not ready to proceed. When required fields are present and valid, execution advances. When a terminal result is produced, the workflow stops.

Control flow emerges from state transitions, not from imperative calls.

This is the critical distinction between agentic structure and ad-hoc automation.
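To see that this is a matter of structure rather than of any particular library, the same idea can be sketched in plain Python. This is not how LangGraph is implemented. The names `run`, `NODES`, `next_step`, and the toy node functions are hypothetical, introduced only to show a loop whose next step is chosen by inspecting state.

```python
from typing import Any, Callable, Optional

State = dict[str, Any]


def collect(state: State) -> State:
    # Toy stand-in for an input node: supplies a default when the field is missing.
    if state.get("resolution_preference") is None:
        return {"resolution_preference": "refund"}
    return {}


def check(state: State) -> State:
    # Toy stand-in for a validation node.
    return {"is_valid": state.get("resolution_preference") in ("refund", "replacement")}


def finish(state: State) -> State:
    return {"result": f"handled via {state['resolution_preference']}"}


NODES: dict[str, Callable[[State], State]] = {
    "collect": collect, "check": check, "finish": finish,
}


def next_step(current: str, state: State) -> Optional[str]:
    """Pick the next node purely from state; None means stop."""
    if current == "collect":
        return "check"
    if current == "check":
        return "finish" if state.get("is_valid") else "collect"
    return None  # "finish" is terminal


def run(state: State, max_steps: int = 10) -> tuple[State, list[str]]:
    current: Optional[str] = "collect"
    path: list[str] = []
    while current is not None and len(path) < max_steps:
        state = {**state, **NODES[current](state)}  # merge the node's update
        path.append(current)
        current = next_step(current, state)
    return state, path


final, path = run({"resolution_preference": None})
print(path)             # ['collect', 'check', 'finish']
print(final["result"])  # handled via refund
```

No node calls another node here. The loop, the branch back to `collect`, and the stop condition all live in `next_step`, which looks only at state.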

State as Responsibility

Treating state as a contract also makes accountability visible.

If a field is missing, invalid, or inconsistent, it appears directly in state. If execution takes an unexpected path, the conditions that caused it are inspectable. Debugging becomes a matter of examining state transitions rather than reconstructing implicit behavior.

Typed schemas reinforce this contract. They clarify not only what the system knows, but what it is responsible for producing at each stage of execution.

Once state is explicit, execution stops being implicit.

The system no longer guesses what to do next.

It checks.

With state defined, we can now turn the abstract workflow into something concrete: routing execution through explicit transitions between nodes.

Validation and Routing: Decisions Become Structure

Once inputs are collected and validated, the workflow reaches its first real decision point.

Should execution proceed?

Should it retry?

And if it proceeds, which path should it take?

In LangGraph, these decisions are not hidden inside node implementations. They are lifted into the graph itself.

This is where agentic design becomes structural.

Instead of embedding if / else logic deep inside a function, LangGraph uses a router function that returns a label indicating where execution should go next:

    def route_after_validation(state: TriageState) -> str:
        if not state.get("is_valid", False):
            return "retry_input"
        if state.get("resolution_preference") == "refund":
            return "refund_flow"
        return "replacement_flow"

The router does not perform the work.

It does not modify state.

It does not trigger side effects.

It answers a single question:

Given the current state, what should happen next?

That answer becomes part of the graph’s control flow.

This separation is subtle but important. Decisions are now:

- explicit

- testable

- inspectable

- independent from business logic

Validation determines whether the system is ready.

Routing determines where execution flows next.

Handling nodes determine what actually happens.

These concerns no longer bleed into one another.
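Because the router is a pure function of state, it can be exercised with plain dictionaries, with no graph and no node code involved. The checks below restate the router from this section so the snippet runs on its own. The test inputs are illustrative.

```python
from typing import Any


def route_after_validation(state: dict[str, Any]) -> str:
    # Same logic as the router above, repeated so this snippet is self-contained.
    if not state.get("is_valid", False):
        return "retry_input"
    if state.get("resolution_preference") == "refund":
        return "refund_flow"
    return "replacement_flow"


cases = [
    ({}, "retry_input"),  # nothing validated yet
    ({"is_valid": False, "resolution_preference": "refund"}, "retry_input"),
    ({"is_valid": True, "resolution_preference": "refund"}, "refund_flow"),
    ({"is_valid": True, "resolution_preference": "replacement"}, "replacement_flow"),
]

for state, expected in cases:
    before = dict(state)
    assert route_after_validation(state) == expected
    assert state == before  # the router must not mutate state

print("all routing cases passed")
```

One detail these cases make visible: the final `return` is a fallthrough, so any valid state whose preference is not "refund" is sent to the replacement path. The router relies on validation to guarantee that "replacement" is the only other possibility.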

Building the Graph (Nodes, Edges, and a Retry Loop)

With state and routing defined, we can now assemble the workflow itself.

At this point, the specific logic inside each node matters less than how they are connected. Even without inspecting node implementations, the structure of the system is already visible.

    from langgraph.graph import StateGraph, END

    workflow = StateGraph(TriageState)

    # Register nodes (functions defined elsewhere)
    workflow.add_node("input", input_node)
    workflow.add_node("validate", validate_node)
    workflow.add_node("refund", refund_node)
    workflow.add_node("replacement", replacement_node)
    workflow.add_node("finalize", finalize_node)

    # Linear start: input → validate
    workflow.set_entry_point("input")
    workflow.add_edge("input", "validate")

    # Conditional routing after validate
    workflow.add_conditional_edges(
        "validate",
        route_after_validation,
        {
            "retry_input": "input",  # loop back
            "refund_flow": "refund",
            "replacement_flow": "replacement",
        },
    )

    # Converge both branches and end
    workflow.add_edge("refund", "finalize")
    workflow.add_edge("replacement", "finalize")
    workflow.add_edge("finalize", END)

    app = workflow.compile()

One choice is worth calling out here: the individual node implementations are deliberately not shown.

Each node is a simple, self-contained function that reads from state and returns updates to state. Nodes do not call one another directly, and they do not control execution flow. Coordination happens entirely through the graph and the shared state.

Even without the node internals, several architectural properties are immediately apparent:

- The retry loop is explicit. Validation can route execution back to input without throwing exceptions or restarting the system.

- Branching is explicit. Refund and replacement flows are separate paths, not conditional blocks buried in code.

- Convergence is explicit. Both branches reunite at a single finalize step, making the workflow easier to reason about and evolve.

- Compilation produces an executable process. The graph is no longer a diagram; it becomes a runnable system.

This is the core shift LangGraph introduces:

Control flow is modeled, not implied.
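For readers who want something to plug into the graph, here is one possible set of minimal implementations for the input, handling, and finalize nodes. The article does not show these, so every function body below is an assumption. The refund message mirrors the sample output shown in the next section, while the replacement message and the fallback text in `finalize_node` are invented for illustration. A validation node would follow the same pattern of returning a dictionary of updates.

```python
from typing import Any

State = dict[str, Any]


def input_node(state: State) -> State:
    # Placeholder: a real system might prompt a user or call another service here.
    # This sketch only normalises whatever order_id is already present.
    order_id = state.get("order_id")
    return {"order_id": order_id.strip().upper() if isinstance(order_id, str) else None}


def refund_node(state: State) -> State:
    return {"result": (
        f"Refund flow selected for {state['order_id']}.\n"
        f"Issue type: {state['issue_type']}.\n"
        "Next steps: verify eligibility → initiate refund → notify customer."
    )}


def replacement_node(state: State) -> State:
    return {"result": (
        f"Replacement flow selected for {state['order_id']}.\n"
        f"Issue type: {state['issue_type']}.\n"
        "Next steps: check stock → ship replacement → notify customer."
    )}


def finalize_node(state: State) -> State:
    # Guarantee a terminal result even if a handling node produced none.
    return {"result": state.get("result") or "No resolution produced."}


# Apply the nodes by hand, merging each update, to show what they return.
state: State = {"order_id": " ord-12345 ", "issue_type": "late",
                "resolution_preference": "refund"}
for node in (input_node, refund_node, finalize_node):
    state = {**state, **node(state)}

print(state["result"])
```

None of these functions knows which node runs before or after it. That knowledge lives only in the edges of the graph.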

Running the Workflow (Valid vs. Invalid Inputs)

Once compiled, the workflow can be invoked with an initial state.

A valid run follows a single branch and terminates naturally:

    final_state = app.invoke({
        "order_id": "ORD-12345",
        "issue_type": "late",
        "resolution_preference": "refund",
    })

    print(final_state["result"])

Refund flow selected for ORD-12345.

Issue type: late.

Next steps: verify eligibility → initiate refund → notify customer.

Here, validation passes, routing selects the refund path, and execution completes.

An invalid run behaves differently.

If validation fails, execution does not crash. It loops.

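In a real system, this might mean prompting a user, requesting missing data from another service, or applying a retry limit. In this example, the loop simply routes back to input. If the input node cannot change the state, that loop never converges on its own: LangGraph eventually aborts the run with a GraphRecursionError once its recursion limit (25 steps by default) is reached.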

This is an important lesson:

Graphs make looping easy — but they also make responsibility explicit.

You must decide:

- how many retries are allowed

- how correction happens

- when to stop safely

LangGraph gives you the structure. Policy is still your job.
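One way to encode such a policy is to keep a retry counter in state and let the router choose a terminal path once a limit is reached. The sketch below is an assumption layered on the article's design: the `attempts` field, the `MAX_ATTEMPTS` constant, the `count_attempt` helper, and the `give_up` label do not appear in the original workflow. To use it, the state schema would need an `attempts` key and the conditional edge mapping would need an entry for `give_up`.

```python
from typing import Any

MAX_ATTEMPTS = 3  # hypothetical policy value


def count_attempt(state: dict[str, Any]) -> dict[str, Any]:
    """Update an input node could return on each pass to record the attempt."""
    return {"attempts": state.get("attempts", 0) + 1}


def route_with_retry_limit(state: dict[str, Any]) -> str:
    if state.get("is_valid", False):
        if state.get("resolution_preference") == "refund":
            return "refund_flow"
        return "replacement_flow"
    if state.get("attempts", 0) >= MAX_ATTEMPTS:
        return "give_up"  # route to a node that records the failure and ends
    return "retry_input"


# Simulate an input that never becomes valid.
state: dict[str, Any] = {"is_valid": False}
labels = []
while True:
    state = {**state, **count_attempt(state)}
    label = route_with_retry_limit(state)
    labels.append(label)
    if label != "retry_input":
        break

print(labels)  # ['retry_input', 'retry_input', 'give_up']
```

The counter lives in state rather than in a local variable, so the stopping decision stays inspectable in the same way as every other routing decision.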

Observability: Visualizing and Inspecting State

One of the most practical advantages of modeling execution as a graph is observability.

Instead of guessing what happened inside a long prompt or opaque agent loop, you can inspect both:

- the structure of execution

- the evolution of state

Even at this early stage, the workflow is already inspectable.

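Once the graph is compiled, you can visualize its structure directly:

    from IPython.display import Image, display

    app = workflow.compile()

    # Render the compiled graph as a PNG (intended for a notebook environment).
    # Note: by default LangGraph renders this through the Mermaid.ink web API,
    # so network access is needed.
    png_bytes = app.get_graph().draw_mermaid_png()
    display(Image(png_bytes))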

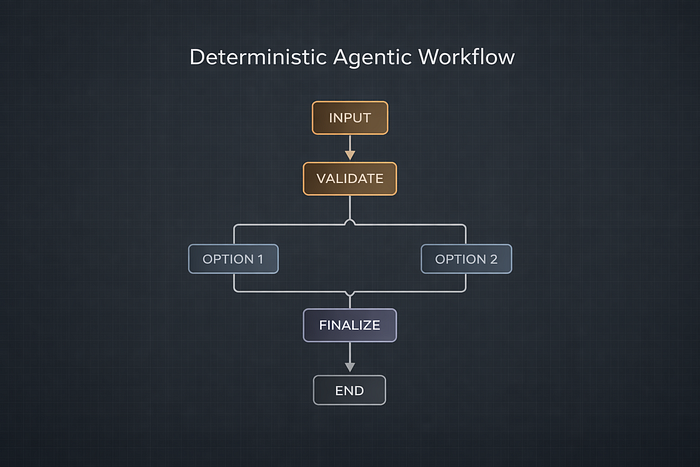

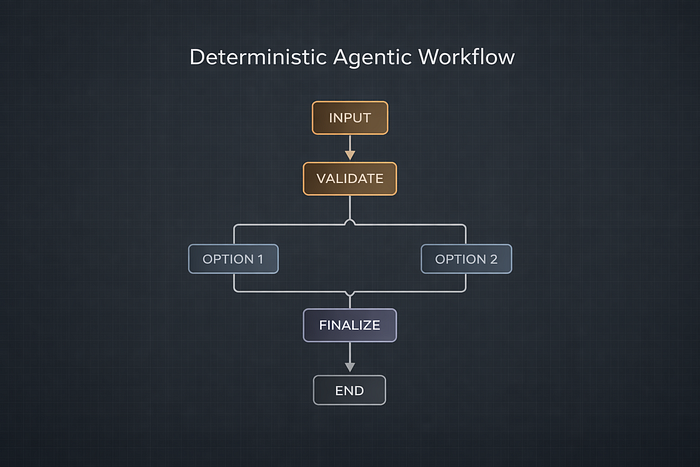

The resulting diagram makes the execution model tangible:

- where execution starts

- where it can branch

- where it loops

- where it terminates

As workflows grow more complex — with additional branches, retries, or human checkpoints — this kind of visibility becomes essential. It turns agentic behavior from something you hope works into something you can reason about.
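The diagram covers structure. For the evolution of state, one lightweight option is to wrap each node so that every update is recorded as it happens. The `traced` helper and the `TRACE` list below are hypothetical names, not part of LangGraph. A wrapped function can be registered in place of the original, for example `workflow.add_node("validate", traced("validate", validate_node))`.

```python
from typing import Any, Callable

State = dict[str, Any]
TRACE: list[tuple[str, State]] = []  # (node name, update returned by that node)


def traced(name: str, fn: Callable[[State], State]) -> Callable[[State], State]:
    """Wrap a node so each update it returns is appended to TRACE."""
    def wrapper(state: State) -> State:
        update = fn(state)
        TRACE.append((name, dict(update)))
        return update
    return wrapper


# Toy node used only to demonstrate the wrapper.
def mark_valid(state: State) -> State:
    return {"is_valid": bool(state.get("order_id"))}


node = traced("validate", mark_valid)
node({"order_id": "ORD-12345"})
node({})

for name, update in TRACE:
    print(name, update)
# validate {'is_valid': True}
# validate {'is_valid': False}
```

LangGraph also provides built-in streaming of intermediate results through `app.stream`. The wrapper is shown here only because it makes the mechanism visible in a few lines.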

What This Example Proves

At this point, the system we’ve built contains no language model at all.

And yet, it already behaves in a recognizably agentic way.

State persists across steps and across retries. Decisions are explicit, inspectable, and testable. The system adapts its next action based on evolving conditions, and execution paths can be visualized, traced, and debugged after the fact.

None of this comes from intelligence in the model.

It comes from structure in execution.

This is the critical takeaway.

Agentic behavior does not emerge when you add an LLM. It emerges when you make control flow, state, and decision boundaries explicit.

Once that structure exists, introducing a language model becomes an implementation choice — not the foundation of the system. A model can assist with reasoning, classification, or generation, but it operates inside a process that already knows how to continue, recover, and terminate.

Without that structure, an LLM can only approximate agency through probabilistic guesses. With it, even a deterministic workflow begins to look — and behave — like an agent.

Where the LLM Goes Later — and Why It Must Be Gated

At this point, we’ve intentionally built an agentic workflow without introducing a language model.

What this example demonstrates is that the hardest parts of agentic systems are not generative. They are structural: modeling state, making decisions explicit, handling retries, and controlling execution over time. Until those elements are in place, adding an LLM does not make a system more capable — it makes it less predictable.

Once structure exists, the role of the LLM becomes clearer.

A language model is not responsible for running the system.

It is responsible for assisting decisions inside a system that already knows how to continue, recover, and terminate.

In practice, this means LLMs should appear at specific, constrained points in the graph:

- as classifiers operating on well-defined inputs

- as planners that propose options, not actions

- as generators whose outputs are validated before execution continues

Crucially, they should not control execution flow directly.

This is why gating matters.

Without explicit decision boundaries, LLM outputs blur into execution logic. Retries become guesswork. Failures become opaque. Debugging turns into post-hoc storytelling. With gating, probabilistic reasoning is contained inside deterministic structure.
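A gate of this kind can be sketched without committing to any model provider. In the snippet below, `propose_issue_type` is a hypothetical stand-in for a model call (here a trivial keyword matcher), the `message` field is an assumed addition to the state, and `gated_classify_node` is an assumed node that accepts the proposal only if it is one of the allowed labels. Anything else is recorded as an error so that existing validation and routing can deal with it.

```python
from typing import Any

ALLOWED_ISSUES = ("damaged", "late", "wrong_item")


def propose_issue_type(message: str) -> str:
    """Stand-in for an LLM classifier; returns free text that may be wrong."""
    text = message.lower()
    if "broken" in text or "damaged" in text:
        return "damaged"
    if "late" in text or "still waiting" in text:
        return "late"
    return "not sure, maybe a billing problem?"


def gated_classify_node(state: dict[str, Any]) -> dict[str, Any]:
    proposal = propose_issue_type(state.get("message", "")).strip().lower()
    if proposal in ALLOWED_ISSUES:
        return {"issue_type": proposal}
    # Reject anything outside the contract instead of letting it steer execution.
    return {"issue_type": None,
            "errors": [f"unrecognised issue_type proposal: {proposal!r}"]}


accepted = gated_classify_node({"message": "My parcel arrived broken"})
rejected = gated_classify_node({"message": "I was charged twice"})
print(accepted)                                         # {'issue_type': 'damaged'}
print(rejected["issue_type"], len(rejected["errors"]))  # None 1
```

The model's output never reaches the router directly. It becomes a candidate value that either satisfies the schema or shows up in `errors`, which is the containment the paragraph above describes.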

That is the transition this series is building toward.

In the next article, we will reintroduce LLMs — but carefully. We will place them inside the graph, behind validation and approval steps, and examine how to design systems where language models inform decisions without silently taking control of them.

Structure first.

Reasoning second.

Execution always explicit.


Note: Article content contains the views of the contributing authors and not Towards AI.