The Hidden Assumptions Behind Linear Regression

Last Updated on January 26, 2026 by Editorial Team

Author(s): Samith Chimminiyan

Originally published on Towards AI.

Linear Regression is one of the first topics we encounter when we start learning Machine Learning. Because of that, many people treat it as something to quickly “get through” before moving on to more complex models.

Often, it is labeled as a basic model that we need to learn and then move beyond.

Is that a mistake?

Yes. Absolutely.

Because if you truly understand Linear Regression, you already understand the core of Machine Learning.

Why So?

To understand this properly, we need to step back and examine what Linear Regression really is, how it’s used in practice, and the intuition behind the mathematics.

What Linear Regression Really Is

By the textbook definition, Linear Regression is a supervised machine learning algorithm that predicts a continuous numerical value (e.g., price, age, temperature) by modeling a linear relationship between a dependent variable and one or more independent variables.

That definition is correct.

But it doesn’t tell us much.

At its core, Linear Regression is not about prediction first.

It’s about describing a relationship between variables in the simplest possible way.

Dependent and Independent Variables (Without the Jargon)

The variable we want to predict is called the dependent variable.

The variables we use to make that prediction are called independent variables.

In a house price prediction problem:

- The price of the house is the dependent variable

- Features like size, number of bedrooms, and location are independent variables

So far, nothing surprising.

But the real insight comes when we visualize the data.

What the Model Is Actually Trying to Do

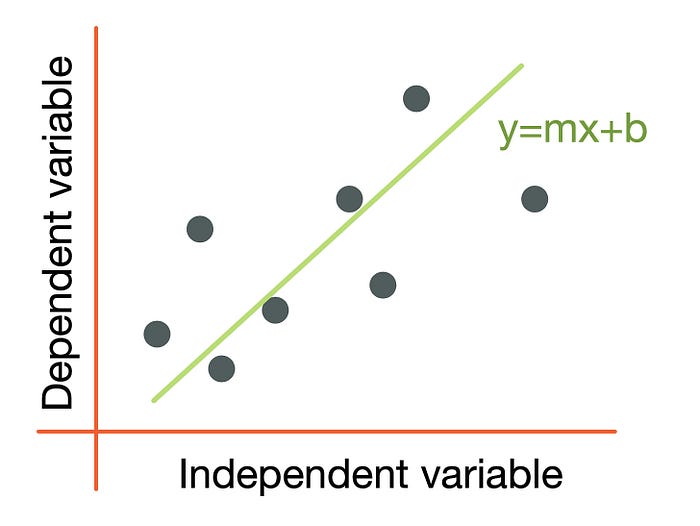

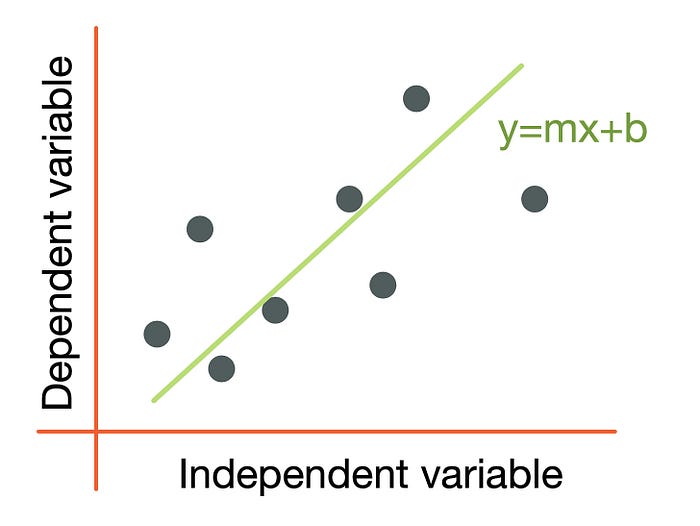

If we plot the data points on a scatter plot, Linear Regression tries to draw a straight line that best represents the overall trend in the data.

Not a perfect line.

Not a line that passes through every point.

A line that is “wrong in the least possible way.”

That immediately raises an important question:

How do we decide which line is “best”?

Can We Fit Any Line We Want?

No.

The line is not chosen by intuition or convenience.

It is chosen by error.

For every data point:

- The model makes a prediction

- We measure how far that prediction is from the actual value

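This difference is called the error (or residual).

When we calculate this error for every data point and combine the results, usually by squaring each error and adding them up so that positive and negative errors don’t cancel each other out, we get the total error of the model.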

Linear Regression simply asks:

Which line produces the smallest total error across all data points?

That’s it.

No magic.

No intelligence.

Just optimization.
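To make “the smallest total error” concrete, here is a minimal sketch on a handful of made-up points (the data and the two hand-picked candidate lines are hypothetical). It combines the errors as a sum of squared errors (minimizing this sum or its average, the MSE, gives the same line) and uses NumPy’s `np.polyfit` with `deg=1` to find the least-squares line.

```python
import numpy as np

# Hypothetical toy data: x could be house size, y the price
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y = np.array([2.1, 3.9, 6.2, 7.8, 10.1])

def total_error(m, b):
    """Sum of squared errors of the line y = m*x + b over all points."""
    errors = y - (m * x + b)      # one error per data point
    return np.sum(errors ** 2)    # combine them into a single number

# Two hand-picked candidate lines (hypothetical)
print("m=1.5, b=1.0:", total_error(1.5, 1.0))
print("m=2.5, b=0.0:", total_error(2.5, 0.0))

# The least-squares line: polyfit returns [slope, intercept] for deg=1
m_best, b_best = np.polyfit(x, y, deg=1)
print("best line:", m_best, b_best, total_error(m_best, b_best))
```

Any other slope and intercept you try will give a larger total than the `polyfit` line; that is all “best” means here.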

Where Linear Regression Came From (And Why That Matters)

Linear Regression is not a pure Machine Learning concept.

It was used in statistics long before Machine Learning existed, and that origin matters more than it sounds.

Originally Used to Explain, Not Predict

Its original purpose was to understand the relationships in the data, not just to maximize prediction accuracy.

Statisticians used Linear Regression to answer questions about their data, such as which variables are related and how strongly.

Linear Regression forces us to think about assumptions, uncertainty, and interpretability, concepts that often get ignored in modern machine learning.

Designed for a Simpler World

Linear Regression is a model that makes strong assumptions in exchange for clarity.

It was introduced as a simple, explainable, and mathematically tractable model for datasets that were small, noisy, and easy to collect.

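Because of this, the model assumes that relationships are stable, noise behaves nicely, and inputs don’t fight each other. In statistical terms: the relationship between inputs and output is linear, the errors are independent and have constant variance, and the features are not strongly correlated with each other (no severe multicollinearity). Powerful as it is, the model is only as trustworthy as these assumptions.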
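A quick way to see what “inputs fighting each other” (multicollinearity) does is a sketch on synthetic data; all numbers here are made up for illustration. When two features are near-copies of each other, the individual coefficients become unstable, while their combined effect is still estimated well.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200

# Hypothetical data: x2 is almost an exact copy of x1, so the inputs "fight"
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=1e-3, size=n)
y = 3.0 * x1 + rng.normal(scale=0.5, size=n)   # only x1 truly drives y

# Least-squares fit with an intercept column
X = np.column_stack([np.ones(n), x1, x2])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)

print("coefficients on x1, x2:", coef[1], coef[2])
print("their sum:", coef[1] + coef[2])
print("condition number of X:", np.linalg.cond(X))
```

With a different random seed, the two individual coefficients can swing wildly, yet their sum stays close to 3. A very large condition number is a common warning sign that this assumption is being violated.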

Why Linear Regression Still Matters in Modern ML

Nowadays we work with far more powerful models, so a natural question comes to everyone’s mind.

Why do we need Linear Regression? What does Linear Regression bring to the table that complex models can’t?

Linear Regression is still worth using because it’s an honest model.

A linear model:

- exposes data leakage quickly

- highlights weak or irrelevant features

- sets a performance baseline that keeps expectations realistic

If a complex model performs only marginally better than a linear one, that’s a signal, not a success.
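To illustrate two of the points above, highlighting irrelevant features and setting a baseline, here is a minimal sketch on synthetic data (the feature names and numbers are hypothetical). Both features are on the same scale, so their coefficients can be compared directly; with real data you would usually standardize the features first.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 500

# Hypothetical data: one useful feature and one pure-noise feature
useful = rng.normal(size=n)
irrelevant = rng.normal(size=n)
y = 2.0 * useful + 1.0 + rng.normal(scale=1.0, size=n)

X = np.column_stack([np.ones(n), useful, irrelevant])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
intercept, w_useful, w_irrelevant = coef
print(f"intercept={intercept:.3f}, useful={w_useful:.3f}, irrelevant={w_irrelevant:.3f}")

# Baseline score: R^2 compares the model against always predicting the mean
y_hat = X @ coef
r2 = 1 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)
print(f"R^2 = {r2:.3f}")
```

The noise feature’s weight lands near zero, and the R² gives a concrete number that any more complex model should clearly beat.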

Linear Regression also remains valuable when:

- interpretability matters more than raw accuracy

- the data is limited or noisy

- decisions need to be explained, not just optimized

In many real systems, a slightly less accurate but explainable model is the correct choice.

The Math — Only What You Need to Think Clearly

When we think about the math behind algorithms, we often picture big formulas and equations.

But for Linear Regression, most of that isn’t required. Linear Regression makes predictions using a weighted sum of the inputs, and those weights are adjusted to reduce the error.

The loss function matters most in Linear Regression. For every prediction, the model measures how wrong it is. Those errors are combined into a single number that represents how well the model is performing.

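Mathematically, the prediction for data point i (with p features, weights w and intercept b) and the loss over n data points, the Mean Squared Error (MSE), are:

$$\hat{y}_i = w_1 x_{i1} + w_2 x_{i2} + \dots + w_p x_{ip} + b$$

$$\text{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2$$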
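The same idea in code: a minimal sketch with made-up numbers that computes predictions as a weighted sum of the inputs plus an intercept, then combines the errors into the mean squared error (the loss whose gradient the gradient-descent code later in this article uses).

```python
import numpy as np

# Hypothetical inputs: 3 houses, 2 features each (e.g., size, bedrooms)
X = np.array([[1.0, 2.0],
              [3.0, 4.0],
              [5.0, 6.0]])
y = np.array([4.0, 6.0, 10.0])    # actual values

w = np.array([0.5, 1.0])          # one weight per feature
b = 1.0                           # intercept (bias)

y_pred = X @ w + b                # weighted sum of inputs, plus intercept
errors = y - y_pred               # how wrong each prediction is
mse = np.mean(errors ** 2)        # combine into a single number

print(y_pred)   # [3.5 6.5 9.5]
print(mse)      # 0.25
```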

Linear Regression From Scratch

Implementing Linear Regression from scratch helps you see what actually happens under the hood.

There are two main ways to do it:

Closed-Form Solution

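The simplest way is the closed-form solution, which calculates the best-fit coefficients directly with the normal equation from linear algebra, $\mathbf{A} = (X_b^\top X_b)^{-1} X_b^\top \mathbf{y}$, where $X_b$ is the feature matrix with a column of ones prepended for the intercept.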

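```python
import numpy as np

class LinearRegressionClosed:
    """Ordinary least squares fitted with the normal equation."""

    def __init__(self):
        self.coef_ = None       # one weight per feature
        self.intercept_ = None  # bias term

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        if X.ndim == 1:                  # accept a single feature as a 1-D array
            X = X.reshape(-1, 1)
        y = np.asarray(y, dtype=float)
        # Prepend a column of ones so the intercept is learned as an extra weight
        Xb = np.c_[np.ones((X.shape[0], 1)), X]
        # Solve (Xb^T Xb) A = Xb^T y; solve() is more stable than inverting the matrix
        A = np.linalg.solve(Xb.T @ Xb, Xb.T @ y)
        self.intercept_ = A[0]
        self.coef_ = A[1:]
        return self

    def predict(self, X):
        X = np.asarray(X, dtype=float)
        if X.ndim == 1:
            X = X.reshape(-1, 1)
        return X @ self.coef_ + self.intercept_
```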

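This method solves directly for the coefficients that minimize the squared error, with no iteration.

It’s fast and elegant for small datasets, but it doesn’t scale well: for n samples and p features, forming and solving the normal equation costs roughly O(np² + p³), and the matrix $X_b^\top X_b$ becomes numerically unstable when features are highly correlated.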

If you want to see this tested on real data, check my GitHub: linear-regression from scratch closed form
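One way to sanity-check the closed-form approach is to compare it with NumPy’s built-in least-squares solver on synthetic data where the true weights are known. This is a minimal sketch; the data and weights are invented for illustration. For badly conditioned problems, `np.linalg.lstsq` is the safer choice because it never forms `Xb.T @ Xb` explicitly.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical data: 100 samples, 3 features, known true weights and intercept 4.0
X = rng.normal(size=(100, 3))
true_w = np.array([1.5, -2.0, 0.5])
y = X @ true_w + 4.0 + rng.normal(scale=0.1, size=100)

# Closed form: solve the normal equation with an intercept column
Xb = np.c_[np.ones((X.shape[0], 1)), X]
A = np.linalg.solve(Xb.T @ Xb, Xb.T @ y)

# Cross-check against NumPy's least-squares solver
A_lstsq, *_ = np.linalg.lstsq(Xb, y, rcond=None)

print("normal equation:", A)
print("lstsq:          ", A_lstsq)
```

Both should print the same coefficients, close to `[4.0, 1.5, -2.0, 0.5]`.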

Gradient Descent

The other approach is iterative: gradient descent starts from a guess and gradually moves toward the best line.

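```python
import numpy as np
from sklearn.datasets import fetch_california_housing

# Assumption: the data is scikit-learn's California housing dataset, whose frame
# has 'MedInc' (median income) and 'MedHouseVal' (median house value) columns
df = fetch_california_housing(as_frame=True).frame

def gradient_descent(m_now, b_now, points, alpha):
    """One gradient-descent step on the MSE of the line y = m*x + b."""
    x = points['MedInc'].values
    y = points['MedHouseVal'].values
    n = len(x)
    y_pred = m_now * x + b_now
    # Partial derivatives of MSE = (1/n) * sum((y - y_pred)**2)
    m_gradient = -(2 / n) * np.sum(x * (y - y_pred))
    b_gradient = -(2 / n) * np.sum(y - y_pred)
    # Step against the gradient, scaled by the learning rate alpha
    m = m_now - alpha * m_gradient
    b = b_now - alpha * b_gradient
    return m, b

m, b = 0.0, 0.0   # initial guess
alpha = 0.01      # learning rate
epochs = 1000

for i in range(epochs):
    m, b = gradient_descent(m, b, df, alpha)
    if i % 100 == 0:
        print(f"Epoch {i}: m={m:.4f}, b={b:.4f}")

print("\nFinal values:")
print(f"m = {m}")
print(f"b = {b}")
```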

Gradient descent teaches you the process of learning:

start with a guess → measure error → adjust gradually until it fits

This is the same loop most modern Machine Learning models rely on, including deep neural networks.

If you want to see this tested on real data, check my GitHub: linear-regression-from-scratch-in-python
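To check that gradient descent lands on the same answer as the closed-form solution, here is a sketch on synthetic data; the data, learning rate, and iteration count are assumptions chosen so this toy problem converges. It applies the same update rule as the code above in a plain loop and compares the result with `np.polyfit`, which solves the least-squares problem directly.

```python
import numpy as np

rng = np.random.default_rng(7)

# Hypothetical single-feature data with true slope 2 and intercept 1
x = rng.uniform(0, 10, size=200)
y = 2.0 * x + 1.0 + rng.normal(scale=1.0, size=200)

m, b = 0.0, 0.0    # start with a guess
alpha = 0.01       # learning rate (assumed; small enough to be stable here)
for _ in range(5000):
    y_pred = m * x + b                                  # predict
    m_grad = -(2 / len(x)) * np.sum(x * (y - y_pred))   # measure error (MSE gradient)
    b_grad = -(2 / len(x)) * np.sum(y - y_pred)
    m -= alpha * m_grad                                 # adjust gradually
    b -= alpha * b_grad

# Direct least-squares answer for comparison: [slope, intercept]
m_exact, b_exact = np.polyfit(x, y, deg=1)
print(f"gradient descent: m={m:.6f}, b={b:.6f}")
print(f"closed form:      m={m_exact:.6f}, b={b_exact:.6f}")
```

If `alpha` is too large, the updates overshoot and diverge; if it is too small, many more iterations are needed. Scaling the features usually makes this choice easier.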

That’s a Wrap!

Linear Regression is not important because it’s simple. It’s important because it teaches you how models think, how data behaves, and where assumptions quietly shape results.

In this newsletter, I’ve tried to explain Linear Regression in my own way: why it’s useful, why it’s interesting, and why understanding it well changes the way you approach Machine Learning.

Once you see that, Machine Learning stops feeling like a collection of algorithms and starts feeling like a system you can reason about.

Resources & Further Reading

If you want to explore Linear Regression in more depth, here are some helpful resources:

Tutorials & Courses:

- Google ML Crash Course — Linear Regression — Great for intuition and interactive exercises.

- YouTube: Linear Regression Explained — Concise, visual explanation.

- Linear Regression (Wikipedia)

Research & Articles:

- Fisher, R.A. (1922). On the Interpretation of χ² from Contingency Tables, and the Calculation of P. A classic paper on the χ² test and its degrees of freedom, useful background on the statistical tradition that regression comes from.

- Seber, G.A.F., & Lee, A.J. (2012). Linear Regression Analysis. — Comprehensive reference for understanding assumptions and theory in regression.

Pro Tip:

Even if you don’t read the papers in full, skimming the introduction and conclusions can give you insights into why Linear Regression works the way it does and why it’s still relevant in modern ML.

Cheers,

Samith Chimminiyan


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.