Hierarchical Reasoning Models: When 27M Parameters Outperform Chain-of-Thought

Last Updated on January 26, 2026 by Editorial Team

Author(s): Kyouma45

Originally published on Towards AI.

Paper-explained Series 4

TL;DR

Most AI models “reason” by talking themselves through problems using chain-of-thought, which is slow, brittle, and expensive.

This article explains a different idea called the Hierarchical Reasoning Model (HRM).

Instead of reasoning in words, HRM thinks silently in layers, similar to how the human brain separates planning from execution. A slow, high-level module decides what to do, while a fast, low-level module figures out how to do it — repeating this cycle until the problem is solved.

Despite being much smaller and trained on very little data, HRM solves hard problems (like complex Sudoku, mazes, and abstract puzzles) that even large language models fail at.

The big takeaway: better reasoning doesn’t necessarily come from bigger models or longer explanations — sometimes it comes from better internal structure.

In this article, we will cover HRMs from A to Z…

link to original paper: https://arxiv.org/pdf/2506.21734

Core Philosophy and Architecture

HRM doesn’t reason by talking to itself — it reasons by thinking longer.

The Hierarchical Reasoning Model (HRM) is designed to overcome a core limitation of modern Transformers: fixed computational depth. No matter how long the input or how hard the task, a standard Transformer always performs the same number of layer-wise computations. This fundamentally limits its ability to carry out long-horizon reasoning, search, and backtracking.

HRM takes a different approach. Instead of increasing depth through more layers or longer chains of thought, it decouples computation time from architectural depth. This allows the model to think longer internally — inside its hidden states — before producing an output. This idea is known as latent reasoning: reasoning happens in continuous internal representations rather than explicit text tokens.

What HRM Actually Is (Architecturally)

Despite being conceptually different, HRM is still built from Transformer blocks:

- Both reasoning components are encoder-only Transformers

- They use full self-attention (not linear or causal attention)

- Modern enhancements are included: Rotary Positional Encoding, RMSNorm, gated feed-forward layers

- HRM is trained in a sequence-to-sequence setup, just like standard models

- It is not autoregressive in the usual sense — reasoning does not proceed token by token, but through recurrent state updates

So HRM does not replace Transformers — it reuses them inside a recurrent, hierarchical loop.

The Two-Module Hierarchical Structure:

HRM splits reasoning across two tightly coupled recurrent modules that operate at different timescales, mirroring how the brain separates high-level planning from low-level execution.

High-Level Module (zᴴ) — The Planner

- Updates slowly

- Responsible for abstract reasoning, strategy, and long-term planning

- Updates once per reasoning cycle

- Provides a stable, global context (high-dimensional latent state vector) that guides lower-level computation

You can think of this module as deciding what kind of solution strategy should be pursued next.

Low-Level Module (zᴸ) — The Executor

- Updates rapidly

- Handles detailed, local computation such as search, constraint propagation, or refinement

- Runs for T internal steps for every single update of the high-level module

- Performs intensive computation while the high-level state remains fixed

This is the part that does the “heavy lifting” within a given plan.

The Interaction Loop (How Reasoning Actually Happens)

HRM reasoning unfolds through repeated hierarchical cycles:

1. Input Encoding

The input x is mapped into a latent working representation (x̄) using an embedding network.

For example, it converts discrete tokens (or grid values like Sudoku cells) into continuous vectors.

Note: Uses full self-attention

2. Low-Level Computation (Inner Loop)

With the high-level state held constant, the low-level module iterates for T steps:

- It updates based on its previous state

- It attends to the input representation

- It is guided by the current high-level context

During these steps, the low-level module converges toward a local equilibrium, effectively performing search or refinement.

3. High-Level Update (Outer Loop)

After T steps, the high-level module:

- Observes the final low-level state

- Updates its own state to reflect progress

- Establishes a new global context

4. Reset and Restart

The low-level module is now exposed to a new high-level state.

This resets its convergence, allowing it to begin a fresh computational phase instead of stalling.

This process, called hierarchical convergence, allows HRM to perform deep, multi-stage reasoning while remaining stable and efficient.
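The cycle above can be sketched as two nested loops. This is only a toy illustration: `low_level_step` and `high_level_step` are hypothetical scalar stand-ins for the two Transformer modules, and the names `hrm_forward`, `n_cycles`, and `t_steps` are made up for this example rather than taken from the paper's code.

```python
import math

def low_level_step(z_l, z_h, x):
    # Hypothetical stand-in for the low-level module: it sees its own
    # previous state, the high-level context, and the encoded input.
    return math.tanh(0.5 * z_l + z_h + x)

def high_level_step(z_h, z_l):
    # Hypothetical stand-in for the high-level module: it sees its own
    # previous state and the final low-level state of the cycle.
    return math.tanh(0.5 * z_h + z_l)

def hrm_forward(x, n_cycles, t_steps):
    z_h, z_l = 0.0, 0.0
    low_level_calls = 0
    for _ in range(n_cycles):            # outer loop: one high-level update per cycle
        for _ in range(t_steps):         # inner loop: z_h is held constant
            z_l = low_level_step(z_l, z_h, x)
            low_level_calls += 1
        z_h = high_level_step(z_h, z_l)  # new global context for the next cycle
    return z_h, z_l, low_level_calls

z_h, z_l, calls = hrm_forward(x=0.3, n_cycles=4, t_steps=8)
print(calls)  # 4 cycles * 8 inner steps = 32 low-level updates
```

The point of the sketch is the effective depth: with 4 cycles of 8 steps, the low-level function is applied 32 times even though only two update functions exist.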

Why This Matters Compared to Transformers + CoT

- Transformers rely on depth in space (layers) → HRM uses depth in time

- Chain-of-thought externalizes reasoning into text → HRM keeps reasoning internal and continuous

- Standard RNNs converge too early → HRM avoids this via hierarchical resets

- Backpropagation Through Time is expensive → HRM uses a one-step gradient approximation with constant memory

The result is a model that can execute algorithmic reasoning, search, and backtracking — all without generating intermediate reasoning text.

Mathematical Foundations

HRM’s ability to reason deeply without exploding memory or training instability comes from two tightly connected mathematical ideas:

(1) how computation unfolds over time and

(2) how gradients are computed without backpropagating through that entire history.

I. Hierarchical Convergence

A core problem with standard recurrent models is premature convergence.

In a typical RNN, repeated application of the same update function causes the hidden state to quickly settle into a fixed point. Once this happens, updates become negligibly small, effectively halting computation — the model stops “thinking,” even if more steps are allowed.

Note: Premature convergence is different from the over-smoothing problem, which RNNs can also face.

HRM avoids this failure mode through hierarchical convergence.

How it works:

- During a reasoning cycle, the low-level module (L-module) repeatedly applies the same update function while the high-level state is held fixed.

- Under these conditions, the L-module naturally converges toward a local fixed point z_L^*, representing a locally consistent partial solution under the current strategy.

- Crucially, once the cycle ends, the high-level module updates its state, changing the global context.

- This update reshapes the solution landscape, meaning the old fixed point is no longer valid.

- As a result, the low-level module is forced to diverge and recompute, beginning a new convergence process toward a different equilibrium.

This alternating pattern of local convergence followed by deliberate disruption allows HRM to sustain long-running, meaningful computation instead of collapsing early like standard RNNs.
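To make the pattern concrete, here is a small sketch that tracks how much the low-level state changes at each step. The linear updates are assumptions chosen only so the numbers are easy to follow; they are not the paper's update functions.

```python
def run_cycles(n_cycles, t_steps):
    z_h, z_l = 1.0, 0.0
    residuals = []  # size of each low-level update, |z_l_new - z_l|
    for _ in range(n_cycles):
        for _ in range(t_steps):
            # Toy contraction: with z_h fixed, z_l converges to 2 * z_h.
            new_z_l = 0.5 * z_l + z_h
            residuals.append(abs(new_z_l - z_l))
            z_l = new_z_l
        # Toy high-level update: changing z_h moves the fixed point,
        # so the next inner loop has real work to do again.
        z_h = 0.5 * z_l + 1.0
    return residuals

residuals = run_cycles(n_cycles=2, t_steps=8)
print(residuals[0], residuals[7], residuals[8])
```

Within the first cycle the update size shrinks steadily (the low-level state is settling), and at the first step of the second cycle it jumps back up because the high-level state has changed.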

II. Fixed Point Theorem & Implicit Function Theorem (IFT)

Unrolling recurrent computation across many timesteps (the same layers taking their own output as input for N steps) usually requires Backpropagation Through Time (BPTT) for training.

Backpropagation Through Time (BPTT) is the standard algorithm used to train Recurrent Neural Networks (RNNs).

The Process:

- Forward Pass (Thinking): The input goes into Copy 1, which passes its state to Copy 2, and so on, until the end. You must save the state of every single copy in memory.

- Calculate Error: At the end of the sequence (or at each step), you compare the output to the target to find the error.

- Backward Pass (Learning): You calculate the gradient (the necessary correction) starting from the last step and moving backward to the first. The error signal flows "through time" from T -> 0.

BPTT stores all intermediate states, leading to:

- Memory usage that grows linearly with the number of steps, O(T)

- Poor scalability

- Biological implausibility
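The memory cost is easy to see in a hand-written version of BPTT. The sketch below assumes a toy scalar RNN, z_t = tanh(w * z_(t-1) + x), with the final state used directly as the loss; the function names are hypothetical.

```python
import math

def rnn_final_state(w, x, steps):
    # Plain forward pass that keeps only the latest state.
    z = 0.0
    for _ in range(steps):
        z = math.tanh(w * z + x)
    return z

def bptt_grad(w, x, steps):
    # Forward pass: every intermediate state must be stored.
    states = [0.0]
    for _ in range(steps):
        states.append(math.tanh(w * states[-1] + x))
    # Backward pass: walk from the last step to the first.
    grad_w = 0.0
    grad_z = 1.0  # d(loss)/d(z_T), with loss = z_T
    for t in range(steps, 0, -1):
        local = 1.0 - states[t] ** 2              # derivative of tanh at step t
        grad_w += grad_z * local * states[t - 1]  # direct dependence on w
        grad_z *= local * w                       # pass the signal to step t-1
    return grad_w, len(states)

grad, stored = bptt_grad(w=0.5, x=0.3, steps=20)
print(stored)  # 21 stored states for 20 steps
```

Doubling the number of steps doubles the number of stored states, which is the O(T) growth mentioned above.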

HRM avoids this by reframing the problem.

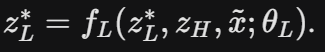

Fixed-point assumption: During each cycle, the paper assumes the low-level module approximately satisfies:

z_L^* = f_L(z_L^*, z_H, x̄)

where f_L denotes the low-level update function. That is, the low-level state reaches a fixed point of its update function under a fixed high-level context.

This fixed point is the same converged state we discussed earlier under premature convergence.

Why this matters?

If the final state is defined implicitly by this equation, then its dependence on the model parameters can be computed without unrolling the entire trajectory.

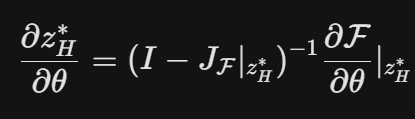

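Using the Implicit Function Theorem, the gradient of a fixed point z^* = F(z^*; θ) with respect to the parameters θ can be expressed as:

∂z^*/∂θ = (I − J_F)^(-1) ∂F/∂θ, with both terms evaluated at z^*

where: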

- F is the update mapping

- J_F is the Jacobian of F with respect to the hidden state

The Implicit Function Theorem shows that if you are at a stable fixed point, you don't need the history to calculate the gradient. You can calculate the update using only the fixed point z^*.

This provides a mathematically principled way to compute gradients without BPTT.
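A scalar example makes this tangible. The sketch below assumes a toy update F(z; w) = tanh(w * z + x), solves for its fixed point, and computes the gradient from the fixed point alone. It also shows a one-step variant that replaces (I − J_F)^(-1) with the identity, which is how I read the constant-memory approximation; treat that reading, and all function names, as assumptions.

```python
import math

def solve_fixed_point(w, x, iters=200):
    # Iterate z <- tanh(w * z + x) until it settles (a contraction for |w| < 1).
    z = 0.0
    for _ in range(iters):
        z = math.tanh(w * z + x)
    return z

def ift_grad(w, x):
    z = solve_fixed_point(w, x)
    slope = 1.0 - z ** 2           # derivative of tanh at the fixed point
    jac = slope * w                # J_F: dF/dz at z*
    df_dw = slope * z              # dF/dw at z*
    exact = df_dw / (1.0 - jac)    # scalar form of (I - J_F)^(-1) dF/dw
    one_step = df_dw               # approximation: (I - J_F)^(-1) replaced by I
    return exact, one_step

exact, one_step = ift_grad(w=0.5, x=0.3)
print(exact, one_step)
```

No trajectory is stored here: the gradient uses only z^*. The one-step value has the same sign as the exact one but a smaller magnitude, so it is a cheaper, approximate update direction rather than the true gradient.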

III. Deep Supervision

Deep supervision plays a crucial role in making HRM trainable at large reasoning depths. Instead of waiting until the very end of a long reasoning process to compute gradients, HRM introduces periodic, structured learning checkpoints during the forward computation.

This addresses two core issues:

- Vanishing gradients in deep or recurrent computation

- Unstable learning when reasoning spans many internal steps

Deep supervision turns long reasoning into a sequence of short learning episodes, each nudging the model in the right direction without forcing it to remember every step.

A. Segmented Reasoning

Rather than performing a single extremely long forward pass, HRM breaks computation into segments.

Each segment consists of a full HRM reasoning cycle:

- Multiple low-level updates

- One or more high-level updates

- The output of each segment becomes the starting state for the next

This allows the model to reason progressively while exposing intermediate internal states to learning.

B. Intermediate Supervision

After each segment m:

- The model produces an intermediate prediction y^(m)

- A loss L^(m) = LOSS(y^(m), y) is computed against the ground-truth target

These intermediate losses act as dense feedback signals, nudging the model toward useful representations even if the final solution has not yet fully emerged.

Importantly, the intermediate outputs are not required to be fully correct — they serve as directional guidance rather than strict checkpoints.

C. State Detachment (The Critical Step)

Before the next segment begins, the hidden state z^(m) is explicitly detached from the computation graph.

This means:

- Gradients from segment m+1 cannot flow backward into segment m

- Each segment is treated as a locally independent optimization step

From a mathematical standpoint, this creates a 1-step approximation of what would otherwise be a deeply recursive gradient flow.
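Segments, per-segment losses, and detachment fit together roughly as in the sketch below. It assumes a toy scalar model with a single weight `w` and a squared-error loss, and it imitates detachment by treating the incoming state as a constant when differentiating. In a framework such as PyTorch this would correspond to calling `.detach()` on the carried state. All names here are hypothetical.

```python
import math

def train_deep_supervision(x, y, segments, epochs, lr):
    w = 0.0
    for _ in range(epochs):
        z = 0.0
        for _ in range(segments):
            z_prev = z  # "detached": treated as a constant below
            z = math.tanh(w * z_prev + x)   # one segment of computation
            # Gradient of the segment loss (z - y)^2 w.r.t. w, ignoring
            # how z_prev itself depended on w in earlier segments.
            grad_w = 2.0 * (z - y) * (1.0 - z ** 2) * z_prev
            w -= lr * grad_w                # one optimizer step per segment
    return w

def final_segment_loss(w, x, y, segments):
    z = 0.0
    for _ in range(segments):
        z = math.tanh(w * z + x)
    return (z - y) ** 2

w_trained = train_deep_supervision(x=0.5, y=0.8, segments=4, epochs=300, lr=0.2)
print(final_segment_loss(0.0, 0.5, 0.8, 4), final_segment_loss(w_trained, 0.5, 0.8, 4))
```

Each segment gets its own loss and its own update, and nothing from earlier segments is kept for the backward pass, so memory stays constant no matter how many segments are run.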

IV. Adaptive Computation Time (ACT)

Not all problems require the same amount of reasoning. Some can be solved almost instantly, while others demand prolonged deliberation. HRM incorporates Adaptive Computation Time (ACT) to dynamically allocate computation based on task difficulty — mirroring the brain’s distinction between fast, automatic reasoning (System 1) and slow, deliberate thinking (System 2).

Rather than fixing the number of reasoning cycles, HRM learns when to stop thinking.

A. The Halting Mechanism

At the end of each reasoning segment m, HRM evaluates whether further computation is beneficial.

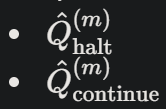

Q-Head

A dedicated Q-head reads the current high-level latent state z_H^(m)

It outputs two values: Q_halt and Q_continue.

These values estimate the expected utility of stopping now versus reasoning longer.

Importantly, the Q-head does not operate on outputs or tokens — it reasons purely over the internal planning state.

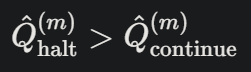

B. Decision Logic (When to Stop)

The halting decision follows simple but carefully designed rules:

The model halts if either:

- The number of segments exceeds a hard cap Mmax, or

- The predicted value of halting (Q_halt) exceeds the predicted value of continuing (Q_continue), and the model has already completed at least Mmin segments

To encourage exploration, Mmin is randomized during training with a small probability, nudging the model to occasionally “think longer” even when early stopping seems attractive.
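The decision rule is small enough to write out directly. In this sketch, `should_halt` follows the two conditions above, treating "exceeds the cap" as "has reached the cap". The way `sample_m_min` randomizes Mmin (with probability `epsilon`, draw uniformly from 2..Mmax, otherwise use 1) is my assumption about one reasonable scheme, and both function names are hypothetical.

```python
import random

def sample_m_min(m_max, epsilon, rng):
    # Assumed scheme: occasionally force a longer minimum thinking time.
    if rng.random() < epsilon:
        return rng.randint(2, m_max)  # inclusive on both ends
    return 1

def should_halt(q_halt, q_continue, segment, m_min, m_max):
    # Hard cap: always stop once the segment budget is used up.
    if segment >= m_max:
        return True
    # Otherwise stop only if halting looks better AND the minimum is met.
    return segment >= m_min and q_halt > q_continue

rng = random.Random(0)
m_min = sample_m_min(m_max=8, epsilon=0.1, rng=rng)
print(should_halt(q_halt=0.9, q_continue=0.1, segment=1, m_min=3, m_max=8))  # False
```

In the printed case the Q-head already prefers halting, but the sampled minimum of 3 segments has not been reached, so the model keeps going.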

C. Training the Halting Policy (Q-Learning)

The Q-head is trained using a lightweight Q-learning objective, integrated directly into HRM’s supervised training loop.

Reward Structure:

- Halt action → Reward = 1 if the prediction is correct, 0 otherwise

- Continue action → Reward = 0, and the episode proceeds to the next segment

This framing teaches the model a simple but powerful trade-off: Is one more round of thinking likely to improve the final answer?
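Under this reward structure, the regression targets for the two Q-values can be sketched as follows. This is standard undiscounted Q-learning bootstrapping applied to the rewards listed above. The handling of the last allowed segment (where halting is the only remaining action) is an assumption, and `q_targets` is a hypothetical name.

```python
def q_targets(prediction_correct, next_q_halt, next_q_continue, is_last_segment):
    # Halting ends the episode, so its target is just the reward.
    target_halt = 1.0 if prediction_correct else 0.0
    # Continuing earns reward 0 now; its value is whatever the best
    # action in the next segment is estimated to be worth.
    if is_last_segment:
        target_continue = next_q_halt  # assumed: only halting is possible next
    else:
        target_continue = max(next_q_halt, next_q_continue)
    return target_halt, target_continue

print(q_targets(False, next_q_halt=0.7, next_q_continue=0.4, is_last_segment=False))
# (0.0, 0.7)
```

In the example, the current prediction is wrong, so halting is worth 0, while continuing is worth 0.7 because the next segment is expected to do better.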

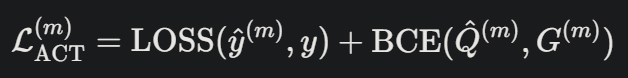

D. Joint Loss Function

At each segment, HRM minimizes a combined objective:

L_total^(m) = LOSS(y^(m), y) + L_halt^(m)

Where:

- The first term enforces task correctness

- The second trains the halting decision

This joint loss aligns accuracy and efficiency, rather than optimizing them separately.
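As a rough numerical sketch of the combined objective: the task loss is passed in as a number, and the halting term is assumed to be a binary cross-entropy between the predicted Q-values and their targets. That choice of halting loss, and the names `binary_cross_entropy` and `joint_loss`, are assumptions for illustration.

```python
import math

def binary_cross_entropy(pred, target, eps=1e-12):
    # Clamp to avoid log(0) for predictions at exactly 0 or 1.
    pred = min(max(pred, eps), 1.0 - eps)
    return -(target * math.log(pred) + (1.0 - target) * math.log(1.0 - pred))

def joint_loss(task_loss, q_pred, q_target):
    # q_pred and q_target are (halt, continue) pairs with values in [0, 1].
    halting_loss = sum(binary_cross_entropy(p, t) for p, t in zip(q_pred, q_target))
    return task_loss + halting_loss

loss = joint_loss(0.25, q_pred=(0.5, 0.5), q_target=(1.0, 0.0))
print(loss)  # 0.25 + 2 * ln(2), roughly 1.636
```

Because both terms are summed into one scalar per segment, a single backward pass updates the task output and the Q-head together.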

E. Inference-Time Scaling (A Hidden Superpower)

A remarkable consequence of ACT is inference-time scaling.

- A model trained with a small Mmax can be run with a larger Mmax at inference

- This allows the model to use extra computation without retraining

Empirically:

- Hard Sudoku instances benefit significantly from more compute

- ARC tasks saturate quickly, indicating true adaptivity rather than blind looping

Adaptive Computation Time enables HRM to dynamically trade computation for accuracy, allowing the model to stop reasoning as soon as additional thinking is unlikely to improve the final answer.
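The effect of raising Mmax at inference can be imitated with a toy loop. Here each segment is assumed to halve the remaining error, and "error below a tolerance" stands in for the learned halting signal. Neither assumption comes from the paper, and `run_adaptive` is a hypothetical name.

```python
def run_adaptive(initial_error, m_max, tolerance=0.01):
    error = initial_error
    for segment in range(1, m_max + 1):
        error *= 0.5              # toy model: one segment halves the error
        if error < tolerance:     # stand-in for Q_halt > Q_continue
            break
    return segment, error

print(run_adaptive(0.03, m_max=4), run_adaptive(0.03, m_max=16))  # easy input
print(run_adaptive(1.0, m_max=4), run_adaptive(1.0, m_max=16))    # hard input
```

The easy input stops after 2 segments under either cap, while the hard input is cut off at 4 segments under the small cap and runs to 7 under the larger one, ending with a lower error.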

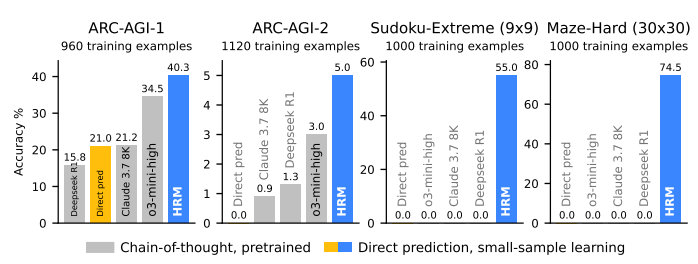

Performance and Benchmarks

HRM is evaluated on reasoning-heavy benchmarks explicitly chosen to stress long-horizon planning, search, and abstraction — areas where standard Transformers and chain-of-thought (CoT) models struggle.

Benchmarks Used:

- ARC-AGI (v1 & v2)

Measures abstract, inductive reasoning using grid-based puzzles that require discovering unseen rules from very few examples.

- Sudoku-Extreme

A deliberately hard dataset requiring deep search and backtracking, far beyond standard Sudoku benchmarks.

- Maze-Hard (30×30)

Optimal pathfinding in large mazes, testing long-range planning and algorithmic reasoning.

Key Results:

Across all benchmarks, HRM demonstrates strong performance with minimal data and compute:

- Only ~27M parameters, trained from scratch

- ~1,000 training examples per task

- No pretraining

- No chain-of-thought supervision

- No long context windows

Despite these constraints:

- HRM outperforms much larger CoT-based models on ARC-AGI

- Solves Sudoku-Extreme and Maze-Hard, where leading LLMs achieve near-zero accuracy

- Benefits from inference-time scaling: allowing the model to “think longer” improves results without retraining

A massive congratulations is in order for the research team at Sapient Intelligence and Tsinghua University — specifically Guan Wang, Jin Li, Yuhao Sun, Xing Chen, Changling Liu, Yue Wu, Meng Lu, Sen Song, and Yasin Abbasi Yadkori.

Their work on the Hierarchical Reasoning Model (HRM) represents a bold and elegant step away from the brute-force “scaling laws” that dominate current AI. By taking inspiration from the hierarchical and multi-timescale processing of the human brain, they have demonstrated that, on these benchmarks, depth of thought can matter more than sheer size.

Achieving state-of-the-art results on the ARC-AGI, Sudoku-Extreme, and Maze-Hard benchmarks with a model of only 27 million parameters — trained on just 1,000 examples — is a staggering achievement in data efficiency and architectural design. They have effectively shown that we can build “System 2” reasoning capabilities without needing trillion-parameter giants, paving the way for more accessible and biologically plausible General Artificial Intelligence.

Coming Next: Tiny Recursive Models (TRM)

In our next deep dive, we will shift gears to explore an even more advanced concept that pushes the boundaries of efficiency further: Tiny Recursive Models (TRM) from Alexia Jolicoeur-Martineau.

We will discuss how TRM builds upon these recursive principles to achieve powerful reasoning with even fewer parameters, potentially challenging everything we know about the “minimum viable size” for intelligence. Stay tuned!

Until next time folks…

El Psy Congroo


Note: Article content contains the views of the contributing authors and not Towards AI.