Solving Deepfakes with Traces, Frequency, and Attention!

Last Updated on September 4, 2025 by Editorial Team

Author(s): Shreyash Pawar

Originally published on Towards AI.

Introduction

Think of videos where world leaders say wild things they never actually said, or photos so altered you question reality itself. As these fakes get scarily realistic, spotting them becomes crucial to fight misinformation and protect trust in what we see online.

Deepfakes are essentially AI-generated images or videos in which faces are altered to look authentic, often powered by Generative Adversarial Networks (GANs). GANs pit two neural networks against each other: a generator that creates fakes and a discriminator that judges them, resulting in outputs that can deceive even experts. In this blog, I’ll walk you through my dive into deepfake detection, focusing on clever tricks like extracting hidden traces, tapping into frequency domain secrets, and using attention mechanisms to zero in on the sneaky stuff.

What can you expect from this blog? First, we will discuss some earlier work in simple, digestible chunks, a simplified translation of the research, and then build our own model from each of the critical pieces. You can treat it as a methodology for approaching deepfakes, a cool project to implement and iterate on, or just a chill read 🙂 We’ll explore the models we built and tested, share some wins and lessons, and hopefully inspire you to tinker with this tech too. Whether you’re new to AI or a seasoned coder, let’s unmask these digital illusions together!

Our Story

We explored ways to tackle deepfake detection and implemented various techniques in search of the holy grail. Here’s what we found: detection relies on spotting clues. Spatial analysis checks pixel patterns, frequency-domain analysis (via transforms like the DCT) reveals hidden signals from compression or morphing, and traces highlight tampering footprints. Tools like attention mechanisms then amplify the important bits, making models smarter at separating real from fake. This foundation sets the stage for building robust detectors, blending forensics with modern AI to stay one step ahead.

The Traces: Understanding AMTENnet

Can AI spot what the human eye can’t?

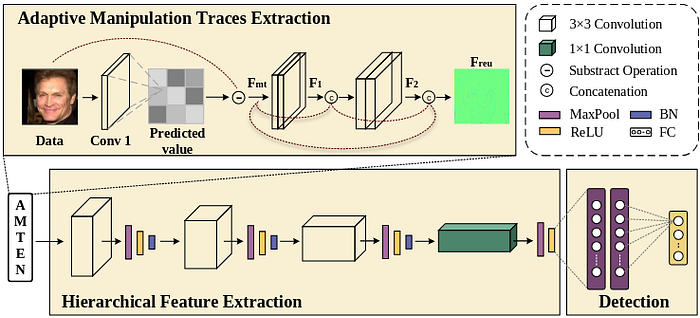

In the world of deepfakes, the answer is yes. A key component of our project is the Adaptive Manipulation Traces Extraction Network, or AMTEN. Drawing inspiration from MISLnet, this model is crafted to uncover subtle traces of manipulation in images, details often invisible to the human eye.

The Trace Extractor

The AMTEN module is inspired by MISLnet, which places a constrained convolution layer at the start of the network. That layer is not allowed to learn freely; a restrictive constraint on its weights helps the initial layers extract subtle low-level artifacts.

But is this the best way to capture these delicate traces? In MISLnet, the constrained convolution layer resets its learnable weights after a set number of iterations — but is that ideal? Another question pops up: is feeding these fragile traces into a standard feed-forward network the smartest move, when they could easily fade away across multiple layers? These are the challenges AMTENnet tackles head-on.

How AMTENnet Works

The brilliance of AMTENnet lies in its straightforward yet effective approach: a convolutional layer predicts what the image should look like, and the difference between that prediction and the actual image reveals the manipulation traces. Here’s the formula behind it:

F_mt = Conv1(x) - x

Here x is the input image and Conv1 is the first convolutional layer, which matches the line `fmt = pred - x` in the code further down.

These traces are called F_mt (manipulation traces), and the weights of these layers are fine-tuned using stochastic gradient descent (SGD). I won’t dive into the nitty-gritty here. If you’re curious, check out the original paper or my GitHub page for the PyTorch implementation details.

Now for the problem of fragile traces: we don’t just process them and move on. Instead, we borrow an idea from DenseNet. Each block’s input is concatenated with its output and passed along, which doubles the number of feature maps and therefore the channels the next layer has to handle. This way, earlier features stick around and get reused.

We also stack two convolutional layers as a composite function and skip pooling layers entirely in this part to preserve every bit of information. Why, you ask? Pooling takes the max or average over a patch, which is usually an excellent way to keep the important information while reducing data. Here, though, the traces are weak, so pooling is avoided at this stage, but you’ll see it later 😉.

Fake face detection via adaptive manipulation traces extraction network

Inside AMTENnet’s Architecture

In our project, AMTENnet was a vital piece of our final system (more on that later). For our purposes, I re-implemented the original AMTENnet, written in the Caffe framework, in PyTorch, since our team used PyTorch for everything. If you’d rather explore the authors’ version, their GitHub link is below.

The name “AMTENnet” blends “AMTEN” (the trace extraction module) with “CNN” (convolutional neural network). After extracting low-level traces, we transform them into high-level insights using a hierarchical feature extraction network. This includes convolutional layers, four max-pooling layers (MaxPool), four ReLU activation functions (ReLU), and three batch normalization (BN) layers. This feeds into a classification network with a 300-neuron fully connected layer, narrowing down to a final output for real-or-fake classification.

We also performed a trace pattern analysis using Grad-CAM at conv6, as the traces from Freu were vividly visible there. The results were pretty clear:

The class activation maps show that GAN-generated images have GAN artifacts dispersed throughout the image, while in real images these traces are minimal.
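For readers who have not used Grad-CAM before, here is a minimal sketch of the idea: weight each feature map of a chosen layer by the spatially averaged gradient of the class score, sum the weighted maps, and apply ReLU. This is an illustration under assumptions, not our exact analysis script. The function name `grad_cam` and the toy `model` are hypothetical; for AMTENNet you would pass `model.conv6` as the layer.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def grad_cam(model, layer, x, class_idx):
    """Return a (N, 1, H, W) heatmap in [0, 1] for `class_idx` (hypothetical helper)."""
    store = {}
    # Capture the activation of the chosen layer during the forward pass.
    handle = layer.register_forward_hook(lambda m, inp, out: store.update(act=out))
    try:
        logits = model(x)
    finally:
        handle.remove()
    score = logits[:, class_idx].sum()
    # Gradient of the class score with respect to the captured activation.
    grads = torch.autograd.grad(score, store["act"])[0]
    weights = grads.mean(dim=(2, 3), keepdim=True)        # one weight per channel
    cam = F.relu((weights * store["act"]).sum(dim=1, keepdim=True))
    cam = F.interpolate(cam, size=x.shape[-2:], mode="bilinear", align_corners=False)
    cam = cam / (cam.amax(dim=(2, 3), keepdim=True) + 1e-8)  # scale to [0, 1]
    return cam.detach()


# Toy stand-in model, only to make the sketch runnable.
torch.manual_seed(0)
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(8, 2),
).eval()

image = torch.randn(1, 3, 32, 32)
cam = grad_cam(model, model[0], image, class_idx=1)
print(cam.shape)
```

The heatmap can then be overlaid on the input image to see which regions drive the "fake" score.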

Here’s the code snippet for AMTENnet in PyTorch:

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class AMTENNet(nn.Module):
    def __init__(self):
        super().__init__()
        # Conv1: predict pixel values (3 in -> 3 out)
        self.conv1 = nn.Conv2d(3, 3, kernel_size=3, stride=1, padding=1)

        # Composite Conv2 + Conv3: F1
        self.conv2 = nn.Conv2d(3, 3, kernel_size=3, stride=1, padding=1)
        self.bn2 = nn.BatchNorm2d(3)
        self.conv3 = nn.Conv2d(3, 3, kernel_size=3, stride=1, padding=1)
        self.bn3 = nn.BatchNorm2d(3)

        # Composite Conv4 + Conv5: F2
        self.conv4 = nn.Conv2d(6, 6, kernel_size=3, stride=1, padding=1)
        self.bn4 = nn.BatchNorm2d(6)
        self.conv5 = nn.Conv2d(6, 6, kernel_size=3, stride=1, padding=1)
        self.bn5 = nn.BatchNorm2d(6)

        # HFE: Conv6 -> Conv7 -> Conv8 -> Conv9
        self.conv6 = nn.Conv2d(12, 24, kernel_size=3, stride=1, padding=1)
        self.bn6 = nn.BatchNorm2d(24)
        self.conv7 = nn.Conv2d(24, 48, kernel_size=3, stride=1, padding=1)
        self.bn7 = nn.BatchNorm2d(48)
        self.conv8 = nn.Conv2d(48, 64, kernel_size=3, stride=1, padding=1)
        self.bn8 = nn.BatchNorm2d(64)
        self.conv9 = nn.Conv2d(64, 128, kernel_size=1, stride=1)  # No BN

        # Classification head. The 7 * 7 below assumes the four poolings
        # leave a 7x7 map, which is what a 128x128 input produces.
        self.fc1 = nn.Linear(128 * 7 * 7, 300)
        self.fc2 = nn.Linear(300, 300)
        self.fc3 = nn.Linear(300, 2)  # Output logits for 2 classes
        self.pool = nn.MaxPool2d(kernel_size=3, stride=2)

    def forward(self, x, return_features=False):
        pred = self.conv1(x)
        fmt = pred - x  # Residual (manipulation traces)

        # Dense-style reuse: each block's input is concatenated with its output.
        f1_inter = F.relu(self.bn2(self.conv2(fmt)))
        f1 = F.relu(self.bn3(self.conv3(f1_inter)))
        f1_concat = torch.cat([f1, fmt], dim=1)      # 3 + 3 = 6 channels

        f2_inter = F.relu(self.bn4(self.conv4(f1_concat)))
        f2 = F.relu(self.bn5(self.conv5(f2_inter)))
        freu = torch.cat([f2, f1_concat], dim=1)     # 6 + 6 = 12 channels

        # HFE: conv -> pool -> ReLU -> BN
        out = self.bn6(F.relu(self.pool(self.conv6(freu))))
        out = self.bn7(F.relu(self.pool(self.conv7(out))))
        out = self.bn8(F.relu(self.pool(self.conv8(out))))
        out = F.relu(self.pool(self.conv9(out)))     # Conv9 has no BN

        out = out.view(out.size(0), -1)
        out = F.relu(self.fc1(out))
        out = F.relu(self.fc2(out))
        logits = self.fc3(out)

        if return_features:
            return logits, fmt, f1, f2, freu
        return logits
```
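A quick sanity check on the `128 * 7 * 7` in the first fully connected layer: the sketch below traces the spatial size through the four `MaxPool2d(kernel_size=3, stride=2)` calls, assuming a 128×128 input (the input size is an assumption here, and `pool_out` is a hypothetical helper). The 3×3 convolutions with padding 1 and the 1×1 convolution do not change the spatial size, so only the pooling matters.

```python
def pool_out(size, kernel=3, stride=2, padding=0):
    """Output size of a pooling layer along one dimension (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 128            # assumed input height/width
sizes = [size]
for _ in range(4):    # four max-pooling steps in the HFE part
    size = pool_out(size)
    sizes.append(size)

flat_features = 128 * size * size   # 128 channels after conv9
print(sizes)          # [128, 63, 31, 15, 7]
print(flat_features)  # 6272
```

If you feed a different input resolution, the final map is no longer 7×7 and `fc1` has to be resized accordingly.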

MCNet and VANet

The next paper we drew inspiration from is “Manipulation Classification for JPEG Images Using Multi-Domain Features.” The authors’ key insight stems from JPEG’s use of Discrete Cosine Transform (DCT) for compression. But DCT isn’t just for shrinking files; it also helps us see an image’s frequency domain version, revealing hidden patterns.

When we replicated and trained the original MCNet, Grad-CAM visualizations showed the frequency learner outperforming expectations, even on localized morphing — like subtle edits in deepfakes.

The compression network, however, fell flat, producing nearly uniform gradient maps for any image. Our hunch was confirmed when accuracy comparisons revealed MCNet was likely overfitting: as you’ll see in the accuracy table later, VANet (essentially MCNet minus the compression part) performed better. This could tie back to the original MCNet being trained on the ALASKA steganalysis dataset (over 400k images, not tailored for faces), even though transfer learning yielded okay results. Beyond ditching the compression net, we slimmed down the layers in both the spatial and frequency networks, which likely cut model complexity and boosted efficiency.

Now, let’s break down the original MCNet architecture and our tweaks.

First, the input formats. For the spatial domain learner, we fed in raw RGB pixel data. We’ll skip deep dives on the compression subnetwork; check the MCNet paper for that. For the frequency domain, we applied a 4×4 2D block DCT (using SciPy) to capture local frequency components. The red, green, and blue channels were processed separately with stride-2 convolutions, as experiments showed this excelled at pulling out frequency details. This yielded 48 kernels (16 DCT basis functions for each of the 3 color channels), reordered randomly and scanned in a zigzag pattern.
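To make the frequency input concrete, here is a minimal NumPy sketch of a 4×4 block DCT taken with stride 2 over each color channel. It is an illustration under assumptions, not our preprocessing script: `dct_matrix` and `block_dct_features` are hypothetical names, the DCT is built from its basis matrix instead of SciPy, and the coefficients stay in row-major order (no zigzag reordering).

```python
import numpy as np


def dct_matrix(n=4):
    """Orthonormal DCT-II basis; row k holds the k-th cosine basis vector."""
    k = np.arange(n).reshape(-1, 1)
    i = np.arange(n).reshape(1, -1)
    m = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    m[0, :] = np.sqrt(1.0 / n)
    return m


def block_dct_features(img, block=4, stride=2):
    """img: (H, W, 3) float array -> (H', W', 3 * block * block) DCT features."""
    d = dct_matrix(block)
    # All overlapping block x block patches: (H - b + 1, W - b + 1, 3, b, b)
    patches = np.lib.stride_tricks.sliding_window_view(img, (block, block), axis=(0, 1))
    patches = patches[::stride, ::stride]      # keep every `stride`-th patch
    coeffs = d @ patches @ d.T                 # 2D DCT of every patch
    # Flatten (3, b, b) into channels: 16 coefficients per color channel.
    return coeffs.reshape(coeffs.shape[0], coeffs.shape[1], -1)


rng = np.random.default_rng(0)
image = rng.random((128, 128, 3))
features = block_dct_features(image)
print(features.shape)  # (63, 63, 48)
```

A 128×128 image therefore becomes a 63×63 grid of local spectra with 48 channels, which is the shape the frequency branch expects once the array is moved to channels-first layout.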

Frequency domain analysis shines in spotting GAN traces invisible to the naked eye but crystal clear when decomposed into cosine (or sine) subparts. These breakdowns expose inconsistencies, like unnatural smoothing in fakes.

For the compression learner, we transformed images into binarized DCT form, as shown here:

This quantized DCT array works because JPEG relies on DCT for lossy compression, discarding high-frequency details that might hide manipulations.

Now, the core network architecture:

Disclaimer: The diagram shows the original MCNet, but we’ll discuss it through our modifications, focusing on VANet and its elements.

Four block types make up the network — here’s what each handles:

BT1 and BT2 extract and preserve low-level features without pooling layers.

```python
import torch.nn as nn


class BT1(nn.Module):
    """Conv -> BN -> ReLU, no pooling. `groups` enables group convolution."""

    def __init__(self, in_channels, out_channels, groups=1):
        super().__init__()
        self.conv = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, stride=1,
                      padding=1, groups=groups),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=False),
        )

    def forward(self, x):
        return self.conv(x)


class BT2(nn.Module):
    """Residual block that keeps both channel count and resolution."""

    def __init__(self, channels):
        super().__init__()
        self.block = nn.Sequential(
            nn.Conv2d(channels, channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(channels),
            nn.ReLU(inplace=False),
            nn.Conv2d(channels, channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(channels),
        )

    def forward(self, x):
        return self.block(x) + x
```

BT3 acts as a residual block that halves the feature resolution: the main path uses two 3×3 convolutions followed by stride-2 average pooling, while the skip path uses a 1×1 convolution at stride 2.

```python
class BT3(nn.Module):
    def __init__(self, in_channels, out_channels):
        super().__init__()
        # Main path: two 3x3 convs, then stride-2 average pooling.
        self.main = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=False),
            nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(out_channels),
            nn.AvgPool2d(kernel_size=3, stride=2, padding=1),
        )
        # Skip path: 1x1 conv at stride 2 so both paths match in shape.
        self.skip = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=2),
            nn.BatchNorm2d(out_channels),
        )

    def forward(self, x):
        return self.main(x) + self.skip(x)  # No ReLU after addition
```

BT4 fuses features from different domains and vectorizes them via global average pooling.

```python
class BT4(nn.Module):
    def __init__(self, in_channels):
        super().__init__()
        self.conv = nn.Sequential(
            nn.Conv2d(in_channels, in_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(in_channels),
            nn.ReLU(inplace=False),
            nn.Conv2d(in_channels, in_channels, kernel_size=1),
            nn.BatchNorm2d(in_channels),
            nn.AdaptiveAvgPool2d((1, 1)),  # Global average pooling
        )

    def forward(self, x):
        return self.conv(x)
```

VANet is essentially a two-branch network that processes images in two ways: one branch handles the spatial domain (raw pixel layout), and the other tackles the frequency domain (hidden patterns in image signals). These branches work together to spot manipulations.

The Spatial Domain Branch

This branch takes a standard RGB image (size 128×128 with 3 color channels) as input. It uses a series of building blocks:

- Two BT1 blocks and four BT2 blocks at the start: These extract subtle clues (like manipulation traces) without any pooling layers. Pooling can blur or lose delicate details, so skipping it helps the model zero in on tampering hints rather than the overall image content.

- Four BT3 blocks next: These reduce the feature map size (dimensionality reduction) and build higher-level insights from the low-level features gathered earlier.

```python
class SpatialLearner(nn.Module):
    def __init__(self):
        super().__init__()
        self.spatial = nn.Sequential(
            # Low-level feature extraction, no pooling
            BT1(3, 64),
            BT1(64, 16),
            BT2(16),
            BT2(16),
            BT2(16),
            BT2(16),
            # Each BT3 halves the resolution: 128 -> 64 -> 32 -> 16 -> 8
            BT3(16, 32),
            BT3(32, 64),
            BT3(64, 128),
            BT3(128, 256),
        )

    def forward(self, x):
        return self.spatial(x)
```

Think of it like zooming in on fine details first, then stepping back to see the bigger picture.

The Frequency Domain Branch

This branch starts with a transformed version of the image — a 4×4 block Discrete Cosine Transform (DCT) feature map sized 63x63x48.

- We split this into four subgroups (each 63x63x12) to separate high-frequency (detailed, edgy parts) from low-frequency (smoother areas) components.

- It kicks off with three BT1 blocks and uses group convolution in the first one: This processes each subgroup independently before merging, keeping high- and low-frequency features from mixing too soon. It reduces parameters (making the model lighter) and trains on related channels for better efficiency.

- Then, five BT2 blocks and three BT3 blocks follow: The rest use standard convolutions to learn from all channels combined.
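To see why the grouped first layer is lighter, here is a small worked count of its parameters. The helper `conv2d_params` is a hypothetical name; the formula is the standard one for a 2D convolution, where each output channel only sees `in_channels / groups` input channels.

```python
def conv2d_params(in_ch, out_ch, kernel, groups=1, bias=True):
    """Number of learnable parameters in a 2D convolution layer."""
    assert in_ch % groups == 0 and out_ch % groups == 0
    weights = out_ch * (in_ch // groups) * kernel * kernel
    return weights + (out_ch if bias else 0)


grouped = conv2d_params(48, 48, kernel=3, groups=4)  # first BT1 in the frequency branch
dense = conv2d_params(48, 48, kernel=3, groups=1)    # same layer without grouping

print(grouped)  # 5232
print(dense)    # 20784
```

With four groups, the weight count drops to a quarter of the ungrouped layer (the 48 bias terms stay the same), and each 12-channel subgroup is filtered without seeing the others.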

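```python
class FrequencyLearner(nn.Module):
    def __init__(self):
        super().__init__()
        self.freq = nn.Sequential(
            # Group convolution: the four 12-channel subgroups are processed separately
            BT1(48, 48, groups=4),
            BT1(48, 96),
            BT1(96, 32),
            BT2(32),
            BT2(32),
            BT2(32),
            BT2(32),
            BT2(32),
            # Each BT3 halves the resolution: 63 -> 32 -> 16 -> 8
            BT3(32, 32),
            BT3(32, 64),
            BT3(64, 128),
        )

    def forward(self, x):
        return self.freq(x)
```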

This setup leverages how frequencies reveal artifacts — like unnatural textures in deepfakes — that pixels alone might miss.

The Fusion

The number of BT3 blocks in each branch is balanced so their outputs match in resolution: the spatial branch ends with an 8x8x256 feature map, and the frequency one with 8x8x128.

- These two outputs are combined (concatenated) into one unified feature set.

- L2-normalization evens out any differences in their scales.

- A BT4 block mixes everything and flattens it using global average pooling (averaging across the map to create a compact vector).

- Finally, a single fully-connected layer predicts probabilities for each class (e.g., real or fake), and the whole model trains by minimizing a cross-entropy loss.
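The fusion steps above can be sketched with random tensors standing in for the two branch outputs. This is a simplified illustration under assumptions: `F.normalize` is used here for a literal per-location L2 normalization across channels (the VANet class in this post uses `nn.LayerNorm` in that position), and the BT4 convolutions are replaced by a plain global average pool to keep the sketch short.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
x_spatial = torch.randn(2, 256, 8, 8) * 5.0   # stand-in for the spatial branch output
x_freq = torch.randn(2, 128, 8, 8) * 0.1      # stand-in for the frequency branch output

fused = torch.cat([x_spatial, x_freq], dim=1)   # (2, 384, 8, 8)
fused = F.normalize(fused, p=2, dim=1)          # unit L2 norm at every spatial location
vector = fused.mean(dim=(2, 3))                 # global average pooling -> (2, 384)

classifier = nn.Linear(384, 2)                  # real vs. fake
logits = classifier(vector)
probs = logits.softmax(dim=1)

# Training would minimize cross-entropy on the logits (dummy labels here).
loss = F.cross_entropy(logits, torch.tensor([0, 1]))
print(fused.shape, vector.shape, probs.shape)
```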

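Code snippet for the final VANet structure:

```python
import torch
import torch.nn as nn


class VANet(nn.Module):
    def __init__(self, num_classes):
        super().__init__()
        self.spatial = SpatialLearner()
        self.freq = FrequencyLearner()
        # NOTE: despite the attribute name, this is LayerNorm over (C, H, W),
        # not a strict L2 normalization.
        self.l2norm = nn.LayerNorm([384, 8, 8])
        self.bt4 = BT4(in_channels=384)
        self.classifier = nn.Linear(384, num_classes)

    def forward(self, rgb, dct):
        # rgb: (N, 3, 128, 128), dct: (N, 48, 63, 63)
        x_spatial = self.spatial(rgb)               # (N, 256, 8, 8)
        x_freq = self.freq(dct)                     # (N, 128, 8, 8)
        x = torch.cat([x_spatial, x_freq], dim=1)   # (N, 384, 8, 8)
        x = self.l2norm(x)
        x = self.bt4(x)                             # (N, 384, 1, 1)
        x = x.view(x.size(0), -1)
        return self.classifier(x)
```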

Attention Please!

Here’s an overview of the attention mechanism we used: the Convolutional Block Attention Module (CBAM), a lightweight attention module. Unlike encoder-decoder transformer-style blocks, CBAM is simple and easy to integrate into feed-forward CNNs. It refines intermediate feature maps by sequentially applying channel and spatial attention.

For those new to attention, it’s a deep learning technique that guides the model on where to focus or which parts matter most for the task. In transformers, it relies on Query, Key, and Value vectors: query-key similarity scores become weights that decide how much each value contributes to the output. For a solid grasp, check out this video, {Attention in Transformers}, or read the foundational transformer paper, “Attention is All You Need.”

A quick peek at channel and spatial attention: 👀

Channel Attention: Features from the original image span multiple channels, much like splitting a multicolor image into Red, Green, and Blue, but later feature channels are learned by the network. To capture inter-channel relationships, we use channel attention. We squeeze the spatial dimensions of the input feature map (C×H×W) via pooling. Experiments showed using both max-pool and avg-pool in parallel works best. Both pooled vectors pass through a shared MLP, the two outputs are summed element-wise, and a sigmoid turns the result into channel weights of shape C×1×1.

Spatial Attention: Just as channel attention uncovers inter-channel links, this finds inter-spatial relationships, the “where” part. Avg- and max-pooling are applied along the channel axis, giving two maps (F_avg^s and F_max^s ∈ R^{1×H×W}) that are concatenated and then processed with a 7×7 convolution and a sigmoid to yield a 1×H×W attention map.

CBAM shines when channel and spatial attention are applied sequentially.
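Here is a minimal PyTorch sketch of CBAM following the description in the paper by Woo et al. (2018): channel attention first (shared MLP over average- and max-pooled descriptors, merged by element-wise sum), then spatial attention (7×7 convolution over channel-wise average and max maps). The class names and the `reduction=16` default are choices made for this sketch, not necessarily what our project code uses.

```python
import torch
import torch.nn as nn


class ChannelAttention(nn.Module):
    def __init__(self, channels, reduction=16):
        super().__init__()
        hidden = max(channels // reduction, 1)
        # Shared MLP, written as 1x1 convolutions so it works on (N, C, 1, 1).
        self.mlp = nn.Sequential(
            nn.Conv2d(channels, hidden, kernel_size=1, bias=False),
            nn.ReLU(inplace=False),
            nn.Conv2d(hidden, channels, kernel_size=1, bias=False),
        )

    def forward(self, x):
        avg = self.mlp(x.mean(dim=(2, 3), keepdim=True))
        mx = self.mlp(x.amax(dim=(2, 3), keepdim=True))
        return torch.sigmoid(avg + mx)            # (N, C, 1, 1)


class SpatialAttention(nn.Module):
    def __init__(self, kernel_size=7):
        super().__init__()
        self.conv = nn.Conv2d(2, 1, kernel_size, padding=kernel_size // 2, bias=False)

    def forward(self, x):
        avg = x.mean(dim=1, keepdim=True)
        mx = x.amax(dim=1, keepdim=True)
        return torch.sigmoid(self.conv(torch.cat([avg, mx], dim=1)))  # (N, 1, H, W)


class CBAM(nn.Module):
    def __init__(self, channels, reduction=16, kernel_size=7):
        super().__init__()
        self.channel = ChannelAttention(channels, reduction)
        self.spatial = SpatialAttention(kernel_size)

    def forward(self, x):
        x = x * self.channel(x)      # reweight channels ("what")
        return x * self.spatial(x)   # reweight locations ("where")


torch.manual_seed(0)
cbam = CBAM(channels=64)
x = torch.randn(4, 64, 8, 8)
out = cbam(x)
print(out.shape)
```

Because the output has the same shape as the input, the module can be dropped after any convolutional stage without touching the layers around it.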

In our work, we used this plug-and-play tool like a magnifying glass to zoom in on modification traces, boosting model performance.

Putting It All Together: Our Proposed Model

We’ve covered the techniques inspiring our model in detail — now it’s time to tweak and tune for the optimal setup.

We built on VANet as our base, with its spatial and frequency learners. Then, we integrated the AMTEN module upfront in the spatial learner, so it processes traces rather than raw images.

The frequency learner was solid on its own, so we left it unchanged.

The game-changer was adding CBAM: we attached modules to the ends of both the spatial network and the frequency network. This sharpened focus on key manipulation artifacts, like subtle pixel inconsistencies in fakes.

At the fusion stage — merging spatial and frequency outputs — we added another CBAM module plus convolutional layers. This refined the combined features further, enhancing detection of tricky traces such as localized morphing (e.g., unnatural blending in facial areas).

The result? Our hybrid model hit ~98.87% test accuracy on the Hybrid Fake Face and Kaggle 140k datasets. It adaptively highlighted relevant patterns while cutting noise, all without much extra compute overhead. This setup not only improved robustness but also showed how blending traces, frequency analysis, and attention creates a powerful deepfake detector.

Experimentation and Results

Before diving into our core hybrid model, we explored several established techniques for deepfake detection to benchmark and inspire our approach. These included traditional methods like Error Level Analysis (ELA) and Shallow-FakeFaceNet (SFFN).

ELA is a classic forensic tool for spotting image tampering, especially in lossy formats like JPEG. It works by resaving the image at a fixed quality level and calculating pixel-wise differences to highlight compression artifacts. Original regions show low error levels (local minima), while tampered areas spike (local maxima). Think of it as revealing “scars” from edits. While effective for handcrafted manipulations, it struggled with GAN-generated deepfakes due to their seamless blending.

We also looked at SFFN, a lightweight neural network designed for detecting both handcrafted facial manipulations (e.g., Photoshop edits) and GAN fakes. It uses a shallow architecture to focus on facial landmarks and RGB data alone, avoiding reliance on easily spoofed metadata. Working with it highlighted the need for deeper frequency analysis in our work.
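The resave-and-diff idea behind ELA fits in a few lines. The sketch below assumes Pillow and NumPy are installed; the function name `error_level_analysis` and the quality value of 90 are illustrative choices, not settings taken from our experiments.

```python
import io

import numpy as np
from PIL import Image, ImageChops


def error_level_analysis(image, quality=90):
    """Absolute per-pixel difference between an image and its JPEG re-save."""
    rgb = image.convert("RGB")
    buffer = io.BytesIO()
    rgb.save(buffer, format="JPEG", quality=quality)   # recompress in memory
    buffer.seek(0)
    resaved = Image.open(buffer).convert("RGB")
    diff = ImageChops.difference(rgb, resaved)
    return np.asarray(diff, dtype=np.uint8)            # (H, W, 3) error map


# Synthetic inputs, only to show the call: noise compresses badly, flat gray compresses well.
rng = np.random.default_rng(0)
noise = Image.fromarray(rng.integers(0, 256, (64, 64, 3), dtype=np.uint8))
flat = Image.new("RGB", (64, 64), (128, 128, 128))

ela_noise = error_level_analysis(noise)
ela_flat = error_level_analysis(flat)
print(ela_noise.shape, ela_noise.mean(), ela_flat.mean())
```

In practice the error map is usually brightened before viewing, since raw differences are small, and regions whose error level stands out from their surroundings are the ones worth inspecting.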

To evaluate these and other models, we ran extensive experiments on the Hybrid Fake Face Dataset and Kaggle’s 140k Real and Fake Faces. Here’s a summary of key accuracies:

A notable flop was the autoencoder approach: we trained it solely on real images as a one-class classifier, expecting high reconstruction errors to be flagged fake. However, it hit rock-bottom at 50% accuracy — basically random guessing. The t-SNE plots showed poor separation between real and fake embeddings, likely because the model couldn’t grasp nuanced differences like GAN-induced artifacts, treating everything as “close enough.”

On the success side, AMTENnet excelled at trace extraction. Our hybrid AMTEN-Freq-CBAM model built on top of the previously discussed models and yielded the top accuracy. These explorations solidified that blending traces, frequency, and attention outperforms standalone methods, especially under time constraints.

Author’s Experience

My research internship at the Bhabha Atomic Research Centre (BARC) in the Deep Learning Lab at the Security, Electronics, and Cyber Technology Department was truly awe-inspiring. I gained so much knowledge and perspective along the way. Being inside BARC, with its top-secret-lab feel, was nothing short of mesmerizing: powerful supercomputers, gigantic nuclear reactors, halls that echoed with innovation, and even the unexpectedly delicious canteen meals. I was also fortunate to attend a research seminar on breast cancer organized by our department!

I’m especially grateful to my guide, Mr. P. Rajsekhar, whose work ethic was unbelievable. He’d often stay in the lab until 10 PM, not out of necessity but out of sheer dedication, and it motivated me to push harder. Thanks to his guidance, I now know techniques like Grad-CAM and others that became crucial to our project.

This was my first real encounter with Linux, and working on a multi-user system felt like entering uncharted territory: challenging at first, but ultimately rewarding as I adapted. Reading research papers for hours was tough for someone like me, who’s more comfortable with videos than dense texts, but I’m making the shift toward embracing books and academic depth, one page at a time! Replicating those papers, brainstorming creative tweaks, dealing with issues like overfitting, and figuring out how to pinpoint localized morphing were the hurdles that tested me, but overcoming them built my confidence. All in all, it was an unforgettable chapter that blended technical growth with moments of wonder.

Why This Isn’t a Formal Research Paper

There are a couple of solid reasons this work doesn’t qualify as a full-fledged research paper, tied to both practical limitations and the scope of our exploration. For starters, deepfake generation has evolved beyond GANs, with diffusion models now leading the charge in creating hyper-realistic fakes. Unfortunately, BARC’s secure network restrictions meant we couldn’t access the latest SOTA datasets. This limited our testing to earlier GAN-focused scenarios and prevented a comprehensive evaluation against the latest threats.

The other key factor is the absence of thorough ablation studies, which are crucial before declaring something groundbreaking. With time constraints and dataset access issues, we simply couldn’t conduct that level of analysis to robustly validate our findings. Our hybrid model delivered strong results, like 98.97% accuracy on the data we had, but that alone doesn’t make a research paper. Perhaps in the future, with fewer barriers, this work could evolve into one.

Conclusion

As we wrap up this journey through deepfake detection, we have shown that accuracy can reach approximately 99% when we combine the traces from AMTENnet, the frequency information from VANet, and the focused attention of CBAM, indicating that a hybrid approach is a strong weapon for combating fakes. Challenges such as restricted access and limited ablations kept this from being a complete research paper, yet we illustrated the power of creativity in a constrained environment.

If anything, this reinforces how vital ongoing work in AI ethics and detection is, especially as tech evolves. I hope this sparks your interest: grab the code from my GitHub, experiment with new datasets, or drop a comment with your thoughts. You can find the source code for the architectures of these models here: https://github.com/shreyash1706/Solving-Deepfakes-with-Traces-Frequency-and-Attention

Got any doubts? Want to talk more about deepfakes or the latest advancements in AI/ML? Feel free to drop me a mail at shreyashpawar1706@gmail.com or connect with me on LinkedIn. Let’s keep the conversation going and build a more trustworthy digital world together!

Thanks for reading!

References

- Guo, Z., Yang, G., Chen, J., & Sun, X. (2021). Fake face detection via adaptive manipulation traces extraction network. Computer Vision and Image Understanding, 204, 103170.

- Yu, I.-J., Nam, S.-H., Ahn, W., Kwon, M.-J., & Lee, H.-K. (2020). Manipulation classification for JPEG images using multi-domain features. IEEE Access, 8, 210837–210854.

- Woo, S., Park, J., Lee, J. Y., & Kweon, I. S. (2018). CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV) (pp. 3–19).

- Zhang, W., Zhao, C., & Li, Y. (2020). A novel counterfeit feature extraction technique for exposing face-swap images based on deep learning and error level analysis. Entropy, 22(2), 249.

- Lee, S., Tariq, S., Shin, Y., & Woo, S. S. (2021). Detecting handcrafted facial image manipulations and GAN-generated facial images using Shallow-FakeFaceNet. Applied Soft Computing, 105, 107256.

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., … & Polosukhin, I. (2017). Attention is all you need. Advances in neural information processing systems, 30.
