Running Unsloth On a Jetson AGX Orin Device With Jetson-Containers

Last Updated on December 2, 2025 by Editorial Team

Author(s): Stephen Cow Chau

Originally published on Towards AI.

Background

I have been container-first for a long time, mainly because containers make environment isolation easy (system packages, programming library packages, and so on). On a Jetson this is even handier, because reflashing the device to test a new setup or to revert to a clean image is pretty tedious (just my opinion).

Running containers on a Jetson device comes with several constraints, including at least:

- Most packages are included in JetPack, which is carefully built for the specially built Tegra Linux (a variant of Ubuntu) and the Jetson hardware. Things are most stable when the container OS matches the host OS, because the container sometimes needs support from the host for lower-level operations.

- For GPU workloads we need CUDA support and the NVIDIA Container Toolkit, and the container should preferably use the same CUDA version as the one installed on the Jetson host (it usually comes with JetPack but can be upgraded; check the supported versions first, see reference).

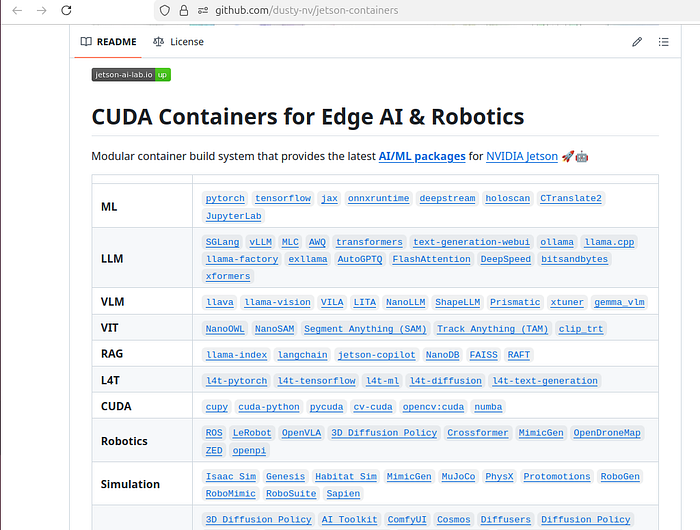

This is where dusty-nv’s great jetson-containers project comes in (see GitHub). It can be used to build, or to run prebuilt, containers that serve different machine learning and LLM purposes.

My environment and objective

I happen to have a Jetson AGX Orin 64GB. The great thing about it is the 64GB of unified memory, which can hold a larger model, so I am using it to try fine-tuning an LLM (one that might not fit in the free T4 GPU with 16GB VRAM that Unsloth’s Google Colab notebooks run on).

The details of the Jetson:

cat /etc/nv_tegra_release

# R36 (release), REVISION: 4.3, GCID: 38968081, BOARD: generic, EABI: aarch64, DATE: Wed Jan 8 01:49:37 UTC 2025

# KERNEL_VARIANT: oot

# ...

uname -a

# Linux ubuntu 5.15.148-tegra #1 SMP PREEMPT Tue Jan 7 17:14:38 PST 2025 aarch64 aarch64 aarch64 GNU/Linux

nvcc --version

# nvcc: NVIDIA (R) Cuda compiler driver

# Copyright (c) 2005-2024 NVIDIA Corporation

# Built on Wed_Aug_14_10:14:07_PDT_2024

# Cuda compilation tools, release 12.6, V12.6.68

# Build cuda_12.6.r12.6/compiler.34714021_0

So we have Jetson Linux R36.4.3, which corresponds to JetPack 6.2, with CUDA 12.6 and based on Ubuntu 22.04 (jammy) (ref: https://developer.technexion.com/docs/embedded-software/linux/nvidia-jetpack/usage-guides/jetpack620/)
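Since the same check comes up every time a new image is considered, the two version strings can be pulled out with a couple of small helpers. This is only a sketch: the function names `l4t_version` and `cuda_release` are made up for illustration, and the parsing assumes the output format shown above.

```shell
# Print the L4T version (e.g. 36.4.3) from an nv_tegra_release file.
# Assumes the first line looks like: "# R36 (release), REVISION: 4.3, GCID: ..."
l4t_version() {
    release_file="${1:-/etc/nv_tegra_release}"
    sed -n 's/^# R\([0-9][0-9]*\) (release), REVISION: \([0-9.][0-9.]*\),.*/\1.\2/p' "$release_file" | head -n 1
}

# Print the CUDA release (e.g. 12.6) from `nvcc --version` output read on stdin.
cuda_release() {
    sed -n 's/.*release \([0-9][0-9]*\.[0-9][0-9]*\),.*/\1/p' | head -n 1
}

# Usage on the Jetson host:
#   l4t_version
#   nvcc --version | cuda_release
```

Both values are what we later match against container image tags and the wheel index path.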

What failed

1. Using the official Docker container from Unsloth’s Docker Hub

This fails fast: the Docker images are all built for linux/amd64, while the Jetson is an ARM (aarch64) device.

2. Build and run a jetson-containers image with the unsloth package
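The architecture mismatch can be spotted before pulling a multi-gigabyte image. The sketch below uses hypothetical helper names (`docker_platform`, `image_supports_host`); the platform list passed to the second helper is something you would collect yourself, for example from the image's Docker Hub page or from `docker manifest inspect`.

```shell
# Map `uname -m` output to the platform string Docker uses for images.
docker_platform() {
    case "$1" in
        aarch64|arm64) echo "linux/arm64" ;;
        x86_64|amd64)  echo "linux/amd64" ;;
        *)             echo "unknown" ;;
    esac
}

# Return success if the host platform ($1) appears in the list of
# image platforms given as the remaining arguments.
image_supports_host() {
    host_platform="$1"
    shift
    for p in "$@"; do
        [ "$p" = "$host_platform" ] && return 0
    done
    return 1
}

# Usage on the Jetson (replace <image> with the image you plan to pull):
#   host="$(docker_platform "$(uname -m)")"
#   docker manifest inspect <image>      # lists the platforms an image offers
#   image_supports_host "$host" linux/amd64 || echo "no $host build available"
```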

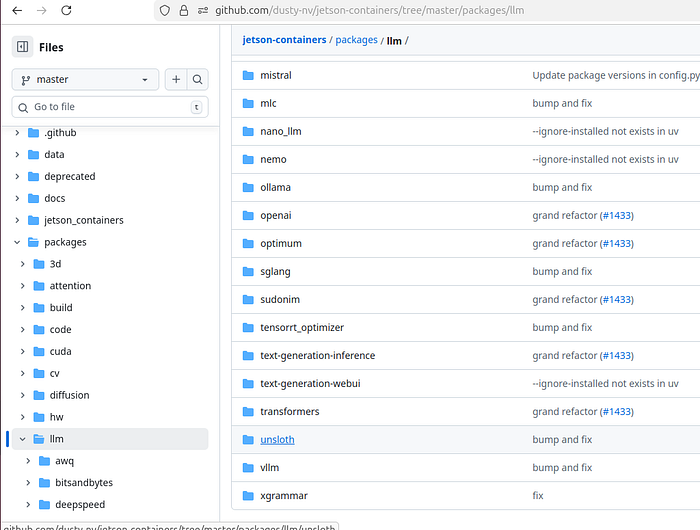

Even though it is not listed on the jetson-containers home page, the package has actually been added under the “llm” package group.

Note: Please read the documentation before building.

I tried building with:

jetson-containers build unsloth

I made multiple attempts, either with autotag (which auto-discovers settings from the host Jetson’s hardware and software) or by specifying different Python, PyTorch, and CUDA versions.

All attempts failed at some step. What they had in common is that the build takes a long time, because it starts from the base Ubuntu image and compiles and builds every necessary package, including CUDA, cuDNN, PyTorch…

What worked

3. Locate a prebuilt container image to install unsloth

This is the path that eventually worked, though I ran into quite a few failures along the way.

3.1 Locating the image

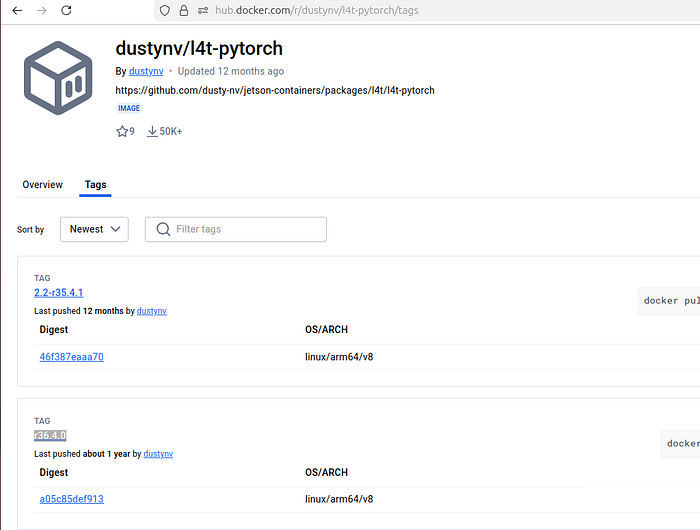

dustynv’s Docker Hub repo has prebuilt containers, but not every container is a good image to start from.

The idea is that the image closest to unsloth’s requirements is the best starting point. Starting from unsloth’s pyproject.toml, we can see it requires torch, bitsandbytes, transformers, xformers, possibly flash attention…

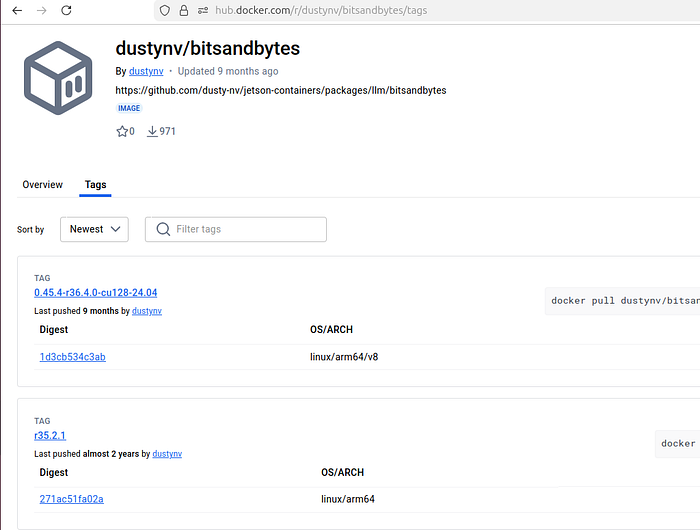

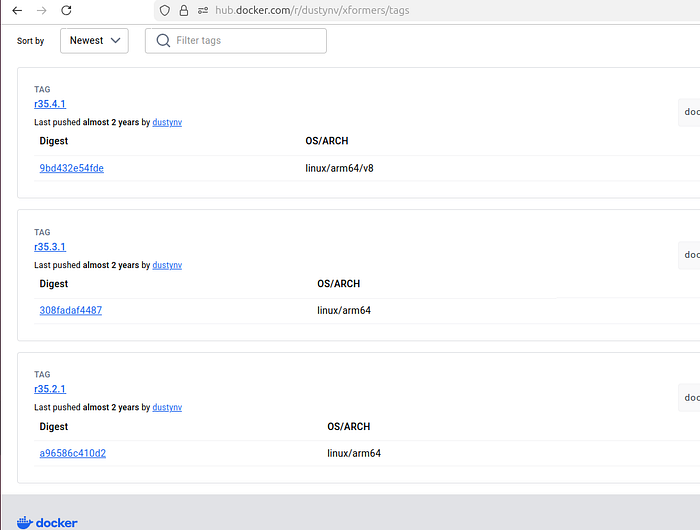

So we can go and check the container tags:

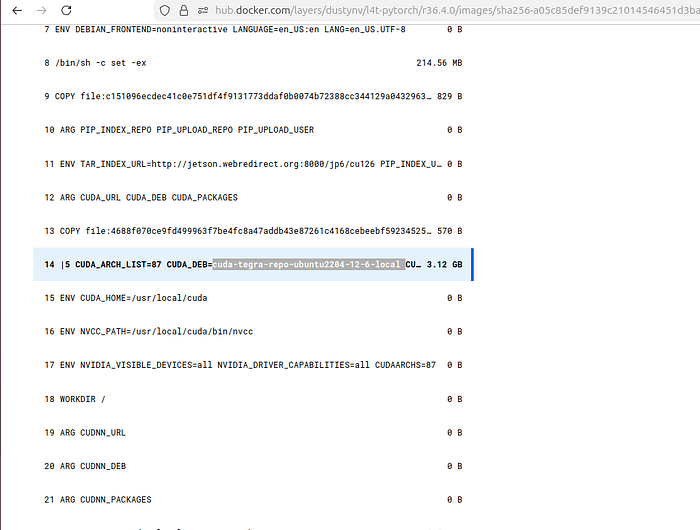

We can see that only the more foundational l4t-pytorch container image matches our r36.4. Checking the container build further, its CUDA is also the expected CUDA 12.6.
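Scanning a long tag list by eye is error-prone, so the matching rule (same L4T major.minor as the host) can be sketched as a filter. The helper name `matching_tags` is hypothetical, and the tag list in the usage comment is only an illustrative sample, not a dump of the real repository.

```shell
# Read image tags on stdin (one per line, e.g. "r36.4.0") and print those
# whose L4T major.minor matches the host version given as $1 (e.g. "36.4.3").
matching_tags() {
    # Keep only "major.minor" and escape the dot for use in a regex.
    prefix="$(printf '%s' "$1" | cut -d. -f1,2 | sed 's/\./\\./g')"
    # "|| true" keeps the exit status clean when nothing matches.
    grep -E "(^|-)r${prefix}(\\.|-|\$)" || true
}

# Usage with a sample tag list:
#   printf '%s\n' r35.4.1 r36.2.0 r36.4.0 | matching_tags 36.4.3
```

A matching tag only narrows the search; the CUDA version inside the image still has to be checked separately, as described above.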

3.2 Run the container and install unsloth

Following the documentation on running jetson-containers images by tag, we can do:

docker run --runtime nvidia -it --rm --network=host dustynv/l4t-pytorch:r36.4.0

This lands us in a bash shell. From there we try running pip install unsloth, but we immediately hit a timeout.

The reason is that the Python packages are also specially built and come from a dedicated PyPI index instead of the usual one. The index is defined in the environment variable PIP_INDEX_URL="http://jetson.webredirect.org/jp6/cu126". The redirect points to pypi.jetson-ai-lab.dev, but the working index is at pypi.jetson-ai-lab.io.

So we can override the environment variable as follows:

export PIP_INDEX_URL=https://pypi.jetson-ai-lab.io/jp6/cu126
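To avoid waiting for pip to time out again, the index can be probed before exporting it. This is a rough sketch: `jetson_index_url` and `index_reachable` are hypothetical helpers, and the assumption that other JetPack and CUDA combinations follow the same `jp<major>/cu<digits>` path layout as jp6/cu126 is a guess, not something confirmed here.

```shell
# Build the index URL from the JetPack major version and the CUDA release.
# Assumption: the "jp<major>/cu<digits>" layout seen for jp6/cu126 also
# holds for other combinations.
jetson_index_url() {
    cuda_digits="$(printf '%s' "$2" | tr -d '.')"
    printf 'https://pypi.jetson-ai-lab.io/jp%s/cu%s\n' "$1" "$cuda_digits"
}

# Return success if the URL answers without an HTTP error within 10 seconds.
index_reachable() {
    curl --silent --fail --max-time 10 --output /dev/null "$1"
}

# Usage inside the container:
#   url="$(jetson_index_url 6 12.6)"
#   index_reachable "$url" && export PIP_INDEX_URL="$url"
#   echo "$PIP_INDEX_URL"
```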

3.3 Tackling Python package mismatches

What follows is a struggle with the Python package dependency system: breaking changes between versions prevent the code from running, and some dependencies are missing when installing unsloth (from the wheel).

There are a few pieces of logic and hints to check.

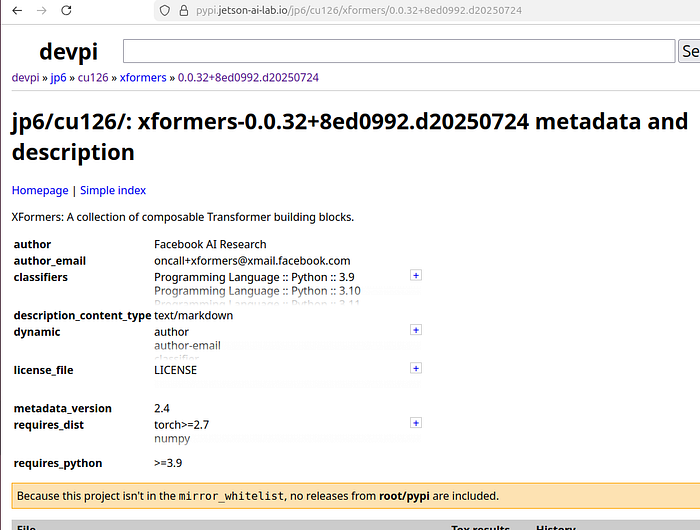

First, check the custom prebuilt Python wheels at https://pypi.jetson-ai-lab.io/jp6/cu126

We can see some key packages: unsloth (2025.7.9), torch (2.8.0), bitsandbytes (0.47.0.dev0), triton (3.4.0), torchvision (0.23.0), xformers (0.0.32+8ed0992.d20250724), flash-attn (2.8.2)

Then check the dependency requirements in unsloth’s pyproject.toml, which state which PyTorch versions are paired with which CUDA versions.

3.3.1 Different failures

The first failed attempt was to install unsloth 2025.7.9 from the prebuilt wheel, plus unsloth_zoo. The problem was a mismatch between the PyTorch 2.4.0 that comes with the container image and the transformers library requirement (my aim was fine-tuning Gemma 3N, which requires transformers 4.53.0, and that is the version that introduced a breaking change that unsloth 2025.7.9 does not expect).

The next meaningful attempt was to install unsloth from git. It failed multiple times, again due to mismatches in the PyTorch version (2.4.0 from the container image), the transformers version…

The working setup is:
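Because most of these failures trace back to an older package sitting in the image, it helps to compare the installed version against the one you intend to use before installing anything else. A minimal sketch, assuming GNU `sort -V` is available in the container; `version_ge` is a hypothetical helper, and 2.8.0 is simply the torch version listed on the wheel index above, not a documented minimum.

```shell
# Return success if version $1 is greater than or equal to version $2.
# Relies on `sort -V` (version sort) from GNU coreutils.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Usage inside the container:
#   installed="$(python3 -c 'import torch; print(torch.__version__)')"
#   installed="${installed%%+*}"     # drop a local suffix such as "+cu126"
#   version_ge "$installed" 2.8.0 || echo "torch $installed is older than 2.8.0"
```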

export PIP_INDEX_URL=https://pypi.jetson-ai-lab.io/jp6/cu126

pip install "torch==2.8.0"

pip install "torchvision==0.23.0"

pip install bitsandbytes

pip install xformers

pip install "flash-attn==2.8.2"

pip install "unsloth[huggingface] @ git+https://github.com/unslothai/unsloth.git"

pip install unsloth-zoo

pip install timm

pip install jupyterlab

After testing the installation sequence, I turned it into a Dockerfile:

FROM dustynv/l4t-pytorch:r36.4.0

ENV PIP_INDEX_URL=https://pypi.jetson-ai-lab.io/jp6/cu126

RUN pip install "torch==2.8.0" && \
    pip install "torchvision==0.23.0" && \
    pip install bitsandbytes && \
    pip install xformers && \
    pip install "flash-attn==2.8.2" && \
    pip install "unsloth[huggingface] @ git+https://github.com/unslothai/unsloth.git" && \
    pip install unsloth-zoo && \
    pip install timm && \
    pip install --index-url https://pypi.jetson-ai-lab.io/root/pypi jupyterlab ipywidgets

CMD ["sleep", "infinity"]

Note that at initialization, it complains that xformers is not set up properly: it says our Python version is 3.10 while the wheel was built for 3.12. However, checking the wheel, it should support 3.9–3.12.

Anyway, I added the flash attention library, and Gemma 3N fine-tuning and inference seem to run at about the same speed as the Colab notebook Unsloth provides when run on a T4 (which is still slow on inference).

Side note: a comparison of the T4 and the Jetson AGX Orin (reference)
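One way to check a wheel's claimed Python support is to read the tags in its filename, which follows the pattern name-version-pythontag-abitag-platformtag.whl. The sketch below is illustrative only: `wheel_python_tag` is a hypothetical helper, and the filename in the usage comment is a made-up example built from the version string listed earlier, not copied from the index.

```shell
# Print the Python tag (e.g. cp39) from a wheel filename.
# Counting from the right, the last three dash-separated fields are
# python tag, ABI tag, and platform tag.
wheel_python_tag() {
    name="${1%.whl}"
    printf '%s\n' "$name" | awk -F- '{ print $(NF-2) }'
}

# Usage (hypothetical filename):
#   wheel_python_tag "xformers-0.0.32+8ed0992.d20250724-cp39-abi3-linux_aarch64.whl"
# A "cp39-abi3" wheel targets the stable ABI, so it is meant to load on
# CPython 3.9 and newer. Compare with the interpreter in the container:
#   python3 -c 'import sys; print("cp%d%d" % sys.version_info[:2])'
# xformers can also report what it was built against:
#   python3 -m xformers.info
```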

Conclusion

Hope this helps someone (including future me).

The key takeaway is not which exact versions made it work, but the logic of what to check and what to try to potentially make things work.

I have also been posting on the GitHub issue; some details not covered in this article might be found there.

Note: Article content contains the views of the contributing authors and not Towards AI.