Retrieval Augmented Generation (RAG) Explained: Why AI Needs It

Last Updated on December 2, 2025 by Editorial Team

Author(s): Abinaya Subramaniam

Originally published on Towards AI.

Large Language Models (LLMs) have rapidly become the engine behind intelligent applications, from chatbots to document assistants to sophisticated automation tools. Their ability to understand context, reason through text, and generate human-like responses often feels magical.

Yet beneath this sophistication, LLMs suffer from a fundamental constraint. Their knowledge is frozen in time and limited to the data they were trained on.

This is where Retrieval Augmented Generation, better known as RAG, enters the picture. RAG is not just an add-on. It is a turning point in how we use AI, enabling systems that are more factual, context-aware, and grounded in real, verifiable information. This blog lays the foundation for a full understanding of RAG by exploring why RAG exists, why standalone LLMs fall short, how the RAG pipeline works, where it is being used, and how it compares with fine-tuning.

Why RAG Exists

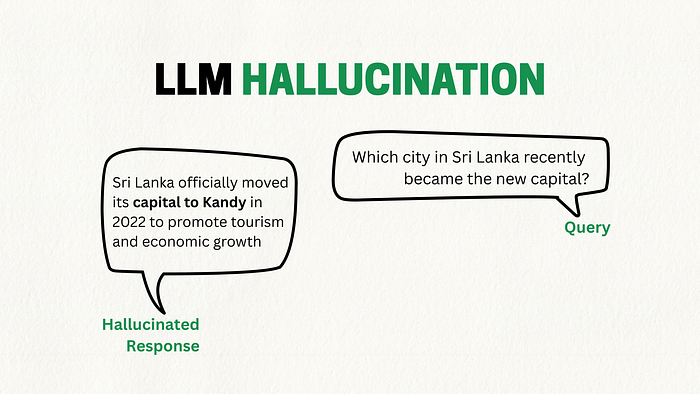

The main idea behind RAG is simple. LLMs are excellent at generating text, but they are not reliable sources of factual knowledge. Even the most advanced models operate on statistical patterns learned from massive datasets, not on live or authoritative sources. As a result, they can produce plausible-sounding but incorrect answers, a phenomenon widely known as hallucination: the model answers confidently and wrongly.

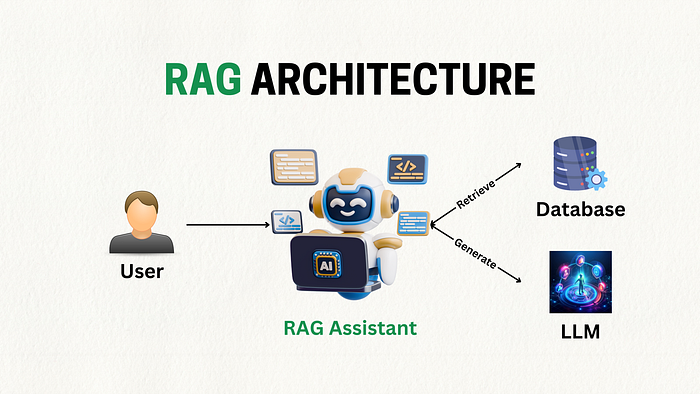

Companies today cannot afford such hallucinations, especially when AI is used to explain medical procedures, draft legal documents, answer financial queries, or guide enterprise decisions. To overcome this, RAG connects an LLM to an external knowledge source such as a vector database, an internal document repository, a set of policy manuals, or even a frequently updated website. Instead of relying solely on memory, the model retrieves relevant information and uses it to generate more accurate, better-grounded responses.

This simple shift from relying on what the model remembers to using what it can look up makes a significant difference. RAG keeps the model’s responses grounded, traceable, and up to date, which is crucial for real-world AI systems.

Limitations of Standalone LLMs

Despite their power, LLMs share a set of predictable limitations that prevent them from operating as fully reliable knowledge assistants.

The most concerning is hallucination. A standalone model may supply answers with impressive confidence even when it lacks the correct information. Because the model is designed to predict text rather than verify facts, it can unintentionally invent data, fabricate citations, or misinterpret domain-specific terminology. This is critical in fields like healthcare, law, aviation, and finance, where accuracy is non-negotiable.

Another major limitation is the static nature of LLM knowledge. A model whose training data ends in 2024 knows nothing about laws passed in 2025, new product features released last month, or a company policy updated yesterday. Entire industries evolve faster than model training cycles, making static knowledge impractical for enterprise use.

In addition, standalone LLMs typically know little about your specific environment. They do not inherently understand your organization’s standard operating procedures (SOPs), HR rules, internal documentation, engineering guidelines, or customer history unless all of this is explicitly included in their training data, which is usually impractical for privacy, cost, or logistical reasons.

Finally, even the largest context windows have limits. A context window is the maximum amount of text, measured in tokens, that an LLM can process at once; it includes both the prompt you provide and the response the model generates.

For example, if a model has a context window of 8,192 tokens, it cannot see more than that in a single interaction. You cannot feed an entire knowledge base into a prompt, nor would you want to. A smart mechanism is needed to surface only the relevant fragments of knowledge.
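As a rough illustration of that budget, the sketch below fits retrieved chunks into an 8,192-token window. It assumes a hypothetical `count_tokens` helper that treats each whitespace-separated word as one token; real tokenizers typically produce more tokens than words, so the numbers are illustrative only. The window has to hold the instructions, the question, the retrieved chunks, and the tokens reserved for the answer.

```python
CONTEXT_WINDOW = 8192  # total tokens the model can handle (prompt + generated output)


def count_tokens(text: str) -> int:
    """Hypothetical stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def fit_chunks(instructions: str, question: str, chunks: list[str],
               reserved_for_answer: int = 1024,
               window: int = CONTEXT_WINDOW) -> list[str]:
    """Keep chunks in relevance order until the next one would overflow the prompt budget."""
    budget = window - reserved_for_answer - count_tokens(instructions) - count_tokens(question)
    if budget < 0:
        raise ValueError("instructions and question alone exceed the context window")
    selected = []
    for chunk in chunks:  # chunks are assumed to be sorted from most to least relevant
        cost = count_tokens(chunk)
        if cost > budget:
            break  # stop at the first chunk that no longer fits
        selected.append(chunk)
        budget -= cost
    return selected


chunks = ["word " * 3000, "word " * 3000, "word " * 3000]
kept = fit_chunks("Answer using the context.", "What is our refund policy?", chunks)
print(len(kept))  # 2
```

With 1,024 tokens reserved for the answer, only two of the three 3,000-word chunks fit, which is why retrieval has to pick the most relevant fragments first.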

The High-Level RAG Pipeline

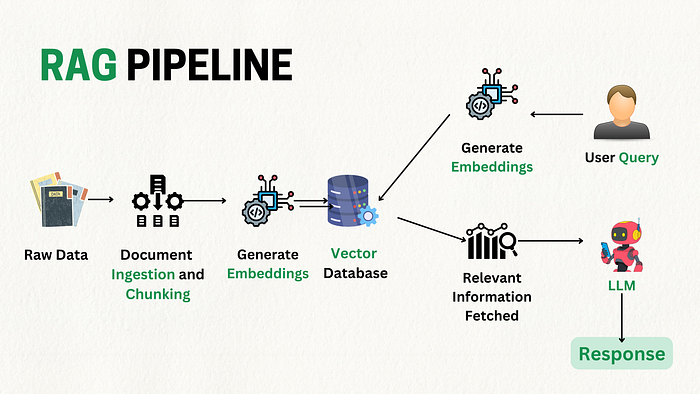

A RAG system operates through two main components, a retriever and a generator, but the full pipeline contains several interconnected steps that ensure the model receives high-quality context at the right moment.
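The split between the two components can be sketched as a pair of small interfaces. This is a hypothetical skeleton rather than any framework's API: `KeywordRetriever` ranks chunks by shared words as a placeholder for vector search, and `TemplateGenerator` only formats the grounded prompt where a real system would call an LLM.

```python
from dataclasses import dataclass
from typing import Protocol


class Retriever(Protocol):
    def retrieve(self, query: str, k: int) -> list[str]: ...


class Generator(Protocol):
    def generate(self, query: str, context: list[str]) -> str: ...


@dataclass
class KeywordRetriever:
    """Placeholder retriever: scores chunks by how many query words they share."""
    chunks: list[str]

    def retrieve(self, query: str, k: int) -> list[str]:
        query_words = set(query.lower().split())
        scored = [(len(query_words & set(c.lower().split())), c) for c in self.chunks]
        scored = [item for item in scored if item[0] > 0]  # drop chunks with no overlap
        scored.sort(key=lambda item: item[0], reverse=True)
        return [chunk for _, chunk in scored[:k]]


class TemplateGenerator:
    """Placeholder generator: builds the grounded prompt instead of calling an LLM."""

    def generate(self, query: str, context: list[str]) -> str:
        joined = "\n".join(f"- {c}" for c in context)
        return f"Context:\n{joined}\n\nQuestion: {query}"


def answer(query: str, retriever: Retriever, generator: Generator, k: int = 2) -> str:
    # Retrieval runs first; the generator only sees what the retriever returned.
    return generator.generate(query, retriever.retrieve(query, k))


retriever = KeywordRetriever([
    "refunds are issued within 14 days",
    "the office is closed on public holidays",
    "refunds require a receipt",
])
print(answer("how are refunds issued", retriever, TemplateGenerator()))
```

Keeping the components behind interfaces means the placeholder retriever can later be swapped for a vector database without changing the generation step.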

Everything begins with document ingestion. PDFs, policies, manuals, transcripts, webpages, tables, code files, and almost any other type of text data are cleaned, parsed, and broken down into meaningful chunks. Chunking is crucial because models cannot process entire documents at once; instead, the knowledge must be divided into smaller segments while preserving context.
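A common starting point is fixed-size chunking with overlap, so text cut at one boundary still appears in the neighboring chunk. The sketch below splits on words; the `chunk_words` name and the `chunk_size` and `overlap` defaults are illustrative assumptions, and production pipelines often split on sentences, headings, or tokens instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of at most `chunk_size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


doc = " ".join(f"w{i}" for i in range(10))
print(chunk_words(doc, chunk_size=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Here each chunk repeats the last word of the previous one; larger overlaps keep more context across boundaries at the cost of storing some text twice.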

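Each chunk is then converted into a dense vector using an embedding model. This vector represents the semantic meaning of the text, so passages with similar meaning land close together even when they share few words. The embeddings are stored in a vector database such as Pinecone, Chroma, Milvus, or Weaviate, or in a vector index library such as FAISS. These systems use approximate nearest-neighbor indexes to run fast similarity searches over large collections, matching on meaning rather than only on the exact keywords that classical search engines rely on.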
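To show what a similarity search does, here is a brute-force in-memory store. It is a simplified sketch: the hypothetical `embed` function builds a normalized word-count vector as a stand-in for a real embedding model, so unlike a true dense embedding it only matches shared words, not meaning. Vector databases replace the linear scan in `search` with approximate nearest-neighbor indexes.

```python
import math
from collections import Counter


def embed(text: str) -> dict[str, float]:
    """Hypothetical stand-in for an embedding model: an L2-normalized word-count vector."""
    counts = Counter(text.lower().split())
    norm = math.sqrt(sum(v * v for v in counts.values()))
    return {word: v / norm for word, v in counts.items()} if norm else {}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    # Both vectors are unit length, so their dot product equals cosine similarity.
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


class InMemoryVectorStore:
    """Brute-force store: compares the query against every stored vector."""

    def __init__(self) -> None:
        self.items: list[tuple[str, dict[str, float]]] = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str]]:
        q = embed(query)
        scored = [(cosine(q, vec), chunk) for chunk, vec in self.items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]


store = InMemoryVectorStore()
for chunk in ["reset your password from the login page",
              "expense reports are due monthly",
              "password rules require twelve characters"]:
    store.add(chunk)
print(store.search("how do I reset my password", k=2))
```

The password-reset chunk ranks first because it shares two words with the question; with a real embedding model, a paraphrase that shares few words could also score highly.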

When a user asks a question, the system converts the question into its own embedding and retrieves the top matching chunks from the vector database. Additional steps such as re-ranking or metadata filtering may refine the results. The retrieved passages are then inserted into the model’s prompt, shaping the context within which the LLM generates its answer. This grounds the output in the most relevant source material available at that moment rather than in guesswork.
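The post-retrieval steps can be sketched as follows, assuming the vector search has already returned candidate chunks with similarity scores. `Candidate`, the `department` metadata field, and the prompt wording are all hypothetical. Re-ranking with a dedicated model (such as a cross-encoder) is left out; the sketch simply filters by metadata and sorts by the existing score before numbering the passages so the answer can cite them.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    source: str       # e.g. a file name, kept so the answer can cite it
    department: str   # example metadata field used for filtering
    score: float      # similarity score assumed to come from the vector search step


def select_context(candidates: list[Candidate], department: str, k: int = 3) -> list[Candidate]:
    """Apply the metadata filter first, then keep the k highest-scoring chunks."""
    allowed = [c for c in candidates if c.department == department]
    return sorted(allowed, key=lambda c: c.score, reverse=True)[:k]


def build_prompt(question: str, context: list[Candidate]) -> str:
    """Insert retrieved passages into the prompt, numbered so the model can cite them."""
    passages = "\n".join(f"[{i}] ({c.source}) {c.text}" for i, c in enumerate(context, start=1))
    return (
        "Answer the question using only the passages below. "
        "Cite passage numbers, and say so if the passages do not contain the answer.\n\n"
        f"{passages}\n\nQuestion: {question}"
    )


candidates = [
    Candidate("Remote work requires manager approval.", "hr_policy.pdf", "HR", 0.82),
    Candidate("VPN access is mandatory off-site.", "it_guide.md", "IT", 0.91),
    Candidate("Employees may work remotely two days a week.", "hr_policy.pdf", "HR", 0.88),
]
print(build_prompt("How many remote days are allowed?", select_context(candidates, "HR", k=2)))
```

The resulting string is what would be sent to the LLM; the IT chunk is excluded by the metadata filter even though it has the highest similarity score.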

In essence, the RAG pipeline gives the model something it never had before: a real-time ability to consult the source material.

Use Cases and Industry Adoption

RAG has become the backbone of enterprise AI precisely because it turns LLMs into more reliable assistants rather than unpredictable generators. Its applications now span nearly every domain.

Customer support teams use RAG-powered assistants to answer queries based on past tickets, product documentation, internal SOPs, and troubleshooting guides. Legal teams employ RAG for quick retrieval of case law, compliance requirements, and contract analysis. Healthcare organizations rely on RAG to surface clinical research, patient documentation, and medical guidelines, all while maintaining a trail of references.

In finance, RAG supports investment research, policy interpretation, and internal audit documentation queries. Software engineers use RAG to navigate API references, log data, debugging history, and architectural documentation. And across enterprises of all sizes, internal copilots rely on RAG to access HR rules, IT procedures, meeting notes, internal training materials, and operational manuals.

The adoption is widespread: Microsoft Copilot, Google Workspace, Notion AI, Databricks, OpenAI’s Retrieval API, and countless custom enterprise copilots are powered by retrieval mechanisms. The reason is simple: business work requires accurate, document-based answers, not statistically plausible guesses.

RAG vs Fine-Tuning

RAG is often confused with fine-tuning, but the two solve different problems.

Fine-tuning is best when the model needs to adopt a particular style, format, or skill. If you want a model to write like your brand, classify documents in a specific way, or follow a strict structure repeatedly, fine-tuning teaches the model behavioral patterns.

RAG, on the other hand, is the solution when the model needs access to external knowledge, especially knowledge that changes frequently or cannot be included in training for privacy or cost reasons. RAG ensures the model answers with facts extracted from your documents rather than its own internal approximations.

The best modern systems often combine both: they fine-tune for behavior while using RAG for domain knowledge. This hybrid approach delivers consistency, accuracy, and reliability without enormous training costs.

Conclusion

RAG is more than a technique. It is the foundation of trustworthy, enterprise-ready AI. It bridges the gap between generative reasoning and factual knowledge, allowing models to use up-to-date information, cite their sources, and adapt to real-world environments.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.