LangGraph & Redis: Build Smarter AI Agents With Memory & Persistence

Last Updated on December 2, 2025 by Editorial Team

Author(s): Kushal Banda

Originally published on Towards AI.

Redis and LangGraph now work together. You can build AI agents that remember conversations across sessions by pairing the LangGraph framework with Redis’ persistent memory layer.

Before this, agents started fresh each conversation. Now they retain context, learn from past interactions, and make better decisions over time. Thread-level persistence keeps individual conversation histories intact, while cross-thread memory lets agents tap into patterns across multiple sessions.

This matters because stateful agents outperform stateless ones. Your agent can reference what happened last week, spot trends, and handle follow-ups without losing context.

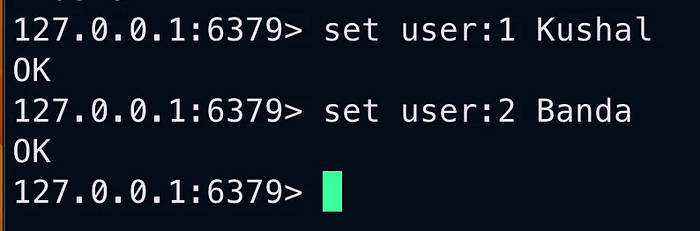

Getting Redis running

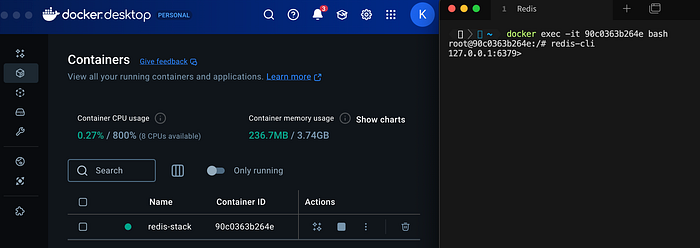

Start with Docker

docker run -d \
  --name redis-stack \
  -p 6379:6379 \
  -p 8001:8001 \
  redis/redis-stack:latest

docker exec -it redis-stack bash

redis-cli

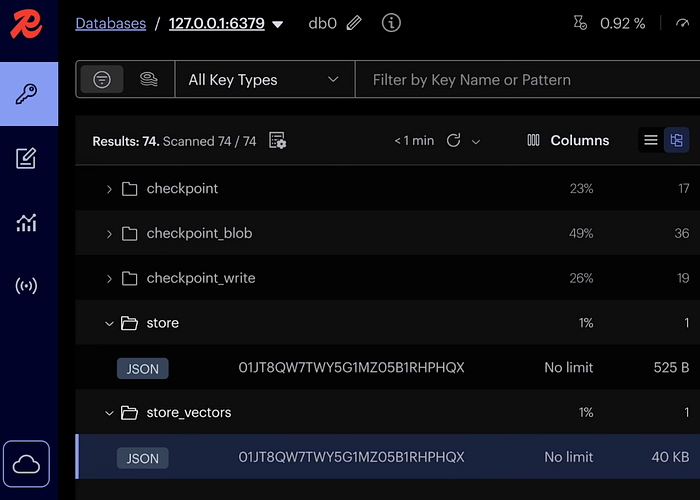

The Redis web UI (Redis Insight) is now live on port 8001, and the Redis server is accepting connections on port 6379, the port redis-cli connects to by default.
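Before installing any client library, you can also confirm from Python that the server on port 6379 is answering. The sketch below is an illustration added here, not part of the original setup. It uses only the standard library and speaks just enough of the Redis wire protocol (RESP) to send PING. The helper names `resp_encode` and `redis_ping` are made up for this example, and it assumes a local server with no password set.

```python
import socket


def resp_encode(*parts: str) -> bytes:
    """Encode a command as a RESP array of bulk strings."""
    out = [f"*{len(parts)}\r\n".encode()]
    for part in parts:
        data = part.encode()
        out.append(b"$" + str(len(data)).encode() + b"\r\n" + data + b"\r\n")
    return b"".join(out)


def redis_ping(host: str = "localhost", port: int = 6379, timeout: float = 2.0) -> bool:
    """Return True if the server replies +PONG to a PING, False otherwise."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            sock.sendall(resp_encode("PING"))
            reply = sock.recv(64)
    except OSError:
        # Connection refused, timeout, or host unreachable
        return False
    return reply.startswith(b"+PONG")
```

Calling `redis_ping()` should return True once the container from the Docker step is running. A False result usually means the port is not published, the container is stopped, or the server requires authentication.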

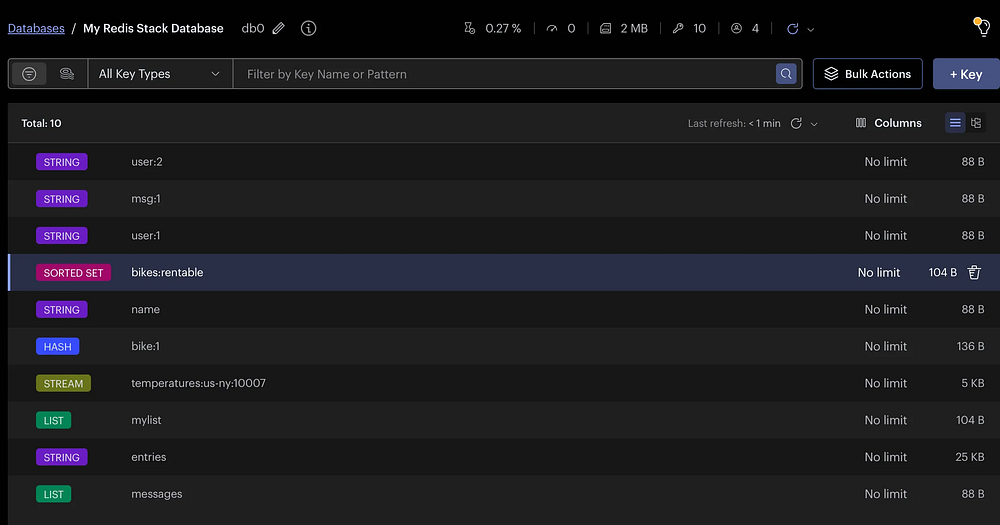

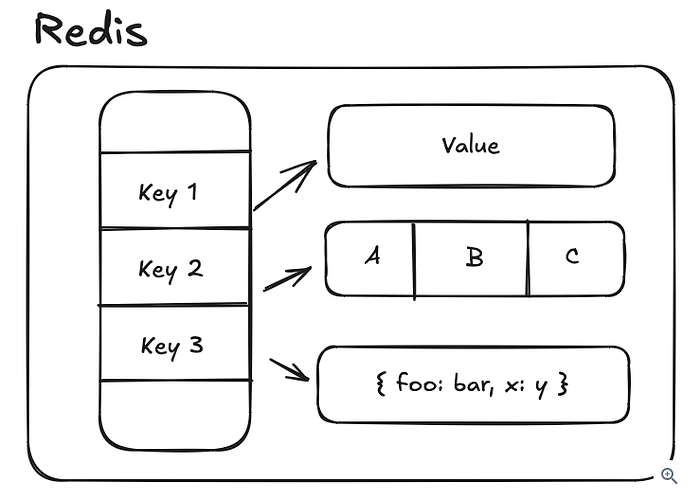

What is Redis?

Redis is a self-described “data structure store” written in C. It keeps data in memory and executes commands on a single thread, which makes it very fast and easy to reason about.

Some of the most fundamental data structures supported by Redis:

- Strings

- Hashes (objects/dictionaries)

- Lists

- Sets

- Sorted Sets (Priority Queues)

- Bloom Filters (probabilistic set membership; allows false positives)

- Geospatial Indexes

- Time Series

In addition to simple data structures, Redis also supports different communication patterns like Pub/Sub and Streams, partially standing in for more complex setups like Apache Kafka or AWS SNS (Simple Notification Service) / SQS (Simple Queue Service).

The core structure underneath Redis is a key-value store. Keys are strings, while values can be any of the data structures Redis supports: binary data and strings, sets, lists, hashes, sorted sets, and so on. Every object in Redis has a key.
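To make the key-to-structure mapping concrete, here is a small sketch using the redis-py client (`pip install redis`). It is an illustration added for this article: the key names (`user:1:name` and so on) and the function name `demo_data_structures` are invented. It expects a client such as `redis.Redis(host="localhost", port=6379, decode_responses=True)` pointed at the container started earlier.

```python
def demo_data_structures(r):
    """Write and read one value of each core type through a redis-py client."""
    # String: a plain value under a key
    r.set("user:1:name", "Bob")
    name = r.get("user:1:name")

    # Hash: field/value pairs, similar to a dictionary
    r.hset("user:1:profile", mapping={"city": "nyc", "plan": "free"})
    profile = r.hgetall("user:1:profile")

    # List: ordered, allows duplicates
    r.rpush("user:1:history", "hello", "what's the weather?")
    history = r.lrange("user:1:history", 0, -1)

    # Set: unordered, unique members
    r.sadd("user:1:tags", "beta", "python")
    tags = r.smembers("user:1:tags")

    # Sorted set: members ranked by a numeric score
    r.zadd("leaderboard", {"alice": 120, "bob": 95})
    ranking = r.zrange("leaderboard", 0, -1, withscores=True)

    return {"name": name, "profile": profile, "history": history,
            "tags": tags, "ranking": ranking}
```

Every value lives under its own string key, and the command family (`set`/`get`, `hset`/`hgetall`, `rpush`/`lrange`, and so on) is what determines the structure stored there.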

Capabilities

- Redis as a Cache

- Redis as a Distributed Lock

- Redis for Leaderboards

- Redis for Rate Limiting

- Redis for Proximity Search

- Redis for Event Sourcing

- Redis for Pub/Sub
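As one example from the list above, rate limiting is often built on two commands, INCR and EXPIRE. The following is a minimal fixed-window sketch under stated assumptions, not a production limiter: the function name `allow_request` and the `ratelimit:` key prefix are hypothetical, and `r` is assumed to be a redis-py client.

```python
def allow_request(r, user_id: str, limit: int = 5, window_seconds: int = 60) -> bool:
    """Fixed-window rate limit: allow at most `limit` calls per window."""
    key = f"ratelimit:{user_id}"
    count = r.incr(key)  # atomic increment; a missing key starts at 1
    if count == 1:
        # First hit in this window: start the countdown that resets the counter
        r.expire(key, window_seconds)
    return count <= limit
```

Because INCR is atomic, concurrent workers share one counter without extra locking. One known weakness of this simple form is that a crash between the two commands leaves a counter with no expiry, so real deployments usually wrap both steps in a transaction or a server-side script.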

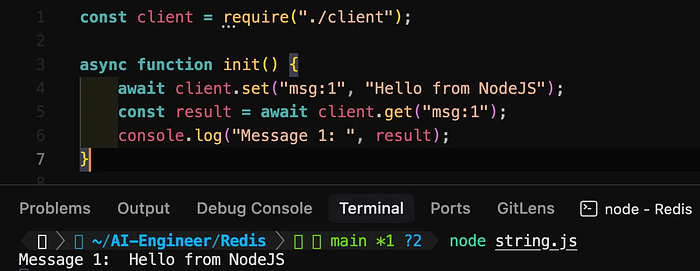

Redis using NodeJS

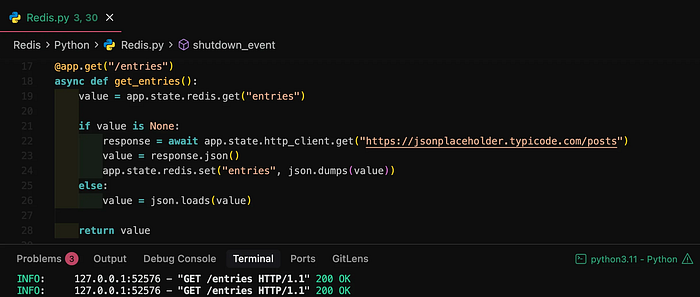

Redis using Python (FastAPI)

Redis for AI agents?

When building effective AI agents, memory is crucial. The most successful implementations use simple, composable patterns that manage memory effectively. Redis is well suited to this role:

- High-performance persistence: Ultra-fast read/write operations (<1ms latency) for storing agent state

- Flexible memory types: Support for both short-term (thread-level) and long-term (cross-thread) memory

- Vector capabilities: Built-in vector search for semantic memory retrieval

- Scalability: Linear scaling for production deployments with increasing memory needs

- Developer-friendly: Simple implementation that complements LangGraph’s focus on composable patterns

How langgraph-checkpoint-redis works

The langgraph-checkpoint-redis package offers two core capabilities that map directly to memory patterns in agentic systems:

1. Redis checkpoint savers: Thread-level “short-term” memory

RedisSaver and AsyncRedisSaver provide thread-level persistence, allowing agents to maintain continuity across multiple interactions within the same conversation thread:

- Preserves conversation state: Perfect for multi-turn interactions where context matters

- Efficient JSON storage: Optimized for fast retrieval of complex state objects

- Synchronous and async APIs: Flexibility for different application architectures

from typing import Literal

from langchain_core.tools import tool
from langchain_openai import ChatOpenAI
from langgraph.prebuilt import create_react_agent
from langgraph.checkpoint.redis import RedisSaver

# Define a simple tool
@tool
def get_weather(city: Literal["nyc", "sf"]):
    """Use this to get weather information."""
    if city == "nyc":
        return "It might be cloudy in nyc"
    elif city == "sf":
        return "It's always sunny in sf"
    else:
        raise AssertionError("Unknown city")

# Set up model and tools
tools = [get_weather]
model = ChatOpenAI(model="gpt-4o-mini", temperature=0)

# Create Redis persistence
REDIS_URI = "redis://localhost:6379"
with RedisSaver.from_conn_string(REDIS_URI) as checkpointer:
    # Initialize Redis indices (only needed once)
    checkpointer.setup()

    # Create agent with memory
    graph = create_react_agent(model, tools=tools, checkpointer=checkpointer)

    # Use the agent with a specific thread ID to maintain conversation state
    config = {"configurable": {"thread_id": "user123"}}
    res = graph.invoke({"messages": [("human", "Which state did I ask?")]}, config)

    # Extract clean response - get the last AI message content
    res = res["messages"][-1].content

This simple setup allows the agent to remember the conversation history for “user123” across multiple interactions. The thread ID acts as a conversation identifier, and all state is stored in Redis for fast retrieval.
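To make the role of the thread ID concrete, here is a toy in-memory model of thread-level persistence. It is a conceptual sketch only and does not reflect how RedisSaver is implemented: `ToyCheckpointer` and `invoke` are hypothetical names, and a plain dictionary stands in for Redis.

```python
from collections import defaultdict


class ToyCheckpointer:
    """In-memory stand-in for a checkpoint saver: one message list per thread."""

    def __init__(self):
        self._threads = defaultdict(list)

    def load(self, thread_id: str) -> list:
        return list(self._threads[thread_id])

    def save(self, thread_id: str, messages: list) -> None:
        self._threads[thread_id] = list(messages)


def invoke(checkpointer, thread_id: str, user_text: str) -> list:
    """Load prior state for the thread, append the new turn, save it back."""
    messages = checkpointer.load(thread_id)
    messages.append(("human", user_text))
    # A real agent would call the model here; this reply reports how much context it sees.
    messages.append(("ai", f"I can see {len(messages)} message(s) in this thread."))
    checkpointer.save(thread_id, messages)
    return messages


saver = ToyCheckpointer()
invoke(saver, "user123", "What's the weather in sf?")
second = invoke(saver, "user123", "And in nyc?")
other = invoke(saver, "user456", "Hello")
print(len(second), len(other))  # 4 2
```

The second call on "user123" sees the earlier turn, while "user456" starts from an empty history. Swapping the dictionary for Redis is what lets that history survive process restarts.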

2. Redis Store: Cross-thread “long-term” memory

RedisStore and AsyncRedisStore enable cross-thread memory, letting agents access and store information that persists across different conversation threads:

- Vector search capabilities: Retrieve semantically relevant information using embeddings

- Metadata filtering: Find specific memories based on user IDs, categories, or other attributes

- Namespaced organization: Structure memories for different users and use cases

import uuid

from langchain_anthropic import ChatAnthropic
from langchain_core.runnables import RunnableConfig
from langgraph.checkpoint.redis import RedisSaver
from langgraph.graph import START, MessagesState, StateGraph
from langgraph.store.redis import RedisStore
from langgraph.store.base import BaseStore

# Set up model
model = ChatAnthropic(model="claude-3-5-sonnet-20240620")

# Function that uses store to access and save user memories
def call_model(state: MessagesState, config: RunnableConfig, *, store: BaseStore):
    user_id = config["configurable"]["user_id"]
    namespace = ("memories", user_id)

    # Retrieve relevant memories for this user
    memories = store.search(namespace, query=str(state["messages"][-1].content))
    info = "\n".join([d.value["data"] for d in memories])
    system_msg = f"You are a helpful assistant talking to the user. User info: {info}"

    # Store new memories if the user asks to remember something
    last_message = state["messages"][-1]
    if "remember" in last_message.content.lower():
        memory = "User name is Bob"
        store.put(namespace, str(uuid.uuid4()), {"data": memory})

    # Generate response
    response = model.invoke(
        [{"role": "system", "content": system_msg}] + state["messages"]
    )
    return {"messages": response}

# Build the graph
builder = StateGraph(MessagesState)
builder.add_node("call_model", call_model)
builder.add_edge(START, "call_model")

# Initialize Redis persistence and store
REDIS_URI = "redis://localhost:6379"
with RedisSaver.from_conn_string(REDIS_URI) as checkpointer:
    checkpointer.setup()

    with RedisStore.from_conn_string(REDIS_URI) as store:
        store.setup()

        # Compile graph with both checkpointer and store
        graph = builder.compile(checkpointer=checkpointer, store=store)

When using this agent, we can maintain both conversation state (thread-level) and user information (cross-thread) simultaneously:

# Run these calls inside the `with` blocks above, while the Redis connections are open

# First conversation - tell the agent to remember something
config = {"configurable": {"thread_id": "convo1", "user_id": "user123"}}
response = graph.invoke(
    {"messages": [{"role": "user", "content": "Hi! Remember: my name is Bob"}]},
    config,
)

# Second conversation - different thread but same user
new_config = {"configurable": {"thread_id": "convo2", "user_id": "user123"}}
response = graph.invoke(
    {"messages": [{"role": "user", "content": "What's my name?"}]},
    new_config,
)

# Agent should respond with something like "Your name is Bob"
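The second thread can answer because memories are keyed by namespace, here ("memories", user_id), rather than by thread ID. The toy model below illustrates that idea with a plain dictionary. It is a sketch, not the RedisStore implementation: `ToyStore`, its `criteria` parameter, and the `kind` field are hypothetical names chosen for this example.

```python
import uuid
from typing import Optional


class ToyStore:
    """In-memory stand-in for a cross-thread store, keyed by (namespace, key)."""

    def __init__(self):
        self._items = {}

    def put(self, namespace: tuple, key: str, value: dict) -> None:
        self._items[(namespace, key)] = value

    def search(self, namespace: tuple, criteria: Optional[dict] = None) -> list:
        """Return values in a namespace, optionally matching exact field values."""
        criteria = criteria or {}
        return [
            value
            for (ns, _), value in self._items.items()
            if ns == namespace
            and all(value.get(k) == v for k, v in criteria.items())
        ]


store = ToyStore()

# Thread "convo1": two different users each ask the agent to remember a fact.
store.put(("memories", "user123"), str(uuid.uuid4()),
          {"data": "User name is Bob", "kind": "profile"})
store.put(("memories", "user456"), str(uuid.uuid4()),
          {"data": "User name is Ana", "kind": "profile"})

# Thread "convo2": a new conversation, but the same user_id yields the same namespace.
found = store.search(("memories", "user123"), criteria={"kind": "profile"})
print([item["data"] for item in found])  # ['User name is Bob']
```

Nothing in the lookup mentions a thread, which is why a memory written in one conversation is visible in the next, and why one user's namespace never leaks into another's.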

Memory types and agentic systems

For memory in agentic workflows, Turing Post categorizes AI memory into long-term and short-term components:

Long-term memory includes:

- Explicit (declarative) memory for facts and structured knowledge

- Implicit memory for learned patterns and procedures

Short-term memory consists of:

- Context window (amount of information available in current interaction)

- Working memory (dynamic information used for current reasoning)

Redis excels at supporting both memory types for agentic workflows:

- Thread-level persistence with RedisSaver handles working memory and context

- Cross-thread storage with RedisStore provides long-term explicit memory

- Vector search through Redis enables efficient retrieval of relevant memories
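To show what retrieving relevant memories means in practice, here is a brute-force sketch of similarity ranking in plain Python. The three-dimensional vectors are invented for illustration; a real embedding model produces vectors with hundreds or thousands of dimensions, and Redis performs the search server-side with an index rather than scanning a Python list. The names `cosine` and `top_k` are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, memories, k=2):
    """Rank (text, vector) pairs by cosine similarity to the query."""
    ranked = sorted(memories, key=lambda m: cosine(query_vec, m[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


memories = [
    ("User name is Bob", [0.9, 0.1, 0.0]),
    ("User prefers metric units", [0.1, 0.9, 0.1]),
    ("User lives in sf", [0.2, 0.1, 0.9]),
]
query = [0.8, 0.2, 0.1]  # pretend embedding of "What's my name?"
print(top_k(query, memories, k=1))  # ['User name is Bob']
```

Only the closest memories are passed to the model, which keeps the prompt short even when a user has accumulated many stored facts.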

Building better agents with memory

Successful agentic systems are built with simplicity and clarity, not complexity. The Redis integration for LangGraph adopts this philosophy by providing straightforward, performant memory solutions that can be composed into sophisticated agent architectures.

Whether you’re building a simple chatbot that remembers conversation context or a complex agent that maintains user profiles across multiple interactions, langgraph-checkpoint-redis gives you the building blocks to make it happen efficiently and reliably.

Getting started

To start using Redis with LangGraph today:

pip install langgraph-checkpoint-redis

Check out the GitHub repository at https://github.com/redis-developer/langgraph-redis for comprehensive documentation and examples.


Published via Towards AI
