Part 1: Model Context Protocol (MCP) Fundamentals

Last Updated on November 25, 2025 by Editorial Team

Author(s): Fernando Prieto

Originally published on Towards AI.

I recently built an MCP server in Kotlin that acts as an HTTP client you can control with natural language. It connects to tools like Cursor and Claude, and the whole process taught me a lot, from figuring out the right architecture to understanding the protocols behind the scenes and how an LLM actually talks to it.

Check out my GitHub repo for more context: MCP-Http-Client. It’s a Kotlin-based server that lets LLMs make HTTP/HTTPS requests, run GraphQL queries, and even establish TCP/Telnet connections. It also includes an LRU (Least Recently Used) cache with TTL expiration, which automatically caches GET requests to improve performance and reduce redundant calls. This is especially useful when LLMs repeatedly hit the same endpoints during reasoning.
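
To make the caching idea concrete, here is a minimal sketch of an LRU cache with TTL expiration. It is written in Python for brevity and is not the repository's Kotlin implementation; the class name `TtlLruCache`, its parameters, and the injectable `clock` are all assumptions made for illustration.

```python
import time
from collections import OrderedDict
from typing import Callable, Optional, Tuple


class TtlLruCache:
    """Hypothetical cache: evicts the least recently used entry and expires old ones."""

    def __init__(self, max_entries: int, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_entries = max_entries
        self._ttl_seconds = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: "OrderedDict[str, Tuple[float, str]]" = OrderedDict()

    def get(self, key: str) -> Optional[str]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if self._clock() - stored_at >= self._ttl_seconds:
            del self._entries[key]  # entry outlived its TTL
            return None
        self._entries.move_to_end(key)  # mark as most recently used
        return value

    def put(self, key: str, value: str) -> None:
        self._entries[key] = (self._clock(), value)
        self._entries.move_to_end(key)
        while len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # drop the least recently used entry
```

A caller would typically key entries on the request method and URL (for example `"GET https://api.example.com/posts"`) and only consult the cache for GET requests.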

What Is the Model Context Protocol?

The Model Context Protocol (MCP) is an open standard for how AI assistants talk to external tools.

Think of it as a toolbelt for LLMs — it defines how a model can discover, call, and communicate with tools in a structured and predictable way.

Here’s what makes MCP special:

- Standardised Communication: It uses JSON-RPC 2.0 messages, most commonly over standard input/output (stdio) for local servers (an HTTP-based transport also exists), so everything speaks the same language.

- Tool Discovery: LLMs can list available tools and understand their schemas — like checking a toolbox before getting to work.

- Safe Execution: Each tool declares a JSON Schema for its inputs, and errors come back in a standard shape, which keeps interactions clean and predictable.

- Extensible: You can expose any capability as an MCP tool — HTTP clients, databases, shell commands, you name it.
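
To illustrate the stdio transport mentioned in the list above: each JSON-RPC message travels as one line of JSON terminated by a newline. The sketch below shows that framing in Python; the helper names `encode_message` and `read_messages` are hypothetical, and an in-memory stream stands in for a real server's standard input.

```python
import io
import json
from typing import Iterator, TextIO


def encode_message(message: dict) -> str:
    """Serialize one JSON-RPC message as a single newline-terminated line."""
    # json.dumps escapes newlines inside strings, so the output never spans lines.
    return json.dumps(message, separators=(",", ":")) + "\n"


def read_messages(stream: TextIO) -> Iterator[dict]:
    """Yield one parsed message per non-empty line of the stream."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# Stand-in for sys.stdin: a request followed by a notification (no "id").
fake_stdin = io.StringIO(
    encode_message({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    + encode_message({"jsonrpc": "2.0", "method": "notifications/initialized"})
)
received = list(read_messages(fake_stdin))
```

In a real server the same loop would read from `sys.stdin` and write responses to `sys.stdout`, keeping logs on stderr so they do not corrupt the message stream.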

Why JSON-RPC 2.0 (and Why It’s the Choice for MCP)

When you’ve got an LLM or server that needs to talk to external tools in a structured, safe, and extensible way, a protocol like JSON-RPC 2.0 is a very good fit. Here’s the breakdown of what it is, why it works, and why it’s used by MCP.

What is JSON-RPC 2.0

- JSON-RPC is a lightweight remote procedure-call (RPC) protocol that uses JSON for formatting requests and responses.

- It is transport-agnostic: you can run it over stdio, HTTP, TCP, or WebSockets, whichever you prefer.
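
As a rough illustration of how small the format is, the sketch below builds the four JSON-RPC 2.0 message shapes (request, notification, success response, error response) as plain dictionaries. The helper names and the `echo` method are made up for this example.

```python
import json
from typing import Any, Optional


def build_request(request_id: int, method: str, params: Optional[dict] = None) -> dict:
    """A call that expects a response; the id lets the caller match the reply."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


def build_notification(method: str, params: Optional[dict] = None) -> dict:
    """A one-way message: it has no id, so the receiver must not reply."""
    message = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        message["params"] = params
    return message


def build_result(request_id: int, result: Any) -> dict:
    """A successful response carries `result` and echoes the request id."""
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


def build_error(request_id: int, code: int, message: str) -> dict:
    """A failed response carries `error` instead of `result`."""
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


# The same JSON text can travel over any transport (stdio, HTTP, TCP, WebSockets).
wire_text = json.dumps(build_request(1, "echo", {"text": "hello"}))
```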

Why it’s a good fit for MCP tools

JSON‑RPC 2.0 maps naturally onto how LLMs interact with tools:

- Structured calls: Invoke tools by name with JSON parameters and get a predictable response or error.

- Lightweight: Simple request/response format without complex handshakes.

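- Supports notifications and batching: JSON-RPC allows one‑way calls and several requests in one payload, although whether batches are accepted depends on the MCP specification revision.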

- Transport‑agnostic: Works over stdio, HTTP, TCP, or WebSockets.

- Standardised errors: Makes it easy to handle failures in a consistent way.

In short, it gives MCP a simple, reliable, and flexible communication layer between LLMs and external tools.
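
The points above can be sketched as a tiny dispatcher. This is an illustrative Python sketch, not how any particular MCP SDK is implemented: the function names and the `add` method are assumptions, it only accepts by-name `params`, and it treats any `TypeError` as a bad-arguments error for simplicity. The error codes are the ones reserved by the JSON-RPC 2.0 specification.

```python
from typing import Any, Callable, Dict, List, Optional

# Error codes reserved by the JSON-RPC 2.0 specification.
METHOD_NOT_FOUND = -32601
INVALID_PARAMS = -32602
INTERNAL_ERROR = -32603


def handle(message: dict, methods: Dict[str, Callable[..., Any]]) -> Optional[dict]:
    """Dispatch one message; return a response, or None for a notification."""
    is_notification = "id" not in message
    handler = methods.get(message.get("method"))
    if handler is None:
        outcome = {"error": {"code": METHOD_NOT_FOUND, "message": "Method not found"}}
    else:
        try:
            outcome = {"result": handler(**message.get("params", {}))}
        except TypeError as exc:  # simplification: assume a bad argument list
            outcome = {"error": {"code": INVALID_PARAMS, "message": str(exc)}}
        except Exception as exc:
            outcome = {"error": {"code": INTERNAL_ERROR, "message": str(exc)}}
    if is_notification:
        return None  # one-way call: never answered, even on failure
    return {"jsonrpc": "2.0", "id": message["id"], **outcome}


def handle_batch(batch: List[dict], methods: Dict[str, Callable[..., Any]]) -> List[dict]:
    """Process several messages at once, skipping responses for notifications."""
    responses = (handle(message, methods) for message in batch)
    return [response for response in responses if response is not None]


methods = {"add": lambda a, b: a + b}
ok = handle({"jsonrpc": "2.0", "id": 1, "method": "add", "params": {"a": 2, "b": 3}}, methods)
unknown = handle({"jsonrpc": "2.0", "id": 2, "method": "nope"}, methods)
```

Because every failure comes back as an `error` object with a numeric code, the caller can branch on the code instead of parsing free-form text.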

Why MCP Chooses JSON‑RPC 2.0

MCP relies on a simple, predictable, and interoperable protocol for discovering tools, invoking them, handling responses, and integrating results into LLM reasoning.

Using JSON‑RPC 2.0 avoids reinventing the wheel, provides a widely supported JSON-based RPC format, ensures consistency across tools, simplifies debugging, and gives clear semantics — all essential for building robust toolchains.

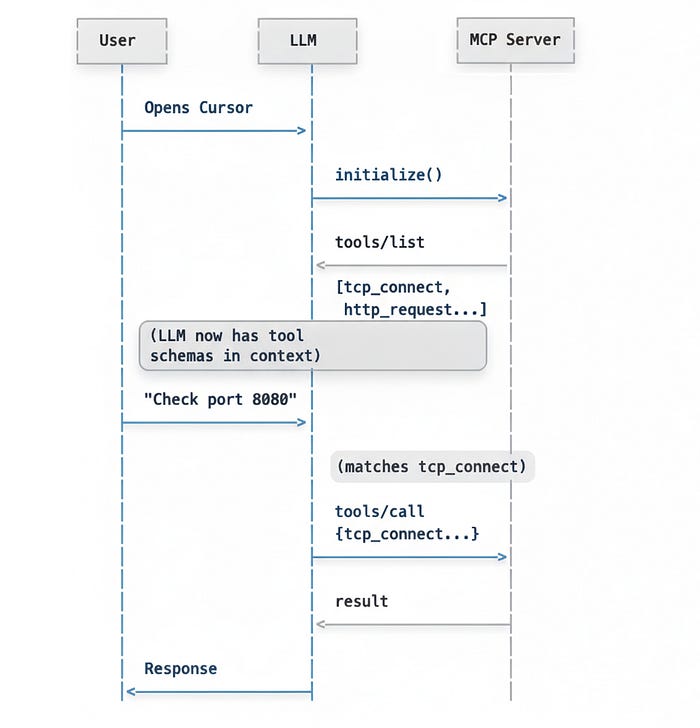

How LLMs Use MCP Tools

When an LLM gets a request from a user, it doesn’t just “magically” know what to do. Under the hood, it follows a reasoning process that looks something like this:

- Intent Recognition: Understand what the user wants in natural language.

- Tool Matching: Find the right MCP tool that can handle that request.

- Parameter Extraction: Convert the user’s request into structured JSON parameters.

- Tool Invocation: Call the MCP tool via JSON-RPC with those parameters.

- Response Integration: Take the tool’s response and blend it into the final answer for the user.

Example:

User: "Get the latest posts from https://api.example.com/posts"

LLM Thinking Process:

→ The user wants to fetch data from a URL

→ This requires an HTTP GET request

→ I have a 'make_request' tool available

→ Parameters needed: url="https://api.example.com/posts", method="GET"

→ Invoke tool with these parameters

→ Format the response for the user
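
The five steps above can be sketched as a single client-side turn. Everything here is a stand-in: `stub_model_pick_tool` replaces the LLM, `stub_make_request` replaces the real `make_request` tool and performs no network I/O, and the final string only marks where the LLM would write its answer.

```python
from typing import Callable, Dict


def stub_model_pick_tool(user_message: str) -> dict:
    """Stand-in for the LLM: the tool call it would emit for the example request."""
    return {
        "name": "make_request",
        "arguments": {"url": "https://api.example.com/posts", "method": "GET"},
    }


def stub_make_request(url: str, method: str = "GET") -> str:
    """Stand-in for the real tool; returns a placeholder instead of calling the network."""
    return f"{method} {url} -> (stubbed response body)"


# Registry mapping tool names to callables, as a client might keep after discovery.
TOOLS: Dict[str, Callable[..., str]] = {"make_request": stub_make_request}


def run_turn(user_message: str) -> str:
    call = stub_model_pick_tool(user_message)                # steps 1-3: intent, tool, parameters
    tool_output = TOOLS[call["name"]](**call["arguments"])   # step 4: tool invocation
    return f"Tool {call['name']} returned: {tool_output}"    # step 5: the LLM would phrase this


answer = run_turn("Get the latest posts from https://api.example.com/posts")
```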

How the LLM “Thinks” When Using MCP Tools

When an LLM handles a request using MCP tools, it follows a structured reasoning process that turns natural language into actionable commands and interprets the results. Here’s a step-by-step example:

0. Tool Discovery Phase (Initial Connection)

When MCP Server Connects:

1. Client (Cursor/Claude) → MCP Server: Sends a tools/list request ("What tools do you provide?")

2. MCP Server → Client: Responds with its tools and their input schemas

Server Response (the result of the tools/list call):

    {
      "tools": [
        {
          "name": "tcp_connect",
          "description": "Test TCP connectivity to a host and port",
          "inputSchema": {
            "type": "object",
            "properties": {
              "host": {"type": "string", "description": "Host to connect to"},
              "port": {"type": "integer", "description": "Port number"},
              "timeout": {"type": "integer", "default": 5}
            },
            "required": ["host", "port"]
          }
        },
        {
          "name": "another_tool",
          ...
        }
      ]
    }
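
On the wire, the listing above is the `result` of a JSON-RPC exchange whose method is `tools/list`. The sketch below shows both sides of that exchange in Python, using only the `tcp_connect` entry; the helper name `tools_from_response` and the hard-coded response text are assumptions for illustration, standing in for what a real server would send.

```python
import json
from typing import Dict

# What the client sends during discovery.
list_request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# Stand-in for the server's reply: the tool list wrapped in a JSON-RPC response.
raw_response = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "tcp_connect",
                "description": "Test TCP connectivity to a host and port",
                "inputSchema": {
                    "type": "object",
                    "properties": {
                        "host": {"type": "string", "description": "Host to connect to"},
                        "port": {"type": "integer", "description": "Port number"},
                        "timeout": {"type": "integer", "default": 5},
                    },
                    "required": ["host", "port"],
                },
            }
        ]
    },
})


def tools_from_response(request: dict, raw: str) -> Dict[str, dict]:
    """Parse a tools/list response and index the tools by name."""
    response = json.loads(raw)
    if response.get("id") != request["id"]:
        raise ValueError("response id does not match the request")
    if "error" in response:
        raise RuntimeError(response["error"]["message"])
    return {tool["name"]: tool for tool in response["result"]["tools"]}


tools = tools_from_response(list_request, raw_response)
```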

3. Client → LLM Context: Injects tool schemas as available functions

LLM Now Knows:

✓ Tool name: "tcp_connect"

✓ What it does: "Test TCP connectivity..."

✓ Parameters: host (string), port (integer), timeout (integer)

✓ Required params: host, port

Tool Registration in LLM Context

The client makes these tools visible to the LLM, conceptually as if the system prompt were extended with something like:

"You have access to the following tools:

tcp_connect(host: string, port: integer, timeout?: integer):

Test TCP connectivity to a host and port.

http_request(url: string, method?: string, headers?: object):

Make HTTP requests to URLs.

..."

This becomes part of the LLM's "awareness" for that conversation.
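
One way a client could produce signature lines like the ones above is to render each tool's input schema as text. The sketch below is a guess at that step, not what Cursor or Claude actually do; `render_signature` is a hypothetical helper, and many clients pass the schemas to the model in a structured form instead.

```python
def render_signature(tool: dict) -> str:
    """Turn one tool definition into a short, prompt-friendly signature."""
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    parts = []
    for name, spec in schema.get("properties", {}).items():
        optional_mark = "" if name in required else "?"  # "?" marks optional parameters
        parts.append(f"{name}{optional_mark}: {spec.get('type', 'any')}")
    return f"{tool['name']}({', '.join(parts)}):\n  {tool['description']}"


tcp_connect_tool = {
    "name": "tcp_connect",
    "description": "Test TCP connectivity to a host and port",
    "inputSchema": {
        "type": "object",
        "properties": {
            "host": {"type": "string", "description": "Host to connect to"},
            "port": {"type": "integer", "description": "Port number"},
            "timeout": {"type": "integer", "default": 5},
        },
        "required": ["host", "port"],
    },
}

signature = render_signature(tcp_connect_tool)
```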

1. Natural Language Understanding

(Now the LLM can match user intent to discovered tools)

User: "Can you check if port 8080 is open on localhost?"

LLM Internal Processing:

→ Intent: Check network connectivity

→ Scans available tools from discovery phase

→ Matches to: tcp_connect (based on description)

→ Target: localhost:8080

→ Action: TCP connection test

→ Tool Match: tcp_connect

The LLM first identifies what the user wants, the target resource, the required action, and which MCP tool can handle it.

2. Parameter Extraction

LLM Schema Awareness:

tcp_connect accepts:

- host (string, required): "localhost"

- port (integer, required): 8080

- timeout (integer, optional): 5 (default)

Extracted from natural language:

host = "localhost"

port = 8080

timeout = 5 (default)

Next, it converts the user’s request into structured parameters matching the tool’s schema.
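
Extracted parameters still have to satisfy the schema before the tool runs. The sketch below checks required fields and basic types against the `tcp_connect` schema and fills in declared defaults. It is a hand-rolled illustration rather than a full JSON Schema validator, `prepare_arguments` is a hypothetical helper, and applying defaults on the caller's side is a design assumption (JSON Schema itself treats `default` as an annotation).

```python
from typing import Any, Dict

# Input schema copied from the tcp_connect listing in the discovery phase.
TCP_CONNECT_SCHEMA = {
    "type": "object",
    "properties": {
        "host": {"type": "string", "description": "Host to connect to"},
        "port": {"type": "integer", "description": "Port number"},
        "timeout": {"type": "integer", "default": 5},
    },
    "required": ["host", "port"],
}

# Only the JSON Schema types used above; bool is excluded because it subclasses int.
TYPE_CHECKS = {
    "string": lambda value: isinstance(value, str),
    "integer": lambda value: isinstance(value, int) and not isinstance(value, bool),
}


def prepare_arguments(schema: Dict[str, Any], arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Check required fields and types, then fill in declared defaults."""
    missing = [name for name in schema.get("required", []) if name not in arguments]
    if missing:
        raise ValueError(f"missing required parameters: {missing}")
    prepared = dict(arguments)
    for name, spec in schema.get("properties", {}).items():
        if name not in prepared:
            if "default" in spec:
                prepared[name] = spec["default"]
            continue
        check = TYPE_CHECKS.get(spec.get("type"))
        if check is not None and not check(prepared[name]):
            raise ValueError(f"{name} must be of type {spec['type']}")
    return prepared


arguments = prepare_arguments(TCP_CONNECT_SCHEMA, {"host": "localhost", "port": 8080})
```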

3. JSON Construction

    {
      "name": "tcp_connect",
      "arguments": {
        "host": "localhost",
        "port": 8080,
        "timeout": 5
      }
    }

The LLM emits the tool name and arguments as JSON. The client then wraps this object as the params of a JSON-RPC tools/call request and sends it to the MCP server.
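
In MCP, the name-and-arguments object above travels as the `params` of a JSON-RPC request whose method is `tools/call`. A minimal sketch of that wrapping step follows; the helper `build_tool_call` and the simple id counter are assumptions for illustration.

```python
import itertools
import json

# Each request needs a unique id so the response can be matched to it.
_request_ids = itertools.count(1)


def build_tool_call(name: str, arguments: dict) -> dict:
    """Wrap a tool name and its arguments in a JSON-RPC 2.0 tools/call request."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


request = build_tool_call("tcp_connect", {"host": "localhost", "port": 8080, "timeout": 5})
wire_text = json.dumps(request)  # the text actually sent to the server
```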

4. Result Interpretation

Tool Response: "TCP Connection to localhost:8080

Status: SUCCESS

Response: ..."

LLM Reasoning:

→ Status = SUCCESS means port is open

→ Translate to user-friendly message

→ Provide context and explanation

Once the tool responds, the LLM interprets the result and prepares a coherent explanation.
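
Before the LLM sees that text, the client has to unpack it from the JSON-RPC response. In MCP, a tool result carries a `content` list (text items have `type` set to `text`) and an `isError` flag. The sketch below extracts the text in Python; `extract_text` is a hypothetical helper, and the response body reuses the sample output above, with its elided part left as is.

```python
def extract_text(response: dict) -> str:
    """Return the text of a tools/call response, raising on either kind of failure."""
    if "error" in response:
        # Protocol-level failure, for example an unknown tool name.
        raise RuntimeError(f"protocol error: {response['error']['message']}")
    result = response["result"]
    text = "\n".join(
        item["text"] for item in result.get("content", []) if item.get("type") == "text"
    )
    if result.get("isError", False):
        # The tool ran but reported a failure of its own.
        raise RuntimeError(f"tool reported failure: {text}")
    return text


call_response = {
    "jsonrpc": "2.0",
    "id": 2,
    "result": {
        "content": [
            {
                "type": "text",
                "text": "TCP Connection to localhost:8080\nStatus: SUCCESS\nResponse: ...",
            }
        ],
        "isError": False,
    },
}

tool_text = extract_text(call_response)
```

The extracted text is then handed back to the LLM, which does the actual interpretation.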

5. User-Friendly Output

"Yes, port 8080 on localhost is open and accepting connections.

I successfully established a TCP connection to it."

Finally, the LLM presents a clear, user-friendly answer to the original request.

Wrapping Up

That’s a quick tour through the fundamentals of the Model Context Protocol (MCP) — what it is, why it matters, and how LLMs use it to communicate with external tools in a structured, predictable way.

Understanding these basics is essential before diving into implementation, because once you start building your own MCP server, concepts like JSON-RPC 2.0, tool schemas, and structured reasoning suddenly click into place.

What’s Next

In Part 2 — MCP Server Architecture and Implementation Guide, I’ll break down how I actually built a working Kotlin-based MCP server from scratch.

We’ll go over:

- How to design a modular and scalable architecture

- How the server handles discovery, requests, and responses

- Key patterns, challenges, and lessons learned along the way

- Some real examples of how it connects with tools like Cursor or Claude

If you found this introduction helpful, stay tuned — the next part dives deep into the technical side and will be a complete reference for anyone building their own MCP server.
