Maths behind ML Algorithms (Logistic Regression and gradient descent)

Last Updated on November 25, 2025 by Editorial Team

Author(s): Atharv Tembhurnikar

Originally published on Towards AI.

Logistic Regression is a supervised machine learning algorithm used for classification problems. Unlike linear regression, which predicts continuous values, it predicts the probability that an input belongs to a specific class.

→ It is used for binary classification, where the output is one of two possible categories such as Yes/No, True/False or 0/1.

→ It uses the sigmoid function to convert a linear combination of the inputs into a probability value between 0 and 1.
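Here is a minimal NumPy sketch of the sigmoid and the 0.5 decision threshold. `sigmoid` and `predict_class` are illustrative helper names, not a library API.

```python
import numpy as np

def sigmoid(z):
    """Map any real-valued score z to a probability in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def predict_class(z, threshold=0.5):
    """Turn scores into 0/1 labels by thresholding the sigmoid output."""
    return (sigmoid(z) >= threshold).astype(int)

scores = np.array([-5.0, -1.0, 0.0, 1.0, 5.0])
print(sigmoid(scores))        # values strictly between 0 and 1
print(predict_class(scores))  # [0 0 1 1 1]
```

Note that a score of exactly 0 maps to a probability of 0.5, which this sketch assigns to class 1.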

Assumptions:

1. Independent observations: no correlation or dependence between the input samples.

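2. Binary dependent variable: the dependent (target) variable should have exactly two categories.

3. Linear relationship between the independent variables and the log odds: the model assumes that the log odds of the dependent variable, $\log\frac{p}{1-p}$, are a linear function of the independent variables, so each predictor changes the log odds by a fixed amount per unit increase.

4. No extreme outliers: extreme values can strongly distort the fitted coefficients.

5. Large sample size: the maximum-likelihood estimates are reliable only with enough observations.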
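Assumption 3 is built into the model itself: taking the log odds of the predicted probability gives back exactly the linear score. The sketch below checks this numerically with made-up weights `w` and bias `b` (purely illustrative, not fitted to any data).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical weights and bias for a 2-feature model (illustration only)
w = np.array([1.5, -0.7])
b = 0.3

x = np.array([[0.0, 0.0], [1.0, 2.0], [-2.0, 0.5]])  # three samples, one per row
p = sigmoid(x @ w + b)                 # predicted P(y=1 | x)
log_odds = np.log(p / (1.0 - p))       # logit of the predicted probability

# The log odds equal the linear score w^T x + b
print(np.allclose(log_odds, x @ w + b))  # True
```

The assumption is therefore about the data: logistic regression fits well only when the true log odds are close to linear in the features.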

– — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

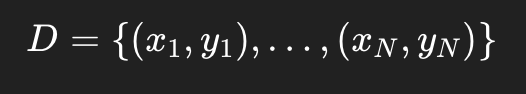

Suppose we have N training samples $(x_i, y_i)$, $i = 1, \dots, N$, where:

- $x_i \in \mathbb{R}^d$ (feature vector)

- $y_i \in \{0, 1\}$ or sometimes $\{-1, +1\}$ (class label)
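The two label conventions describe the same loss. Assuming linear scores $z_i = w^\top x_i$, the cross-entropy with labels in {0, 1} equals $\log(1 + e^{-y_i z_i})$ with labels in {−1, +1}. This sketch (hypothetical helper names `bce_01` and `logistic_loss_pm1`) checks the equivalence numerically.

```python
import numpy as np

def bce_01(z, y01):
    """Cross-entropy loss for labels in {0, 1}, given linear scores z = w^T x."""
    p = 1.0 / (1.0 + np.exp(-z))
    return -(y01 * np.log(p) + (1 - y01) * np.log(1 - p))

def logistic_loss_pm1(z, ypm):
    """Equivalent loss for labels in {-1, +1}: log(1 + exp(-y * z))."""
    return np.log1p(np.exp(-ypm * z))

z = np.array([2.0, -1.0, 0.5, -3.0])
y01 = np.array([1, 0, 0, 1])
ypm = 2 * y01 - 1            # map {0, 1} -> {-1, +1}

print(np.allclose(bce_01(z, y01), logistic_loss_pm1(z, ypm)))  # True
```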

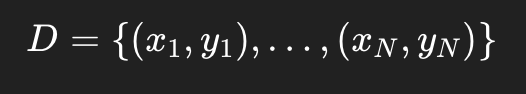

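Let us assume that each class generates data from a Gaussian distribution. Bayes' theorem then gives the posterior probability of class 1:

$$P(y=1 \mid x) = \frac{p(x \mid y=1)\,P(y=1)}{p(x \mid y=1)\,P(y=1) + p(x \mid y=0)\,P(y=0)} = \frac{1}{1 + e^{-a}} = \sigma(a), \qquad a = \ln\frac{p(x \mid y=1)\,P(y=1)}{p(x \mid y=0)\,P(y=0)}$$

If the two Gaussians share the same covariance matrix, $a$ is a linear function of $x$, $a = w^\top x + w_0$. The function $\sigma$ is called the sigmoid (logistic) function, and it outputs probabilities. A probability threshold of 0.5 is used to decide the predicted class.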
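Here is a quick numerical check of the Bayes argument. It assumes the two class-conditional Gaussians share one covariance matrix $\Sigma$, which is the case where the score is linear, with $w = \Sigma^{-1}(\mu_1 - \mu_0)$ and $w_0 = -\tfrac12\mu_1^\top\Sigma^{-1}\mu_1 + \tfrac12\mu_0^\top\Sigma^{-1}\mu_0 + \ln\frac{P(y=1)}{P(y=0)}$. The means, covariance, priors and test point are arbitrary illustrative values.

```python
import numpy as np

def gaussian_pdf(x, mu, cov):
    """Density of a multivariate normal N(mu, cov) at point x."""
    d = len(mu)
    diff = x - mu
    inv = np.linalg.inv(cov)
    norm = 1.0 / np.sqrt((2 * np.pi) ** d * np.linalg.det(cov))
    return norm * np.exp(-0.5 * diff @ inv @ diff)

# Assumed class-conditional Gaussians with a SHARED covariance (illustrative values)
mu0, mu1 = np.array([0.0, 0.0]), np.array([2.0, 1.0])
cov = np.array([[1.0, 0.3], [0.3, 1.5]])
prior0, prior1 = 0.4, 0.6

# Posterior P(y=1 | x) computed directly from Bayes' theorem
x = np.array([1.2, -0.5])
num = gaussian_pdf(x, mu1, cov) * prior1
posterior = num / (num + gaussian_pdf(x, mu0, cov) * prior0)

# The same posterior written as a sigmoid of a linear score w^T x + w0
inv = np.linalg.inv(cov)
w = inv @ (mu1 - mu0)
w0 = -0.5 * mu1 @ inv @ mu1 + 0.5 * mu0 @ inv @ mu0 + np.log(prior1 / prior0)
sigmoid_form = 1.0 / (1.0 + np.exp(-(w @ x + w0)))

print(np.isclose(posterior, sigmoid_form))  # True
```

With different covariance matrices per class, the quadratic terms in $x$ no longer cancel, and the score becomes quadratic rather than linear.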

Now, given the model:

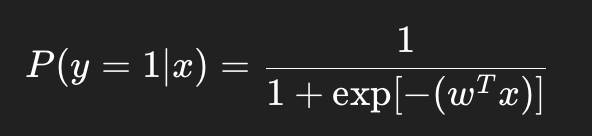

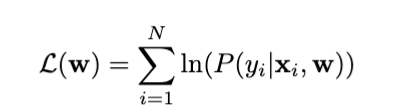

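Writing the model as $P(y_i = 1 \mid x_i) = \sigma(w^\top x_i)$ (with the bias absorbed into $w$ through a constant feature), the likelihood of the training labels is:

$$L(w) = \prod_{i=1}^{N} \sigma(w^\top x_i)^{y_i}\,\bigl(1 - \sigma(w^\top x_i)\bigr)^{1 - y_i}$$

We apply the log to both sides to turn the product into a sum. A sum is easier to differentiate, and it avoids numerical underflow when many probabilities are multiplied together: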

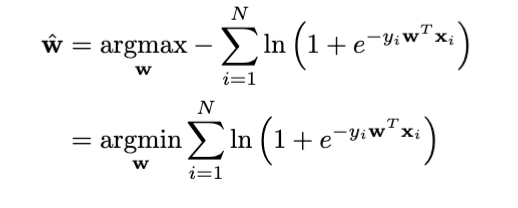

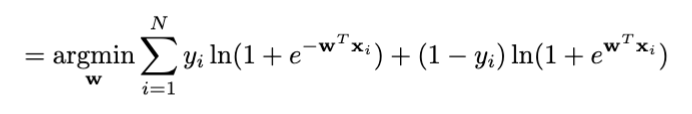

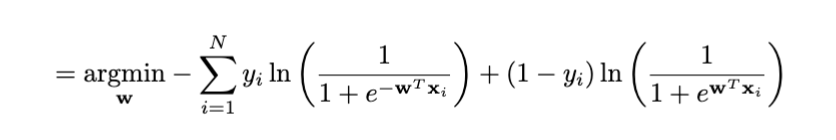

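Substituting the sigmoid function gives the log-likelihood:

$$\ell(w) = \sum_{i=1}^{N} \Bigl[ y_i \log \sigma(w^\top x_i) + (1 - y_i) \log\bigl(1 - \sigma(w^\top x_i)\bigr) \Bigr]$$

We have to maximise this log-likelihood or, equivalently, minimise the negative log-likelihood. Recall that σ(x) asymptotically approaches 1 as x → ∞ and 0 as x → −∞, with σ(0) = 1/2, so every term inside the sum is the log of a probability. The negative log-likelihood averaged over the N samples is also referred to as the Binary Cross-Entropy (BCE) loss:

$$J(w) = -\frac{1}{N} \sum_{i=1}^{N} \Bigl[ y_i \log \sigma(w^\top x_i) + (1 - y_i) \log\bigl(1 - \sigma(w^\top x_i)\bigr) \Bigr]$$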
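The BCE loss translates almost line for line into NumPy. This sketch assumes a design matrix `X` with one sample per row and a leading column of ones for the bias. `bce_loss` is an illustrative name, and the small `eps` plays the same role as the `1e-15` guard in the article's code below.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bce_loss(w, X, y, eps=1e-15):
    """Average negative log-likelihood (binary cross-entropy).

    X: (N, d) design matrix, y: (N,) labels in {0, 1}, w: (d,) weights.
    eps keeps log() away from log(0).
    """
    p = sigmoid(X @ w)
    return -np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps))

X = np.array([[1.0, 0.5], [1.0, -1.0], [1.0, 2.0]])  # first column = bias
y = np.array([1, 0, 1])
print(bce_loss(np.zeros(2), X, y))  # log(2), about 0.6931, since every p = 0.5
```

With all-zero weights, every prediction is 0.5, so the loss is exactly log 2. Any weights that push the probabilities toward the correct labels score lower.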

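Closed-form solution?

Setting the derivative of the loss $J(w)$ to zero gives:

$$\nabla J(w) = \frac{1}{N} \sum_{i=1}^{N} \bigl(\sigma(w^\top x_i) - y_i\bigr)\, x_i = 0$$

Because $w$ sits inside the nonlinear sigmoid, this equation has no analytical (closed-form) solution, unlike the normal equations of linear regression.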
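Differentiating the average cross-entropy gives the gradient $\frac{1}{N} X^\top(\sigma(Xw) - y)$, where the samples are the rows of a matrix $X$. This can be verified without any calculus by comparing it with central finite differences. The sketch uses random data and weights purely for illustration, and `loss` and `grad` are hypothetical helper names.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss(w, X, y):
    p = sigmoid(X @ w)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def grad(w, X, y):
    """Analytical gradient: (1/N) * X^T (sigmoid(Xw) - y)."""
    return X.T @ (sigmoid(X @ w) - y) / len(y)

rng = np.random.default_rng(1)
X = np.hstack([np.ones((20, 1)), rng.normal(size=(20, 2))])  # bias + 2 features
y = rng.integers(0, 2, size=20)
w = rng.normal(size=3)

# Central finite differences, one coordinate direction at a time
h = 1e-6
numeric = np.array([(loss(w + h * e, X, y) - loss(w - h * e, X, y)) / (2 * h)
                    for e in np.eye(3)])
print(np.allclose(grad(w, X, y), numeric, atol=1e-6))  # True
```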

So how do we optimize it? There are various iterative optimization methods:

Gradient Descent

The gradient points in the direction in which the loss increases fastest, so gradient descent moves the weights a small step in the opposite direction.

How it works?

1) Start with a random initial weight vector $w_0$

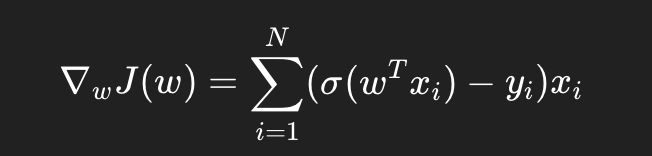

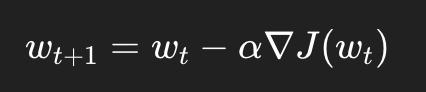

2) Compute the gradient of the loss $J$ at the current weights, $\nabla J(w_t)$

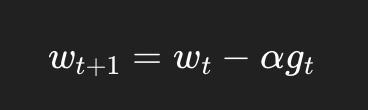

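3) Update the weights using the gradient rule $w_{t+1} = w_t - \eta\,\nabla J(w_t)$, where $\eta$ is the learning rate and $\nabla J$ is the gradient of the loss

4) Repeat until the changes become very small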
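The four steps map onto a short loop. Below is a minimal sketch of full-batch gradient descent. It assumes samples are stored as rows of `X` with a bias column of ones and labels in {0, 1}. The synthetic data, drawn from a logistic model with weights [-0.5, 2, -1], is made up for illustration, and `eta` and `tol` are untuned choices.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gradient_descent(X, y, eta=0.5, tol=1e-6, max_iter=10_000, seed=0):
    """Full-batch gradient descent on the average cross-entropy loss.

    X: (N, d) with a bias column of ones, y: (N,) labels in {0, 1}.
    """
    rng = np.random.default_rng(seed)
    w = rng.normal(scale=0.01, size=X.shape[1])   # 1) small random w0
    for _ in range(max_iter):
        g = X.T @ (sigmoid(X @ w) - y) / len(y)   # 2) gradient over all N samples
        w_new = w - eta * g                       # 3) gradient rule
        if np.linalg.norm(w_new - w) < tol:       # 4) stop when the change is tiny
            return w_new
        w = w_new
    return w

# Synthetic, non-separable data from a known logistic model (illustration only)
rng = np.random.default_rng(42)
X = np.hstack([np.ones((500, 1)), rng.normal(size=(500, 2))])
y = (rng.uniform(size=500) < sigmoid(X @ np.array([-0.5, 2.0, -1.0]))).astype(int)

w_hat = gradient_descent(X, y)
print(w_hat)  # should be close to the generating weights, up to sampling noise
```

Every iteration touches all N samples, which is what makes this variant stable but slow on large datasets.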

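Effect of the learning rate:

1) Too small: convergence is very slow

2) Too large: the updates overshoot the minimum and can diverge

→ Slow but stable, since every update uses the full dataset

→ Good for small datasets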
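The learning-rate trade-off is easiest to see on a toy problem. This sketch is not logistic regression. It minimises $f(w) = w^2$, whose gradient is $2w$, so each update multiplies $w$ by $(1 - 2\eta)$.

```python
def run_gd(eta, w0=1.0, steps=20):
    """Minimise f(w) = w**2 (gradient 2w) with gradient descent; return the final w."""
    w = w0
    for _ in range(steps):
        w = w - eta * 2 * w     # each step multiplies w by (1 - 2 * eta)
    return w

for eta in (0.01, 0.4, 1.1):
    print(eta, run_gd(eta))
```

With η = 0.01, the iterate is still around 0.67 after 20 steps (too slow). With η = 0.4, it is driven essentially to zero. With η = 1.1, |w| grows at every step (divergence).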

— — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

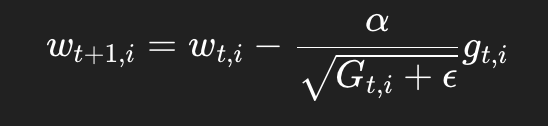

AdaGrad (Adaptive Gradient)

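AdaGrad is based on the idea that each parameter needs its own learning rate. The base learning rate is divided by the square root of the accumulated squared gradients of each parameter, so parameters with large gradients get smaller learning rates over time.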

How it works?

It starts with a high effective learning rate (big steps) and keeps decreasing it, so the steps get smaller over time.

Big early steps make quick progress and can help move past shallow local minima, while small later steps help it converge.
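Below is a minimal AdaGrad sketch for the logistic loss. It uses the standard per-parameter update $w_i \leftarrow w_i - \eta\, g_i / (\sqrt{G_i} + \epsilon)$, where $G_i$ is the running sum of squared gradients for parameter $i$. The synthetic data, `eta` and the iteration count are illustrative choices, not tuned values.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def adagrad(X, y, eta=0.1, eps=1e-8, n_iter=3000):
    """AdaGrad on the average logistic (cross-entropy) loss.

    X: (N, d) with a bias column of ones, y: (N,) labels in {0, 1}.
    """
    w = np.zeros(X.shape[1])
    G = np.zeros_like(w)                      # running sum of squared gradients, one per parameter
    for _ in range(n_iter):
        g = X.T @ (sigmoid(X @ w) - y) / len(y)
        G += g ** 2
        w -= eta * g / (np.sqrt(G) + eps)     # effective step eta / sqrt(G_i) only shrinks
    return w

# Synthetic, non-separable data from a known logistic model (illustration only)
rng = np.random.default_rng(42)
X = np.hstack([np.ones((500, 1)), rng.normal(size=(500, 2))])
y = (rng.uniform(size=500) < sigmoid(X @ np.array([-0.5, 2.0, -1.0]))).astype(int)

w_ada = adagrad(X, y)
print(w_ada)
```

Because `G` never decreases, the step size can only shrink. This is the source of both the stability and the "may stop early" drawback listed below.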

Ups:

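→ Works well for sparse data: rarely active features keep a relatively large learning rate

→ Adaptive, per-parameter learning rate

Downs:

→ The learning rate keeps shrinking (the accumulated sum only grows), so training may stop making progress too early

→ Memory overhead: extra memory for storing $G_i$, the running sum of squared gradients for each parameter

→ Often not a good fit for deep learning, where the ever-shrinking learning rate can stall training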

— — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

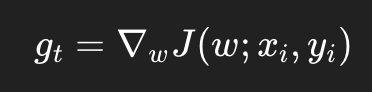

Stochastic Gradient Descent (SGD)

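Instead of the full dataset, SGD uses a single randomly chosen sample $(x_i, y_i)$ for each update:

$$w_{t+1} = w_t - \eta\,\bigl(\sigma(w_t^\top x_i) - y_i\bigr)\, x_i$$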

Ups:

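→ Faster updates: each step costs one sample instead of the whole dataset

→ Good for massive datasets

→ Often better generalization, and the noisy updates cope well with non-convex problems

Downs:

→ Sensitive to the learning rate, which is hard to tune

→ High variance in the updates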

→ Can zig-zag around the minimum
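Here is a minimal SGD sketch. Each update uses the gradient of a single, randomly chosen sample, and the samples are reshuffled every epoch. The step size, the epoch count and the synthetic data are illustrative assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sgd(X, y, eta=0.05, n_epochs=20, seed=0):
    """Plain SGD: every update uses the gradient of ONE randomly chosen sample."""
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    for _ in range(n_epochs):
        for i in rng.permutation(len(y)):              # reshuffle the samples each epoch
            g_i = (sigmoid(X[i] @ w) - y[i]) * X[i]    # single-sample gradient
            w -= eta * g_i
    return w

# Synthetic, non-separable data from a known logistic model (illustration only)
rng = np.random.default_rng(42)
X = np.hstack([np.ones((500, 1)), rng.normal(size=(500, 2))])
y = (rng.uniform(size=500) < sigmoid(X @ np.array([-0.5, 2.0, -1.0]))).astype(int)

w_sgd = sgd(X, y)
print(w_sgd)
```

With a constant `eta`, the weights keep jittering around the minimum instead of settling exactly on it. This is the zig-zag behaviour listed above, and a decaying learning rate is a common remedy.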

— — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

Mini Batch SGD

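Instead of a single sample, it computes each update from a mini-batch of B randomly chosen samples (1 < B < N), averaging their gradients.

→ Smoother convergence than SGD, because averaging reduces the variance of the updates

→ Best for deep learning, where mini-batches also make efficient use of vectorized hardware such as GPUs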
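Here is a minimal mini-batch sketch. `batch_size=32` is an assumed illustrative value. Setting it to 1 recovers plain SGD, and setting it to the dataset size recovers full-batch gradient descent.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def minibatch_sgd(X, y, batch_size=32, eta=0.1, n_epochs=50, seed=0):
    """Mini-batch SGD: each update averages the gradient over batch_size samples."""
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    N = len(y)
    for _ in range(n_epochs):
        idx = rng.permutation(N)                           # reshuffle every epoch
        for start in range(0, N, batch_size):
            b = idx[start:start + batch_size]              # indices of one mini-batch
            g = X[b].T @ (sigmoid(X[b] @ w) - y[b]) / len(b)
            w -= eta * g
    return w

# Synthetic, non-separable data from a known logistic model (illustration only)
rng = np.random.default_rng(42)
X = np.hstack([np.ones((500, 1)), rng.normal(size=(500, 2))])
y = (rng.uniform(size=500) < sigmoid(X @ np.array([-0.5, 2.0, -1.0]))).astype(int)

w_mb = minibatch_sgd(X, y)
print(w_mb)
```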

— — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — — —

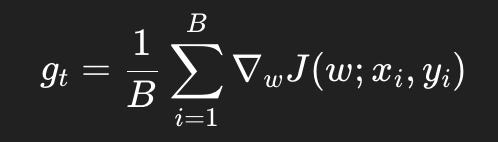

Newton’s Method

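Newton’s method is a second-order optimization algorithm that incorporates curvature information from the Hessian matrix $H(w)$ of the loss. Each update is:

$$w_{t+1} = w_t - H(w_t)^{-1}\,\nabla J(w_t)$$

where $\nabla J$ is the gradient of the loss. For logistic regression, $H(w) = \frac{1}{N}\sum_{i=1}^{N} \sigma(w^\top x_i)\bigl(1 - \sigma(w^\top x_i)\bigr)\, x_i x_i^\top$.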

How it works?

→ On a quadratic objective it reaches the minimum in a single step, and near the optimum it converges quadratically, so it needs very few iterations.

→ Computational cost: computing and inverting H(w) is expensive for high-dimensional problems.

→ If H(w) is not positive definite, Newton’s method may not converge.

→ If the Hessian is ill-conditioned (its eigenvalues vary widely), the step sizes can be unstable.

(This method is a bit more complex, so just focus on its pros and cons to help you decide when to use it in the future.)
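For logistic regression on small problems, Newton's method is cheap enough to try directly. In this setting it is also known as iteratively reweighted least squares (IRLS). This sketch assumes the Hessian $\frac{1}{N} X^\top S X$ with $S = \mathrm{diag}\bigl(p_i(1 - p_i)\bigr)$. It solves a linear system rather than inverting $H$ explicitly. The data is synthetic and illustrative.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def newton(X, y, n_iter=20, tol=1e-10):
    """Newton's method (IRLS) for logistic regression.

    X: (N, d) with a bias column of ones, y: (N,) labels in {0, 1}.
    """
    w = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = sigmoid(X @ w)
        g = X.T @ (p - y) / len(y)                  # gradient of the average loss
        H = (X.T * (p * (1 - p))) @ X / len(y)      # Hessian X^T S X / N
        step = np.linalg.solve(H, g)                # solve H step = g (no explicit inverse)
        w -= step
        if np.linalg.norm(step) < tol:
            break
    return w

# Synthetic, non-separable data from a known logistic model (illustration only)
rng = np.random.default_rng(42)
X = np.hstack([np.ones((500, 1)), rng.normal(size=(500, 2))])
y = (rng.uniform(size=500) < sigmoid(X @ np.array([-0.5, 2.0, -1.0]))).astype(int)

w_newton = newton(X, y)
print(w_newton)
```

On well-behaved data like this, it typically needs far fewer iterations than gradient descent. The price is forming and solving a d × d system at every step, which is exactly the cost listed above for high-dimensional problems.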

Code implementation of Logistic Regression:

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import make_blobs

N = 1000

X, y = make_blobs(n_samples=N, n_features=2, centers=2, cluster_std= 1,random_state=1001)

X = np.transpose(X)

N = 1000

X, y = make_blobs(n_samples=N, n_features=2, centers=2, cluster_std= 1,random_state=1001)

X = np.transpose(X)

N_tr = int(N*0.8)

N_tst = N-N_tr

x_tr = X[:, 0:N_tr]

x_tr = np.vstack((np.ones(N_tr), x_tr))

y_tr = np.transpose(y[0:N_tr])

x_tst= X[:, N_tr:]

x_tst = np.vstack((np.ones(N_tst), x_tst))

y_tst = np.transpose(y[N_tr:])

def g_new(w, x):

return 1/ (1+ np.exp(-np.dot(w.T,x)))

def logistic_cost (w, x, y):

N = x.shape[1]

cost = 0

for i in range(N):

g_val = g_new(w, x[:, i:i+1])

cost += -y[i] * np.log(g_val + 1e-15) - (1- y[i]) * np.log(1- g_val+ 1e-15)

return float(cost/N)

def quadratic_cost(w,x,y):

N = x.shape[1]

cost=0

for i in range(N):

pred = np.dot(w.T,x[:, i:i+1])

cost += (pred-y[i])**2

return float(cost/N)

def logistic_gradient(w, x, y):

N = x.shape[1]

grad = np.zeros_like(w)

for i in range(N):

g_val = g_new(w, x[:, i:i+1])

grad += (g_val - y[i]) * x[:, i:i+1]

return grad/N

epsilon = 1e-3

d= np.shape(x_tr)[0]

w = np.zeros([d,1])

eta = 0.1

max_iterations = 10000

train_logistic_costs = []

test_logistic_costs = []

train_quadratic_costs = []

test_quadrati_costs = []

for t in range(max_iterations):

grad = logistic_gradient(w, x_tr, y_tr)

w_new = w - eta* grad

train_logistic_costs.append(logistic_cost(w_new, x_tr, y_tr))

test_logistic_costs.append(logistic_cost(w_new, x_tst, y_tst))

train_quadratic_costs.append(quadratic_cost(w_new, x_tr, y_tr))

test_quadrati_costs.append(quadratic_cost(w_new, x_tst, y_tst))

if np.linalg.norm(w_new - w) < epsilon:

break

w = w_new

w_star = w

print('optimal weight vector:', w_star.flatten())

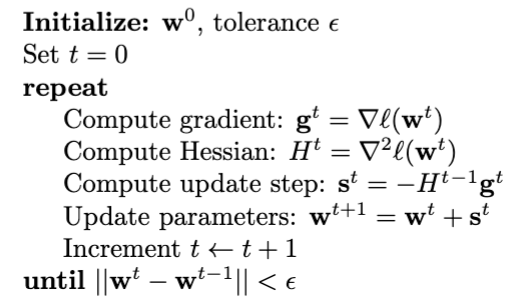

plt.figure(figsize=(8, 6))

x1_min, x1_max = X[0, :].min() - 1, X[0, :].max() + 1

x2_min, x2_max = X[1, :].min() - 1, X[1, :].max() + 1

xx1, xx2 = np.meshgrid(np.linspace(x1_min, x1_max, 200),np.linspace(x2_min, x2_max, 200))

Z = np.zeros(xx1.shape)

for i in range(xx1.shape[0]):

for j in range(xx1.shape[1]):

x_point = np.array([[1], [xx1[i, j]], [xx2[i, j]]])

Z[i, j] = g_new(w_star, x_point)

plt.contourf(xx1, xx2, Z, levels=[0, 0.5, 1], alpha=0.3, colors=['blue', 'red'])

plt.contour(xx1, xx2, Z, levels=[0.5], colors='black', linewidths=2.5, linestyles='--')

plt.scatter(x_tr[1, y_tr == 0], x_tr[2, y_tr == 0], c='blue', marker='o', s=50, label='Class 0', edgecolors='k', alpha=0.7)

plt.scatter(x_tr[1, y_tr == 1], x_tr[2, y_tr == 1], c='red', marker='s', s=50, label='Class 1', edgecolors='k', alpha=0.7)

plt.xlabel('x1')

plt.ylabel('x2')

plt.title('Decision Regions')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

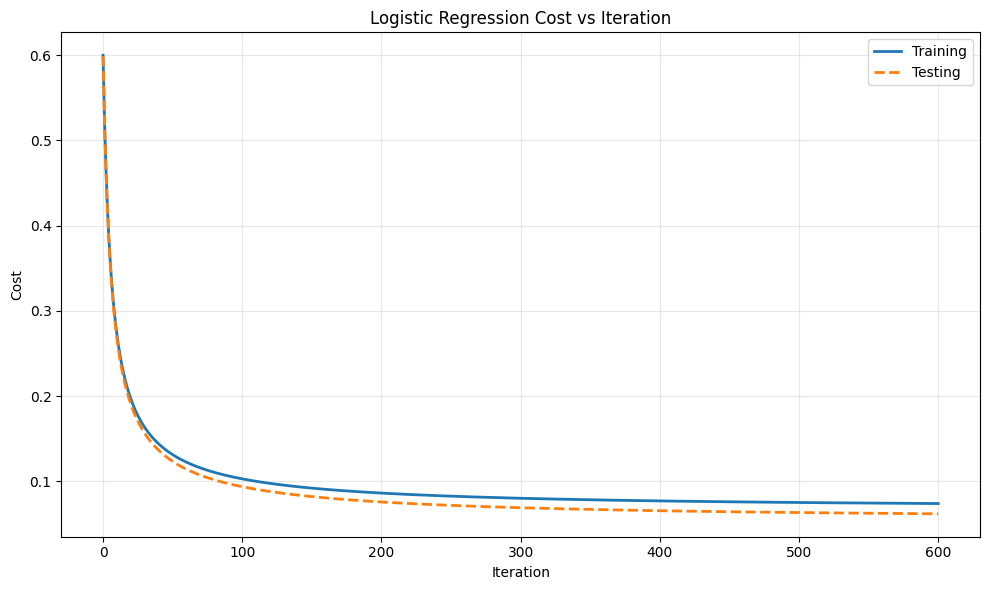

plt.figure(figsize=(10,6))

plt.plot(train_logistic_costs, label='Training', linewidth=2)

plt.plot(test_logistic_costs, label='Testing', linewidth=2, linestyle='--')

plt.xlabel('Iteration')

plt.ylabel('Cost')

plt.title('Logistic Regression Cost vs Iteration')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

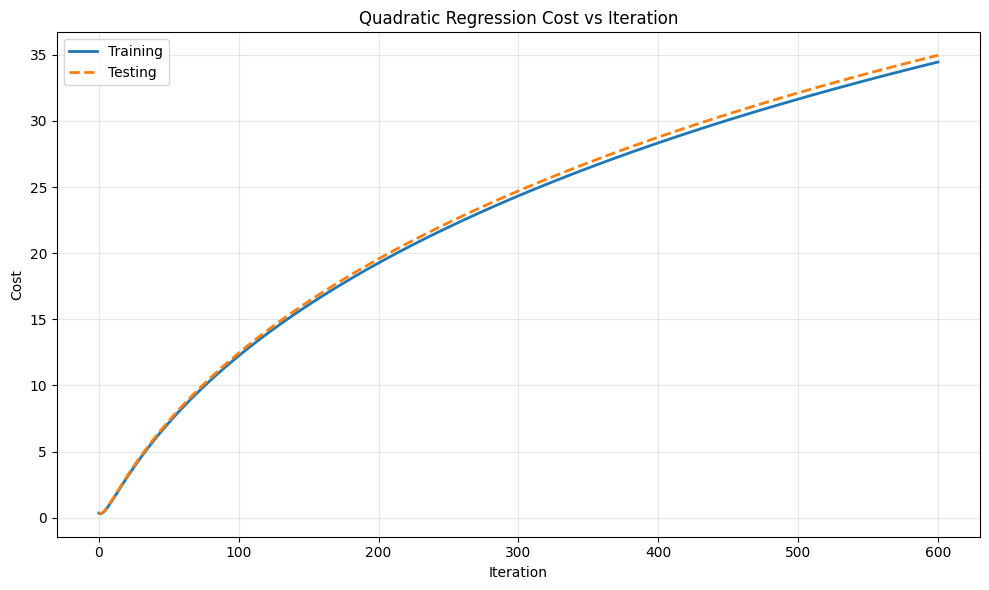

plt.figure(figsize=(10,6))

plt.plot(train_quadratic_costs, label='Training', linewidth=2)

plt.plot(test_quadrati_costs, label='Testing', linewidth=2, linestyle='--')

plt.xlabel('Iteration')

plt.ylabel('Cost')

plt.title('Quadratic Regression Cost vs Iteration')

plt.legend()

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

def predict(w,x):

predictions = np.zeros(x.shape[1])

for i in range(x.shape[1]):

predictions[i] = 1 if g_new(w, x[:, i:i+1]) >= 0.5 else 0

return predictions

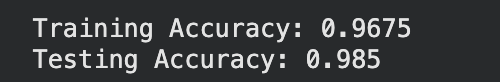

train_pred = predict(w_star, x_tr)

test_pred = predict(w_star, x_tst)

train_accuracy = np.mean(train_pred == y_tr)

test_accuracy = np.mean(test_pred == y_tst)

print('Training Accuracy:', train_accuracy)

print('Testing Accuracy:', test_accuracy)

Now try this code with different random states and compare the results you get.

