Will You Spot the Leaks?

Author(s): Marco Hening Tallarico

Originally published on Towards AI.

Not another explanation

You’ve probably heard of data leakage, and you might know both flavours well: target leakage and train-test split contamination. But will you spot the holes in my faulty logic, or the oversights in my optimistic code? Let’s find out.

I’ve seen many articles on data leakage, and I found them quite insightful; however, they tend to focus on the theory. I found them lacking in examples that zero in on the specific lines of code or decisions that lead to an overly optimistic model.

My goal in this article is practical rather than theoretical: to put your data science skills to the test with a worked example and see whether you can spot every decision I make that leads to data leakage.

Solutions at the end

An Optional Review

1. Target (Label) Leakage

When features contain information about what you’re trying to predict

- Direct Leakage: Features computed directly from the target → Example: Using “days overdue” to predict loan default

- Indirect Leakage: Features that serve as proxies for the target → Example: Using “insurance payout amount” to predict hospital readmission

- Post-Event Aggregates: Using data from after the prediction point → Example: Including “total calls in first 30 days” for a 7-day churn model
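To make the post-event case concrete, here is a minimal pandas sketch. The call log, dates, and the `calls_before_cutoff` helper are all hypothetical, and it assumes the churn model scores each customer 7 days after signup, so only calls made before that moment are legitimate features. Counting calls over 30 days peeks more than three weeks past the prediction point.

```python
import pandas as pd

# Hypothetical call log: one row per customer support call
calls = pd.DataFrame({
    "customer_id": [1, 1, 1, 2, 2],
    "call_time": pd.to_datetime([
        "2024-01-02", "2024-01-05", "2024-01-20",  # customer 1
        "2024-01-03", "2024-01-25",                # customer 2
    ]),
})
# Hypothetical signup dates, indexed by customer_id
signup = pd.Series(pd.to_datetime(["2024-01-01", "2024-01-01"]),
                   index=[1, 2], name="signup")

def calls_before_cutoff(calls, signup, days):
    """Count each customer's calls in the first `days` days after signup."""
    merged = calls.join(signup, on="customer_id")
    in_window = merged["call_time"] < merged["signup"] + pd.Timedelta(days=days)
    counts = merged[in_window].groupby("customer_id").size()
    # Customers with no calls in the window get 0 instead of disappearing
    return counts.reindex(signup.index, fill_value=0)

safe = calls_before_cutoff(calls, signup, days=7)    # known at the 7-day prediction point
leaky = calls_before_cutoff(calls, signup, days=30)  # includes calls made after that point
```

Only `safe` belongs in the feature set; `leaky` quietly counts calls that happen after the model would have made its prediction.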

2. Train-Test (Split) Contamination

When test set information leaks into your training process

- Exploratory Data Analysis Leakage: Analyzing full dataset before splitting → Example: Examining correlations or covariance matrices of entire dataset → Fix: Perform exploratory analysis only on training data

- Preprocessing Leakage: Fitting transformations before splitting data → Examples: Computing covariance matrices, scaling, normalization on full dataset → Fix: Split first, then fit preprocessing on train only

- Temporal Leakage: Ignoring time order in time-dependent data → Fix: Maintain chronological order in splits

- Duplicate Leakage: Same/similar records in both train and test → Fix: Ensure variants of an entity stay entirely in one split

- Cross-Validation Leakage: Information sharing between CV folds → Fix: Keep all transformations inside each CV loop

- Entity (Identifier) Leakage: A high‑cardinality ID (e.g. Tail #, Customer ID, Flight Number) appears in both train and test, so the model “learns” that ID’s history rather than real predictive signals → Fix: Drop the ID columns or use a group-aware split so each entity stays entirely in one split
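Cross-validation leakage is easy to underestimate. The following experiment is a classic illustration rather than something from the original article: on pure noise, selecting features on the full dataset before cross-validating produces an inflated score, while putting the selector inside a scikit-learn `Pipeline` refits it on each training fold only. The sample sizes, `k=20`, and the seed are arbitrary choices.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5000))   # pure noise features
y = rng.integers(0, 2, size=100)   # labels unrelated to X

# Leaky: the selector sees every row, including each fold's validation rows
X_selected = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky = cross_val_score(LogisticRegression(max_iter=1000), X_selected, y, cv=5).mean()

# Honest: selection is refit inside each training fold
pipe = make_pipeline(SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000))
honest = cross_val_score(pipe, X, y, cv=5).mean()

print(f"leaky CV accuracy:  {leaky:.2f}")
print(f"honest CV accuracy: {honest:.2f}")
```

Exact numbers depend on the seed, but the leaky estimate typically lands well above the 50% chance level while the honest one stays near it, even though there is no signal to find.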

Let the Games Begin

To make this more fun, I’ve structured it as a game with the following rewards:

- +1 for identifying a column that leads to data leakage.

- +1 for identifying a problematic preprocessing step.

- +1 for identifying when no data leakage has taken place.

Along the way, when you see:

+10 points

This tells you how many points are available in the preceding section.

Problems in the Columns

Let’s say we are hired by Hexadecimal Airlines to create a machine learning model that identifies the planes most likely to have an accident on their trip. In other words, this is a supervised classification problem with the target variable Outcome in df_flight_outcome.

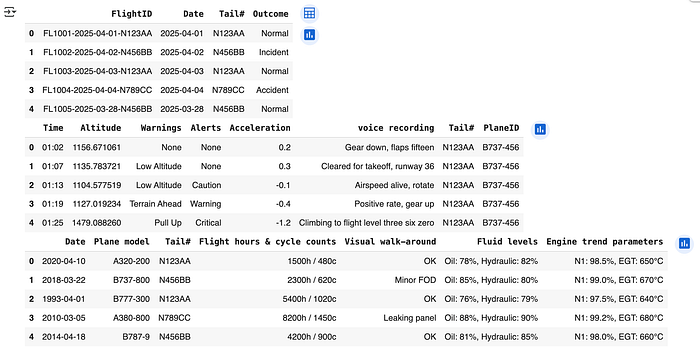

```python
from IPython.display import display

display(
    df_flight_outcome.head(),
    df_black_box.head(),
    df_maintenance.head(),
)
```

This is what we know about our data: maintenance checks and reports are made first thing in the morning, before any departures. Black box data is recorded continuously for each plane on each flight; it monitors vital flight data, including altitude, warnings, alerts, and acceleration. Conversations in the cockpit are also recorded to aid investigations in the event of a crash. At the end of every flight, a report is generated and df_flight_outcome is updated.
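One way to make this kind of reasoning explicit is to record, for every column, the phase in which its value first becomes known, and then keep only the columns available at prediction time. A minimal sketch, assuming predictions are made before departure; the column names and the `Phase`/`usable_features` helpers are hypothetical placeholders, not columns from the airline data.

```python
from enum import IntEnum

class Phase(IntEnum):
    """When a value becomes known, in chronological order."""
    PRE_DEPARTURE = 0
    IN_FLIGHT = 1
    POST_FLIGHT = 2

# Hypothetical registry: column name -> phase in which it is first recorded
AVAILABILITY = {
    "crew_roster_size": Phase.PRE_DEPARTURE,
    "turbulence_events": Phase.IN_FLIGHT,
    "arrival_delay_minutes": Phase.POST_FLIGHT,
}

def usable_features(registry, prediction_phase=Phase.PRE_DEPARTURE):
    """Return the columns known no later than the phase at which we predict."""
    return sorted(col for col, phase in registry.items() if phase <= prediction_phase)

print(usable_features(AVAILABILITY))
```

Keeping the registry next to the feature code means a reviewer can challenge the timing assumption for each column instead of rediscovering it after deployment.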

Question 1: Based on this information, what columns can we immediately remove from consideration?

+12 points

A Convenient Categorical

Now suppose we review the original .csv files we received from Hexadecimal Airlines and realize they went to the trouble of splitting the data into two files, no_accidents.csv and previous_accidents.csv, separating planes with an accident history from planes without one. Believing this to be useful data, we add it to our data frame as a categorical column.
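In pandas, the step described above might look like the following sketch. The two small frames are hypothetical in-memory stand-ins for the CSV files, which would normally be loaded with `pd.read_csv`.

```python
import pandas as pd

# Hypothetical stand-ins for no_accidents.csv and previous_accidents.csv
no_accidents = pd.DataFrame({"Tail#": ["N101", "N102"], "Cycle_Count": [1200, 980]})
previous_accidents = pd.DataFrame({"Tail#": ["N203"], "Cycle_Count": [4310]})

# Stack both files and record which file each row came from
df_fleet = pd.concat(
    [no_accidents.assign(Source_File="no_accidents"),
     previous_accidents.assign(Source_File="previous_accidents")],
    ignore_index=True,
)
df_fleet["Source_File"] = df_fleet["Source_File"].astype("category")
print(df_fleet)
```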

Question 2: Has data leakage taken place?

+1 point

Needles in the Hay

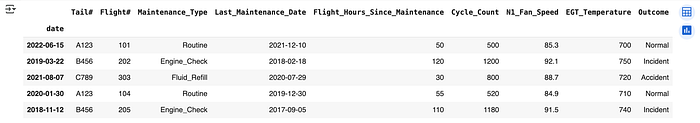

Now let’s say we join our data on Date and Tail# to get the data frame we will use to train our model. In total, we have 12,345 entries covering 10 years of observation, with 558 unique tail numbers and 6 types of maintenance checks. The data has no missing entries and has been joined correctly using SQL, so the join itself introduces no temporal leakage.
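The join is done in SQL in this scenario; an equivalent pandas sketch might look like the following. The frames and values are hypothetical, and `validate="many_to_one"` encodes an assumption that each plane has at most one maintenance record per day (pandas raises a `MergeError` if that is violated).

```python
import pandas as pd

# Hypothetical flights and maintenance records
flights = pd.DataFrame({
    "Date": pd.to_datetime(["2024-03-01", "2024-03-01", "2024-03-02"]),
    "Tail#": ["N101", "N102", "N101"],
    "Flight Number": ["HX10", "HX22", "HX11"],
})
maintenance = pd.DataFrame({
    "Date": pd.to_datetime(["2024-03-01", "2024-03-01", "2024-03-02"]),
    "Tail#": ["N101", "N102", "N101"],
    "Maintenance_Type": ["A-check", "Daily", "Daily"],
})

# Attach each morning's maintenance record to that day's flights for the same plane
df_joined = flights.merge(maintenance, on=["Date", "Tail#"], how="inner",
                          validate="many_to_one")
print(df_joined)
```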

- Tail Number is a unique identifier for the plane.

- Flight Number is a unique identifier for the flight.

- Last Maintenance Day is always in the past.

- Flight hours since last maintenance is calculated prior to departure.

- Cycle count is the number of takeoffs and landings completed, used to track airframe stress.

- N1 fan speed is the rotational speed of the engine’s front fan, shown as a percentage of maximum RPM.

- EGT temperature stands for Exhaust Gas Temperature, which measures engine combustion heat output.
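A feature like “flight hours since last maintenance” is only safe if it is built from flights that finished before the current departure. A minimal pandas sketch, assuming a hypothetical per-flight log with one flight per day and a flag for whether a check was done that morning:

```python
import pandas as pd

# Hypothetical per-flight log for one aircraft
log = pd.DataFrame({
    "Tail#": ["N101"] * 5,
    "Date": pd.to_datetime(["2024-03-01", "2024-03-02", "2024-03-03",
                            "2024-03-04", "2024-03-05"]),
    "Flight_Hours": [2.0, 3.5, 1.5, 4.0, 2.5],               # that day's flight duration
    "Maintenance_Today": [True, False, False, True, False],  # check done that morning
})

log = log.sort_values(["Tail#", "Date"]).reset_index(drop=True)

# Each morning maintenance check starts a new counting segment for that plane
log["Segment"] = log["Maintenance_Today"].astype(int).groupby(log["Tail#"]).cumsum()

# Sum the hours of earlier flights in the segment; shift() excludes today's
# flight, whose duration is not known at departure time
log["Flight_Hours_Since_Maintenance"] = (
    log.groupby(["Tail#", "Segment"])["Flight_Hours"]
       .transform(lambda s: s.shift(fill_value=0.0).cumsum())
)
print(log[["Date", "Flight_Hours_Since_Maintenance"]])
```

The `shift()` is the important part: without it, each row would include the duration of the very flight being predicted.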

Question 3: Could any of these features be a source of data leakage?

Question 4: Is there a missing preprocessing step that could lead to data leakage?

+3 points

Hint: if there is a missing preprocessing step or a problematic column, I do not fix it in the next section; the mistake carries through.

Analysis and Pipelines

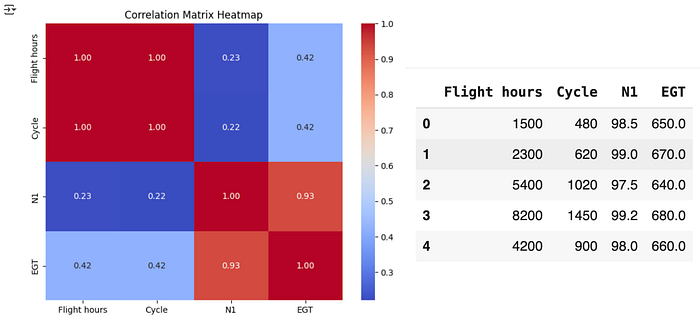

Now we focus our analysis on the numerical columns in df_maintenance. Our data shows high correlation between Cycle Count and Flight Hours, and between N1 fan speed and EGT, so we make a note to use Principal Component Analysis (PCA) to reduce dimensionality.
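The correlation screen described above can be sketched as a small helper that lists strongly correlated column pairs. The 0.9 threshold, the `correlated_pairs` name, and the demo columns are hypothetical; the helper analyses whichever frame you pass to it.

```python
import pandas as pd

def correlated_pairs(frame, threshold=0.9):
    """Return (col_a, col_b, |r|) for each column pair with |Pearson r| above threshold."""
    corr = frame.corr().abs()
    cols = corr.columns
    return [
        (cols[i], cols[j], float(corr.iloc[i, j]))
        for i in range(len(cols))
        for j in range(i + 1, len(cols))  # upper triangle only: each pair once
        if corr.iloc[i, j] > threshold
    ]

# Constructed demo data: b is an exact multiple of a, c is unrelated
demo = pd.DataFrame({"a": [1, 2, 3, 4, 5], "b": [2, 4, 6, 8, 10], "c": [5, 1, 4, 2, 3]})
pairs = correlated_pairs(demo)
print(pairs)
```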

We split our data into training and testing sets, use OneHotEncoder on categorical data, apply StandardScaler, then use PCA to reduce the dimensionality of our data.

```python
# Errors are carried through from the above section
import pandas as pd
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler, OneHotEncoder
from sklearn.decomposition import PCA
from sklearn.compose import ColumnTransformer

n = 10_234

# Train-Test Split
X_train, y_train = df.iloc[:n].drop(columns=['Outcome']), df.iloc[:n]['Outcome']
X_test, y_test = df.iloc[n:].drop(columns=['Outcome']), df.iloc[n:]['Outcome']

# Define preprocessing steps
preprocessor = ColumnTransformer(
    [
        ('cat', OneHotEncoder(handle_unknown='ignore'), ['Maintenance_Type', 'Tail#']),
        ('num', StandardScaler(), ['Flight_Hours_Since_Maintenance', 'Cycle_Count',
                                   'N1_Fan_Speed', 'EGT_Temperature']),
    ],
    sparse_threshold=0,  # always return a dense array so PCA can consume it
)

# Full pipeline with PCA
pipeline = Pipeline([
    ('preprocessor', preprocessor),
    ('pca', PCA(n_components=3)),
])

# Fit and transform data
X_train_transformed = pipeline.fit_transform(X_train)
X_test_transformed = pipeline.transform(X_test)
```

Question 5: Has data leakage taken place?

+1 point

Solutions

Answer 1: Remove all 4 columns from df_flight_outcome and all 8 columns from df_black_box, as this information is only available after landing, not at takeoff, when predictions would be made. Including this post-flight data would create temporal leakage, resulting in an artificially accurate but practically useless model. (12 pts.)

Answer 2: Yes. This is a source of data leakage because the new column encodes information from the file names that would not be available at prediction time. Worse, it could essentially give away the answer by telling the model whether a plane has had an accident. (1 pt.)

Answer 3: Although all listed fields are available before departure, including high‐cardinality identifiers (Tail #, Flight Number) causes entity (ID) leakage — the model simply memorizes “Plane X never crashes” rather than learning genuine maintenance signals. To prevent this memorization leakage, you should either drop those ID columns entirely or use a group‑aware split so that no single plane appears in both train and test. (1 pt.)

Corrected code for Q3 and Q4

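```python
import pandas as pd
from sklearn.model_selection import GroupShuffleSplit

df['Date'] = pd.to_datetime(df['Date'])
df = df.sort_values('Date').reset_index(drop=True)
df = df.drop(columns=['Flight Number'])  # second ID column: drop it (Answer 3)

# Group-aware split so no Tail# appears in both train and test.
# groups must be one label per row, so group on Tail# alone.
# Whole planes are assigned at random, so the two splits overlap in time.
groups = df['Tail#']
gss = GroupShuffleSplit(n_splits=1, test_size=0.25, random_state=42)
train_idx, test_idx = next(gss.split(df, groups=groups))

train_df = df.iloc[train_idx].reset_index(drop=True)
test_df = df.iloc[test_idx].reset_index(drop=True)
```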

Answer 4: If we look carefully, we see that the Date column is not in order: we never sorted the data chronologically. Because the rows are not in time order, taking the first 10,234 rows for training behaves like a random shuffle, so “future” flights end up in the training set and the model learns patterns it would not have when actually predicting. That leak inflates performance metrics and fails to simulate real-world forecasting. (1 pt.)
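Answers 3 and 4 can also be satisfied together without a random group split. A minimal sketch, assuming dropping the ID columns is acceptable (the first option in Answer 3): sort by date, train on the earliest rows, and test on the latest. The `chronological_split` helper and the demo frame are hypothetical.

```python
import pandas as pd

def chronological_split(df, date_col="Date", test_fraction=0.25,
                        id_cols=("Tail#", "Flight Number")):
    """Train on the earliest rows, test on the latest, and drop ID columns."""
    ordered = df.sort_values(date_col, kind="stable").reset_index(drop=True)
    ordered = ordered.drop(columns=[c for c in id_cols if c in ordered.columns])
    cutoff = int(len(ordered) * (1 - test_fraction))
    # Rows sharing the cutoff date could fall on either side of the boundary
    return ordered.iloc[:cutoff], ordered.iloc[cutoff:]

demo = pd.DataFrame({
    "Date": pd.to_datetime(["2024-01-03", "2024-01-01", "2024-01-04", "2024-01-02"]),
    "Tail#": ["N1", "N2", "N1", "N3"],
    "Outcome": [0, 0, 1, 0],
})
train_df, test_df = chronological_split(demo)
print(train_df, test_df, sep="\n\n")
```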

Answer 5: Yes. Data leakage has taken place because we examined the correlation (covariance) matrix of df_maintenance, which included both train and test data, and used it to decide on PCA. (1 pt.)
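A toy example with constructed numbers shows how full-dataset EDA can mislead: here `x` and `y` are uncorrelated in the training rows but move together in the later test rows, so a full-data correlation matrix suggests a relationship that the training data alone does not support.

```python
import pandas as pd

# Constructed data: first four rows are the training period, last four the test period
frame = pd.DataFrame({
    "x": [1, 2, 3, 4, 10, 20, 30, 40],
    "y": [1, 2, 2, 1, 10, 20, 30, 40],
})
n_train = 4
train = frame.iloc[:n_train]

train_corr = train["x"].corr(train["y"])  # what we are allowed to look at
full_corr = frame["x"].corr(frame["y"])   # what the leaky EDA looked at
print(f"train-only correlation: {train_corr:.2f}")
print(f"full-data correlation:  {full_corr:.2f}")
```

The fix is simply ordering: split first, then run correlations and any other exploration on the training rows only.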

Conclusion

Data leakage’s core principle sounds simple — never use information unavailable at prediction time — yet its application proves remarkably elusive. The most dangerous leaks slip through undetected until deployment, transforming promising models into costly failures. True prevention requires not just technical safeguards but a philosophical commitment to experimental integrity. By approaching model development with rigorous skepticism, we transform data leakage from an invisible threat to a manageable challenge.

Key Takeaways:

- Leakage infiltrates through seemingly innocuous channels.

- Prevention demands both technical tools and professional discipline.

- Validation protocols are essential to maintaining real-world model integrity.

- The greatest risk lies in what we don’t recognize until it’s too late.


Note: Article content contains the views of the contributing authors and not Towards AI.