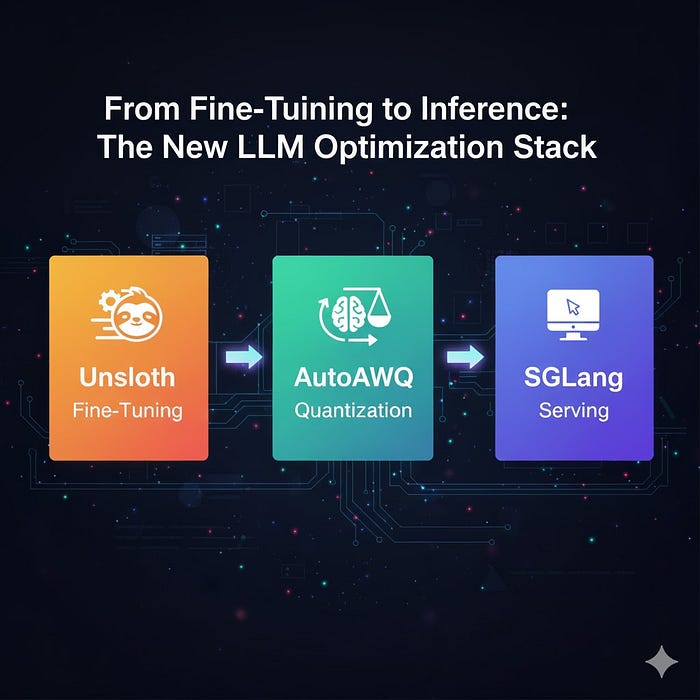

From Fine-Tuning to Inference: The New LLM Optimization Stack with Unsloth, SGLang, and AutoAWQ

Author(s): Ramya Ravi

Originally published on Towards AI.

Training and deploying LLMs remains expensive and resource-intensive, even as the models themselves become more powerful. Recently, a new generation of lightweight AI optimization frameworks has emerged that enables developers to train, compress, and …