From Words to Worlds: Rethinking Embeddings and Ranking in Retrieval

Author(s): Hira Ahmad. Originally published on Towards AI. To choose the right model for semantic search, consider the trade-offs between a bi-encoder’s speed, a cross-encoder’s precision, and ColBERT’s balance of both. Words alone are insufficient to capture communication; the full message is …

Hugging Face Transformers: The Framework Redefined Modern AI

Author(s): Hira Ahmad. Originally published on Towards AI. Introduction: What We See Is Only the Surface. Most people know Hugging Face as a library that helps load models like BERT, GPT, or T5 in a few lines of code. But that’s barely …

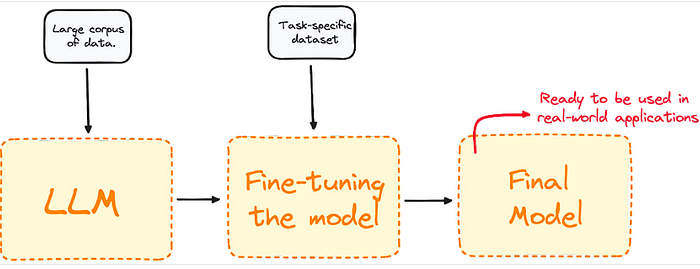

Building the Practical Foundation of Fine-Tuning Large Language Models (LLMs)

Author(s): Hira Ahmad. Originally published on Towards AI. Large Language Models (LLMs) like GPT, LLaMA, and Falcon have changed how machines understand and generate human-like text. Yet their true power emerges not …

Inter-GPU Communication

Author(s): Hira Ahmad. Originally published on Towards AI. Introduction: Every major leap in AI over the past decade has been powered by scaling. Models have grown to billions or even trillions of parameters, datasets span millions of examples, and GPUs are deployed …