Building the Practical Foundation of Fine-Tuning Large Language Models (LLMs)

Last Updated on October 6, 2025 by Editorial Team

Author(s): Hira Ahmad

Originally published on Towards AI.

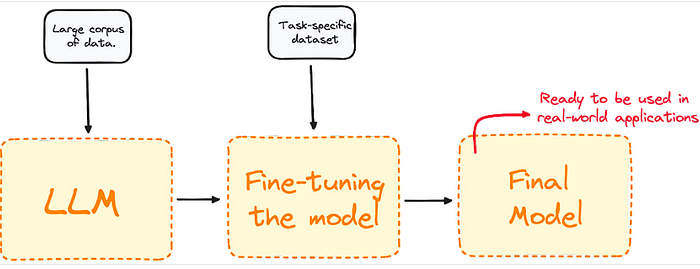

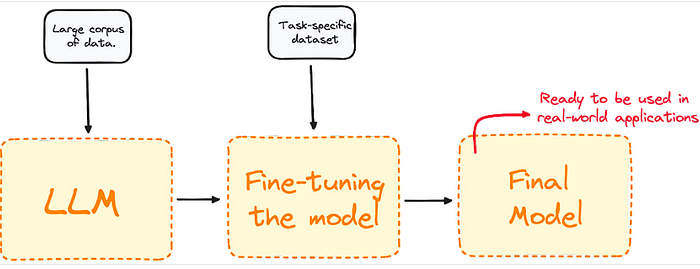

Large Language Models (LLMs) like GPT, LLaMA, and Falcon have changed how machines understand and generate human-like text. Yet much of their practical value comes not from the base model but from fine-tuning: the process of adapting a pretrained model to specialized tasks. Fine-tuning bridges the gap between a general-purpose model and a domain-specific one, enabling customized responses, better contextual understanding, and higher relevance in specialized use cases such as medical diagnosis, legal writing, or research analysis.

The article discusses the significance of fine-tuning large language models (LLMs) to enhance their performance on specialized tasks. It covers the foundational concepts of fine-tuning, proper dataset preparation, environment setup, and a practical code framework for implementation. Additionally, it explores parameter-efficient fine-tuning techniques and the importance of model evaluation beyond accuracy. The discussion culminates in the ethical considerations of AI, emphasizing the need for models to express uncertainty and responsibly navigate the complexities of human understanding.
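To make the idea of parameter-efficient fine-tuning concrete, here is a minimal toy sketch in plain Python. It is not the article's code framework and not how an LLM is actually trained. It assumes a hypothetical "pretrained" linear model whose weights stay frozen, and adapts it to new data by training a single extra bias parameter, a simple stand-in for the broader family of parameter-efficient techniques. All names (`FROZEN_WEIGHTS`, `fine_tune_bias`, `domain_examples`) and all numbers are illustrative assumptions.

```python
# Toy illustration only: a frozen "pretrained" linear model adapted to new
# data by training one extra parameter (a bias). Names and data are hypothetical.

FROZEN_WEIGHTS = [2.0, -1.0, 0.5]  # stands in for pretrained weights; never updated


def base_predict(x):
    """Prediction of the frozen, general-purpose model."""
    return sum(w * xi for w, xi in zip(FROZEN_WEIGHTS, x))


def mean_squared_error(examples, bias):
    """Average squared error of the frozen model plus the trainable bias."""
    return sum((base_predict(x) + bias - y) ** 2 for x, y in examples) / len(examples)


def fine_tune_bias(examples, lr=0.1, epochs=100):
    """Learn a single bias term by gradient descent on mean squared error."""
    bias = 0.0
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to the bias.
        grad = sum(2 * (base_predict(x) + bias - y) for x, y in examples) / len(examples)
        bias -= lr * grad
    return bias


# Hypothetical "domain" data: the frozen model's output shifted by +3.
inputs = [[1.0, 0.0, 2.0], [0.5, 1.5, -1.0], [-2.0, 1.0, 0.0], [3.0, 2.0, 1.0]]
domain_examples = [(x, base_predict(x) + 3.0) for x in inputs]

error_before = mean_squared_error(domain_examples, bias=0.0)
learned_bias = fine_tune_bias(domain_examples)
error_after = mean_squared_error(domain_examples, bias=learned_bias)

print(f"trainable parameters: 1 of {len(FROZEN_WEIGHTS) + 1}")
print(f"error before: {error_before:.4f}, error after: {error_after:.6f}")
```

The point of the sketch is the division of labor: the general-purpose weights are left untouched, and only a small number of new parameters are fitted to the specialized data. Comparing the error before and after adaptation also hints at why evaluation on the target domain matters.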

Read the full blog for free on Medium.

