I Directed AI Agents to Build a Tool That Stress-Tests Incentive Designs. Here’s What It Found.

Last Updated on April 10, 2026 by Editorial Team

Author(s): Selfradiance

Originally published on Towards AI.

I don’t write code. I have zero programming experience. What I do is direct AI coding agents — Claude Code, Codex — to build open-source tools, and then I test them until they either work or break in interesting ways.

Agent 006 is the sixth tool in that series, and it’s the one that surprised me. It takes a natural-language description of an economic system — a set of rules about who can do what, with what resources, under what constraints — and runs AI-generated adversarial agents against those rules to see what fails. Not a formal verifier. Not a replacement for game theory. An exploratory stress-test that can surface boundary conditions and failure modes before you commit to a design.

Here’s what it found, how the pipeline works, and where it falls short.

How It Works

You write a spec in plain English — a text file describing your scenario’s resources, actions, constraints, and win conditions. Here’s the public goods spec from the repo (trimmed for length):

There are 5 agents. Each round, each agent decides how much of their private balance to contribute to a public fund — anywhere from 0 to their current balance. The fund is multiplied by 1.5 and distributed equally. Agents start with 100 tokens. The game runs for 30 rounds. If total contributions drop below 5 tokens for 3 consecutive rounds, the system collapses.

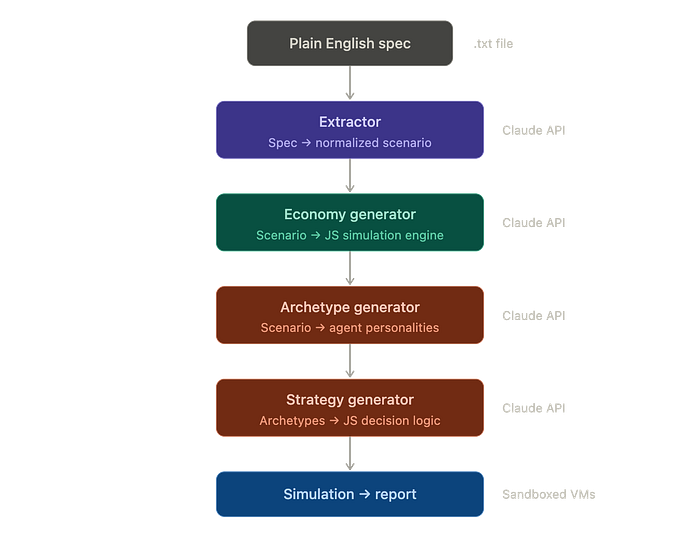
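To make the payoff arithmetic in that spec concrete, here is a minimal sketch of one round. The function name `playRound` and its shape are my own illustration, not code from the Agent 006 repo; only the 1.5 multiplier, the equal split, and the 0-to-balance rule come from the spec.

```typescript
// Hypothetical helper, not taken from the Agent 006 codebase.
// Plays one simultaneous-move round of the public goods game from the spec.
const MULTIPLIER = 1.5; // from the spec

function playRound(balances: number[], contributions: number[]): number[] {
  if (balances.length !== contributions.length) {
    throw new Error("one contribution per agent is required");
  }
  contributions.forEach((c, i) => {
    // Spec rule: contribute anywhere from 0 to the current balance.
    if (c < 0 || c > balances[i]) {
      throw new Error(`agent ${i}: contribution ${c} is outside [0, ${balances[i]}]`);
    }
  });
  const fund = contributions.reduce((sum, c) => sum + c, 0);
  const share = (fund * MULTIPLIER) / balances.length; // equal split of the multiplied fund
  return balances.map((b, i) => b - contributions[i] + share);
}

// Four agents contribute 50 tokens each; one free rider contributes nothing.
const next = playRound([100, 100, 100, 100, 100], [50, 50, 50, 50, 0]);
console.log(next); // contributors end at 110, the free rider at 160
```

The fund is 200, grows to 300, and pays 60 to everyone, so the free rider comes out ahead in the round even though total wealth rises from 500 to 600. That tension is what the adversarial archetypes get to exploit.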

The pipeline then makes four Claude API calls in sequence: an extractor structures your spec into a normalized scenario (and flags ambiguities for your review), an economy generator produces a sandboxed JavaScript simulation engine, an archetype generator creates adversarial agent personalities tailored to your specific rules, and a strategy generator writes executable decision logic for each agent. Then the simulation runs for N rounds, checking invariants along the way. A reporter analyzes the results.
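As an illustration of what checking an invariant along the way could look like, here is a sketch of the collapse rule from the public goods spec. The function `findCollapseRound` is a hypothetical name of mine; the generated engine may structure this check quite differently.

```typescript
// Hypothetical invariant check; the thresholds come from the public goods spec.
const COLLAPSE_THRESHOLD = 5; // tokens
const COLLAPSE_STREAK = 3;    // consecutive rounds

// Returns the 1-based round in which the economy collapses, or null if it survives.
function findCollapseRound(totalContributionsPerRound: number[]): number | null {
  let streak = 0;
  for (let round = 0; round < totalContributionsPerRound.length; round++) {
    // A round at or above the threshold resets the streak.
    streak = totalContributionsPerRound[round] < COLLAPSE_THRESHOLD ? streak + 1 : 0;
    if (streak >= COLLAPSE_STREAK) return round + 1;
  }
  return null;
}

console.log(findCollapseRound([120, 4, 3, 40, 2, 1, 0])); // 7
console.log(findCollapseRound([120, 4, 3, 40, 2, 1]));    // null
```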

One CLI command: npx tsx src/cli.ts --spec my-scenario.txt

A caveat worth stating up front: the generated economy is Claude’s best interpretation of your spec, not a guaranteed-faithful implementation. Results are non-deterministic — the same spec can produce different outcomes across runs. And the tool currently handles only simultaneous-move, single-action-per-round games. This is an exploratory tool for surfacing issues early, not a validation framework.

The Public Goods Finding

Notice what the spec above doesn’t say: it never states a fixed maximum contribution per round. It says agents can contribute “anywhere from 0 to their current balance.” That ambiguity is deliberate; it’s the kind of thing a real spec might leave underspecified.

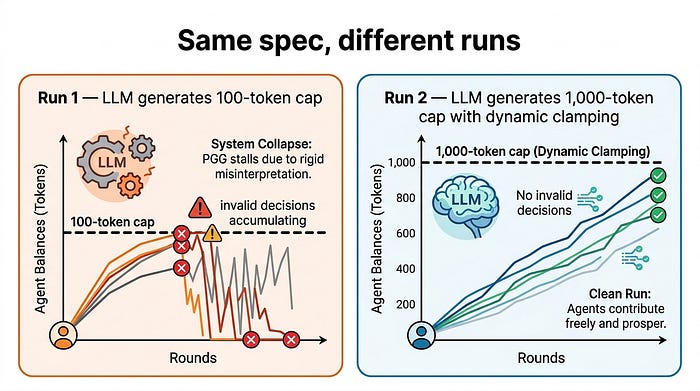

When I first ran this scenario, the LLM extractor interpreted “anywhere from 0 to their current balance” and generated a hard cap of 100 tokens per contribution, matching the starting balance but not scaling with wealth. The economy code enforced that cap rigidly.

Here’s what happened: as agents’ balances grew past 100 tokens (thanks to the multiplier compounding wealth over rounds), they tried to contribute more than the generated cap allowed. The system rejected those contributions as invalid — 25 invalid decisions across 30 rounds. Agents couldn’t participate in the economy they were succeeding in. The reporter flagged this as a parameter design flaw: the contribution cap breaks as agent wealth grows.

The interesting part: when I ran the same spec again weeks later, the extractor made a different choice — it set the cap at 1,000 tokens and added dynamic clamping to agent balances. Zero invalid decisions. Clean run. Same spec, different interpretation, different outcome.
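The difference between the two interpretations can be sketched roughly as follows. This is my own reconstruction for illustration, not the code either run generated: the names are hypothetical, and I am reading "dynamic clamping" as clamping each request to the agent's current balance.

```typescript
// Hypothetical reconstruction of the two interpretations; not the generated code itself.
interface Contribution { agentId: number; amount: number }

const FIXED_CAP = 100;   // first run: cap pinned to the starting balance
const RAISED_CAP = 1000; // later run: higher cap, combined with clamping

// First-run style: anything above the fixed cap is rejected as an invalid decision.
function isValidUnderFixedCap(c: Contribution, balance: number): boolean {
  return c.amount >= 0 && c.amount <= balance && c.amount <= FIXED_CAP;
}

// Later-run style: the request is clamped into range instead of being rejected.
function clampContribution(c: Contribution, balance: number): number {
  return Math.max(0, Math.min(c.amount, balance, RAISED_CAP));
}

// An agent whose balance has grown to 140 tries to contribute 120.
const wealthyAgent = { balance: 140, request: { agentId: 2, amount: 120 } };
console.log(isValidUnderFixedCap(wealthyAgent.request, wealthyAgent.balance)); // false
console.log(clampContribution(wealthyAgent.request, wealthyAgent.balance));    // 120
console.log(clampContribution({ agentId: 2, amount: 200 }, 140));              // 140
```

Rejecting turns a successful agent's reasonable request into an invalid decision, while clamping never produces an invalid decision at all. That also means clamping can hide the fact that the spec never said what the limit should be.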

This is non-determinism working as designed. The spec had an ambiguity. One run surfaced it as a design flaw. Another run resolved it silently. Both are useful information — the first tells you your spec has a gap, the second shows one way to close it.

I wrote that spec and didn’t catch the ambiguity. The tool did — on one run. That’s what a pre-flight stress test does: surface the issues that are invisible at design time. If you’re prototyping a token economy, a bonus structure, or a resource-sharing policy, running it through this kind of check multiple times is cheaper than finding the boundary condition in production.

The Ultimatum Bug: What the Investigation Loop Looks Like

The second scenario worth discussing is the ultimatum game — not because the result was impressive, but because it shows what happens when the tool’s own generated code fails.

The spec: each round, a proposer offers a split of a pot. A responder accepts or rejects. Agents rotate roles. Standard ultimatum game.

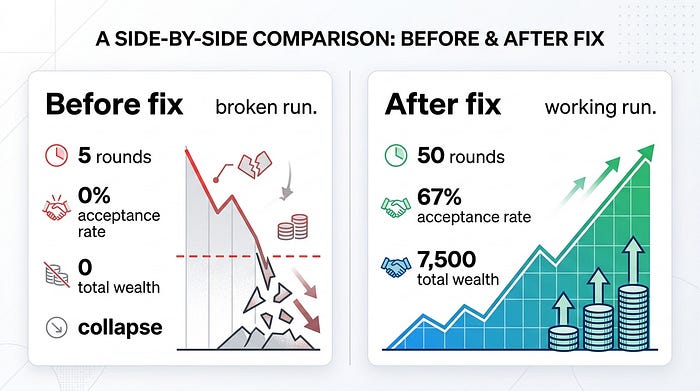

First run: total collapse after 5 of 50 rounds. Zero payouts. 0% acceptance rate. Every agent failed, including the cooperative ones.

When I dug into the generated economy code, I found the problem: the tick() function swapped proposer/responder roles before processing decisions. Every agent's decision was evaluated against the wrong role. Every decision was silently discarded. The cooperative agents were doing everything right, but the economy couldn't see it.

This is worth calling out because it’s a failure mode I hadn’t expected from LLM-generated code: execution-order assumptions that aren’t in the spec can go silently wrong. The generated economy wasn’t violating any invariants; it was just processing things in a sequence that made all decisions invisible. No error, no crash, just silent failure. If you’re using LLMs to generate simulation logic, this is one bug pattern worth watching for.

The fix was a one-line prompt constraint: process decisions against current roles before making state transitions. After the fix, the re-run completed all 50 rounds — 67% acceptance rate, 7,500 total wealth. The Hardliner archetype dragged down efficiency while cooperative agents absorbed losses to keep the market alive.
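Here is a stripped-down sketch of the ordering problem and the fix. It is a hypothetical two-agent reconstruction rather than the generated economy code: the names `tickBuggy` and `tickFixed` and the `State` shape are mine, and the real `tick()` did considerably more than count decisions.

```typescript
// Hypothetical reconstruction of the ordering bug; not the generated economy code.
type Role = "proposer" | "responder";
type Decision =
  | { role: "proposer"; offer: number }
  | { role: "responder"; accept: boolean };

interface State { roles: Role[]; processed: number; discarded: number }

function swapRoles(roles: Role[]): Role[] {
  return roles.map((r): Role => (r === "proposer" ? "responder" : "proposer"));
}

function processDecisions(state: State, decisions: Decision[]): void {
  decisions.forEach((d, i) => {
    // A decision only counts if it matches the role the agent holds right now.
    if (d.role === state.roles[i]) state.processed++;
    else state.discarded++; // dropped without an error: the silent failure
  });
}

function tickBuggy(state: State, decisions: Decision[]): void {
  state.roles = swapRoles(state.roles); // state transition first
  processDecisions(state, decisions);   // every decision now fails the role check
}

function tickFixed(state: State, decisions: Decision[]): void {
  processDecisions(state, decisions);   // evaluate against current roles
  state.roles = swapRoles(state.roles); // then rotate for the next round
}

const decisions: Decision[] = [
  { role: "proposer", offer: 40 },
  { role: "responder", accept: true },
];
const buggy: State = { roles: ["proposer", "responder"], processed: 0, discarded: 0 };
const fixed: State = { roles: ["proposer", "responder"], processed: 0, discarded: 0 };
tickBuggy(buggy, decisions);
tickFixed(fixed, decisions);
console.log(buggy.discarded, fixed.discarded); // 2 0
```

Both versions run without throwing, which is the point: the only visible symptom of the buggy ordering is a counter nobody was looking at.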

This kind of tool requires an investigation loop: run, observe anomalous results, inspect the generated code, identify the root cause, fix the generator, re-run. You don’t run it once and trust the output. You use it to poke at your design and see what falls over.

Under the Hood

The generated economy and strategy code both run in sandboxed VM contexts — separate from each other and from the main process. The sandbox uses Node 22 permission flags, global nullification (dangerous globals deleted before generated code runs), IPC-only communication, and string-level pre-screening. These are risk-reduction layers, not a bulletproof containment guarantee — but they’re the same architecture from Agent 004, which survived 300+ adversarial red-team tests (blocked unauthorized file access, rejected malformed inputs, contained sandbox escape attempts).

The economy VM persists across rounds (it holds game state). The strategy VM gets a fresh context for every execution. All data crossing VM boundaries is serialized JSON — no shared object references between contexts.
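The "serialized JSON only" rule is easy to illustrate in isolation. The sketch below does not set up real VM contexts; it only shows why a JSON round-trip prevents generated strategy code from reaching back into the economy's state. The names `crossBoundary` and `greedyStrategy` are hypothetical.

```typescript
// Illustration of the serialization rule only; no real VM contexts are created here.
interface EconomyState { round: number; balances: number[] }

// Hypothetical helper: everything that crosses a boundary is serialized and re-parsed,
// so the receiver gets a fresh copy with no references back to the original.
function crossBoundary<T>(value: T): T {
  return JSON.parse(JSON.stringify(value)) as T;
}

const economyState: EconomyState = { round: 3, balances: [110, 110, 160] };

// A misbehaving strategy that tries to edit the state it was handed.
function greedyStrategy(view: EconomyState): { contribute: number } {
  view.balances[0] = 1_000_000; // mutates only its private copy
  return { contribute: 0 };
}

const decision = crossBoundary(greedyStrategy(crossBoundary(economyState)));
console.log(economyState.balances[0]); // 110
console.log(decision.contribute);      // 0
```

A side effect of this design is that anything JSON cannot represent, such as functions or `undefined` fields, is dropped at the boundary.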

The tool also supports multi-run campaigns where agents adapt strategies between runs based on observation packets (their own results plus anonymized peer data).
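One way to picture an observation packet is as a per-agent view in which peer identities are stripped out. The field names and the `buildObservationPacket` function below are assumptions for illustration; the actual packet format in the repo may differ.

```typescript
// Hypothetical packet shape; field names are assumptions, not the repo's format.
interface RunResult { agentId: string; archetype: string; finalBalance: number }

interface ObservationPacket {
  own: RunResult;                    // the agent's full result
  peers: { finalBalance: number }[]; // peer outcomes with ids and archetypes removed
}

function buildObservationPacket(agentId: string, results: RunResult[]): ObservationPacket {
  const own = results.find((r) => r.agentId === agentId);
  if (!own) throw new Error(`unknown agent: ${agentId}`);
  const peers = results
    .filter((r) => r.agentId !== agentId)
    .map((r) => ({ finalBalance: r.finalBalance }))
    // Sort so that list position does not reveal which peer is which.
    .sort((a, b) => b.finalBalance - a.finalBalance);
  return { own, peers };
}

const packet = buildObservationPacket("a2", [
  { agentId: "a1", archetype: "Hardliner", finalBalance: 90 },
  { agentId: "a2", archetype: "Cooperator", finalBalance: 140 },
  { agentId: "a3", archetype: "Free Rider", finalBalance: 210 },
]);
console.log(packet.peers); // [ { finalBalance: 210 }, { finalBalance: 90 } ]
```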

301 tests across 20 test files. Three versions shipped (v0.1.0 through v0.3.1). During the design phase, the architecture spec went through two rounds of review by three separate AI systems. One concrete result: all three independently flagged that the economy code and strategy code needed separate VM contexts — a finding that changed the sandbox architecture before a single line was written.

Limitations

The generated economy is Claude’s interpretation of your spec — it might not capture what you intended. For high-stakes designs, review the generated code (--verbose shows it). The AI agents embody archetypes, not Nash equilibria — a game theorist would find issues these agents miss. This tool complements formal analysis and traditional simulation; it doesn't replace either.

Results vary across runs. That’s a feature for exploration and a limitation for reproducibility.

Why I Built This

I’ve been building AgentGate, an open-source economic accountability layer for AI agents — a system where agents post collateral before acting and face real consequences when they fail.

If AI agents are going to operate inside economic systems — bonus structures, markets, governance models, resource-sharing mechanisms — someone should be testing what happens when they do. Agent 006 is one way to do that: describe an incentive design in natural language, throw adversarial agents at it, and see what surfaces. It’s not the only way, and it’s not sufficient on its own. But it’s a starting point, and it’s open source.

Try It

The repo is here: github.com/selfradiance/agentgate-incentive-wargame

You need Node.js 22+ and an Anthropic API key. Clone the repo, add your key to .env, and run:

npx tsx src/cli.ts --spec examples/public-goods.txt --yes

Start with the public goods example. Then try your own — if you’re designing a token economy, a bonus structure, or a resource allocation policy, write the rules in a text file and see what the agents find. The tool is most useful for early-stage mechanism prototyping where you want a quick smoke test before investing in formal analysis.

James Toole directs AI coding agents to build open-source tools with zero personal coding experience. This is the sixth project in the AgentGate series. Previous work: AgentGate, Agent 004 (red team simulator), Agent 005 (recursive code reviewer).
