LangChain vs LangGraph: Pipelines vs Processes in Agentic Systems

Last Updated on February 19, 2026 by Editorial Team

Author(s): Vahe Sahakyan

Originally published on Towards AI.

The first time you try to build a real agentic system, something breaks.

Not a bug.

An assumption.

At first, everything looks fine. You chain together a few steps. The model reasons. A tool gets called. An answer comes back. The system feels agentic.

Then you add one more requirement.

You need the system to retry when something fails.

Or branch based on intermediate results.

Or remember what happened earlier.

Or pause for human approval.

Or explain why it took a particular path.

That’s when the cracks appear.

Suddenly, execution is no longer a clean sequence of steps. State starts leaking between prompts. Control logic hides inside model outputs. Retries turn into ad-hoc loops. Debugging becomes guesswork.

At that point, the problem is no longer about prompting.

It is about orchestration.

This is where architectural choices begin to matter.

Frameworks like LangChain and LangGraph both help you build LLM-powered systems, but they embody very different assumptions about how execution should be modeled. Understanding that difference is essential if you want to move beyond impressive demos toward durable, inspectable agentic systems.

Because the moment an agent must operate over time — handling branching, retries, state, and intervention — you are no longer designing a prompt.

You are designing a process.

When Agent Logic Becomes Orchestration

In the previous article, we examined what makes a system agentic: goal pursuit, reasoning loops, tool use, and structured autonomy.

But understanding agent behavior is only the beginning.

The real challenge appears when that behavior must operate inside a larger system.

As soon as an agent is expected to persist over time, execution can no longer be treated as a single reasoning loop. Multiple steps must be coordinated. State must be maintained across interactions. Decisions must be revisited. Failures must be handled without losing context. Human intervention must be possible without breaking execution.

At this point, the problem is no longer about what the agent can reason about.

It is about how the system runs.

This is where agent logic becomes orchestration.

You begin to encounter questions that cannot be answered inside a prompt or a single agent loop:

- How is execution flow controlled across multiple steps?

- How is state preserved and updated over time?

- How are retries, branching, and validation handled explicitly?

- How can execution pause for human input and then resume safely?

- How do you inspect what actually happened after the fact?

These are not model questions. They are system questions.

Once agentic behavior is embedded in a real application, coordination becomes unavoidable. Execution must be managed as a process, not as a sequence of isolated calls. Control flow must be explicit. State must be shared deliberately. Decisions must be inspectable.

This is the architectural boundary where simple pipelines begin to break down.

And it is where the choice of execution model starts to matter.

LangChain: Linear Composition and Rapid Prototyping

LangChain was designed to make it easy to compose LLM-powered components into working applications.

Its core abstraction is the chain: a sequence of steps executed in a defined order.

At its simplest, a LangChain workflow looks like:

Do A → then B → then C → produce output

This model works well when execution flow is known in advance.

A common example is retrieval-augmented generation (RAG):

- retrieve relevant documents

- process or summarize them

- generate an answer

Each step is implemented as a modular component — document loaders, text splitters, prompts, model calls, memory buffers — connected in a linear or directed acyclic graph (DAG) structure.
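To make the shape of a chain concrete, here is a minimal sketch in plain Python. It does not use the LangChain API; `make_pipeline`, `retrieve`, `summarize`, `generate`, and the `DOCS` dictionary are hypothetical stand-ins for a retriever, a summarizer, and a model call. The point is only the execution shape: each step runs once and hands its output to the next.

```python
from functools import reduce
from typing import Callable

Step = Callable[[dict], dict]


def make_pipeline(*steps: Step) -> Step:
    """Compose steps so each one runs exactly once, in the given order."""
    def run(payload: dict) -> dict:
        return reduce(lambda acc, step: step(acc), steps, payload)
    return run


# Hypothetical in-memory "document store" standing in for a real retriever.
DOCS = {
    "langgraph": "LangGraph models execution as a graph with shared state.",
    "langchain": "LangChain composes components into chains.",
}


def retrieve(payload: dict) -> dict:
    # Keyword match instead of vector search, purely for illustration.
    question = payload["question"].lower()
    hits = [text for key, text in DOCS.items() if key in question]
    return {**payload, "documents": hits}


def summarize(payload: dict) -> dict:
    # A real system would call a model here; this just joins the documents.
    return {**payload, "summary": " ".join(payload["documents"])}


def generate(payload: dict) -> dict:
    return {**payload, "answer": f"Based on the docs: {payload['summary']}"}


rag_pipeline = make_pipeline(retrieve, summarize, generate)
result = rag_pipeline({"question": "What is LangGraph?"})
print(result["answer"])
```

Nothing in `make_pipeline` can skip a step, repeat one, or choose between two. That is exactly why it is easy to read.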

LangChain excels because it optimizes for speed and clarity:

- rapid prototyping of LLM applications

- a rich ecosystem of integrations and templates

- minimal boilerplate for common workflows

- broad compatibility across models, tools, and providers

For many use cases, this is exactly what you want.

The key assumption behind the chain abstraction is forward-moving execution. Each step runs once, passes its output to the next, and progresses toward a final result. State is typically passed implicitly, as one step's output becoming the next step's input, and control flow is largely predetermined.

As long as execution follows that shape, LangChain remains a strong fit.

However, when workflows must adapt at runtime — retrying steps, branching based on intermediate results, revisiting earlier decisions, or persisting state across interactions — that underlying assumption starts to matter.

At that point, the challenge is no longer composing capabilities.

It is controlling execution.

LangChain is best understood as a high-level composition layer for LLM-powered functionality. It makes building pipelines fast and expressive, but it is not designed to model long-running, stateful processes where the execution path itself is dynamic.

That distinction becomes important as systems move from bounded workflows toward agentic behavior.

LangGraph: Modeling Processes Instead of Pipelines

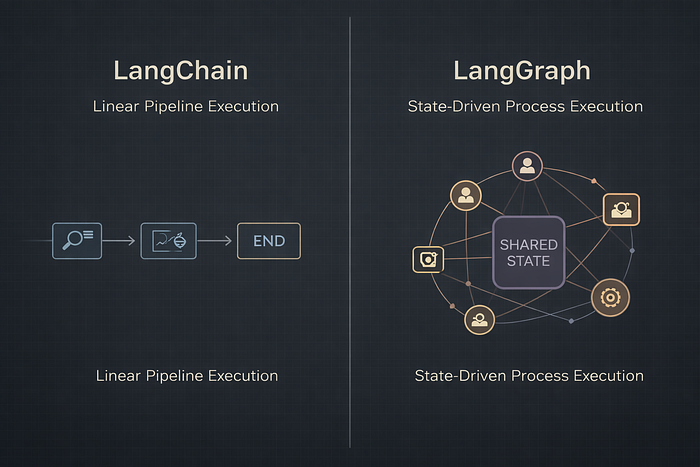

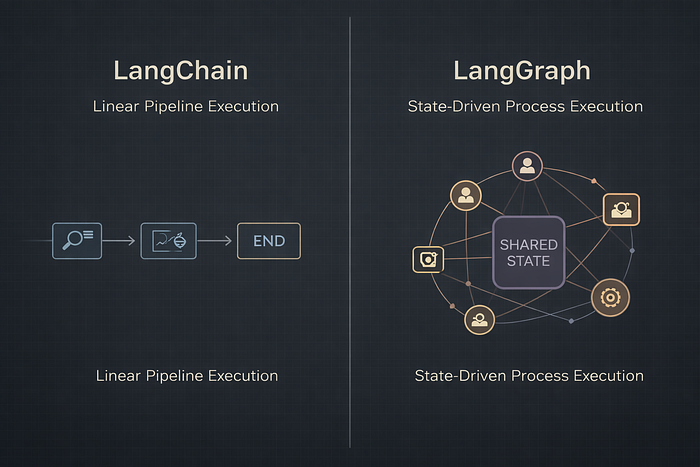

Where LangChain models workflows as pipelines, LangGraph models them as processes.

Instead of chaining steps in a predefined order, LangGraph represents execution as a graph composed of:

- nodes — units of computation (LLM calls, tools, or entire agents)

- edges — transitions that determine how execution flows

- state — shared, persistent memory accessible to all nodes

The explicit state layer is the defining difference.

In LangGraph, execution is not governed by a fixed sequence of steps. It is routed dynamically based on the current state of the system. Each node reads from state, writes updates to it, and routing decisions are made by inspecting that shared context.
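The read-update-route cycle described above can be sketched in a few lines of plain Python. This is an illustration of the idea, not the LangGraph API: `run_graph`, the `END` sentinel, and the three example nodes are assumptions made for this sketch.

```python
from typing import Callable, Dict

State = dict
Node = Callable[[State], dict]    # reads state, returns a partial update
Router = Callable[[State], str]   # inspects state, names the next node

END = "END"  # sentinel meaning "stop execution"


def run_graph(nodes: Dict[str, Node], routers: Dict[str, Router],
              start: str, state: State, max_steps: int = 20) -> State:
    """Run nodes one at a time, choosing each next node from the current state."""
    current, steps = start, 0
    while current != END:
        if steps >= max_steps:
            raise RuntimeError("step limit reached before END")
        state = {**state, **nodes[current](state)}   # node writes its update
        current = routers[current](state)            # routing reads shared state
        steps += 1
    return state


# Hypothetical nodes for a small support assistant.
def classify(state: State) -> dict:
    intent = "refund" if "refund" in state["message"].lower() else "question"
    return {"intent": intent}


def answer(state: State) -> dict:
    return {"reply": "Here is the information you asked for."}


def escalate(state: State) -> dict:
    return {"reply": "Forwarding to a human agent."}


nodes = {"classify": classify, "answer": answer, "escalate": escalate}
routers = {
    "classify": lambda s: "escalate" if s["intent"] == "refund" else "answer",
    "answer": lambda s: END,
    "escalate": lambda s: END,
}

final = run_graph(nodes, routers, "classify", {"message": "I want a refund"})
print(final["reply"])
```

The nodes never call each other. Which node runs next is decided only by the routers, and only from what is in the shared state.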

This changes the shape of execution.

Workflows can now:

- loop back to earlier steps

- branch based on intermediate results

- pause and resume execution

- incorporate human-in-the-loop checkpoints

- persist state across interactions or sessions

- be inspected, replayed, or rewound during debugging
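As one example from this list, looping back to an earlier step can be expressed as an ordinary routing decision. The sketch below is plain Python rather than LangGraph code. The `draft` and `validate` nodes, the `max_attempts` field, and the `run` helper are hypothetical names chosen for illustration.

```python
END = "END"


def draft(state: dict) -> dict:
    attempt = state["attempts"] + 1
    # Hypothetical generator: each attempt produces a slightly longer draft.
    return {"attempts": attempt, "draft": "word " * attempt}


def validate(state: dict) -> dict:
    return {"valid": len(state["draft"].split()) >= state["min_words"]}


def give_up(state: dict) -> dict:
    return {"error": "validation failed after retries"}


def route_after_validate(state: dict) -> str:
    if state["valid"]:
        return END
    if state["attempts"] >= state["max_attempts"]:
        return "give_up"
    return "draft"  # loop back to an earlier node


NODES = {"draft": draft, "validate": validate, "give_up": give_up}
EDGES = {
    "draft": lambda s: "validate",
    "validate": route_after_validate,
    "give_up": lambda s: END,
}


def run(state: dict, start: str = "draft"):
    """Execute until END, recording the path taken for later inspection."""
    current, path = start, []
    while current != END:
        path.append(current)
        state = {**state, **NODES[current](state)}
        current = EDGES[current](state)
    return state, path


final, path = run({"attempts": 0, "min_words": 3, "max_attempts": 5})
print(path)
```

The retry budget lives in state (`attempts`, `max_attempts`) and the loop lives in one routing function, so the path that was actually taken can be read back from `path` afterwards.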

A useful way to frame the difference is this:

LangChain asks:

What is the next step in the pipeline?

LangGraph asks:

Given the current state, where should execution go now?

That shift — from sequence-based execution to state-driven routing — is what makes LangGraph well-suited for long-running, adaptive, and multi-step systems.

The trade-off is intentional complexity. Modeling nodes, edges, and shared state requires more upfront architectural design. But that structure becomes an asset as systems grow in autonomy, duration, and responsibility.

LangGraph is not optimized for speed of prototyping.

It is optimized for clarity of execution.

When workflows must adapt over time, revisit earlier decisions, or coordinate multiple roles, modeling the system as a process rather than a pipeline aligns more naturally with the problem.

This is not about adding complexity.

It is about matching execution structure to execution reality.

A Concrete Comparison: Two Problems, Two Architectures

Abstract architectural differences are easy to describe. They become clearer when you look at how execution actually unfolds.

Consider two assistants solving related problems — but with very different execution shapes.

Scenario 1: A Sequential Assistant (LangChain-Friendly)

Imagine an assistant that:

- fetches documentation

- summarizes it

- answers user questions about the content

The execution path is known in advance. Each step runs once and feeds directly into the next. There is no need to revisit earlier decisions, branch conditionally, or maintain evolving state across interactions.

This is a bounded workflow.

A linear chain mirrors the structure of the problem: predictable, forward-moving, and finite. Execution begins, progresses through a fixed sequence, and terminates with a result.

In this setting, a chain-based architecture is not a compromise — it is an accurate model of execution. LangChain fits naturally because it optimizes for composing well-defined steps into a clean, readable pipeline.

The abstraction holds because the problem itself is linear.

Scenario 2: A Task Management Agent (LangGraph-Friendly)

Now consider an assistant that:

- accepts user requests

- adds and updates tasks

- tracks progress over time

- summarizes current state

- responds differently depending on task status

Here, the execution path is not predetermined.

Users may issue commands in any order. State evolves continuously. Each decision depends on what has already happened, not on a fixed sequence of steps.

This is not a longer pipeline.

It is a different execution model.

The core challenge is no longer sequencing — it is control flow.

Execution must route dynamically based on shared state. The system may return repeatedly to a central decision point. State must persist across interactions. Behavior must adapt without assuming a predefined order.

A graph-based architecture aligns naturally with this shape of execution. Each step reads and updates shared state, and routing decisions are made explicitly based on that evolving context.
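A toy version of this task assistant shows the shape. It is a plain-Python sketch under simplifying assumptions: commands are parsed from a fixed prefix instead of by a model, and `TaskState`, `HANDLERS`, and `handle` are hypothetical names rather than part of any framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class TaskState:
    """Shared state that survives across user interactions."""
    tasks: Dict[str, str] = field(default_factory=dict)   # task name -> status
    log: List[Tuple[str, str]] = field(default_factory=list)


def add_task(state: TaskState, name: str) -> str:
    state.tasks[name] = "open"
    return f"Added '{name}'."


def complete_task(state: TaskState, name: str) -> str:
    # The response depends on what has already happened to this task.
    if name not in state.tasks:
        return f"No task named '{name}'."
    if state.tasks[name] == "done":
        return f"'{name}' is already done."
    state.tasks[name] = "done"
    return f"Completed '{name}'."


def summarize(state: TaskState, _: str) -> str:
    open_count = sum(1 for status in state.tasks.values() if status == "open")
    return f"{open_count} open, {len(state.tasks) - open_count} done."


HANDLERS = {"add": add_task, "done": complete_task, "summary": summarize}


def handle(state: TaskState, request: str) -> str:
    """Central decision point: every request returns here to be routed."""
    command, _, argument = request.partition(" ")
    handler = HANDLERS.get(command)
    reply = handler(state, argument.strip()) if handler else f"Unknown command '{command}'."
    state.log.append((request, reply))
    return reply


state = TaskState()
requests = ["add write report", "summary", "done write report",
            "done write report", "summary"]
replies = [handle(state, request) for request in requests]
print(replies)
```

The same request, `done write report`, produces two different replies because the second one is evaluated against state left behind by the first. No fixed ordering of steps is assumed anywhere.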

The system is not executing a plan.

It is managing a process.

Why the Difference Matters

The contrast between these two scenarios is not about complexity, intelligence, or autonomy.

It is about the shape of execution.

When a problem can be expressed as “do A, then B, then C,” a pipeline is sufficient. When execution must respond to accumulated context and decide what should happen next, the abstraction must change.

Architecture should reflect control flow.

When it doesn’t, complexity leaks into places it doesn’t belong — inside prompts, ad-hoc conditionals, or implicit state.

This is the boundary where pipelines stop being enough.

Architectural Trade-offs

The distinction between LangChain and LangGraph is architectural.

It is not about maturity, popularity, or chronology. It is about how execution is modeled.

Both frameworks are designed to solve real problems. They simply make different assumptions about what execution should look like — and those assumptions carry consequences.

LangChain: Best for Structured Pipelines

LangChain is a strong fit when:

- the workflow is well-defined and mostly linear

- execution progresses forward without revisiting earlier steps

- state does not need to persist across sessions

- rapid iteration and ecosystem integrations matter

Its strengths follow directly from these assumptions:

- fast development and prototyping

- a rich ecosystem of reusable components

- minimal setup for common workflows

- clean composition of LLM-powered capabilities

As long as the workflow can be expressed as a bounded sequence, the abstraction holds.

Limitations appear when execution itself becomes dynamic. Persistent state, retries, conditional routing, long-running processes, and deep observability are not core concerns of the chain model. Supporting them typically requires additional orchestration layered on top.
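A small sketch of what that layering tends to look like, in plain Python with hypothetical names (`retry`, `fetch`, `summarize`, `pipeline`, and a placeholder URL). The `fetch` step is rigged to fail twice so the behavior is deterministic.

```python
def retry(step, attempts: int = 3):
    """Wrap a step so it is re-run on failure. The pipeline itself never sees this."""
    def wrapped(payload: dict) -> dict:
        last_error = None
        for _ in range(attempts):
            try:
                return step(payload)
            except RuntimeError as error:
                last_error = error
        raise RuntimeError(f"{step.__name__} failed after {attempts} attempts") from last_error
    return wrapped


calls = {"fetch": 0}  # side channel used only to make the failure deterministic


def fetch(payload: dict) -> dict:
    calls["fetch"] += 1
    if calls["fetch"] < 3:
        raise RuntimeError("temporary failure")
    return {**payload, "text": "raw document"}


def summarize(payload: dict) -> dict:
    return {**payload, "summary": payload["text"].upper()}


def pipeline(payload: dict) -> dict:
    for step in (retry(fetch), summarize):
        payload = step(payload)
    return payload


result = pipeline({"url": "https://example.com/doc"})
print(result["summary"], "fetch calls:", calls["fetch"])
```

The pipeline still reads as two steps, but the retry count and the two failures appear nowhere in `result`. The control logic works, yet it is invisible to anything that inspects the pipeline or its output.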

At that point, complexity exists — but it lives outside the abstraction.

LangGraph: Best for Stateful Processes

LangGraph becomes valuable when:

- decisions depend on evolving state

- execution may revisit earlier steps

- retries, validation, or branching are required

- human checkpoints are part of the lifecycle

- multiple components must coordinate over time

Its strengths come from making those concerns explicit:

- shared, inspectable state

- state-driven routing

- durable execution and recovery

- clear separation between work and control flow

- workflows that can be inspected, replayed, or resumed

The trade-off is upfront structure. Modeling nodes, edges, and shared state requires deliberate design. But as systems grow in autonomy, duration, and responsibility, that structure becomes an asset rather than overhead.

A Practical Heuristic

If your system can be described as:

“Do A, then B, then C.”

A pipeline is often sufficient.

If you instead find yourself asking:

“Given everything that has happened so far, what should happen next?”

You are modeling a process — not a pipeline.

And processes benefit from explicit state and routing.

How They Work Together in Practice

LangChain and LangGraph are not competing frameworks. They operate at different layers.

A useful mental split is:

- LangChain → capabilities

- LangGraph → behavior

LangChain provides the building blocks:

- Prompt templates

- Tool abstractions

- Retrievers and vector stores

- Model-agnostic LLM interfaces

- Quick composition of LLM-powered functionality

In most LangGraph systems, LangChain components still perform the underlying work. Tools follow LangChain conventions. LLM calls use LangChain wrappers. Retrievers and APIs integrate naturally.

LangGraph answers a different question:

How do these components interact over time?

It manages:

- Explicit state

- Non-linear control flow

- Loops and retries

- Human checkpoints

- Durable execution

- Clear separation between what a step does and when it runs

Where chains describe pipelines, graphs describe processes.

Understanding this separation clarifies where complexity belongs.

Why Graph-Based Orchestration Becomes Necessary in Production

As agentic systems move beyond isolated demos, certain requirements become unavoidable.

Execution must be explicit.

State must persist.

Decisions must be inspectable.

Failures must be recoverable.

These are not optional production features. They are structural constraints.

Linear pipelines are effective when workflows are bounded and execution paths are known in advance. But once systems are expected to operate over time — coordinating multiple steps, adapting to outcomes, revisiting earlier decisions, or surviving partial failure — the limitations of sequence-based execution become clear.

At that point, orchestration is no longer about ordering steps.

It becomes about managing a lifecycle.

Production systems must be able to:

- resume execution after interruption

- retry or branch without losing context

- pause safely for validation or human approval

- preserve shared state across interactions

- explain how and why a particular outcome occurred
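Several of these requirements follow once state, position, and history are kept together in one serializable checkpoint. The sketch below uses plain Python and JSON. It is not LangGraph's persistence mechanism; the `PAUSE` sentinel, the checkpoint layout, and the node names are assumptions made for illustration.

```python
import json

END, PAUSE = "END", "PAUSE"


def propose(state: dict) -> dict:
    return {"proposal": f"Delete {state['target']}"}


def await_approval(state: dict) -> dict:
    return {}  # does no work; it only marks where a human decision is needed


def execute(state: dict) -> dict:
    return {"result": f"Executed: {state['proposal']}"}


def reject(state: dict) -> dict:
    return {"result": "Cancelled by reviewer"}


NODES = {"propose": propose, "await_approval": await_approval,
         "execute": execute, "reject": reject}


def route(current: str, state: dict) -> str:
    if current == "propose":
        return "await_approval"
    if current == "await_approval":
        if "approved" not in state:
            return PAUSE  # no decision yet, so stop here and wait
        return "execute" if state["approved"] else "reject"
    return END


def run(checkpoint: dict) -> str:
    """Run from a checkpoint until END or PAUSE and return a new JSON checkpoint."""
    state, current = checkpoint["state"], checkpoint["next"]
    history = list(checkpoint["history"])
    while current != END:
        state = {**state, **NODES[current](state)}
        history.append(current)  # every visit is recorded, including re-entry
        following = route(current, state)
        if following == PAUSE:
            break  # keep `current` so a resume re-enters the same node
        current = following
    return json.dumps({"state": state, "next": current, "history": history})


# First run stops at the approval checkpoint.
saved = run({"state": {"target": "old_reports/"}, "next": "propose", "history": []})

# Later, possibly in another process: load, record the decision, resume.
checkpoint = json.loads(saved)
checkpoint["state"]["approved"] = True
final = json.loads(run(checkpoint))
print(final["state"]["result"], final["history"])
```

Because the checkpoint is just data, the pause can last as long as the human needs, and `history` records which nodes ran on the way to the outcome.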

These requirements are difficult to retrofit onto linear pipelines because they are not sequencing problems. They are control-flow problems.

Graph-based models make that control flow explicit.

By representing execution as a state-driven process rather than a fixed sequence, graphs allow systems to adapt without improvisation. State becomes a first-class concern. Routing decisions are visible. Execution paths can be inspected, replayed, or recovered when something goes wrong.

This is not about novelty or sophistication.

It is about clarity.

In practice, many production systems combine both layers:

- LangChain for capabilities — prompts, tools, and model integrations

- LangGraph for orchestration — state, routing, and lifecycle management

Chains describe what happens.

Graphs describe how execution continues.

When systems must persist, adapt, and remain accountable over time, graph-based orchestration stops being an optimization.

It becomes a requirement.

Next in the Series

Architectural comparisons are useful — but they remain abstract until you build something.

In the next article, we move from theory to construction.

Instead of starting with a language model, we will build a small but complete agentic workflow in LangGraph without using an LLM at all. The focus will be purely on execution structure: state, routing, retries, and termination.

The goal is to make one thing clear:

Agentic behavior does not emerge from model intelligence alone.

It emerges from structure.

By the end of the next article, you will see how nodes, edges, and shared state translate directly into real execution logic — and why graphs become a natural foundation for durable agentic systems.
