Top Important Computer Vision Papers for the Week from 17/06 to 23/06

Author(s): Youssef Hosni Originally published on Towards AI. Stay Updated with Recent Computer Vision Research. Every week, researchers from top research labs, companies, and universities publish exciting breakthroughs on various topics such as diffusion models, vision language models, image editing and generation, …

Making Bayesian Optimization Algorithm Simple for Practical Applications

Author(s): Hamid Rasoulian Originally published on Towards AI. Image by Author. The goal of this article is to show an easy implementation of Bayesian Optimization for solving real-world problems. Contrary to Machine Learning modeling, where the goal is to find a mapping …

The Voice of AI

Author(s): Sarah Cordivano Originally published on Towards AI. And how it creates overconfidence in its output. In the last year, ChatGPT and similar tools have written a fair amount …

Counter Overfitting with L1 and L2 Regularization

Author(s): Eashan Mahajan Originally published on Towards AI. Photo by Arseny Togulev on Unsplash Overfitting. A modeling error many of us have encountered or will encounter while training a model. Simply put, overfitting is when the model learns about the details and …

GenAI With Python: Give Your AI a Personality and Speak With “Her”

Author(s): Mauro Di Pietro Originally published on Towards AI. LLM & Speech Recognition — Build a voice assistant ChatBot on your laptop with Ollama. Image by author. In this article, I will show how to build an AI with a specific personality and …

Understanding Mamba and Selective State Space Models (SSMs)

Author(s): Matthew Gunton Originally published on Towards AI. Image by Author. The Transformer architecture has been the foundation of most major large language models (LLMs) on the market today, delivering impressive performance and revolutionizing the field. However, this success comes with limitations. One major challenge …

A Visual Walkthrough of DeepSeek’s Multi-Head Latent Attention (MLA) 🧟‍♂️

Author(s): JAIGANESAN Originally published on Towards AI. Exploring Bottleneck in GPU Utilization and Multi-head Latent Attention Implementation in DeepSeekV2. Image by Vilius Kukanauskas from Pixabay In this article, we’ll be exploring two …

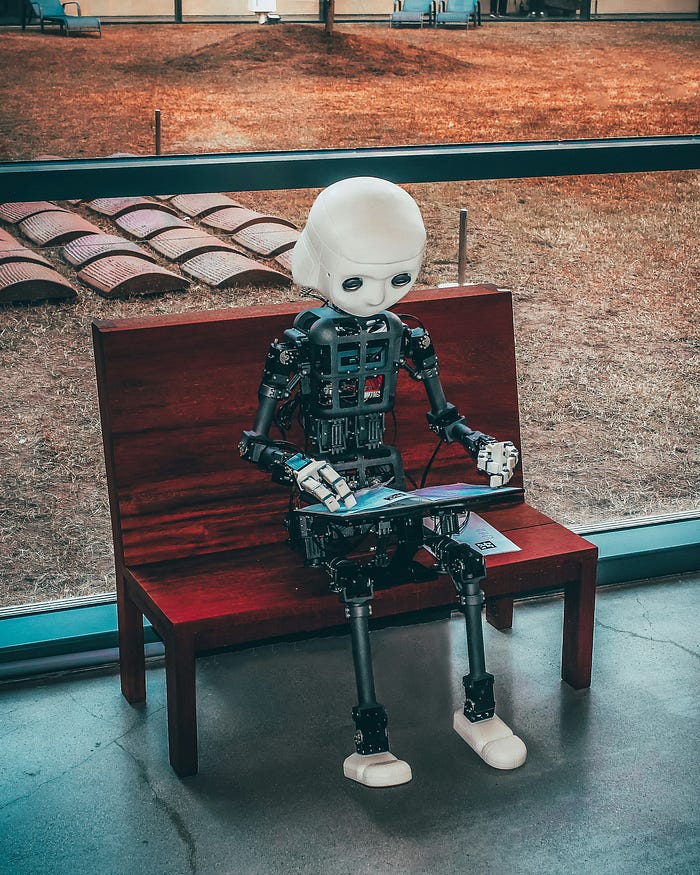

Retrieval Augmented Generation (RAG): A Comprehensive Visual Walkthrough 🧠📖🔗🤖

Author(s): JAIGANESAN Originally published on Towards AI. Photo by Andrea De Santis on Unsplash You might have heard of Retrieval Augmented Generation, or RAG, a method that’s been making waves in the world …

TAI #104; LLM progress beyond transformers with Samba?

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI by Louie. This week we saw a wave of exciting papers with new LLM techniques and model architectures, some of which can quickly become integrated into …

How are LLMs creative?

Author(s): Sushil Khadka Originally published on Towards AI. If you’ve used any generative AI models such as GPT, Llama, etc., there’s a good chance you’ve encountered the term ‘temperature’. Photo by Khashayar Kouchpeydeh on Unsplash. For starters, ‘temperature’ is a parameter that …