How does an MCP work?

Author(s): hiruthicSha. Originally published on Towards AI. Ever since ChatGPT, Bard (now Gemini), and other LLM interfaces entered the scene, our workflows have changed dramatically. A whole ecosystem popped up: “Learn prompt engineering,” “Master ChatGPT,” and so …

How AI Agents Get Smarter: The Cool World of Reinforcement Learning Environments

Author(s): AI Verse. Originally published on Towards AI. Discover how reinforcement learning environments, like virtual games and simulators, help AI agents learn by doing, making decisions, and improving with every try. This article explains why these environments matter, how they …

Performance Optimization in NumPy (Speed Matters!)

Author(s): NIBEDITA (NS). Originally published on Towards AI. Hey guys! Welcome back to our NumPy for DS & DA series. This is the 9th article in the series. In our previous article, we discussed Generating Random Numbers with NumPy. …

No Libraries, No Shortcuts: LLM from Scratch with PyTorch

Author(s): Ashish Abraham. Originally published on Towards AI. The no-BS guide to building, training, and fine-tuning a Transformer architecture from scratch. OpenAI has recently launched its highly anticipated open-source GPT-OSS models, a moment that invites a minute of reflection on just …

Master AI Engine Optimization (AEO) and its 3 sub-fields: LLMO, GEO, and AAIO.

Author(s): Mohit Sewak, Ph.D. Originally published on Towards AI. The age of SEO is over. The new era of AI Engine Optimization has begun. Alright, grab your cup of chai, pull up a chair, and let’s talk. Because the internet as you …

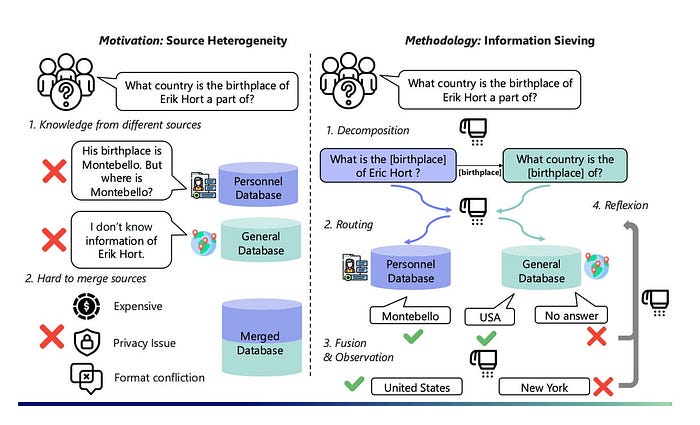

How to Turn RAG into an “Information Sieve” — AI Innovations and Insights 68

Author(s): Florian June. Originally published on Towards AI. This is Chapter 68 of this insightful series! Figure 1: Motivation and Overview. Left: Compositional queries are hard to answer …

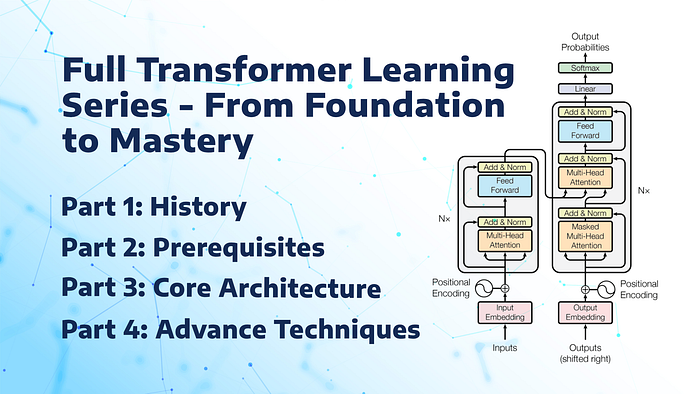

Full Transformer Learning Series: From Foundations to Mastery

Author(s): Rohan Mistry. Originally published on Towards AI. Every revolution has a hidden story. Transformers didn’t just appear out of nowhere; they are the result of decades of strange experiments, brilliant failures, and …

LLM Evaluation: The Crucial Step for AI Success

Author(s): Burak Degirmencioglu. Originally published on Towards AI. The capabilities of Large Language Models (LLMs) are advancing every day, with a revolutionary impact on the field of natural language processing. But how do we know if a model is “successful”? This is …

LAI #95: Fine-Tuning RAG, Smarter Agents, and Tackling GPU Bottlenecks

Author(s): Towards AI Editorial Team. Originally published on Towards AI. Good morning, AI enthusiasts. This week’s focus is on making AI systems more efficient and reliable, starting with the question of fine-tuning in RAG pipelines. When does it actually improve retrieval and …

How Are These AI Startups Gathering $Millions in Funding?

Author(s): Krishan Walia. Originally published on Towards AI. Real Problems => Real Solutions => Real Funding! How many times, while going to school, have you thought about skipping class just because you didn’t like the subject or maybe the teaching methods? …