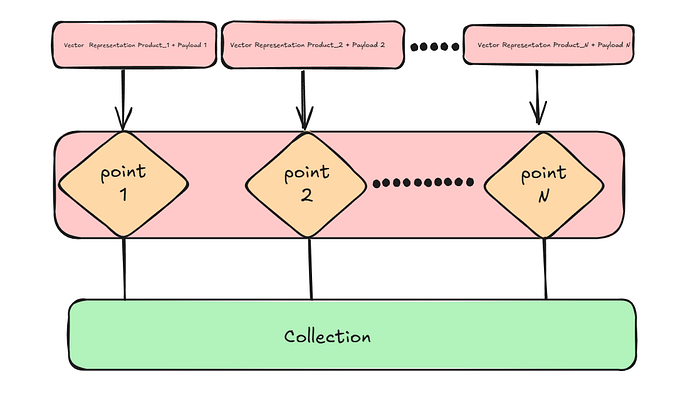

How to Use Sparse Vectors to Power E-commerce Recommendations With Qdrant

Author(s): Sayanteka Chakraborty Originally published on Towards AI. 1. Introduction In e-commerce, success hinges on one thing: showing the right product to the right user at the right time. Whether it’s search results, recommendations, or personalized feeds, every interaction shapes how a …

Why Traditional ML Fails at Fraud Detection (And How I Fixed It)

Author(s): Dewank Mahajan Originally published on Towards AI. How data science, domain intuition, and robust feature engineering come together to fight modern financial fraud. Why Fraud Detection Is a Human Story Fraud isn’t just a data problem. It’s a battle of wits between …

RAG, Part 1 — Chunking Strategies

Author(s): Deepak Chahal Originally published on Towards AI. Why the way you chunk data shapes what your RAG system retrieves Most of you might have heard the term RAG (Retrieval Augmented Generation). As the name suggests, it retrieves external information, augments the …

Continual Learning via Sparse Memory Finetuning (Paper Review)

Author(s): Hira Ahmad Originally published on Towards AI. Modern large language models learn vast amounts of knowledge; yet when we try to teach them something new, they tend to forget what they already know. …

Finetuning Qwen3-VL-8B Vision-Language Model: Advanced Knowledge Enhancement Using Python and Unsloth

Author(s): Krishan Walia Originally published on Towards AI. Guide to fine-tuning Qwen3-VL-8B with Python, Unsloth, and open datasets — making powerful Vision AI accessible for every developer. With Qwen3-VL-8B (Qwen Vision-Language), we will unlock the ability to create solutions tailored to our …

How a Senior Engineer at a $140M Startup “Vibe Codes” With AI — And Still Ships 40% Faster

Author(s): Nishad Ahamed Originally published on Towards AI. The secret isn’t magic. It’s a system. A few weeks ago, a post on Reddit caught my eye. Someone had met a senior engineer at a $140M+ startup who claimed that 95% of his …

When AI Stops Parroting and Starts Understanding: The Hidden Math Behind Machine Intelligence 🧠✨

Author(s): MahendraMedapati Originally published on Towards AI. The $175 Billion Question That Changed Everything Imagine spending $175 billion to build the world’s most advanced AI, only to discover that 98.2% of its “brain” sits idle during every single conversation. Visualization of the …

LAI #97: Claude 4.5 Benchmarks, Function-Calling Fine-Tunes, and the Future of Model Alignment

Author(s): Towards AI Editorial Team Originally published on Towards AI. Good morning, AI enthusiasts, This week’s issue dives deep into how models are evolving across capability, specialization, and alignment. We examine Claude Sonnet 4.5, how it outperforms GPT-5 (Codex) and Gemini 2.5 …

How ManusAI Outperformed GPT-4… and Still Lost My Trust

Author(s): R. Thompson (PhD) Originally published on Towards AI. The messy truth behind ManusAI’s credit-based automation model You juggle Slack pings, email threads, half-finished spreadsheets, and the nagging feeling you’re doing five jobs at once. There’s a pair of new tools promising …

How Statistical Regressions Learn to Trust: OLS, WLS, and GLS in Action

Author(s): Sohom Majumder Originally published on Towards AI. A beginner-friendly guide to OLS, WLS, and GLS regression in Python — complete with visuals, code, and clear examples for better model selection. Linear regression, at its heart, is a conversation between data points …