Why Most RAG Systems Fail in Production and the Simple Fix That Improves Accuracy Fast

Last Updated on December 2, 2025 by Editorial Team

Author(s): Divy Yadav

Originally published on Towards AI.

You spent two weeks building a RAG application. It retrieves documents. It generates answers. You tested it with a few questions. It looked good.

Then you put it in production and users start complaining. The answers are sometimes spot-on, sometimes completely wrong. You have no idea which is which until someone tells you.

Sound familiar?

Here’s the uncomfortable truth: most developers ship RAG applications without ever measuring if they actually work.

Let me show you how to fix that.

What Is RAG?

Retrieval-Augmented Generation (RAG) is a technique that enhances Large Language Models (LLMs) by providing them with relevant external knowledge. It has become one of the most widely used approaches for building LLM applications (LangChain).

Use it when you want an LLM to answer questions based on your own documents or knowledge base.

Why RAG Evaluation Is Not Optional Anymore

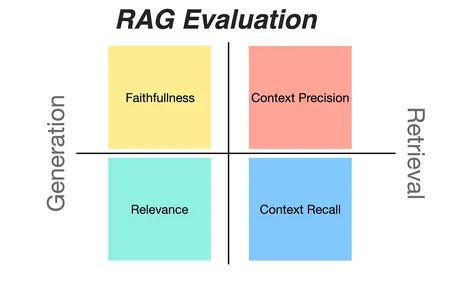

RAG systems are composed of a retrieval module and an LLM-based generation module, and evaluating them is challenging because there are several dimensions to consider.

Think about it. Your RAG system has two places where things can go wrong:

The retrieval part:

- Did you find the right documents?

- Did you rank them properly?

- Did you include too much irrelevant stuff?

The generation part:

- Is the LLM actually using your documents?

- Is it making stuff up?

- Is the answer even relevant to the question?

Testing all of this manually? That’s a full-time job.

How RAG Systems Fail (The Real-World Version)

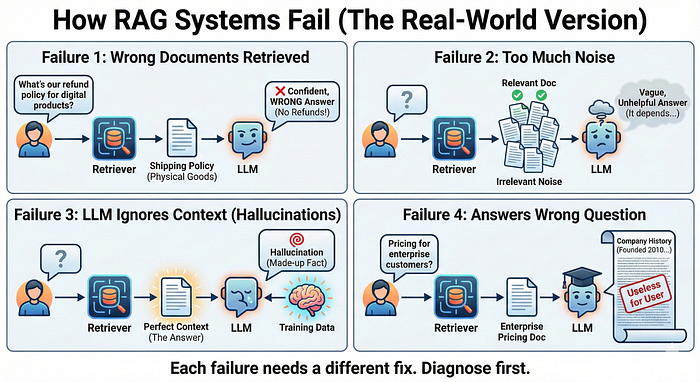

Let’s be specific about what goes wrong:

- Your retriever finds the wrong documents. You ask “What’s our refund policy for digital products?” and it retrieves your shipping policy for physical goods. Your LLM generates a confident answer that’s completely wrong for the user’s situation.

- Your retriever finds too much noise. It returns 10 documents but only 2 are actually relevant. Your LLM gets confused by the irrelevant, sometimes contradictory information and hedges its bets with a vague, unhelpful answer.

- Your LLM ignores your documents. Despite retrieving the perfect context, your LLM decides it knows better and generates an answer based on its training data instead of your carefully retrieved documents. Hello, hallucinations.

- Your LLM answers the wrong question. You ask about pricing for enterprise customers, and it gives you a detailed history of the company instead. Technically fluent. Completely useless.

Each of these failures needs a different fix. But first, you need to know which one you’re dealing with.

Traditional Evaluation Methods Don’t Work for RAG

Traditional metrics like BLEU and ROUGE, widely used in natural language processing, often fall short in capturing the nuanced requirements of RAG applications, such as factual accuracy and context relevance.

BLEU measures n-gram overlap with a reference answer. It can’t tell if your answer is factually correct or just similar-sounding.

ROUGE measures how much of the reference’s wording your answer recovers (recall). It can’t tell if your LLM actually used the retrieved documents or just made stuff up that happens to sound right.
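To make the problem concrete, here is a simplified sketch that scores an answer with the wrong year against a correct reference using unigram overlap. It captures only the core idea behind BLEU (precision) and ROUGE-1 (recall), with single words only and no brevity penalty, so treat it as an illustration rather than either metric’s reference implementation.

```python
from collections import Counter


def tokens(text: str) -> list[str]:
    # Deliberately naive tokenizer: lowercase, split on whitespace, strip punctuation
    words = (w.strip(".,!?;:").lower() for w in text.split())
    return [w for w in words if w]


def unigram_precision(candidate: str, reference: str) -> float:
    # BLEU-like idea (unigrams only): share of candidate tokens also found in the reference
    cand, ref = Counter(tokens(candidate)), Counter(tokens(reference))
    overlap = sum(min(n, ref[w]) for w, n in cand.items())
    return overlap / max(sum(cand.values()), 1)


def unigram_recall(candidate: str, reference: str) -> float:
    # ROUGE-1-like idea: share of reference tokens also found in the candidate
    cand, ref = Counter(tokens(candidate)), Counter(tokens(reference))
    overlap = sum(min(n, cand[w]) for w, n in ref.items())
    return overlap / max(sum(ref.values()), 1)


reference = "The first Super Bowl was held on January 15, 1967."
wrong = "The first Super Bowl was held on January 15, 1968."  # factually wrong year

print(unigram_precision(wrong, reference))  # 0.9
print(unigram_recall(wrong, reference))     # 0.9
```

The wrong answer still scores 0.9 on both measures. Overlap counts tokens; it has no idea that the one token that changed is the one that makes the answer wrong.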

You need metrics that understand:

- Factual consistency

- Context usage

- Retrieval quality

- Answer relevance

That’s where Ragas comes in.

Enter Ragas: The Framework That Actually Measures What Matters

Ragas is an open-source library built specifically for evaluating Retrieval-Augmented Generation (RAG) systems.

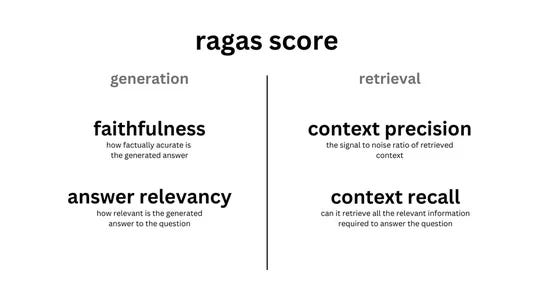

Ragas (Retrieval Augmented Generation Assessment) is a framework for reference-free evaluation of RAG pipelines that introduces a suite of metrics that can evaluate different dimensions without relying on ground-truth human annotations.

Read that again. Reference-free evaluation. You don’t need hundreds of human-labeled test cases to get started.

Ragas uses LLMs to evaluate your RAG system across four critical dimensions:

1. Faithfulness — Is your LLM making stuff up?

2. Answer Relevancy — Is your LLM actually answering the question?

3. Context Precision — Did you retrieve the right documents in the right order?

4. Context Recall — Did you miss any important information?
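“Reference-free” applies to most of these metrics, but not all of them. The sketch below is a simplified lookup of which sample fields each metric reads, so you can see what you can run before you have any reference answers. Field names follow Ragas’s SingleTurnSample; the mapping itself and the runnable_metrics helper are assumptions for illustration, so check the requirements against your installed Ragas version.

```python
# Simplified, assumed mapping of each metric to the SingleTurnSample fields it reads
REQUIRED_FIELDS = {
    "faithfulness": {"user_input", "response", "retrieved_contexts"},
    "answer_relevancy": {"user_input", "response"},
    # Reference-based flavor; recent Ragas versions also offer a variant that
    # judges chunks against the response instead of a reference
    "context_precision": {"user_input", "retrieved_contexts", "reference"},
    "context_recall": {"user_input", "retrieved_contexts", "reference"},
}


def runnable_metrics(sample: dict) -> list[str]:
    # A field only counts as present if it is non-empty
    present = {key for key, value in sample.items() if value}
    return sorted(m for m, needed in REQUIRED_FIELDS.items() if needed <= present)


sample = {
    "user_input": "When was the first Super Bowl?",
    "response": "The first Super Bowl was held on January 15, 1967",
    "retrieved_contexts": [
        "The First AFL–NFL World Championship Game was played on January 15, 1967."
    ],
}
print(runnable_metrics(sample))  # ['answer_relevancy', 'faithfulness']
```

Adding a reference answer to the sample unlocks the two retrieval metrics that compare against it.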

Setting Up Ragas (The Practical Way)

Let’s get hands-on. Here’s the minimal setup you need to start evaluating today.

Step 1: Install Ragas

Simple pip install. That’s it.

```bash
pip install ragas
```

Step 2: Set up your API key

By default, Ragas uses OpenAI’s models for evaluation (DEV Community). Set your API key as an environment variable:

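```python
import os

# Prefer exporting OPENAI_API_KEY in your shell or a secrets manager
# rather than hardcoding the key in source code
os.environ["OPENAI_API_KEY"] = "your-api-key-here"
```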

You can also use Claude, Gemini, or local models. You can swap to another provider through Ragas’s llm_factory function (LlamaIndex):

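```python
from ragas.llms import llm_factory

# Pick ONE provider; each assignment would overwrite `llm`.
# llm_factory's signature has changed across Ragas releases (newer versions may
# also expect a provider client object), so check the docs for your installed version.

# Use Claude
llm = llm_factory("claude-3-5-sonnet-20241022", provider="anthropic")

# Use Gemini
# llm = llm_factory("gemini-1.5-pro", provider="google")

# Use a local model served by Ollama
# llm = llm_factory("llama2", provider="ollama")
```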

Creating Your Test Dataset

This is what you need to evaluate your RAG system: questions, the contexts retrieved, the answers generated, and optionally, reference answers.

To collect evaluation data, we first need a set of queries to run against our RAG system. We can run the queries through the system and collect the response and retrieved_contexts for each query.
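A minimal sketch of that collection loop, assuming your pipeline can be called as a function that returns the generated answer plus the retrieved chunk texts. RagPipeline, collect_eval_records, and toy_rag are hypothetical names for illustration; swap toy_rag for your real pipeline.

```python
from typing import Callable, Optional

# Hypothetical interface: question in, (generated answer, retrieved chunk texts) out
RagPipeline = Callable[[str], tuple[str, list[str]]]


def collect_eval_records(
    queries: list[str],
    rag: RagPipeline,
    references: Optional[list[str]] = None,
) -> list[dict]:
    if references is not None and len(references) != len(queries):
        raise ValueError("need exactly one reference per query")
    records = []
    for i, query in enumerate(queries):
        answer, contexts = rag(query)
        record = {"user_input": query, "response": answer, "retrieved_contexts": contexts}
        if references is not None:
            record["reference"] = references[i]  # optional ideal answer
        records.append(record)
    return records


def toy_rag(question: str) -> tuple[str, list[str]]:
    # Stand-in for a real retriever + generator; always returns the same result
    context = ["The First AFL–NFL World Championship Game was played on January 15, 1967."]
    return "The first Super Bowl was held on January 15, 1967.", context


records = collect_eval_records(["When was the first Super Bowl?"], toy_rag)
print(records[0]["retrieved_contexts"])
```

The dictionary keys match SingleTurnSample’s field names, so each record can be turned into a sample with SingleTurnSample(**record).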

Here’s how to structure your data:

```python
from ragas.dataset_schema import SingleTurnSample
from ragas import EvaluationDataset

# Create test samples
# Each sample represents one question-answer interaction
data_samples = [
    SingleTurnSample(
        user_input="When was the first Super Bowl?",
        response="The first Super Bowl was held on January 15, 1967",
        reference="The first Super Bowl was held on Jan 15, 1967 at the Los Angeles Memorial Coliseum",
        retrieved_contexts=[
            "The First AFL–NFL World Championship Game was played on January 15, 1967, at the Los Angeles Memorial Coliseum."
        ],
    ),
    SingleTurnSample(
        user_input="What is RAG?",
        response="RAG stands for Retrieval Augmented Generation.",
        reference="RAG combines retrieval with generation for accurate responses",
        retrieved_contexts=[
            "Retrieval-Augmented Generation (RAG) combines retrieval with LLMs to produce accurate responses."
        ],
    ),
]

# Create evaluation dataset
dataset = EvaluationDataset(samples=data_samples)
```

What’s happening here: You’re giving Ragas the question, what your RAG system retrieved, what answer it generated, and what the ideal answer should be. Ragas will evaluate each dimension automatically.

Running Your First Evaluation

Now let’s actually measure your RAG system by scoring the collected dataset with a set of commonly used RAG evaluation metrics.

```python
from ragas import evaluate
from ragas.metrics import LLMContextRecall, Faithfulness, FactualCorrectness

# Choose your metrics
metrics = [
    LLMContextRecall(),
    Faithfulness(),
    FactualCorrectness(),
]

# Run evaluation
result = evaluate(
    dataset=dataset,
    metrics=metrics,
)

# See your scores
print(result)
```

What this code does: It runs your test dataset through Ragas’s evaluation metrics. Each metric scores a different aspect of your RAG system. You’ll get back scores between 0 and 1 for each metric.

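The printed result has one score per metric, averaged over your samples and keyed by metric name. With the metrics above it has this shape (the values depend on your data, and the exact key for FactualCorrectness can differ between Ragas versions):

{'context_recall': ..., 'faithfulness': ..., 'factual_correctness': ...}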

Understanding Your Scores (What The Numbers Mean)

All Ragas metrics are scored between 0.0 and 1.0.

- Faithfulness: 0.89 → On average, 89% of the claims in each answer are backed by the retrieved context.

- Answer Relevancy: 0.40 → Red flag. Your LLM is rambling or answering the wrong question.

- Context Precision: 0.60 → Your retrieval system is pulling in too many “junk” documents or ranking them above the useful ones.

The Rule of Thumb:

- 0.9+: Excellent.

- 0.7–0.9: Good, deployable.

- < 0.7: Needs optimization.
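If you want to apply that rule of thumb automatically, a sketch like the following maps each score to a label. The thresholds are the ones above, which are this article’s heuristics rather than anything Ragas enforces; the example scores are the ones discussed in this section.

```python
def rate(score: float) -> str:
    # Thresholds follow the rule of thumb above; tune them for your own use case
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"Ragas scores lie in [0, 1], got {score}")
    if score >= 0.9:
        return "excellent"
    if score >= 0.7:
        return "good"
    return "needs optimization"


# The example scores discussed above
scores = {"faithfulness": 0.89, "answer_relevancy": 0.40, "context_precision": 0.60}
for metric, score in scores.items():
    print(f"{metric}: {score:.2f} -> {rate(score)}")
```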

Evaluating Individual Responses in Real-Time

You don’t have to batch evaluate everything. You can check individual responses as they happen.

```python
import asyncio

from ragas.dataset_schema import SingleTurnSample
from ragas.llms import llm_factory
from ragas.metrics import Faithfulness

# Set up your evaluator
llm = llm_factory("gpt-4o-mini")
faithfulness_scorer = Faithfulness(llm=llm)

# Create a sample
sample = SingleTurnSample(
    user_input="When was the first Super Bowl?",
    response="The first Super Bowl was held on January 15, 1967",
    retrieved_contexts=[
        "The First AFL–NFL World Championship Game was played on January 15, 1967."
    ],
)


async def main() -> None:
    # single_turn_ascore is a coroutine; with this metrics API it returns a plain float
    score = await faithfulness_scorer.single_turn_ascore(sample)
    print(f"Faithfulness Score: {score}")


# In a Jupyter notebook you can `await` directly instead of calling asyncio.run
asyncio.run(main())
```

What’s useful here: You can integrate this into your application to monitor responses in production. Flag low-scoring responses for human review.
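Here is one way that flagging could look: a minimal sketch, assuming you wrap any async scorer (such as the single_turn_ascore call above) behind a common interface. The ReviewQueue class, the 0.7 threshold, and fake_scorer are hypothetical; the fake scorer only exists so the sketch runs without an API key.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Any, Awaitable, Callable

# Any async function mapping a sample to a score in [0, 1]
Scorer = Callable[[Any], Awaitable[float]]


@dataclass
class ReviewQueue:
    threshold: float = 0.7
    flagged: list[tuple[str, float]] = field(default_factory=list)

    async def check(self, response_id: str, sample: Any, scorer: Scorer) -> float:
        score = await scorer(sample)
        if score < self.threshold:
            # Keep the ID so a human can pull up the full interaction later
            self.flagged.append((response_id, score))
        return score


async def fake_scorer(sample: dict) -> float:
    # Stand-in for an LLM-based metric so the sketch runs offline
    return sample["fake_score"]


async def main() -> ReviewQueue:
    queue = ReviewQueue(threshold=0.7)
    await queue.check("resp-1", {"fake_score": 0.95}, fake_scorer)
    await queue.check("resp-2", {"fake_score": 0.42}, fake_scorer)
    return queue


queue = asyncio.run(main())
print(queue.flagged)
```

In a live app you would typically schedule the check as a background task, so users never wait on the judge LLM before seeing their answer.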

The “Secret Weapon”: Synthetic Test Data

The biggest blocker for developers is: “I don’t have a dataset of Questions and Answers to test with.”

Ragas solves this too. It can generate test questions from your own documents.

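```python
from langchain_community.document_loaders import DirectoryLoader
from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from ragas.embeddings import LangchainEmbeddingsWrapper
from ragas.llms import LangchainLLMWrapper
from ragas.testset import TestsetGenerator

# Load your documents (replace the path and glob with your own)
documents = DirectoryLoader("path/to/your/docs", glob="**/*.md").load()

# Ragas 0.2-style generator; the older 0.1 API used TestsetGenerator.with_openai()
# and evolution-based distributions (simple / reasoning / multi_context)
generator = TestsetGenerator(
    llm=LangchainLLMWrapper(ChatOpenAI(model="gpt-4o")),
    embedding_model=LangchainEmbeddingsWrapper(OpenAIEmbeddings()),
)

# Generate 10 questions based on your specific docs
testset = generator.generate_with_langchain_docs(documents, testset_size=10)
```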

This builds a starter test set of questions and reference answers grounded in your own documents rather than in a generic benchmark.

The Four Metrics That Tell You Everything

Let me break down what each metric actually measures and why it matters.

Faithfulness: Is Your LLM Lying?

Faithfulness measures the factual consistency of the generated answer against the given context. It is calculated from the answer and retrieved context.

Ragas breaks your answer into individual claims, then checks if each claim can be verified from your retrieved documents.

Example: Your LLM says “The first Super Bowl was held on January 15, 1967 in Los Angeles.”

Ragas checks:

- Claim 1: “The first Super Bowl was held on January 15, 1967” ✓ Found in context

- Claim 2: “It was held in Los Angeles” ✓ Found in context

Score: 1.0 (both claims verified)
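The scoring step itself is simple arithmetic once the claims have been judged. This sketch assumes the LLM has already extracted the claims and returned a supported/unsupported verdict for each; it only shows how those verdicts become a score. The unsupported third claim in the second example is hypothetical.

```python
def faithfulness_score(claim_verdicts: list[bool]) -> float:
    # Fraction of the answer's claims that the retrieved context supports.
    # In Ragas an LLM extracts the claims and judges each one; here the verdicts are given.
    if not claim_verdicts:
        raise ValueError("answer produced no claims to check")
    return sum(claim_verdicts) / len(claim_verdicts)


# The Super Bowl example above: both claims supported
print(faithfulness_score([True, True]))  # 1.0

# Hypothetical: the answer adds a third claim the context never mentions
print(faithfulness_score([True, True, False]))
```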

Low faithfulness means: Your LLM is adding information that isn’t in your documents. Fix: adjust your system prompt so the LLM sticks more closely to the provided context.

Answer Relevancy: Is Your LLM Actually Answering the Question?

Answer Relevancy focuses on assessing how pertinent the generated answer is to the given prompt.

Your LLM might be faithful to the documents but still answer the wrong question.

Example:

Question: “What’s our refund policy?”

Answer: “We were founded in 2015 and have served over 10,000 customers.”

Factually correct. Completely irrelevant.

Low relevancy means: Your retrieval might be pulling the wrong documents, or your LLM prompt needs better instruction to focus on the question.
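Under the hood, Ragas’s answer relevancy works in reverse: an LLM generates questions that the answer would plausibly respond to, and the score is the mean cosine similarity between their embeddings and the embedding of the original question. The sketch below shows only that final similarity step, with hand-made 3-dimensional vectors standing in for real embeddings (the vectors are hypothetical, not output from any model).

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def answer_relevancy(question_vec: list[float], generated_vecs: list[list[float]]) -> float:
    # Mean similarity between the real question and the questions
    # an LLM reverse-engineered from the answer
    return sum(cosine(question_vec, g) for g in generated_vecs) / len(generated_vecs)


question = [1.0, 0.0, 0.0]
on_topic = [[0.9, 0.1, 0.0], [1.0, 0.0, 0.0]]   # answer implies questions close to the real one
off_topic = [[0.0, 1.0, 0.0], [0.0, 0.7, 0.7]]  # e.g. the "company history" answer

print(answer_relevancy(question, on_topic))
print(answer_relevancy(question, off_topic))  # 0.0
```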

Context Precision: Did You Retrieve the Right Stuff?

Context Precision evaluates whether the relevant chunks in your retrieved contexts are ranked higher than the irrelevant ones.

This measures your retrieval quality. Are the most relevant documents at the top of your results?

Low precision means: Your vector search or keyword matching is pulling in too much noise. Fix: improve your embedding model, adjust your retrieval parameters, or add better filtering.
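Ranking matters because Ragas computes context precision as a rank-weighted average: for every position k that holds a relevant chunk, take precision@k (relevant chunks in the top k, divided by k), then average those values over the relevant chunks. Here is a sketch of that formula, assuming the per-chunk relevance verdicts (which Ragas gets from an LLM) are already available as 0/1 flags.

```python
def context_precision_at_k(relevance: list[int]) -> float:
    # relevance[i] is 1 if the chunk at rank i + 1 is relevant, else 0
    hits = 0
    total = 0.0
    for k, rel in enumerate(relevance, start=1):
        if rel:
            hits += 1
            total += hits / k  # precision@k, counted only at relevant ranks
    return total / hits if hits else 0.0


print(context_precision_at_k([1, 0, 1]))  # relevant chunks near the top
print(context_precision_at_k([0, 1, 1]))  # same chunks, worse order
```

Both lists contain the same two relevant chunks, but the second scores lower (about 0.58 versus 0.83) because the top slot is wasted on noise.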

Context Recall: Did You Miss Anything Important?

Context recall measures the extent to which the retrieved context aligns with the annotated answer, treated as the ground truth.

Of the four metrics here, this is the only one that always requires a reference answer. It checks if your retrieval found all the information needed to answer the question correctly.

Low recall means: Your retrieval is too narrow. Fix: retrieve more documents, improve your chunking strategy, or expand your search to include related concepts.
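Written out, context recall is a ratio over the statements in the reference answer. This is a sketch of the idea; Ragas uses an LLM to split the reference into statements and to judge whether each one can be attributed to the retrieved context:

$$
\text{Context Recall} = \frac{\text{number of reference statements attributable to the retrieved context}}{\text{total number of statements in the reference}}
$$

Note the contrast with faithfulness: there the denominator counts claims in the generated answer, while here it counts statements in the reference.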

A Real-World Workflow

Here’s what evaluation looks like in practice, not in a tutorial:

- Week 1 (Baseline): Generate 20 test questions with Ragas’s synthetic test set generator. Run them through your RAG system and score the results with Ragas. Establish your baseline score.

- Week 2 (Iterate): Identify the lowest metric.

  - Low precision? Fix your chunking strategy or try a re-ranker.

  - Low faithfulness? Tweak your system prompt (e.g., “Answer ONLY using the provided context”).

- Week 3 (Monitor): Integrate Ragas into your CI/CD pipeline. Every time you change your prompt or embedding model, run the tests. If the score drops, don’t merge the code.
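The Week 2 and Week 3 steps boil down to two checks: find the weakest metric, and fail the build when a score drops. Here is a minimal sketch of that gate, assuming you store a dictionary of averaged scores from a baseline run. The function names, the sample numbers, and the 0.02 tolerance are illustrative choices, not part of Ragas.

```python
def lowest_metric(scores: dict[str, float]) -> str:
    # Week 2: the metric to work on first
    return min(scores, key=scores.get)


def regressions(baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.02) -> list[str]:
    # Metrics whose score fell by more than `tolerance`; a missing metric counts as 0.0.
    # The small tolerance absorbs run-to-run noise from LLM-judged scores.
    return sorted(m for m in baseline if baseline[m] - current.get(m, 0.0) > tolerance)


def gate(baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.02) -> int:
    # Week 3: a non-zero exit code makes the CI job fail and blocks the merge
    dropped = regressions(baseline, current, tolerance)
    for m in dropped:
        print(f"REGRESSION {m}: {baseline[m]:.3f} -> {current.get(m, 0.0):.3f}")
    return 1 if dropped else 0


# Hypothetical numbers; in CI, load the stored baseline and the scores from a fresh Ragas run
baseline = {"faithfulness": 0.89, "context_precision": 0.60}
current = {"faithfulness": 0.85, "context_precision": 0.65}

print("lowest:", lowest_metric(current))
exit_code = gate(baseline, current)  # in a CI script, finish with sys.exit(exit_code)
```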

Common Mistakes to Avoid

Mistake 1: Testing only happy path questions

Ensure that the dataset covers a diverse range of scenarios, user inputs, and expected responses to provide a comprehensive evaluation (LlamaIndex).

Test edge cases. Test ambiguous questions. Test questions your system shouldn’t be able to answer.
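One lightweight way to keep yourself honest is to tag each test question with a category and check that every category is represented. The categories, questions, and the coverage_report helper below are hypothetical examples; replace them with the edge cases that matter in your domain.

```python
from collections import Counter

# Hypothetical categories; adapt them to your own product and users
REQUIRED_CATEGORIES = {"happy_path", "edge_case", "ambiguous", "out_of_scope"}

test_questions = [
    {"user_input": "What's our refund policy for digital products?", "category": "happy_path"},
    {"user_input": "Can I return a digital product after downloading it?", "category": "edge_case"},
    {"user_input": "How long does it take?", "category": "ambiguous"},
    {
        "user_input": "What will our stock price be next year?",
        "category": "out_of_scope",
        # For questions the docs can't answer, the ideal response is a refusal
        "reference": "The documents do not contain this information.",
    },
]


def coverage_report(questions: list[dict], required: set[str] = REQUIRED_CATEGORIES):
    counts = Counter(q["category"] for q in questions)
    missing = sorted(required - set(counts))
    return counts, missing


counts, missing = coverage_report(test_questions)
print(dict(counts), "missing:", missing)
```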

Mistake 2: Ignoring production data

Synthetic tests are great for getting started. But real user questions are what matter. Collect them. Evaluate them. Learn from them.

Mistake 3: Evaluating once and forgetting about it

Your RAG system drifts. Your data changes. Your users’ questions evolve. Set up continuous evaluation or you’re flying blind.

The Bottom Line

You can’t improve what you don’t measure.

Ragas is a specialized evaluation framework that uses LLMs as judges to assess RAG systems in a scalable, cost-effective way (LangChain).

- Stop doing the “vibe check.” Stop manually testing 5 questions and calling it good. Stop shipping RAG applications without knowing if they actually work.

- Start measuring. Track your metrics. Fix what’s broken. Iterate based on data.

Your users will thank you. Your stress levels will thank you. And you’ll actually know if your RAG system is working before your users tell you it’s not.

Ready to get started? The Ragas GitHub repository has example notebooks and a helpful community. The official documentation has comprehensive guides for every use case.

Now go measure your RAG system. You might not like what you find, but at least you’ll know where to fix it.


Note: Article content contains the views of the contributing authors and not Towards AI.