Why Most RAG Projects Fail in Production (and How to Build One That Doesn’t)

Last Updated on January 20, 2026 by Editorial Team

Author(s): Bran Kop, Engineer @Conformal, Founder of aiHQ

Originally published on Towards AI.

Enterprise AI discussions often begin with a diagram that looks almost perfect in its simplicity:

Documents → Vector Database → LLM → Answers

It is not wrong. It is just incomplete.

Retrieval-Augmented Generation (RAG) has become one of the most practical patterns in applied AI because it works well in many real scenarios. It improves grounding, can reduce hallucinations, and offers a credible path to using internal knowledge without retraining a model. In that sense, the industry is right to embrace it.

The problem starts when the diagram becomes the product narrative.

In an enterprise environment, the difference between a successful RAG system and a disappointing one rarely comes down to the “LLM choice” or a fashionable vector database. It comes down to the things that software engineering has always cared about: ingestion quality, data freshness, security boundaries, operational observability, and safe execution paths.

RAG is valuable, but it is not magical. If the architecture surrounding it is weak, the entire system will behave like an expensive prototype that never earns trust.

This article is a practical walkthrough of the production reality: what RAG does well, what it cannot do on its own, and what the full enterprise stack looks like when you build it with discipline.

RAG Works, Until the Data Stops Behaving

In a controlled setting, RAG feels effortless.

You ingest documents, split them into chunks, embed them, store them, retrieve relevant pieces at query time, and feed those pieces into an LLM so the model answers using your content. Ask a question like “Summarize our remote work policy,” and the system can perform beautifully. Retrieval pulls the relevant policy sections, the model produces a coherent summary, and the answer appears grounded.

Enterprises, however, do not operate on tidy libraries.

They operate on sprawling document ecosystems and operational systems that are constantly changing. PDFs exist alongside emails, wikis, incident tickets, spreadsheet exports, and chat logs. Authoritative truth often lives inside structured platforms like Salesforce, SAP, Workday, ServiceNow, and internal SQL databases. Meanwhile, the questions users actually ask often depend on live state, not static documentation.

A naive RAG implementation implicitly assumes that everything is already clean and ready. It assumes ingestion is reliable, updates happen on time, content is semantically chunked, metadata is trustworthy, access is enforced correctly, and retrieval always returns context that actually answers the user’s intent. In production, those assumptions break quickly.

When chunking is crude, retrieval becomes noisy. When updates are not scheduled correctly, answers go stale. When metadata is missing, filtering fails. When permissions are not enforced end-to-end, the system becomes a compliance risk. And when a user asks for real-time operational truth, retrieval from documentation is simply the wrong approach.

That is why enterprise RAG feels “easy” for the first week, then starts to degrade unless the surrounding system is engineered properly.

The Runtime Path Is Simple, but the Outcomes Are Not

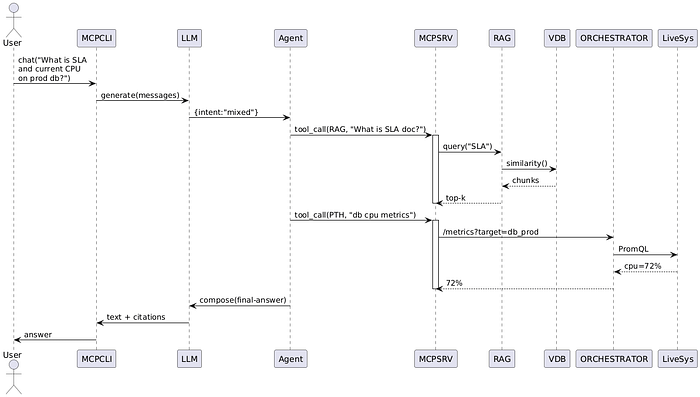

At runtime, most RAG pipelines follow the same mechanical sequence.

The user query is embedded. A nearest-neighbor search retrieves the top candidate chunks. Reranking is applied to improve relevance. A prompt is constructed using system rules plus retrieved context. The model generates an answer.
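The sequence above can be sketched in a few lines. This is a toy illustration, not a production pipeline: the bag-of-words `embed` function stands in for a real embedding model, the term-overlap `rerank` stands in for a real reranker, and all function names are assumptions made for this example. The final model call is left out on purpose.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: bag-of-words term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Brute-force nearest-neighbor search over all chunks."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def rerank(query: str, candidates: list[str], keep: int = 2) -> list[str]:
    """Toy reranker: order candidates by the share of query terms they contain."""
    terms = set(embed(query))

    def overlap(candidate: str) -> float:
        return len(terms & set(embed(candidate))) / max(len(terms), 1)

    return sorted(candidates, key=overlap, reverse=True)[:keep]


def build_prompt(query: str, context: list[str]) -> str:
    """System rules plus numbered sources, so the model can cite them."""
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(context))
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

Every step here is deterministic and cheap to test in isolation, which is the property worth keeping when the toy pieces are swapped for real components.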

For static knowledge recall, this is highly effective. It is particularly strong when answers can be supported with citations and when the cost of being slightly outdated is low.

But not all questions are knowledge recall questions.

If a user asks, “Is the VPN down right now?”, retrieval should not be the primary mechanism. A static runbook excerpt is not the same as current operational truth. In these cases, a RAG-only solution can produce answers that are confident, well-written, and wrong. Worse, those answers may sound plausible enough that non-technical users trust them.

This is where organizations start noticing the predictable failure modes. Token bills rise because irrelevant context is stuffed into prompts. Latencies increase because retrieval, reranking, and long context windows become the default path for everything. Answers drift into long, polished explanations that do not resolve the underlying need. And the system cannot actually execute anything, verify anything, or close the loop with real systems of record.

The lesson is not that RAG is bad. The lesson is that enterprise AI needs routing, tools, and governance around RAG, because RAG is only one capability in a wider operational system.

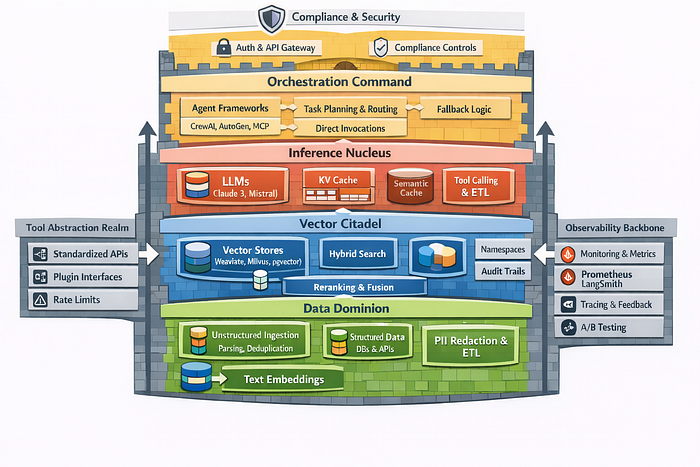

The Enterprise Stack: From Data Reality to Production Reliability

The real story of enterprise AI is not the diagram. It is the architecture behind the diagram.

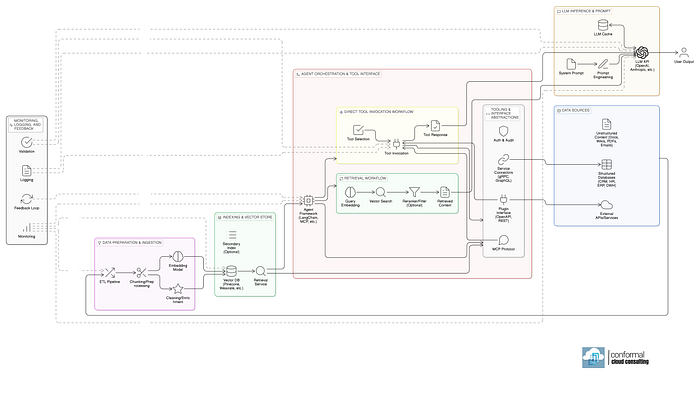

A production-grade system begins at the data layer, where unstructured content must be collected, normalized, and governed before embeddings ever happen. This is not glamorous work, but it is decisive work. You need ingestion pipelines that can parse complex file formats, deduplicate content, detect language and encoding issues, and preserve source context reliably. You need chunking strategies that respect semantics, not arbitrary token slicing, and you need overlap and hierarchical structure so retrieval does not lose meaning at boundaries. You also need a strategy for structured sources, because many enterprise truths are not documents at all. They are rows and relationships. Turning structured data into tool-friendly schemas or textual representations is often required to make it usable by an LLM.
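To make the chunking and deduplication points concrete, here is a minimal sketch that packs whole paragraphs into chunks, carries the last paragraph forward as overlap, and drops exact duplicates by content hash. The function names, the word-count budget, and the blank-line paragraph convention are all assumptions for illustration; real pipelines usually budget in tokens and respect headings and tables as well.

```python
import hashlib


def chunk_paragraphs(
    text: str, max_words: int = 120, overlap_paragraphs: int = 1
) -> list[str]:
    """Pack whole paragraphs into chunks instead of slicing at arbitrary offsets.

    A paragraph longer than max_words is kept whole rather than cut mid-sentence.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for para in paragraphs:
        size = sum(len(p.split()) for p in current) + len(para.split())
        if current and size > max_words:
            chunks.append("\n\n".join(current))
            # Carry trailing paragraphs forward so meaning survives the boundary.
            current = current[-overlap_paragraphs:] if overlap_paragraphs > 0 else []
        current.append(para)
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def dedupe(chunks: list[str]) -> list[str]:
    """Drop chunks whose whitespace- and case-normalized text was already seen."""
    seen: set[str] = set()
    unique: list[str] = []
    for chunk in chunks:
        normalized = " ".join(chunk.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(chunk)
    return unique
```

Hash-based deduplication only catches exact matches after normalization. Near-duplicates, such as two revisions of the same policy, need a similarity-based approach.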

In parallel, you need security and governance to be native to the ingestion process. Sensitive data cannot be treated as an afterthought. PII redaction, classification tags, retention constraints, and access boundaries must be baked into how content enters the system. In regulated environments, “we’ll handle compliance later” is how projects get blocked or shut down.
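One way to picture governance at ingestion is a function that redacts, classifies, and attaches access boundaries before anything is embedded. The sketch below is illustrative only: the two regular expressions catch just simple email addresses and US-style SSN patterns, and the `IngestedDoc` type, the classification labels, and the group names are assumptions. Real deployments typically rely on dedicated PII detection rather than a pair of regexes.

```python
import re
from dataclasses import dataclass, field

# Illustrative patterns only; they are far from complete PII coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class IngestedDoc:
    text: str                      # redacted text, safe to embed
    classification: str            # hypothetical labels: internal / internal-pii / restricted
    allowed_groups: frozenset      # access boundary carried with the content
    redactions: dict = field(default_factory=dict)


def ingest(raw: str, allowed_groups: set) -> IngestedDoc:
    """Redact and classify before the text ever reaches the embedding step."""
    text, n_email = EMAIL.subn("[EMAIL]", raw)
    text, n_ssn = SSN.subn("[SSN]", text)
    if n_ssn:
        classification = "restricted"
    elif n_email:
        classification = "internal-pii"
    else:
        classification = "internal"
    return IngestedDoc(
        text=text,
        classification=classification,
        allowed_groups=frozenset(allowed_groups),
        redactions={"email": n_email, "ssn": n_ssn},
    )
```

Keeping the redaction counts on the record gives auditors something to inspect without exposing the original values.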

Once data is prepared, embeddings populate the retrieval layer. This is often described as a “vector database,” but in enterprise practice it is more like a retrieval subsystem with constraints. It must scale, support partitioning, enforce tenant isolation, and offer predictable query performance. It often must support hybrid retrieval, combining keyword search with semantic similarity, because many enterprise queries require both precision and semantic recall. Metadata filtering is not optional, because it is how you enforce access controls, time windows, domain constraints, and “only show documents from this team” logic. Auditability also matters, because enterprises eventually ask, “Which sources caused this answer?”
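The point about metadata filtering is easiest to see in code: access rules are applied before any scoring, so content a user may not see never becomes a candidate. This is a sketch under assumptions: the `Chunk` fields, the group-based permission model, and the keyword scorer (a stand-in for real hybrid scoring) are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    team: str
    allowed_groups: frozenset


def keyword_score(query: str, text: str) -> int:
    """Stand-in scorer: number of distinct query terms found in the text."""
    q_terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    return len(q_terms & set(re.findall(r"[a-z0-9]+", text.lower())))


def search(
    query: str,
    chunks: list[Chunk],
    user_groups: set,
    team: Optional[str] = None,
    k: int = 3,
) -> list[Chunk]:
    # Filter first: permissions and domain constraints are hard boundaries,
    # not ranking signals.
    visible = [
        c
        for c in chunks
        if c.allowed_groups & user_groups and (team is None or c.team == team)
    ]
    scored = sorted(
        ((keyword_score(query, c.text), c) for c in visible),
        key=lambda pair: pair[0],
        reverse=True,
    )
    return [c for score, c in scored[:k] if score > 0]
```

Because the returned chunks carry a `doc_id`, the same objects can later answer the auditor's question about which sources shaped an answer.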

This is also where retrieval quality becomes an engineering discipline. Reranking using cross-encoders, fusion strategies, and domain-specific tuning often makes the difference between a system that feels intelligent and one that feels random. The retrieval layer must be treated as a product in itself, not plumbing.
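As one example of a fusion strategy, reciprocal rank fusion (RRF) merges several ranked lists using only rank positions, so keyword and semantic scores never need to share a scale. The article does not name a specific method, so treat RRF here as one common option rather than the recommended one. The constant `k = 60` is a widely used default, and the document IDs are placeholders.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists of document IDs; each appearance adds 1 / (k + rank)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID for deterministic output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["a", "b", "c"]    # e.g. from a keyword index
semantic_hits = ["b", "c", "a"]   # e.g. from a vector index
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

A document that ranks reasonably well in both lists tends to beat one that tops a single list and sinks in the other, which is usually the behavior you want from hybrid retrieval.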

Above retrieval sits the inference layer. This is where LLM selection, prompt structures, output schemas, and caching strategies shape both cost and quality. Enterprises increasingly require strict formatting, deterministic sections, and explicit citations because “helpful prose” is not enough. KV caching, semantic caching, and reuse of stable prompt scaffolding can radically reduce spend. Tool-calling capabilities matter because a model that can invoke a function safely is fundamentally more operational than a model that can only generate text.
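Strict output formats only help if something checks them. The sketch below assumes the model has been instructed to return JSON with an `answer` string and a `citations` list of source IDs; that schema and the function name are assumptions for this example. The check rejects any answer that cites a source that was not in the retrieved context.

```python
import json


def validate_answer(raw: str, source_ids: set) -> dict:
    """Parse a model response and enforce the citation contract.

    Raises ValueError on malformed JSON or on any contract violation
    (json.JSONDecodeError is a subclass of ValueError).
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("response must be a JSON object")
    answer = data.get("answer")
    citations = data.get("citations")
    if not isinstance(answer, str) or not answer.strip():
        raise ValueError("missing or empty 'answer'")
    if not isinstance(citations, list) or not citations:
        raise ValueError("at least one citation is required")
    unknown = [c for c in citations if not isinstance(c, str) or c not in source_ids]
    if unknown:
        raise ValueError(f"citations not in retrieved context: {unknown}")
    return {"answer": answer.strip(), "citations": citations}
```

A failed validation is a useful signal in its own right: it can trigger a retry, a fallback response, or a logged quality incident instead of an unverified answer reaching the user.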

At this point, many teams stop. They have “RAG,” and it works on the happy path. But enterprise systems are not judged on the happy path. They are judged on what happens when the question is ambiguous, multi-intent, partially real-time, permission-bound, or action-oriented.

That is where orchestration becomes the real control surface.

An orchestration layer takes the user’s request and decides what kind of problem it is. Sometimes the correct answer is retrieval. Sometimes the correct answer is a direct call into a system of record. Sometimes the correct path is a multi-step workflow that collects evidence, validates state, and then generates a response. And sometimes the correct answer is refusing to answer until certain information or permissions exist.

This is the difference between a chatbot and an enterprise assistant.

In a mature architecture, orchestration routes operational queries into tools rather than forcing everything through retrieval. A question like “What’s the training status?” may need to call an internal job tracking service. A question like “Why are incidents rising this week?” may require pulling from ServiceNow, correlating with monitoring data, then summarizing. A request like “Book a flight and notify the team” is not a retrieval problem at all. It is planning, execution, and confirmation.
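A router does not have to be sophisticated to be useful. The sketch below uses hand-written keyword rules to decide between retrieval, a tool call, a multi-step workflow, and refusal. The patterns, the `Route` names, and the `execute_actions` permission are hypothetical; production routers often use a classifier or a model call, but the contract is the same: decide the path before spending tokens on it.

```python
import re
from enum import Enum


class Route(Enum):
    RETRIEVAL = "retrieval"   # answer from documents
    TOOL = "tool"             # query a live system of record
    WORKFLOW = "workflow"     # multi-step plan with execution
    REFUSE = "refuse"         # missing permission or information


# Hypothetical heuristics, chosen only to cover the examples in the text.
LIVE_STATE = re.compile(r"\b(right now|currently|status|is .* down)\b", re.IGNORECASE)
ACTION = re.compile(r"\b(book|notify|create|schedule|send)\b", re.IGNORECASE)


def route(query: str, user_permissions: set) -> Route:
    if ACTION.search(query):
        # Actions change state, so they are gated on an explicit permission.
        if "execute_actions" in user_permissions:
            return Route.WORKFLOW
        return Route.REFUSE
    if LIVE_STATE.search(query):
        return Route.TOOL
    return Route.RETRIEVAL
```

Checking the action pattern first is a deliberate choice in this sketch: a request that both asks about state and triggers an action should pass through the stricter gate.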

None of this is futuristic. It is simply the application of classic systems thinking to AI.

To make tool usage scalable, you also need tool abstraction. Enterprises are full of inconsistent interfaces. Some systems are modern REST APIs, some are gRPC, some are legacy services wrapped behind gateways, and some are internal libraries that were never designed for LLM usage. Tool abstraction normalizes these into stable contracts with authentication, rate limiting, error handling, and safe input/output schemas. It is the difference between “the model can call an API” and “the system can safely operate inside enterprise boundaries.”
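A stable tool contract can be as small as a declared parameter schema, a required scope, and a normalized result type. The sketch below assumes a simple scope-based permission model; `Tool`, `ToolResult`, `call_tool`, and the `jobs:read` scope are hypothetical names, and rate limiting is omitted to keep the example short.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Tool:
    name: str
    params: dict                 # parameter name -> expected Python type
    required_scope: str          # permission the caller must hold
    handler: Callable[..., Any]  # the wrapped REST/gRPC/legacy call


@dataclass
class ToolResult:
    ok: bool
    data: Any = None
    error: Optional[str] = None


def call_tool(tool: Tool, args: dict, scopes: set) -> ToolResult:
    """Validate permissions and inputs, then return a normalized result."""
    if tool.required_scope not in scopes:
        return ToolResult(False, error=f"missing scope: {tool.required_scope}")
    if set(args) != set(tool.params):
        return ToolResult(False, error=f"expected params: {sorted(tool.params)}")
    for key, expected in tool.params.items():
        if not isinstance(args[key], expected):
            return ToolResult(False, error=f"{key} must be {expected.__name__}")
    try:
        return ToolResult(True, data=tool.handler(**args))
    except Exception as exc:
        # Backend failures are reported as data, never as raw exceptions.
        return ToolResult(False, error=f"{tool.name} failed: {exc}")


# Hypothetical tool wrapping an internal job tracking service.
job_status = Tool(
    name="job_status",
    params={"job_id": str},
    required_scope="jobs:read",
    handler=lambda job_id: {"job_id": job_id, "state": "running"},
)
```

Because every tool returns the same `ToolResult` shape, the orchestration layer can handle errors uniformly regardless of what sits behind the handler.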

Finally, observability ties everything together. In production, you cannot manage what you cannot see. You need metrics for latency, token usage, retrieval quality, cache hit rates, tool invocation errors, and failure modes. You need traces that show how a request flowed through planning, retrieval, tool calls, reranking, and generation. You need evaluation loops, feedback workflows, regression tests, and A/B experiments so improvements do not introduce new failures.
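A per-request trace is the smallest useful unit of that visibility. The sketch below records one span per stage with its duration, status, and any attributes the stage wants to attach. The `Trace` class and the span names are assumptions for illustration; in practice a standard tracing library would replace this, but the shape of the data is similar.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Trace:
    request_id: str
    spans: list = field(default_factory=list)

    @contextmanager
    def span(self, name: str, **attrs):
        """Record duration, status, and attributes for one pipeline stage."""
        record = {"name": name, "status": "ok", **attrs}
        start = time.perf_counter()
        try:
            yield record  # the stage can attach its own metrics to the record
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise  # tracing observes failures; it does not swallow them
        finally:
            record["duration_ms"] = (time.perf_counter() - start) * 1000
            self.spans.append(record)

    def failed_stages(self) -> list:
        return [s["name"] for s in self.spans if s["status"] == "error"]
```

Usage follows the request flow: wrap retrieval, reranking, tool calls, and generation in `with trace.span(...)` blocks, then ship `trace.spans` to whatever metrics or logging backend you already run.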

Enterprises do not trust black boxes, and they should not. If an AI system is going to sit near critical decisions, it must be measurable, debuggable, and governable.

RAG Is Not the Star, It Is the Specialist

In a production architecture, RAG has a clear role.

It is excellent at static knowledge recall, especially when answers must be grounded in internal text and supported by citations. It performs well when auditability is required, when the domain is document-heavy, and when retrieval is scoped tightly enough to keep latency predictable.

But RAG has limits. It is not a real-time state system. It is not a calculation engine. It is not a transaction executor. It does not inherently understand tool outputs or operational truth. It cannot replace systems of record. It should not be forced into roles it was never designed to fill.

That is why the most effective enterprise systems are hybrids.

They combine retrieval with tool invocation, routing logic, caching, validation, and orchestration. They treat RAG as one capability among several, rather than the entire architecture. They reduce hallucinations not by hoping the model “behaves,” but by controlling what the model is allowed to do, what evidence it sees, and how results are verified.

When built this way, enterprises stop buying “chatbots” and start building operational assistants.

What Leaders Should Ask Before Funding a “RAG Platform”

If you are evaluating vendors or internal prototypes, the right questions are not “Which vector database do you use?” or “Which LLM do you support?”

The right questions are architectural.

How do you handle incremental updates and freshness guarantees? How do you chunk and validate content quality? How do you enforce access control end-to-end, including retrieval filtering? How do you detect and prevent sensitive data leakage? How do you route operational queries into tools rather than documents? How do you observe failures, measure quality, and run evaluations over time?
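The first of those questions has a concrete shape. One simple way to handle incremental updates is to store a content hash per document and re-embed only what changed. The sketch below assumes the index keeps a mapping from document ID to hash; `plan_sync` and the document IDs are hypothetical, and a real freshness guarantee would also need scheduling and monitoring around this step.

```python
import hashlib


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(indexed: dict, source: dict) -> tuple:
    """Compare index state with the current source of truth.

    indexed: doc_id -> content hash stored at last ingestion
    source:  doc_id -> current document text
    Returns (doc_ids to re-embed and upsert, doc_ids to delete).
    """
    upsert = sorted(
        doc_id
        for doc_id, text in source.items()
        if indexed.get(doc_id) != content_hash(text)
    )
    delete = sorted(doc_id for doc_id in indexed if doc_id not in source)
    return upsert, delete
```

Deletions matter as much as updates: a policy that was withdrawn at the source but still lives in the index is a stale answer waiting to happen.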

If those answers are vague, you are not buying a production system. You are buying a demo that may never survive contact with real enterprise constraints.

The Builder’s Question: Which Layer Is Your Bottleneck?

Most engineering teams feel the pain in different places.

Some struggle with ingestion reliability and content normalization. Others struggle with retrieval quality and evaluation. Many struggle with tool abstraction and safe execution. In regulated environments, governance and auditability often dominate the roadmap. At scale, cost control and latency become just as important as answer quality.

The teams that succeed are the ones who treat this like systems engineering, not prompt art. They build it the way durable enterprise systems have always been built: layered, observable, secure by design, and resilient under real-world conditions.

RAG is part of that story. It is not the whole story.

If you’re building enterprise AI today, which layer is the hardest for you to scale: data ingestion, retrieval quality, orchestration, tool governance, or observability?


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.