When Optimization Works: The Role of Convexity in Business Decisions

Last Updated on February 9, 2026 by Editorial Team

Author(s): Saif Ali Kheraj

Originally published on Towards AI.

Every business decision operates under constraints: budgets, capacity, regulations, and trade-offs. The structure of those constraints determines whether a decision has a single clear optimal choice or several competing alternatives. Convex problems lead to a decisive answer; non-convex problems create ambiguity, negotiation, and judgment calls.

Three Applied Meanings

1. Convex Sets: Where You Can Operate

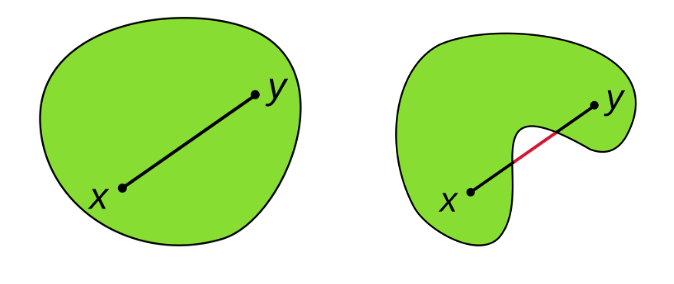

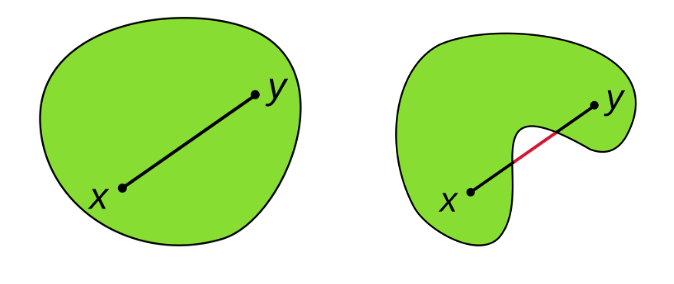

The Math: A set F is convex if for any two points X₁ and X₂ in F, and for any λ ∈ [0,1]: λX₁ + (1-λ)X₂ ∈ F

This means: Pick any two feasible points and draw a straight line between them. Every point on that line must also be feasible. In other words, if X₁ and X₂ are valid decisions, then any weighted mix of them is also valid.

Here, λ is a weight (mixing factor) that controls how much you take from X₁ versus X₂ when forming a new allocation.

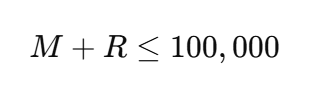

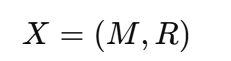

Business Reality: You have $100K to allocate between Marketing (M) and R&D (R), with the constraint M + R ≤ $100K (and M, R ≥ 0).

Spending $70K/$30K works. Spending $40K/$60K works. Spending $55K/$45K, the 50/50 blend of those two, also works. Any non-negative combination totaling at most $100K is feasible. The set of all such allocations forms a convex set, allowing you to move smoothly between options.
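As a minimal sketch of the budget example, the check below blends the $70K/$30K and $40K/$60K allocations for several values of λ and confirms that every blend stays feasible. The function names `is_feasible` and `mix` are illustrative, and `Fraction` is used only so the arithmetic is exact.

```python
from fractions import Fraction

def is_feasible(marketing, rnd, budget=100_000):
    """Feasible if both amounts are non-negative and total spend fits the budget."""
    return marketing >= 0 and rnd >= 0 and marketing + rnd <= budget

def mix(x1, x2, lam):
    """Convex combination lam*x1 + (1 - lam)*x2 of two allocations."""
    return tuple(lam * a + (1 - lam) * b for a, b in zip(x1, x2))

x1 = (70_000, 30_000)  # Marketing-heavy allocation
x2 = (40_000, 60_000)  # R&D-heavy allocation

# Try lam = 0, 0.1, ..., 1.0 using exact fractions.
blends = [mix(x1, x2, Fraction(k, 10)) for k in range(11)]
all_feasible = all(is_feasible(m, r) for m, r in blends)

midpoint = mix(x1, x2, Fraction(1, 2))
print(all_feasible)               # True
print(tuple(map(int, midpoint)))  # (55000, 45000)
```

The midpoint reproduces the $55K/$45K allocation from the text, which is simply the λ = 0.5 blend of the other two.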

When It Breaks: Suppose a regulation requires choosing either 100% local suppliers or 100% certified suppliers, with no partial mix allowed. The feasible region collapses into two disconnected points, making it non-convex. You can no longer optimize gradually; you must choose one option or the other.

2. Convex Functions: One Bottom, No Surprises

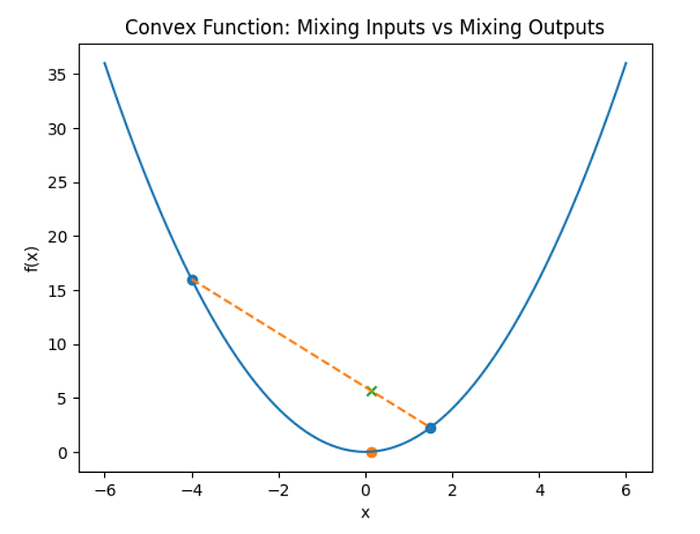

The Math: A function f is convex over set F if for any X₁, X₂ ∈ F and λ ∈ [0,1]:

f(λX₁ + (1-λ)X₂) ≤ λf(X₁) + (1-λ)f(X₂)

The function value at any weighted average of two points is less than or equal to the weighted average of the function values. Graphically, the line segment connecting two points on the curve lies above the curve. The function curves upward.

In the figure, the curve is the function f.

- The left point (high up) is f(x1); notice it is much higher.

- The right point (much lower) is f(x2).

- The dashed line connects these two function values.

- The × mark on the dashed line is the weighted average of the two heights, λf(x1) + (1-λ)f(x2).

- The dot on the curve below it is the value of the function after mixing the inputs, f(λx1 + (1-λ)x2).

In a nutshell, first we measure the heights at x1 and x2, and then take their weighted average using λ. This gives the higher point on the dashed line. Next, we do the opposite: we combine the inputs x1 and x2 using the same λ, go up to the curve, and read the height. This gives the lower dot on the curve.

Why the inequality is true (visually)

Because the function curves upward, the middle of the curve dips below the straight line connecting the two endpoints. So the height you get by mixing inputs first is lower than the height you get by mixing outputs later. That is exactly what the inequality says.
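To make the picture concrete, here is a small numerical check of the inequality. The function f(x) = (x - 3)² + 1 and the points x1 = 0, x2 = 5 are illustrative choices, not values from the article; they were picked so that the left point is high and the right point is lower, as in the figure.

```python
def f(x):
    """An illustrative convex (bowl-shaped) function."""
    return (x - 3) ** 2 + 1

def chord_gap(func, x1, x2, lam):
    """Height of the x mark on the dashed line minus height of the dot on the curve."""
    mixed_outputs = lam * func(x1) + (1 - lam) * func(x2)  # x mark on the dashed line
    mixed_inputs = func(lam * x1 + (1 - lam) * x2)         # dot on the curve
    return mixed_outputs - mixed_inputs

x1, x2 = 0.0, 5.0  # f(x1) = 10 (high), f(x2) = 5 (lower)

gap_at_half = chord_gap(f, x1, x2, 0.5)
gaps = [chord_gap(f, x1, x2, k / 10) for k in range(11)]

print(gap_at_half)                      # 6.25
print(all(g >= -1e-12 for g in gaps))   # True: the chord never dips below the curve
```

The gap is zero at λ = 0 and λ = 1, where the chord touches the curve, and positive in between.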

Applied Theorem

In portfolio theory, risk is measured by variance:

Risk(w) = wᵀΣw

where:

- w = portfolio weights (how much money you put in each asset)

- Σ = covariance matrix (how asset returns move together)

Markowitz's idea is to choose portfolio weights that minimize risk, where risk is measured by variance. This variance function is convex because the covariance matrix Σ is positive semidefinite: wᵀΣw can never be negative, and the surface curves upward like a bowl. As a result, blending two portfolios produces a risk that is no worse than the weighted average of their individual risks, which is the mathematical reason diversification works. Because the risk surface is convex, any locally optimal portfolio is also globally optimal, provided the constraints on the weights also form a convex set. This convexity is what makes Markowitz portfolio optimization reliable and scalable in practice.
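For readers who want the algebra behind that claim, the following short derivation is a sketch that assumes only that Σ is a covariance matrix (symmetric and positive semidefinite). Expanding the variance of a blend of two weight vectors w₁ and w₂ gives an exact expression for the gap:

```latex
\begin{aligned}
\lambda\, w_1^{\top}\Sigma w_1 + (1-\lambda)\, w_2^{\top}\Sigma w_2
  - \bigl(\lambda w_1 + (1-\lambda) w_2\bigr)^{\top} \Sigma \bigl(\lambda w_1 + (1-\lambda) w_2\bigr)
  &= \lambda(1-\lambda)\,(w_1 - w_2)^{\top} \Sigma\, (w_1 - w_2) \\
  &\ge 0 \qquad \text{for } \lambda \in [0,1].
\end{aligned}
```

The right-hand side is λ(1-λ) times the variance of the difference portfolio w₁ - w₂, which cannot be negative. That is the convexity inequality for the risk function, and the size of the term is the diversification benefit.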

What are the two points here?

Think of:

- w1 = Portfolio A (e.g., aggressive, tech-heavy)

- w2 = Portfolio B (e.g., conservative, diversified)

Each portfolio has a risk value:

- Risk(w1)

- Risk(w2)

These are the two dots on the curve.

Mixing inputs in finance means combining two different portfolios by splitting your money between them, for example, investing some portion in a risky, tech-heavy portfolio and the rest in a safer, diversified one. The important insight is that the combined portfolio does not simply inherit the average risk of the two. Because the investments do not move exactly together, their ups and downs partially cancel out, so the total fluctuation becomes smoother. In other words, the variance of the blended portfolio is never higher than the weighted average of the two variances, and it is usually lower. Therefore, when an optimization algorithm searches for the lowest-risk portfolio and finds one, that point is truly the best possible allocation; there are no hidden better portfolios elsewhere and no misleading local optima.
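A rough numerical illustration of the two portfolios follows. The covariance matrix and the weights are made-up numbers for three hypothetical assets, chosen only so that the matrix is a valid covariance matrix; they are not market data.

```python
def portfolio_variance(weights, cov):
    """Risk(w) = w^T * cov * w, written out with plain loops."""
    n = len(weights)
    return sum(weights[i] * cov[i][j] * weights[j]
               for i in range(n) for j in range(n))

def blend(w1, w2, lam):
    """Put a fraction lam of the money in portfolio w1 and the rest in w2."""
    return [lam * a + (1 - lam) * b for a, b in zip(w1, w2)]

# Hypothetical covariance matrix for three assets (symmetric, diagonally dominant).
COV = [
    [0.090, 0.002, 0.012],
    [0.002, 0.010, 0.003],
    [0.012, 0.003, 0.040],
]

w1 = [0.8, 0.1, 0.1]  # Portfolio A: concentrated in the volatile first asset
w2 = [0.1, 0.6, 0.3]  # Portfolio B: spread across the calmer assets

lam = 0.5
risk_blend = portfolio_variance(blend(w1, w2, lam), COV)
risk_average = (lam * portfolio_variance(w1, COV)
                + (1 - lam) * portfolio_variance(w2, COV))

print(f"risk of blend:         {risk_blend:.5f}")
print(f"average of the risks:  {risk_average:.5f}")
print(risk_blend <= risk_average)  # True
```

The blend's variance lands below the average of the two individual variances, which is the "dot below the × mark" picture applied to portfolios.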

3. Concave Functions: Diminishing Returns

A concave function is the opposite of a convex one. It curves downward like a hill, so when you draw a straight line between two points on the curve, that line lies below the curve.

Business Reality: Advertising spend typically shows diminishing returns. The first $1M in ads drives strong growth, the next $1M adds less, and by the tenth $1M the impact is minimal. Each additional dollar still helps, but by a smaller amount than before. This downward-curving response makes revenue as a function of ad spend concave.

When you maximize a concave function, the problem is clean and predictable. There is only one peak. Once you find it, you know there is no higher value hidden elsewhere.
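A small sketch of this behavior, under stated assumptions: revenue is modeled as 6·√spend (in $M), a hypothetical curve chosen only because it shows diminishing returns, and the quantity maximized is revenue minus spend. Ternary search is used here as one simple method that relies on there being a single peak.

```python
import math

def revenue(spend):
    """Hypothetical concave response curve: revenue in $M for ad spend in $M."""
    return 6.0 * math.sqrt(spend)

def profit(spend):
    """Revenue minus the cost of the ads; still concave."""
    return revenue(spend) - spend

def ternary_search_max(func, lo, hi, tol=1e-6):
    """Shrink [lo, hi] around the peak; valid because func has a single peak."""
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if func(m1) < func(m2):
            lo = m1  # the peak cannot be left of m1
        else:
            hi = m2  # the peak cannot be right of m2
    return (lo + hi) / 2

# Extra revenue from each additional $1M shrinks as spend grows.
marginal_gains = [revenue(k + 1) - revenue(k) for k in range(10)]
best_spend = ternary_search_max(profit, 0.0, 20.0)

print([round(g, 2) for g in marginal_gains])
print(round(best_spend, 3))  # about 9.0 for this particular curve
```

For this curve the peak can be checked by hand: the slope 3/√spend - 1 is zero at spend = 9.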

4. The Most Important Result: Why Convexity Makes Optimization Reliable

Now we reach the real power of convexity.

So far we defined convex sets and convex/concave functions. Here is why they matter.

Proposition 1: Global Optimality Guarantee

If a function is convex and the feasible region is also convex, then every local minimum is automatically a global minimum.

Two conditions are required:

- The objective function must be convex (bowl-shaped)

- The feasible region must be convex (no gaps or disconnected parts)

When both conditions hold, optimization becomes predictable. The feasible region being convex corresponds to Part 1 (Convex Sets), and the objective function being convex and bowl-shaped corresponds to Part 2 (Convex Functions). When these two properties are present together, a local minimum is guaranteed to be the global minimum.
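One way to see the guarantee in action is to run a simple local search from several starting points. The sketch below uses projected gradient descent on an interval; the two objective functions are illustrative choices, with the non-convex one included only as a contrast.

```python
def projected_gradient_descent(grad, start, lo, hi, lr=0.01, steps=2000):
    """Follow the downhill slope, clipping back into the feasible interval [lo, hi]."""
    x = start
    for _ in range(steps):
        x = x - lr * grad(x)
        x = min(max(x, lo), hi)  # projection onto the convex feasible set
    return x

# Convex objective f(x) = (x - 1)^2, with gradient 2(x - 1).
def convex_grad(x):
    return 2 * (x - 1)

# Non-convex contrast g(x) = x^4 - 4x^2 + x, with gradient 4x^3 - 8x + 1.
def nonconvex_grad(x):
    return 4 * x ** 3 - 8 * x + 1

starts = [-3.0, 0.0, 3.0]
convex_ends = [projected_gradient_descent(convex_grad, s, -3.0, 3.0) for s in starts]
nonconvex_ends = [projected_gradient_descent(nonconvex_grad, s, -3.0, 3.0)
                  for s in (-2.0, 2.0)]

print([round(x, 4) for x in convex_ends])     # every start ends at the same point
print([round(x, 4) for x in nonconvex_ends])  # different starts, different valleys
```

On the convex objective every start reaches the same minimizer, so the first local minimum found can be trusted. On the non-convex one the answer depends on where the search began.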

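This connects to linear programming, where the objective is linear (and therefore convex) and the feasible region is a convex polyhedron:

- Linear programming solutions sit at corners

- Many OR problems only check extreme points

- Algorithms like simplex work

Proposition 1 guarantees that a locally optimal corner is also globally optimal. The fact that an optimum sits at a corner in the first place comes from Proposition 2, because a linear objective is also concave. Together, they mean you don't need to search everywhere, only the corners.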

Proposition 2: Where the Optimum Appears

Proposition 1 told us when a solution is trustworthy: if we minimize a convex function over a convex region, any local minimum is also the global minimum.

Now consider the opposite shape: a concave function, which looks like a hill. Suppose we want to make its value as small as possible while staying inside the feasible region.

Pick any interior point and any straight line through it. Because the function is concave, its value cannot rise in both directions along that line, so moving one way never increases the objective. Following that direction until it reaches the boundary gives a point that is at least as good, so nothing is lost by leaving the interior. Repeating the same argument along the boundary ends at a corner.

This leads to the second result:

When a concave function is minimized over a bounded convex feasible region, at least one optimal solution lies at an extreme point (a boundary corner).
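The result can be checked on a toy problem. In the sketch below, the triangular region (x ≥ 0, y ≥ 0, x + y ≤ 4) and the concave function are illustrative assumptions; the code compares the best corner against a fine grid of feasible points.

```python
def f(x, y):
    """An illustrative concave (hill-shaped) function with its peak at (1, 2)."""
    return -((x - 1) ** 2) - (y - 2) ** 2

def in_region(x, y):
    """Convex feasible region: the triangle x >= 0, y >= 0, x + y <= 4."""
    return x >= 0 and y >= 0 and x + y <= 4

VERTICES = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0)]  # the three corners

# Feasible grid points with spacing 0.25 (includes interior, edges, and corners).
grid = [(i * 0.25, j * 0.25)
        for i in range(17) for j in range(17)
        if in_region(i * 0.25, j * 0.25)]

best_vertex = min(VERTICES, key=lambda p: f(*p))
best_grid_point = min(grid, key=lambda p: f(*p))

print(best_vertex, f(*best_vertex))  # (4.0, 0.0) -13.0
print(best_grid_point)               # (4.0, 0.0)
```

Searching 153 grid points finds nothing better than checking the 3 corners, which is the practical payoff of the proposition.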

